Mary L. Gray

AI Red-Teaming is a Sociotechnical System. Now What?

Dec 12, 2024

Abstract: As generative AI technologies find more and more real-world applications, the importance of testing their performance and safety seems paramount. "Red-teaming" has quickly become the primary approach to testing AI models, prioritized by AI companies and enshrined in AI policy and regulation. Members of red teams act as adversaries, probing AI systems to test their safety mechanisms and uncover vulnerabilities. Yet we know too little about this work and its implications. This essay calls for collaboration between computer scientists and social scientists to study the sociotechnical systems surrounding AI technologies, including the work of red-teaming, to avoid repeating the mistakes of the recent past. We highlight the importance of understanding the values and assumptions behind red-teaming, the labor involved, and the psychological impacts on red-teamers.

The Human Factor in AI Red Teaming: Perspectives from Social and Collaborative Computing

Jul 10, 2024

Abstract: Rapid progress in general-purpose AI has sparked significant interest in "red teaming," a practice of adversarial testing that originated in military and cybersecurity applications. AI red teaming raises many questions about the human factor, such as how red teamers are selected, what biases and blind spots shape how tests are conducted, and what psychological effects harmful content has on red teamers. A growing body of HCI and CSCW literature examines related practices, including data labeling, content moderation, and algorithmic auditing. However, few studies, if any, have investigated red teaming itself. This workshop seeks to consider the conceptual and empirical challenges associated with this practice, which is often rendered opaque by non-disclosure agreements. Future studies may explore topics ranging from fairness to mental health and other areas of potential harm. We aim to facilitate a community of researchers and practitioners who can begin to meet these challenges with creativity, innovation, and thoughtful reflection.

Participation in the age of foundation models

May 29, 2024

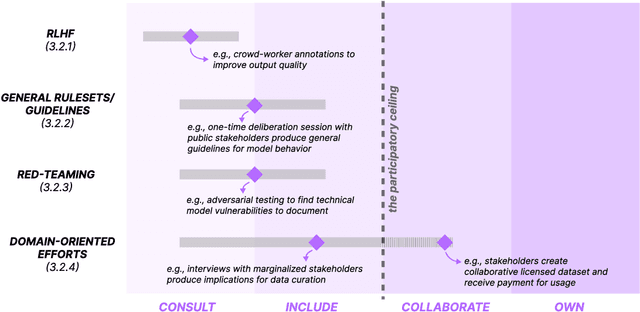

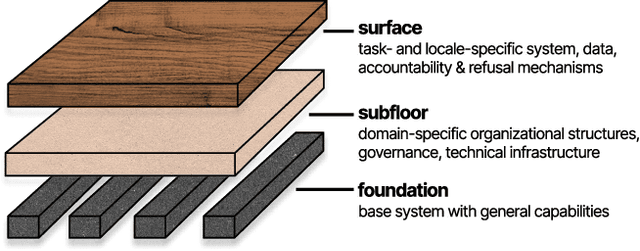

Abstract: Growing interest and investment in the capabilities of foundation models have positioned such systems to impact a wide array of public services. Alongside these opportunities is the risk that these systems reify existing power imbalances and cause disproportionate harm to marginalized communities. Participatory approaches hold promise to instead lend agency and decision-making power to marginalized stakeholders. But existing approaches in participatory AI/ML are typically deeply grounded in context. How do we apply these approaches to foundation models, which are, by design, disconnected from context? Our paper interrogates this question. First, we examine existing attempts at incorporating participation into foundation models. We highlight the tension between participation and scale, demonstrating that it is intractable for impacted communities to meaningfully shape a foundation model that is intended to be universally applicable. In response, we develop a blueprint for participatory foundation models that identifies more local, application-oriented opportunities for meaningful participation. In addition to the "foundation" layer, our framework proposes the "subfloor" layer, in which stakeholders develop shared technical infrastructure, norms and governance for a grounded domain, and the "surface" layer, in which affected communities shape the use of a foundation model for a specific downstream task. The intermediate "subfloor" layer scopes the range of potential harms to consider, and affords communities more concrete avenues for deliberation and intervention. At the same time, it avoids duplicative effort by scaling input across relevant use cases. Through three case studies in clinical care, financial services, and journalism, we illustrate how this multi-layer model can create more meaningful opportunities for participation than solely intervening at the foundation layer.

* 13 pages, 2 figures. Appeared at FAccT '24

Can Workers Meaningfully Consent to Workplace Wellbeing Technologies?

Mar 13, 2023

Abstract: Sensing technologies deployed in the workplace can collect detailed data about individual activities and group interactions that are otherwise difficult to capture. A hopeful application of these technologies is that they can help businesses and workers optimize productivity and wellbeing. However, given the inherent and structural power dynamics in the workplace, the prevalent approach of accepting tacit compliance to monitor work activities, rather than seeking workers' meaningful consent, raises privacy and ethical concerns. This paper unpacks a range of challenges that workers face when consenting to workplace wellbeing technologies. Using a hypothetical case to prompt reflection among six multi-stakeholder focus groups involving 15 participants, we explored participants' expectations and capacity to consent to workplace sensing technologies. We sketched possible interventions that could better support more meaningful consent to workplace wellbeing technologies by drawing on critical computing and feminist scholarship, which reframes consent from a purely individual choice to a structural condition experienced at the individual level that needs to be freely given, reversible, informed, enthusiastic, and specific (FRIES). The focus groups revealed that workers are vulnerable to meaningless consent, a dynamic that undoes the value of data gathered in the name of "wellbeing" and erodes autonomy in the workplace. To meaningfully consent, participants wanted changes to how the technology works and is being used, as well as to the policies and practices surrounding the technology. Our mapping of what prevents workers from meaningfully consenting to workplace wellbeing technologies (challenges) and what they require to do so (interventions) underscores that the lack of meaningful consent is a structural problem requiring socio-technical solutions.
