Markus Huber

Reinforcement learning of minimalist grammars

Apr 30, 2020

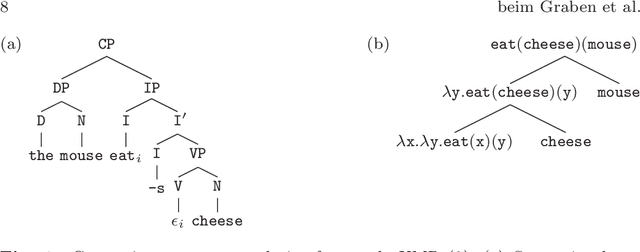

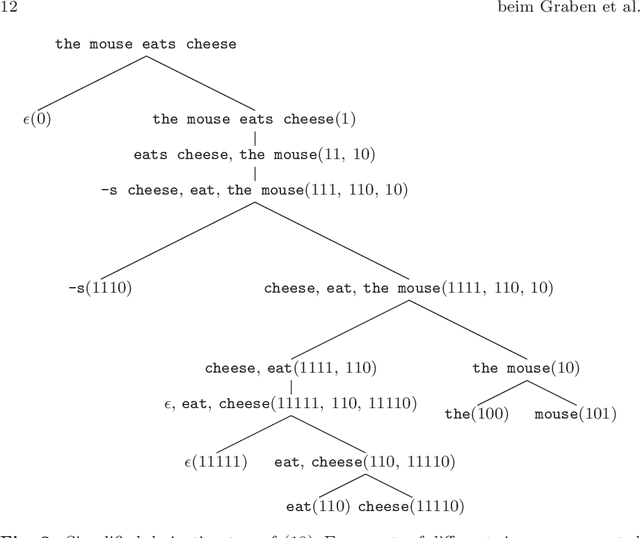

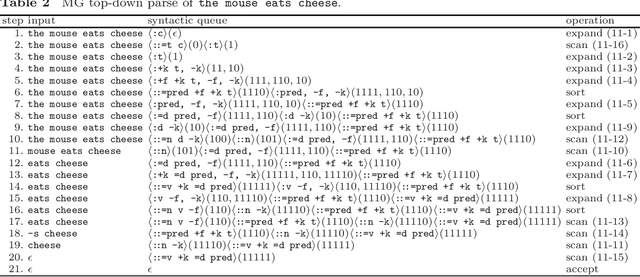

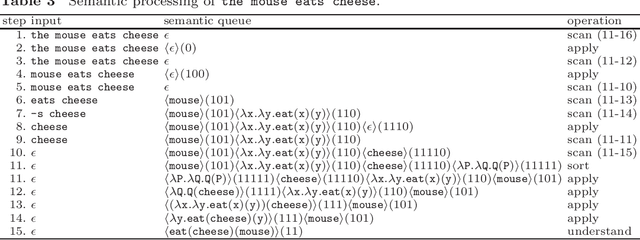

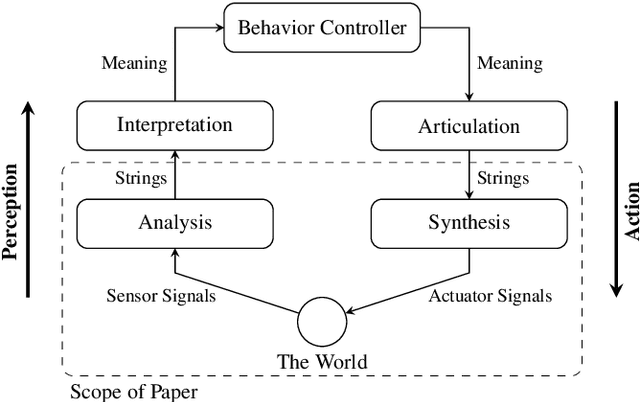

Abstract: Speech-controlled user interfaces facilitate the operation of devices and household functions for laypeople. State-of-the-art language technology scans the acoustically analyzed speech signal for relevant keywords that are subsequently inserted into semantic slots to interpret the user's intent. In order to develop proper cognitive information and communication technologies, simple slot-filling should be replaced by utterance meaning transducers (UMT) that are based on semantic parsers and a mental lexicon, comprising syntactic, phonetic and semantic features of the language under consideration. This lexicon must be acquired by a cognitive agent during interaction with its users. We outline a reinforcement learning algorithm for the acquisition of syntax and semantics of English utterances, based on minimalist grammar (MG), a recent computational implementation of generative linguistics. English declarative sentences are presented to the agent by a teacher in the form of utterance meaning pairs (UMP) where the meanings are encoded as formulas of predicate logic. Since MG codifies universal linguistic competence through inference rules, thereby separating innate linguistic knowledge from the contingently acquired lexicon, our approach unifies generative grammar and reinforcement learning, hence potentially resolving the still pending Chomsky-Skinner controversy.

Vector symbolic architectures for context-free grammars

Mar 11, 2020

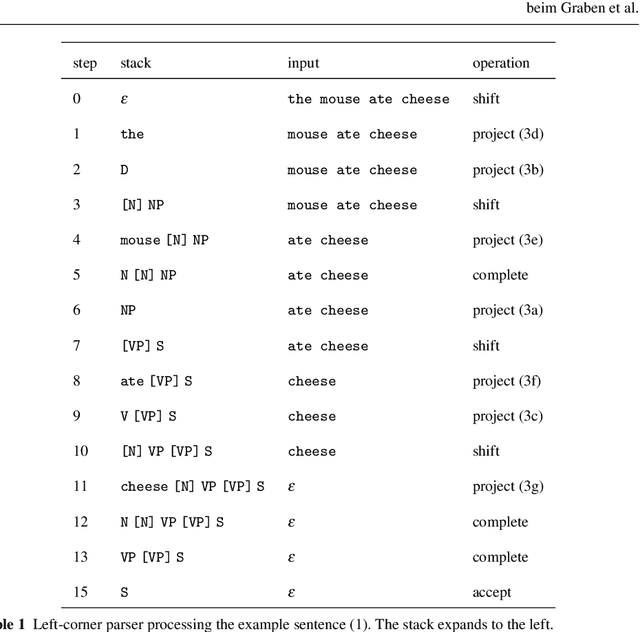

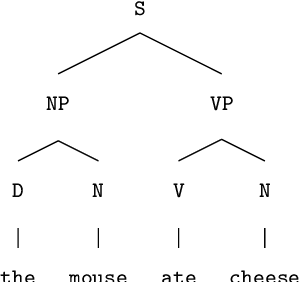

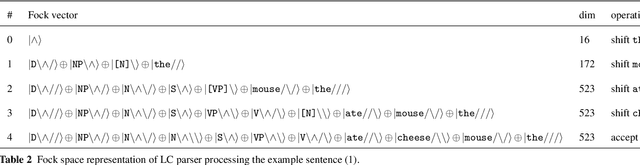

Abstract: Background / introduction. Vector symbolic architectures (VSA) are a viable approach for the hyperdimensional representation of symbolic data, such as documents, syntactic structures, or semantic frames. Methods. We present a rigorous mathematical framework for the representation of phrase structure trees and parse trees of context-free grammars (CFG) in Fock space, i.e. the infinite-dimensional Hilbert space used in quantum field theory. We define a novel normal form for CFG by means of term algebras. Using a recently developed software toolbox, called FockBox, we construct Fock space representations for the trees built up by a CFG left-corner (LC) parser. Results. We prove a universal representation theorem for CFG term algebras in Fock space and illustrate our findings through a low-dimensional principal component projection of the LC parser states. Conclusions. Our approach could leverage the development of VSA for explainable artificial intelligence (XAI) by means of hyperdimensional deep neural computation. It could be of significance for the improvement of cognitive user interfaces and other applications of VSA in machine learning.

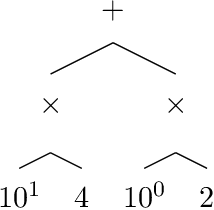

Reinforcement Learning of Minimalist Numeral Grammars

Jun 11, 2019

Abstract: Speech-controlled user interfaces facilitate the operation of devices and household functions for laypeople. State-of-the-art language technology scans the acoustically analyzed speech signal for relevant keywords that are subsequently inserted into semantic slots to interpret the user's intent. In order to develop proper cognitive information and communication technologies, simple slot-filling should be replaced by utterance meaning transducers (UMT) that are based on semantic parsers and a *mental lexicon*, comprising syntactic, phonetic and semantic features of the language under consideration. This lexicon must be acquired by a cognitive agent during interaction with its users. We outline a reinforcement learning algorithm for the acquisition of the syntactic morphology and arithmetic semantics of English numerals, based on minimalist grammar (MG), a recent computational implementation of generative linguistics. Number words are presented to the agent by a teacher in the form of utterance meaning pairs (UMP) where the meanings are encoded as arithmetic terms from a suitable term algebra. Since MG encodes universal linguistic competence through inference rules, thereby separating innate linguistic knowledge from the contingently acquired lexicon, our approach unifies generative grammar and reinforcement learning, hence potentially resolving the still pending Chomsky-Skinner controversy.

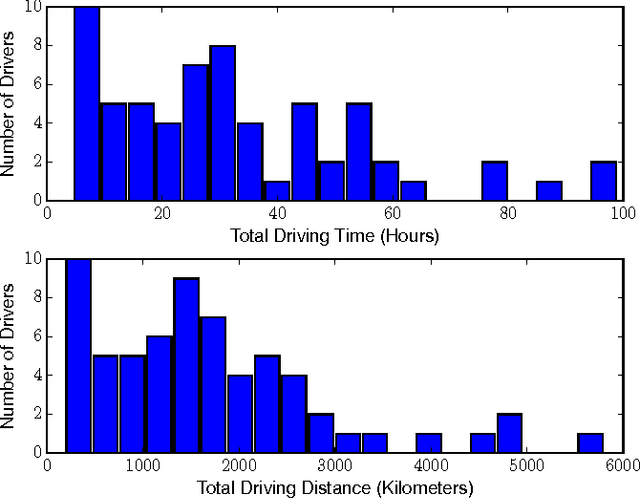

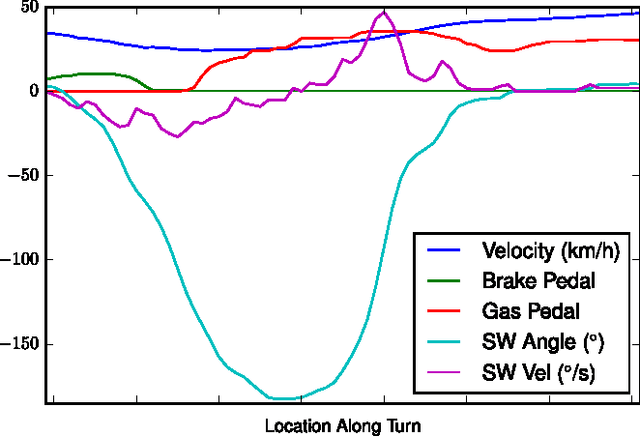

Driver Identification Using Automobile Sensor Data from a Single Turn

Jun 09, 2017

Abstract: As automotive electronics continue to advance, cars are becoming more and more reliant on sensors to perform everyday driving operations. These sensors are omnipresent and help the car navigate, reduce accidents, and provide comfortable rides. However, they can also be used to learn about the drivers themselves. In this paper, we propose a method to predict, from sensor data collected at a single turn, the identity of a driver out of a given set of individuals. We cast the problem in terms of time series classification, where our dataset contains sensor readings at one turn, repeated several times by multiple drivers. We build a classifier to find unique patterns in each individual's driving style, which are visible in the data even on such a short road segment. To test our approach, we analyze a new dataset collected by AUDI AG and Audi Electronics Venture, where a fleet of test vehicles was equipped with automotive data loggers storing all sensor readings on real roads. We show that turns are particularly well-suited for detecting variations across drivers, especially when compared to straightaways. We then focus on the 12 most frequently made turns in the dataset, which include rural, urban, highway on-ramps, and more, obtaining accurate identification results and learning useful insights about driver behavior in a variety of settings.
