Marco Martínez

MusicMagus: Zero-Shot Text-to-Music Editing via Diffusion Models

Feb 09, 2024

Abstract: Recent advances in text-to-music generation models have opened new avenues for musical creativity. However, music generation usually involves iterative refinement, and how to edit the generated music remains a significant challenge. This paper introduces a novel approach to editing music generated by such models. It enables the modification of specific attributes, such as genre, mood, and instrument, while leaving other aspects unchanged. Our method transforms text editing into latent space manipulation and adds an extra constraint to enforce consistency. It integrates seamlessly with existing pretrained text-to-music diffusion models without requiring additional training. Experimental results demonstrate superior performance over both zero-shot and certain supervised baselines in style and timbre transfer evaluations. Additionally, we showcase the practical applicability of our approach in real-world music editing scenarios.
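
The abstract describes the core idea only at a high level: a text edit is expressed as a shift in a latent (text-embedding) space. The following is a rough, hedged illustration. It assumes the shift is the mean difference between embeddings of sentences that mention the source attribute (e.g. "piano") and sentences that mention the target attribute (e.g. "guitar"). The function names, the toy embeddings, and the mean-difference rule are all assumptions made for this sketch, not the paper's confirmed procedure. The consistency constraint mentioned in the abstract is not modeled here.

```python
import numpy as np

def edit_direction(source_embs: np.ndarray, target_embs: np.ndarray) -> np.ndarray:
    """Hypothetical edit direction: mean target embedding minus mean source embedding.

    source_embs, target_embs: arrays of shape (n_sentences, dim), standing in for
    text-encoder embeddings of captions with the source / target attribute.
    """
    return target_embs.mean(axis=0) - source_embs.mean(axis=0)

def apply_edit(prompt_emb: np.ndarray, direction: np.ndarray, strength: float = 1.0) -> np.ndarray:
    """Shift a prompt embedding along the edit direction; strength=0 leaves it unchanged."""
    return prompt_emb + strength * direction

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    dim = 8
    # Toy stand-ins for encoder outputs: the "target" sentences are the
    # "source" sentences shifted by a known offset.
    offset = np.ones(dim)
    source = rng.normal(size=(16, dim))
    target = source + offset
    d = edit_direction(source, target)
    print(np.allclose(d, offset))  # True: the recovered direction equals the offset
    edited = apply_edit(rng.normal(size=dim), d, strength=0.5)
```

In a real system, the edited embedding would condition the pretrained diffusion model in place of the original prompt embedding. The `strength` parameter is an illustrative knob for trading edit intensity against faithfulness to the original.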

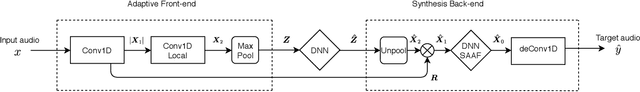

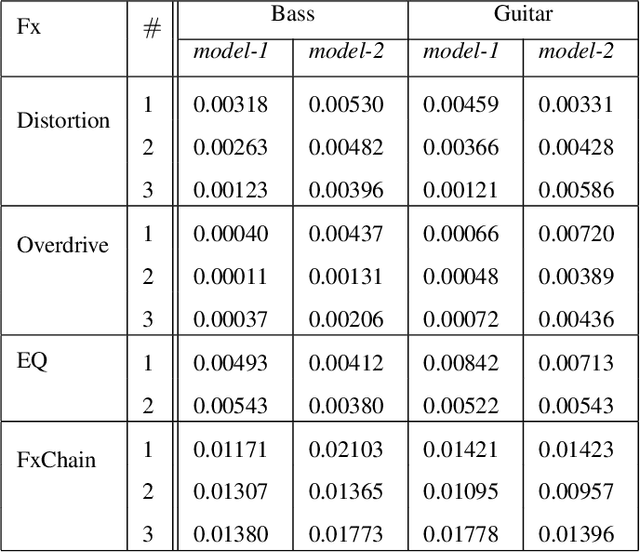

Modeling of nonlinear audio effects with end-to-end deep neural networks

Oct 15, 2018

Abstract: In the context of music production, distortion effects are used mainly for aesthetic reasons and are usually applied to electric musical instruments. Most existing methods for nonlinear modeling are either simplified or optimized for a very specific circuit. In this work, we investigate deep learning architectures for audio processing, aiming to find a general-purpose end-to-end deep neural network for modeling nonlinear audio effects. We show the network modeling a variety of nonlinearities and discuss its generalization capabilities across different instruments.
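
The following is a hedged sketch of the modeling task. It does not reproduce the paper's architecture, which this abstract does not specify. It fits a small one-hidden-layer network to paired clean/distorted samples. The effect to emulate is assumed to be a memoryless tanh soft clipper, so the sketch ignores the time dependence that an end-to-end network on raw audio would also have to capture. `target_effect`, `drive`, and `train_mlp` are illustrative names.

```python
import numpy as np

def target_effect(x: np.ndarray, drive: float = 5.0) -> np.ndarray:
    """Hypothetical effect to emulate: memoryless tanh soft clipping."""
    return np.tanh(drive * x)

def train_mlp(x, y, hidden=8, lr=0.05, steps=3000, seed=0):
    """Fit y ~ tanh(x*W1 + b1) @ W2 + b2 per sample by full-batch gradient descent on MSE."""
    rng = np.random.default_rng(seed)
    W1 = rng.normal(scale=1.0, size=(1, hidden))
    b1 = np.zeros(hidden)
    W2 = rng.normal(scale=0.1, size=(hidden, 1))
    b2 = np.zeros(1)
    X, Y = x.reshape(-1, 1), y.reshape(-1, 1)
    n = len(X)
    for _ in range(steps):
        # Forward pass
        H = np.tanh(X @ W1 + b1)   # hidden activations, shape (n, hidden)
        P = H @ W2 + b2            # predicted output samples, shape (n, 1)
        # Backward pass for the mean squared error
        gP = 2.0 * (P - Y) / n
        gW2, gb2 = H.T @ gP, gP.sum(axis=0)
        gZ = (gP @ W2.T) * (1.0 - H**2)   # chain rule through tanh
        gW1, gb1 = X.T @ gZ, gZ.sum(axis=0)
        # Gradient-descent update (in place, so the closure below sees final weights)
        W1 -= lr * gW1
        b1 -= lr * gb1
        W2 -= lr * gW2
        b2 -= lr * gb2

    def model(x_new: np.ndarray) -> np.ndarray:
        return (np.tanh(x_new.reshape(-1, 1) @ W1 + b1) @ W2 + b2).ravel()

    return model

if __name__ == "__main__":
    # Toy "dry" signal: one cycle of a full-scale sine wave.
    x = np.sin(np.linspace(0, 2 * np.pi, 512))
    y = target_effect(x)
    model = train_mlp(x, y)
    print("MSE:", np.mean((model(x) - y) ** 2))
```

A natural next step would be to replace `target_effect` with recorded input/output pairs from a real device. Effects with memory, such as filtering before the clipping stage, would need a model that sees more than one sample at a time, for example a convolutional front end over frames of raw audio.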
