Liang Guotao

Codebook Transfer with Part-of-Speech for Vector-Quantized Image Modeling

Mar 15, 2024

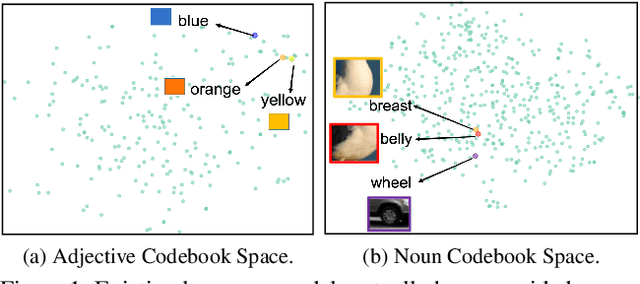

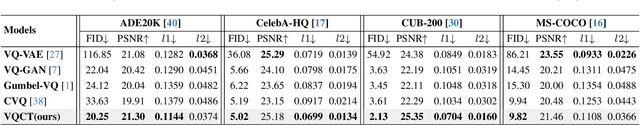

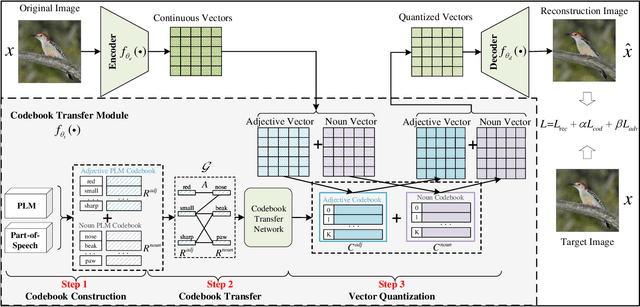

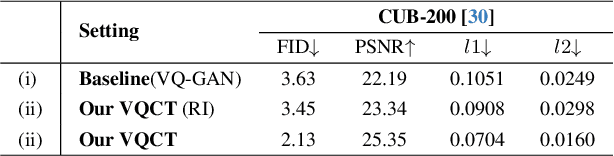

Abstract: Vector-Quantized Image Modeling (VQIM) is a fundamental research problem in image synthesis that aims to represent an image as a discrete token sequence. Existing studies effectively address this problem by learning a discrete codebook from scratch, in a code-independent manner, to quantize continuous representations into discrete tokens. However, learning a codebook this way is highly challenging and may be a key cause of codebook collapse: because optimization ignores both the relationships between codes and good codebook priors, some code vectors are rarely optimized and eventually die off. In this paper, inspired by pretrained language models, we observe that these models have in effect already pretrained a superior codebook on large text corpora, but this information is rarely exploited in VQIM. To this end, we propose a novel codebook transfer framework with part-of-speech, called VQCT, which transfers a well-trained codebook from pretrained language models to VQIM for robust codebook learning. Specifically, we first introduce a pretrained codebook from language models and part-of-speech knowledge as priors. Then, we construct a vision-related codebook from these priors to achieve codebook transfer. Finally, we design a novel codebook transfer network that exploits the abundant semantic relationships between codes in pretrained codebooks for robust VQIM codebook learning. Experimental results on four datasets show that our VQCT method achieves superior VQIM performance over previous state-of-the-art methods.
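To make the quantization step and the collapse problem concrete, here is a minimal sketch of nearest-code lookup in plain Python. It is not the VQCT method: the function names (`quantize`, `code_usage`), the toy two-dimensional codebook, and the feature values are all assumptions chosen for illustration. It only shows how continuous vectors map to discrete token indices, and how a code that is never selected receives no updates.

```python
from typing import List, Sequence


def squared_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def quantize(features: List[Sequence[float]],
             codebook: List[Sequence[float]]) -> List[int]:
    """Map each continuous feature to the index of its nearest code (hypothetical helper)."""
    tokens = []
    for feature in features:
        distances = [squared_distance(feature, code) for code in codebook]
        tokens.append(distances.index(min(distances)))
    return tokens


def code_usage(tokens: List[int], codebook_size: int) -> List[int]:
    """Count how often each code index is selected."""
    counts = [0] * codebook_size
    for token in tokens:
        counts[token] += 1
    return counts


# Toy data (assumed values): the third code lies far from every feature.
codebook = [[0.0, 0.0], [1.0, 1.0], [5.0, 5.0]]
features = [[0.1, -0.2], [0.9, 1.2], [0.2, 0.1], [1.1, 0.8]]

tokens = quantize(features, codebook)
usage = code_usage(tokens, len(codebook))
print(tokens)  # discrete token sequence
print(usage)   # a zero count marks a code that is never selected
```

In this toy setup the third code is never chosen, so a training loop that only updates selected codes would leave it untouched, which is the kind of dead code the abstract describes.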

HTP: Exploiting Holistic Temporal Patterns for Sequential Recommendation

Jul 22, 2023

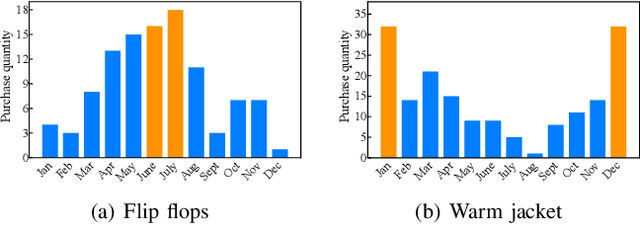

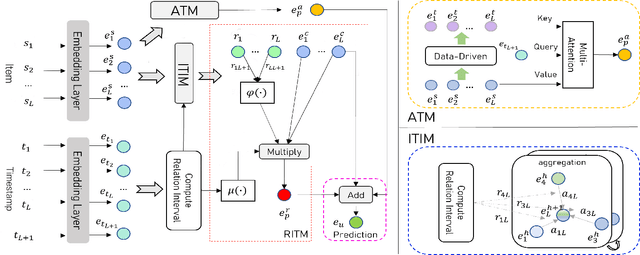

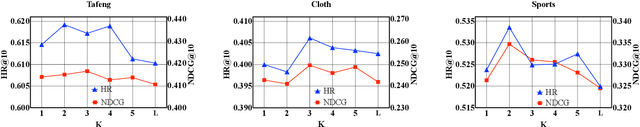

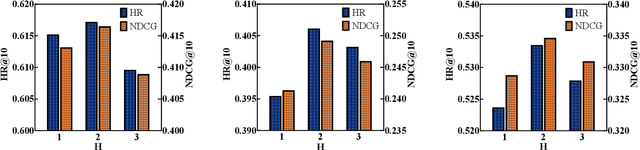

Abstract: Sequential recommender systems have achieved great success in next-item recommendation by explicitly exploiting the temporal order of users' historical interactions. In practice, user interactions contain more useful temporal information beyond order, as shown by some pioneering studies. In this paper, we systematically investigate various kinds of temporal information for sequential recommendation and identify three types of advantageous temporal patterns beyond order: absolute time information, relative item time intervals, and relative recommendation time intervals. We are the first to explore item-oriented absolute time patterns. While existing models consider only one or two of these three patterns, we propose a novel holistic temporal pattern based neural network, named HTP, to fully leverage all three. In particular, we introduce novel components to address the subtle correlations between relative item time intervals and relative recommendation time intervals, which pose a major technical challenge. Extensive experiments on three real-world benchmark datasets show that our HTP model consistently and substantially outperforms many state-of-the-art models. Our code is publicly available at https://github.com/623851394/HTP/tree/main/HTP-main
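As a rough illustration of the three temporal patterns, the sketch below derives one plausible raw feature for each from a list of interaction timestamps. This is not the HTP model or code from its repository: the function name `temporal_features`, the choice of hour and weekday as absolute time features, and the exact interval definitions are assumptions made for this example.

```python
from datetime import datetime, timezone
from typing import Dict, List


def temporal_features(timestamps: List[int], recommend_at: int) -> Dict[str, list]:
    """Derive simple temporal features from Unix timestamps (hypothetical helper).

    Assumed definitions, for illustration only:
    - absolute: (hour of day, weekday) of each interaction, in UTC
    - item_intervals: seconds since the previous interaction (0 for the first)
    - rec_intervals: seconds between each interaction and the recommendation time
    """
    absolute = []
    for t in timestamps:
        moment = datetime.fromtimestamp(t, tz=timezone.utc)
        absolute.append((moment.hour, moment.weekday()))

    item_intervals = [
        0 if i == 0 else timestamps[i] - timestamps[i - 1]
        for i in range(len(timestamps))
    ]
    rec_intervals = [recommend_at - t for t in timestamps]

    return {
        "absolute": absolute,
        "item_intervals": item_intervals,
        "rec_intervals": rec_intervals,
    }


# Toy history (assumed values): three interactions, recommendation one hour after the last.
history = [0, 3600, 90000]
result = temporal_features(history, recommend_at=93600)
print(result)
```

Note that the two interval features are coupled: each recommendation interval equals the sum of the later item intervals plus the gap to the recommendation time, which gives a sense of why the abstract treats their correlation as something to model explicitly.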
