Kyujin Choi

An Offline Meta Black-box Optimization Framework for Adaptive Design of Urban Traffic Light Management Systems

Aug 14, 2024Abstract:Complex urban road networks with high vehicle occupancy frequently face severe traffic congestion. Designing an effective strategy for managing multiple traffic lights plays a crucial role in managing congestion. However, most current traffic light management systems rely on human-crafted decisions, which may not adapt well to diverse traffic patterns. In this paper, we delve into two pivotal design components of the traffic light management system that can be dynamically adjusted to various traffic conditions: phase combination and phase time allocation. While numerous studies have sought an efficient strategy for managing traffic lights, most of these approaches consider a fixed traffic pattern and are limited to relatively small road networks. To overcome these limitations, we introduce a novel and practical framework to formulate the optimization of such design components using an offline meta black-box optimization. We then present a simple yet effective method to efficiently find a solution for the aforementioned problem. In our framework, we first collect an offline meta dataset consisting of pairs of design choices and corresponding congestion measures from various traffic patterns. After collecting the dataset, we employ the Attentive Neural Process (ANP) to predict the impact of the proposed design on congestion across various traffic patterns with well-calibrated uncertainty. Finally, Bayesian optimization, with ANP as a surrogate model, is utilized to find an optimal design for unseen traffic patterns through limited online simulations. Our experiment results show that our method outperforms state-of-the-art baselines on complex road networks in terms of the number of waiting vehicles. Surprisingly, the deployment of our method into a real-world traffic system was able to improve traffic throughput by 4.80\% compared to the original strategy.

Two-stage training algorithm for AI robot soccer

Apr 13, 2021

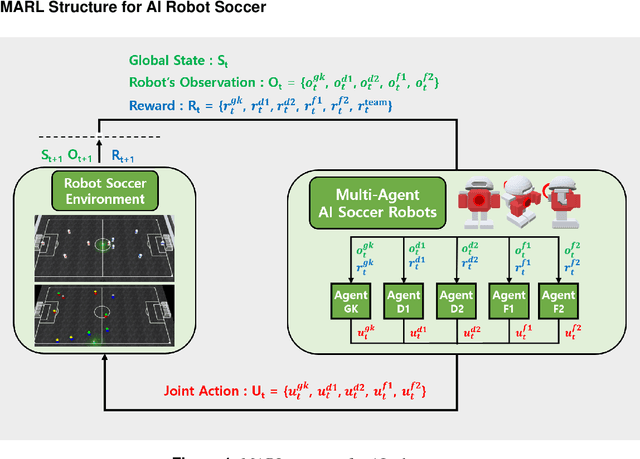

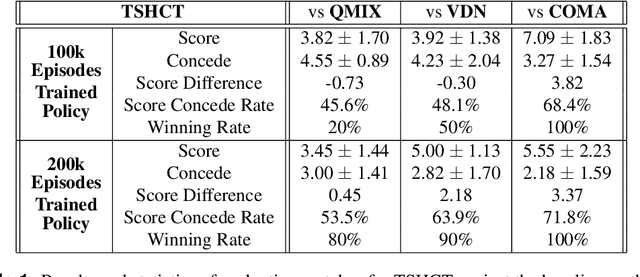

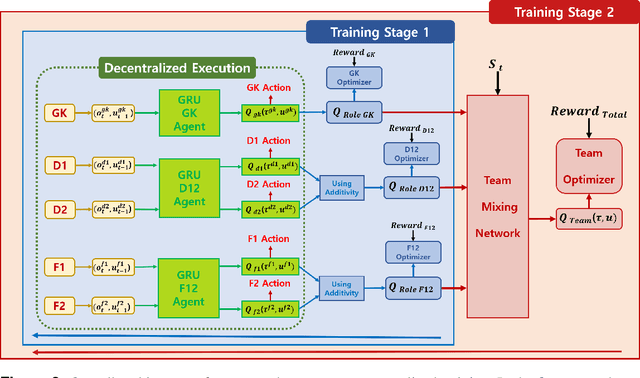

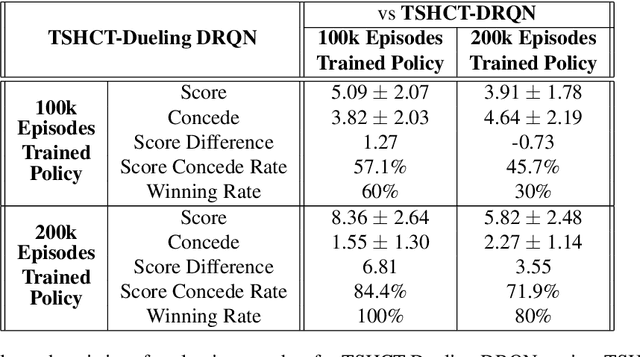

Abstract:In multi-agent reinforcement learning, the cooperative learning behavior of agents is very important. In the field of heterogeneous multi-agent reinforcement learning, cooperative behavior among different types of agents in a group is pursued. Learning a joint-action set during centralized training is an attractive way to obtain such cooperative behavior, however, this method brings limited learning performance with heterogeneous agents. To improve the learning performance of heterogeneous agents during centralized training, two-stage heterogeneous centralized training which allows the training of multiple roles of heterogeneous agents is proposed. During training, two training processes are conducted in a series. One of the two stages is to attempt training each agent according to its role, aiming at the maximization of individual role rewards. The other is for training the agents as a whole to make them learn cooperative behaviors while attempting to maximize shared collective rewards, e.g., team rewards. Because these two training processes are conducted in a series in every timestep, agents can learn how to maximize role rewards and team rewards simultaneously. The proposed method is applied to 5 versus 5 AI robot soccer for validation. Simulation results show that the proposed method can train the robots of the robot soccer team effectively, achieving higher role rewards and higher team rewards as compared to other approaches that can be used to solve problems of training cooperative multi-agent.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge