Kyle D. Julian

Generating Probabilistic Safety Guarantees for Neural Network Controllers

Mar 01, 2021

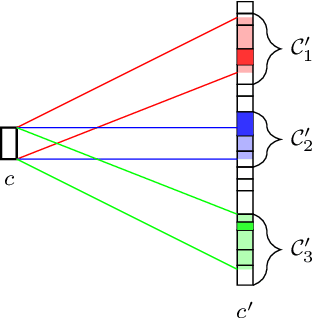

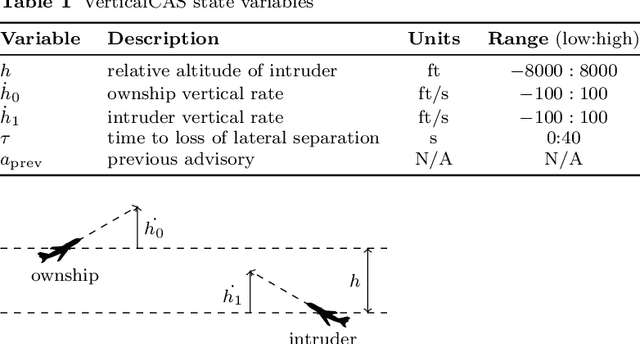

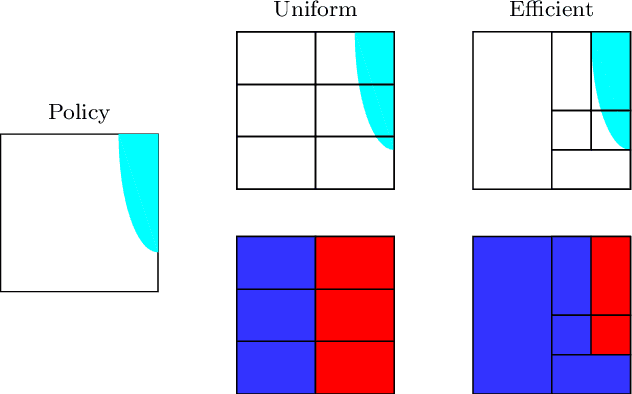

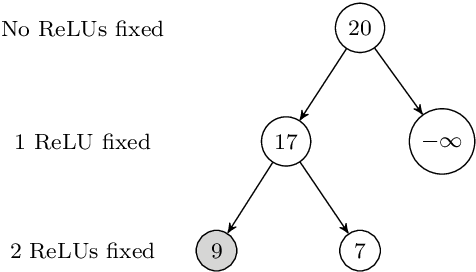

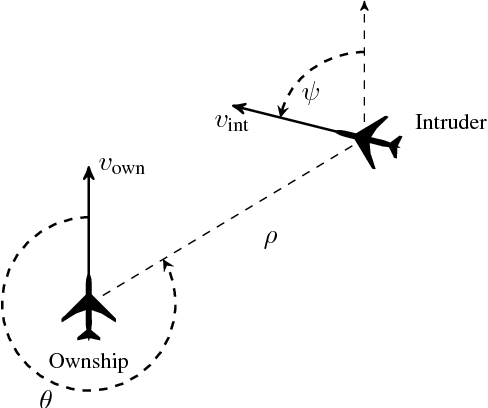

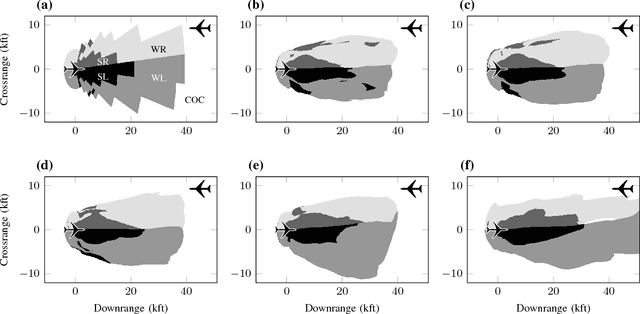

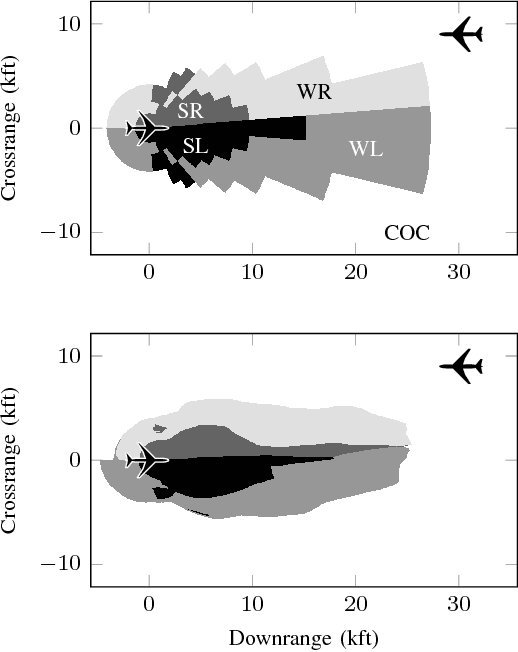

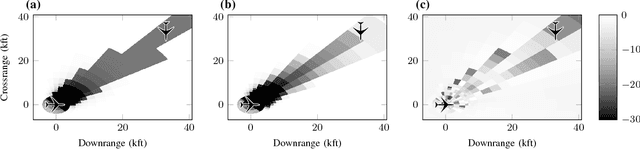

Abstract:Neural networks serve as effective controllers in a variety of complex settings due to their ability to represent expressive policies. The complex nature of neural networks, however, makes their output difficult to verify and predict, which limits their use in safety-critical applications. While simulations provide insight into the performance of neural network controllers, they are not enough to guarantee that the controller will perform safely in all scenarios. To address this problem, recent work has focused on formal methods to verify properties of neural network outputs. For neural network controllers, we can use a dynamics model to determine the output properties that must hold for the controller to operate safely. In this work, we develop a method to use the results from neural network verification tools to provide probabilistic safety guarantees on a neural network controller. We develop an adaptive verification approach to efficiently generate an overapproximation of the neural network policy. Next, we modify the traditional formulation of Markov decision process (MDP) model checking to provide guarantees on the overapproximated policy given a stochastic dynamics model. Finally, we incorporate techniques in state abstraction to reduce overapproximation error during the model checking process. We show that our method is able to generate meaningful probabilistic safety guarantees for aircraft collision avoidance neural networks that are loosely inspired by Airborne Collision Avoidance System X (ACAS X), a family of collision avoidance systems that formulates the problem as a partially observable Markov decision process (POMDP).

Global Optimization of Objective Functions Represented by ReLU Networks

Oct 08, 2020

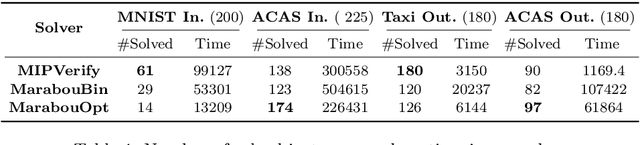

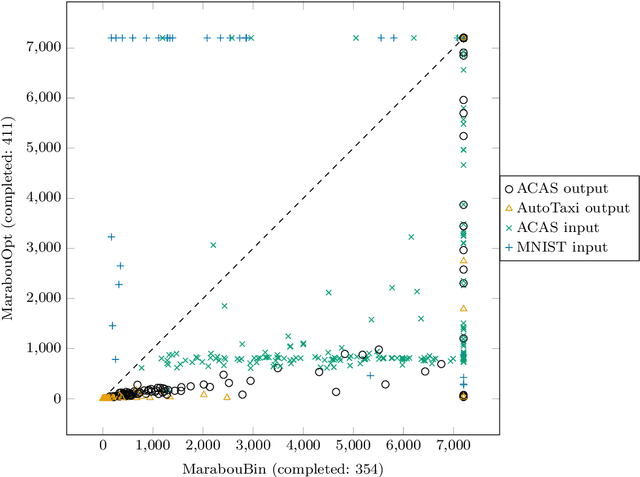

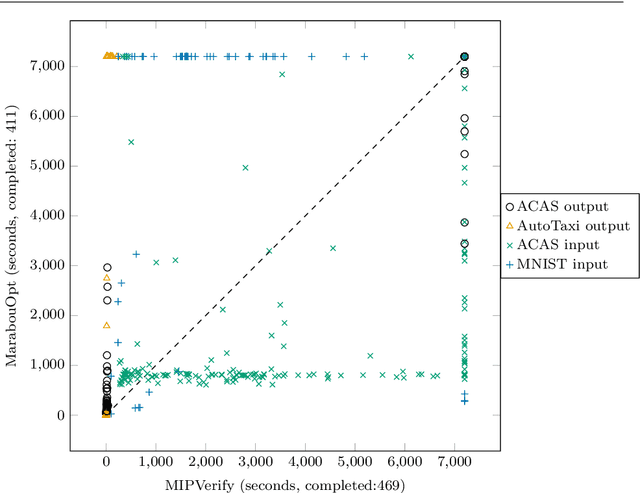

Abstract:Neural networks (NN) learn complex non-convex functions, making them desirable solutions in many contexts. Applying NNs to safety-critical tasks demands formal guarantees about their behavior. Recently, a myriad of verification solutions for NNs emerged using reachability, optimization, and search based techniques. Particularly interesting are adversarial examples, which reveal ways the network can fail. They are widely generated using incomplete methods, such as local optimization, which cannot guarantee optimality. We propose strategies to extend existing verifiers to provide provably optimal adversarial examples. Naive approaches combine bisection search with an off-the-shelf verifier, resulting in many expensive calls to the verifier. Instead, our proposed approach yields tightly integrated optimizers, achieving better runtime performance. We extend Marabou, an SMT-based verifier, and compare it with the bisection based approach and MIPVerify, an optimization based verifier.

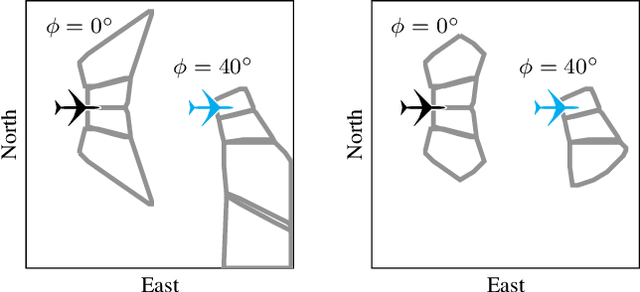

Validation of Image-Based Neural Network Controllers through Adaptive Stress Testing

Mar 05, 2020

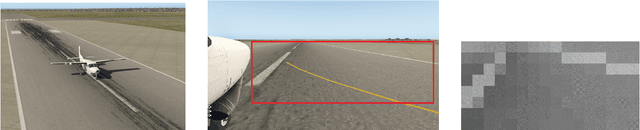

Abstract:Neural networks have become state-of-the-art for computer vision problems because of their ability to efficiently model complex functions from large amounts of data. While neural networks can be shown to perform well empirically for a variety of tasks, their performance is difficult to guarantee. Neural network verification tools have been developed that can certify robustness with respect to a given input image; however, for neural network systems used in closed-loop controllers, robustness with respect to individual images does not address multi-step properties of the neural network controller and its environment. Furthermore, neural network systems interacting in the physical world and using natural images are operating in a black-box environment, making formal verification intractable. This work combines the adaptive stress testing (AST) framework with neural network verification tools to search for the most likely sequence of image disturbances that cause the neural network controlled system to reach a failure. An autonomous aircraft taxi application is presented, and results show that the AST method finds failures with more likely image disturbances than baseline methods. Further analysis of AST results revealed an explainable cause of the failure, giving insight into the problematic scenarios that should be addressed.

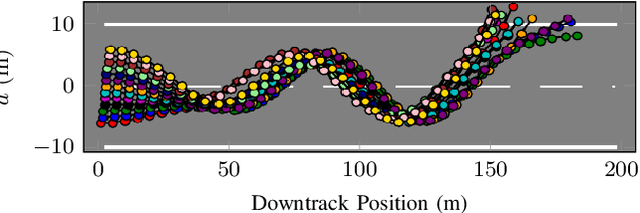

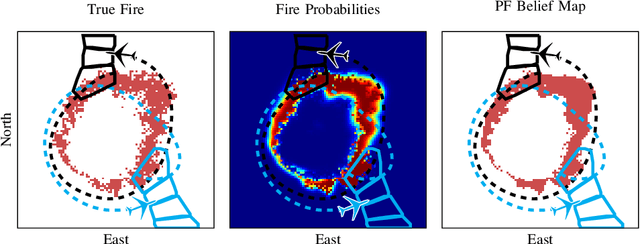

Image-based Guidance of Autonomous Aircraft for Wildfire Surveillance and Prediction

Mar 01, 2019

Abstract:Small unmanned aircraft can help firefighters combat wildfires by providing real-time surveillance of the growing fires. However, guiding the aircraft autonomously given only wildfire images is a challenging problem. This work models noisy images obtained from on-board cameras and proposes two approaches to filtering the wildfire images. The first approach uses a simple Kalman filter to reduce noise and update a belief map in observed areas. The second approach uses a particle filter to predict wildfire growth and uses observations to estimate uncertainties relating to wildfire expansion. The belief maps are used to train a deep reinforcement learning controller, which learns a policy to navigate the aircraft to survey the wildfire while avoiding flight directly over the fire. Simulation results show that the proposed controllers precisely guide the aircraft and accurately estimate wildfire growth, and a study of observation noise demonstrates the robustness of the particle filter approach.

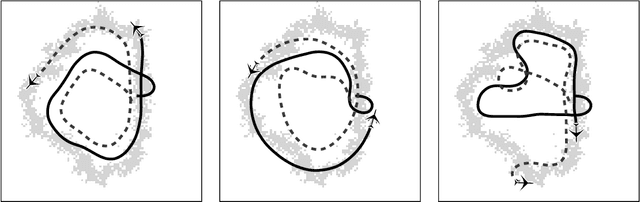

Distributed Wildfire Surveillance with Autonomous Aircraft using Deep Reinforcement Learning

Oct 09, 2018

Abstract:Teams of autonomous unmanned aircraft can be used to monitor wildfires, enabling firefighters to make informed decisions. However, controlling multiple autonomous fixed-wing aircraft to maximize forest fire coverage is a complex problem. The state space is high dimensional, the fire propagates stochastically, the sensor information is imperfect, and the aircraft must coordinate with each other to accomplish their mission. This work presents two deep reinforcement learning approaches for training decentralized controllers that accommodate the high dimensionality and uncertainty inherent in the problem. The first approach controls the aircraft using immediate observations of the individual aircraft. The second approach allows aircraft to collaborate on a map of the wildfire's state and maintain a time history of locations visited, which are used as inputs to the controller. Simulation results show that both approaches allow the aircraft to accurately track wildfire expansions and outperform an online receding horizon controller. Additional simulations demonstrate that the approach scales with different numbers of aircraft and generalizes to different wildfire shapes.

Deep Neural Network Compression for Aircraft Collision Avoidance Systems

Oct 09, 2018

Abstract:One approach to designing decision making logic for an aircraft collision avoidance system frames the problem as a Markov decision process and optimizes the system using dynamic programming. The resulting collision avoidance strategy can be represented as a numeric table. This methodology has been used in the development of the Airborne Collision Avoidance System X (ACAS X) family of collision avoidance systems for manned and unmanned aircraft, but the high dimensionality of the state space leads to very large tables. To improve storage efficiency, a deep neural network is used to approximate the table. With the use of an asymmetric loss function and a gradient descent algorithm, the parameters for this network can be trained to provide accurate estimates of table values while preserving the relative preferences of the possible advisories for each state. By training multiple networks to represent subtables, the network also decreases the required runtime for computing the collision avoidance advisory. Simulation studies show that the network improves the safety and efficiency of the collision avoidance system. Because only the network parameters need to be stored, the required storage space is reduced by a factor of 1000, enabling the collision avoidance system to operate using current avionics systems.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge