June Sig Sung

Controllable speech synthesis by learning discrete phoneme-level prosodic representations

Nov 29, 2022

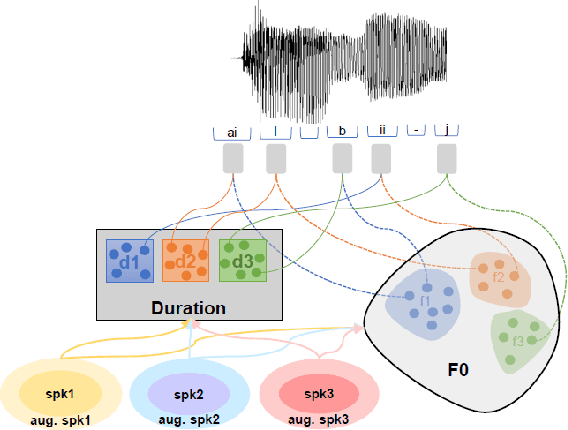

Abstract: In this paper, we present a novel method for phoneme-level prosody control of F0 and duration using intuitive discrete labels. We propose an unsupervised prosodic clustering process which is used to discretize phoneme-level F0 and duration features from a multispeaker speech dataset. These features are fed as an input sequence of prosodic labels to a prosody encoder module which augments an autoregressive attention-based text-to-speech model. We utilize various methods in order to improve prosodic control range and coverage, such as augmentation, F0 normalization, balanced clustering for duration and speaker-independent clustering. The final model enables fine-grained phoneme-level prosody control for all speakers contained in the training set, while maintaining the speaker identity. Instead of relying on reference utterances for inference, we introduce a prior prosody encoder which learns the style of each speaker and enables speech synthesis without the requirement of reference audio. We also fine-tune the multispeaker model to unseen speakers with limited amounts of data, as a realistic application scenario, and show that the prosody control capabilities are maintained, verifying that the speaker-independent prosodic clustering is effective. Experimental results show that the model has high output speech quality and that the proposed method allows efficient prosody control within each speaker's range despite the variability that a multispeaker setting introduces.
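The discretization step described in this abstract can be illustrated with a small sketch. The abstract does not name the clustering algorithm, so everything here is an assumption: per-speaker z-scoring stands in for "F0 normalization", and equal-frequency (quantile) binning stands in for "balanced clustering". The function names `normalize_f0`, `balanced_bin_edges` and `to_label` are hypothetical and do not come from the paper.

```python
from statistics import mean, pstdev

def normalize_f0(f0_by_speaker):
    """Z-score each speaker's phoneme-level F0 with that speaker's own mean and std.

    Assumption: each speaker has at least two distinct F0 values (std > 0).
    """
    normalized = {}
    for speaker, values in f0_by_speaker.items():
        mu, sigma = mean(values), pstdev(values)
        normalized[speaker] = [(v - mu) / sigma for v in values]
    return normalized

def balanced_bin_edges(values, n_bins):
    """Quantile edges, so every bin receives roughly the same number of samples.

    Assumption: a stand-in for the paper's balanced clustering, not its method.
    """
    ordered = sorted(values)
    return [ordered[(len(ordered) * k) // n_bins] for k in range(1, n_bins)]

def to_label(value, edges):
    """Discrete label: the number of edges the value reaches or exceeds."""
    return sum(value >= edge for edge in edges)

# Two speakers with very different pitch ranges (values in Hz, made up).
f0 = {"spk_a": [100.0, 110.0, 120.0, 130.0],
      "spk_b": [200.0, 220.0, 240.0, 260.0]}
normalized = normalize_f0(f0)

# Speaker-independent clustering: edges are fitted on the pooled, normalized data.
pooled = [v for values in normalized.values() for v in values]
edges = balanced_bin_edges(pooled, n_bins=4)
labels = {spk: [to_label(v, edges) for v in values]
          for spk, values in normalized.items()}
```

After normalization both speakers map onto the same label sequence, which is the property that lets a single label set control prosody within each speaker's own range.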

Predicting phoneme-level prosody latents using AR and flow-based Prior Networks for expressive speech synthesis

Nov 02, 2022

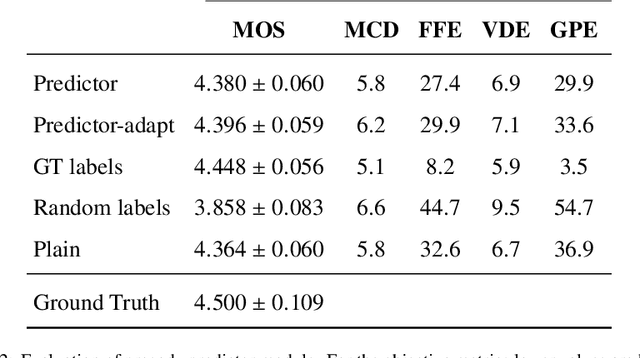

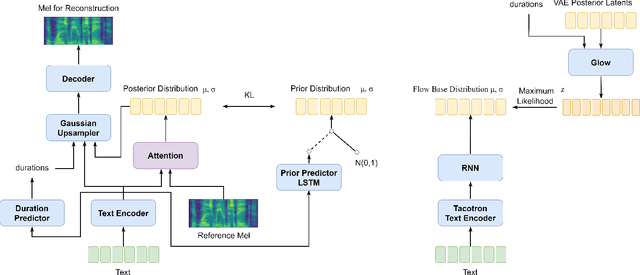

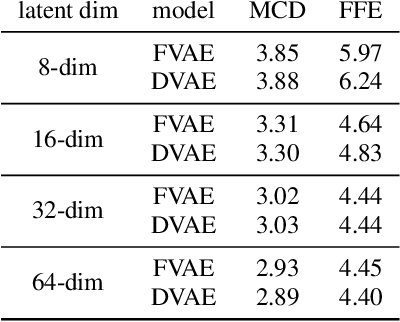

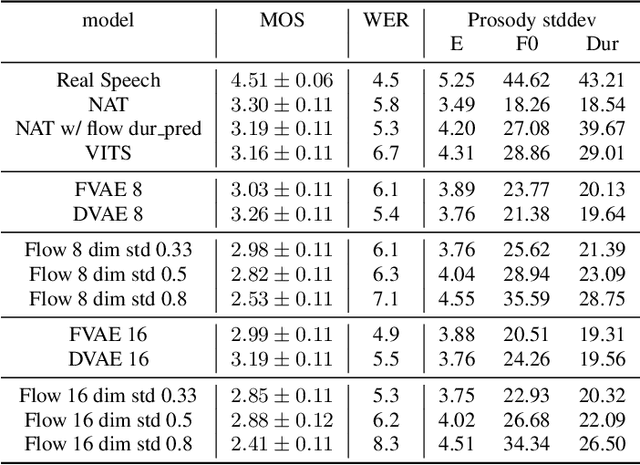

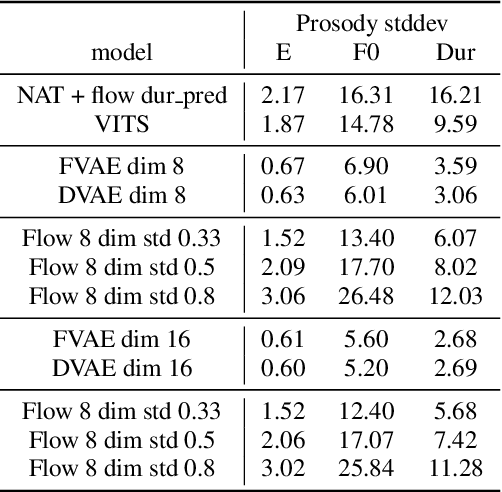

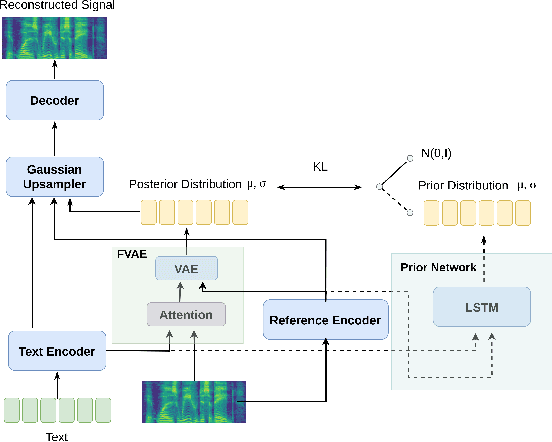

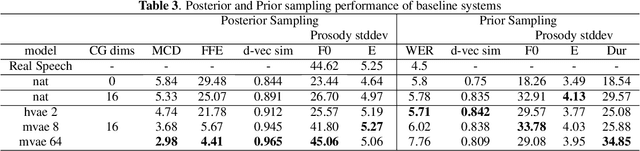

Abstract: A large part of the expressive speech synthesis literature focuses on learning prosodic representations of the speech signal which are then modeled by a prior distribution during inference. In this paper, we compare different prior architectures on the task of predicting phoneme-level prosodic representations extracted with an unsupervised FVAE model. We use both subjective and objective metrics to show that normalizing-flow-based prior networks can result in more expressive speech at the cost of a slight drop in quality. Furthermore, we show that the synthesized speech has higher variability, for a given text, due to the nature of normalizing flows. We also propose a Dynamical VAE model that can generate higher quality speech, although with decreased expressiveness and variability compared to the flow-based models.

Investigating Content-Aware Neural Text-To-Speech MOS Prediction Using Prosodic and Linguistic Features

Nov 01, 2022

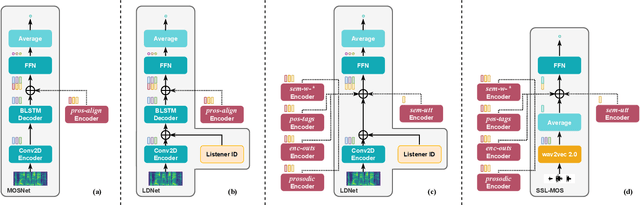

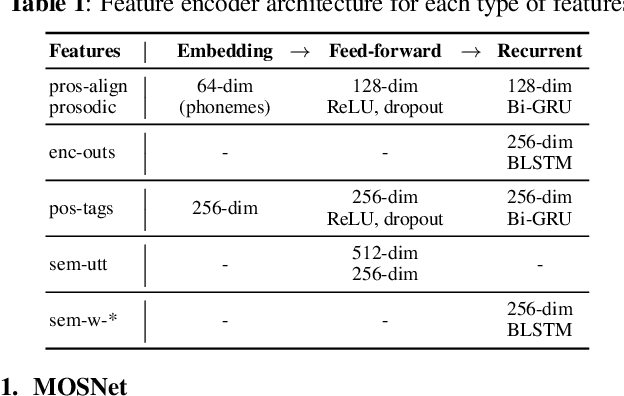

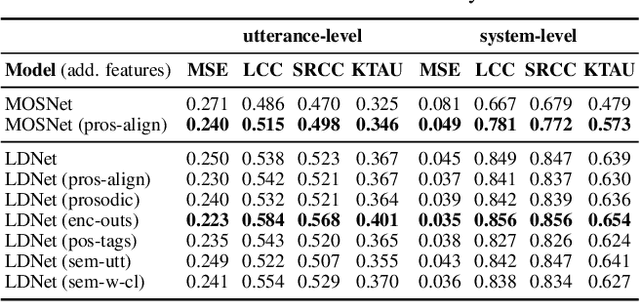

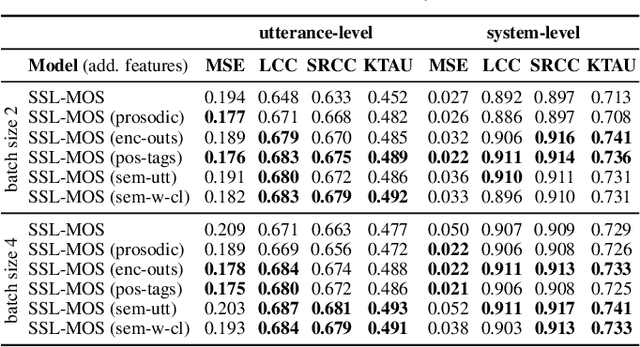

Abstract: Current state-of-the-art methods for automatic synthetic speech evaluation are based on MOS prediction neural models. Such MOS prediction models include MOSNet and LDNet, which use spectral features as input, and SSL-MOS, which relies on a pretrained self-supervised learning model that directly uses the speech signal as input. In modern high-quality neural TTS systems, prosodic appropriateness with regard to the spoken content is a decisive factor for speech naturalness. For this reason, we propose to include prosodic and linguistic features as additional inputs in MOS prediction systems, and evaluate their impact on the prediction outcome. We consider phoneme-level F0 and duration features as prosodic inputs, as well as Tacotron encoder outputs, POS tags and BERT embeddings as higher-level linguistic inputs. All MOS prediction systems are trained on SOMOS, a neural TTS-only dataset with crowdsourced naturalness MOS evaluations. Results show that the proposed additional features are beneficial in the MOS prediction task, improving the correlation of the predicted MOS scores with the ground truths at both the utterance level and the system level.
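The difference between utterance-level and system-level correlation can be shown with a short sketch. The abstract does not state which correlation coefficient is reported, so the use of Pearson correlation here is an assumption. The record layout `(system, true_mos, predicted_mos)` and the function names are hypothetical, and the scores are made up for illustration.

```python
from collections import defaultdict
from statistics import mean

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5

def utterance_level(records):
    """Correlate true and predicted MOS over individual utterances."""
    return pearson([t for _, t, _ in records], [p for _, _, p in records])

def system_level(records):
    """Average true and predicted MOS per TTS system, then correlate the means."""
    by_system = defaultdict(list)
    for system, true_mos, pred_mos in records:
        by_system[system].append((true_mos, pred_mos))
    true_means = [mean(t for t, _ in rows) for rows in by_system.values()]
    pred_means = [mean(p for _, p in rows) for rows in by_system.values()]
    return pearson(true_means, pred_means)

# Made-up scores: (system id, ground-truth MOS, predicted MOS).
records = [("A", 3.0, 3.5), ("A", 4.0, 3.5),
           ("B", 2.0, 2.5), ("B", 3.0, 2.5),
           ("C", 4.0, 4.5), ("C", 5.0, 4.5)]
utt_corr = utterance_level(records)
sys_corr = system_level(records)
```

In this toy data the predictor ranks the systems perfectly but ignores differences between utterances of the same system, so the system-level correlation is higher than the utterance-level one.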

Learning utterance-level representations through token-level acoustic latents prediction for Expressive Speech Synthesis

Nov 01, 2022

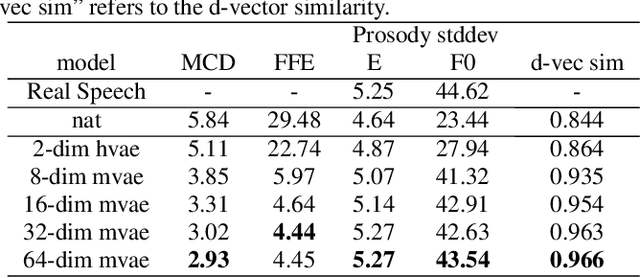

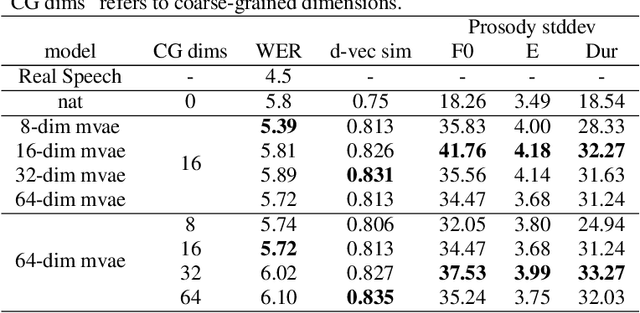

Abstract: This paper proposes an Expressive Speech Synthesis model that utilizes token-level latent prosodic variables in order to capture and control utterance-level attributes, such as character acting voice and speaking style. Current works aim to explicitly factorize such fine-grained and utterance-level speech attributes into different representations extracted by modules that operate at the corresponding level. We show that the fine-grained latent space also captures coarse-grained information, which is more evident as the dimension of the latent space increases in order to capture diverse prosodic representations. Therefore, a trade-off arises between the diversity of the token-level and utterance-level representations and their disentanglement. We alleviate this issue by first capturing rich speech attributes in a token-level latent space and then separately training a prior network that, given the input text, learns utterance-level representations in order to predict the phoneme-level posterior latents extracted during the previous step. Both qualitative and quantitative evaluations are used to demonstrate the effectiveness of the proposed approach. Audio samples are available on our demo page.

Generating Gender-Ambiguous Text-to-Speech Voices

Nov 01, 2022

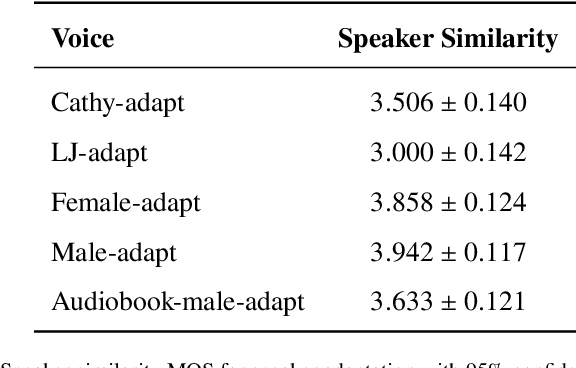

Abstract: The gender of a voice assistant or any voice user interface is a central element of its perceived identity. While a female voice is a common choice, there is an increasing interest in alternative approaches where the gender is ambiguous rather than clearly identifying as female or male. This work addresses the task of generating gender-ambiguous text-to-speech (TTS) voices that do not correspond to any existing person. This is accomplished by sampling from a latent speaker embedding space that was formed while training a multilingual, multi-speaker TTS system on data from multiple male and female speakers. Various options are investigated regarding the sampling process. In our experiments, the effects of different sampling choices on the gender ambiguity and the naturalness of the resulting voices are evaluated. The proposed method is shown to be able to efficiently generate novel speakers that are superior to a baseline averaged speaker embedding. To our knowledge, this is the first systematic approach that can reliably generate a range of gender-ambiguous voices to meet diverse user requirements.

Cross-lingual Text-To-Speech with Flow-based Voice Conversion for Improved Pronunciation

Oct 31, 2022

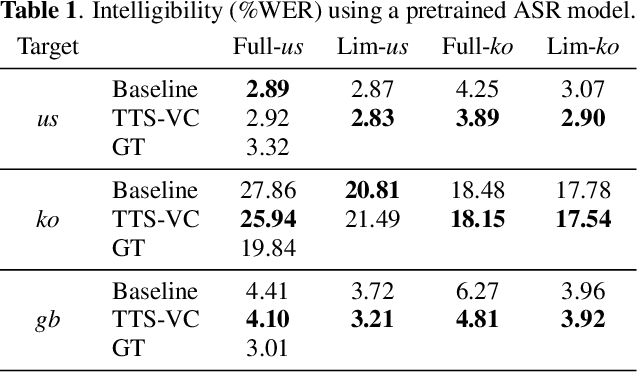

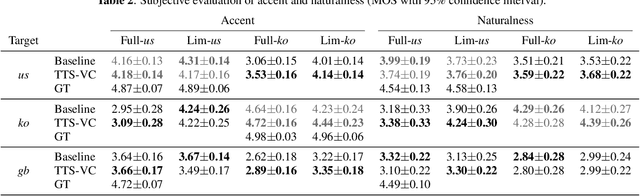

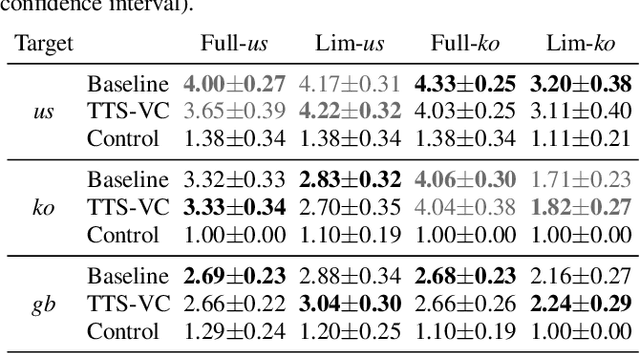

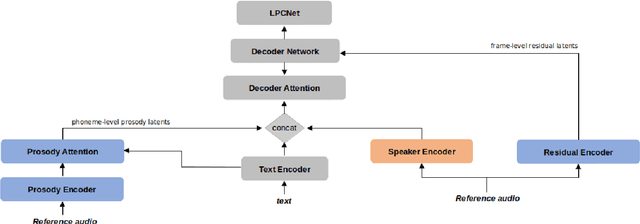

Abstract: This paper presents a method for end-to-end cross-lingual text-to-speech (TTS) which aims to preserve the target language's pronunciation regardless of the original speaker's language. The model used is based on a non-attentive Tacotron architecture, where the decoder has been replaced with a normalizing flow network conditioned on the speaker identity, allowing both TTS and voice conversion (VC) to be performed by the same model due to the inherent linguistic content and speaker identity disentanglement. When used in a cross-lingual setting, acoustic features are initially produced with a native speaker of the target language and then voice conversion is applied by the same model in order to convert these features to the target speaker's voice. We verify through objective and subjective evaluations that our method can have benefits compared to baseline cross-lingual synthesis. By including speakers averaging 7.5 minutes of speech, we also present positive results on low-resource scenarios.
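The reason a speaker-conditioned normalizing flow can serve for both TTS and voice conversion can be seen in a toy sketch. This is an illustration only, not the paper's architecture: a single per-speaker affine transform with hand-set `(shift, scale)` parameters stands in for a learned deep flow conditioned on speaker identity, and all names (`to_latent`, `from_latent`, `convert`, `params`) are hypothetical.

```python
def to_latent(frames, speaker, params):
    """Forward pass: remove the speaker's shift and scale to reach the latent space."""
    shift, scale = params[speaker]
    return [(x - shift) / scale for x in frames]

def from_latent(latents, speaker, params):
    """Inverse pass: re-apply a speaker's shift and scale to leave the latent space."""
    shift, scale = params[speaker]
    return [z * scale + shift for z in latents]

def convert(frames, source, target, params):
    """Voice conversion: encode with the source speaker, decode with the target speaker."""
    return from_latent(to_latent(frames, source, params), target, params)

# Hand-set toy parameters (assumption): (shift, scale) per speaker.
params = {"native": (0.0, 1.0), "target": (2.0, 0.5)}

# Features first produced with a native speaker of the target language...
native_frames = [1.0, -1.0]
# ...then converted to the target speaker's voice by the same invertible model.
target_frames = convert(native_frames, "native", "target", params)
```

Because the transform is invertible, converting back from the target speaker to the native speaker recovers the original frames, which mirrors the content and speaker disentanglement the abstract relies on.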

Fine-grained Noise Control for Multispeaker Speech Synthesis

Apr 11, 2022

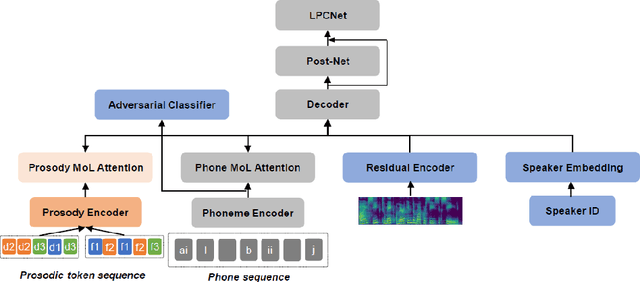

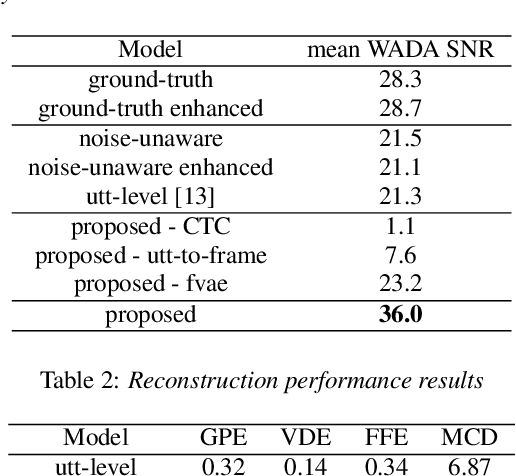

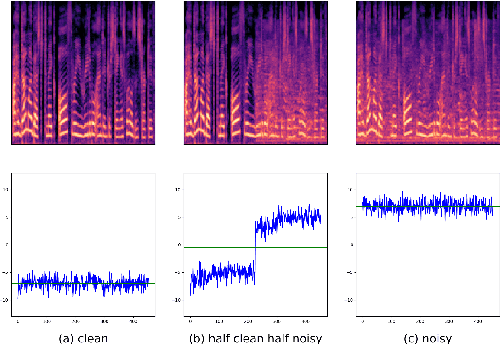

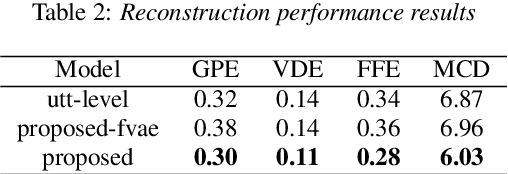

Abstract: A text-to-speech (TTS) model typically factorizes speech attributes such as content, speaker and prosody into disentangled representations. Recent works aim to additionally model the acoustic conditions explicitly, in order to disentangle the primary speech factors, i.e. linguistic content, prosody and timbre from any residual factors, such as recording conditions and background noise. This paper proposes unsupervised, interpretable and fine-grained noise and prosody modeling. We incorporate adversarial training, representation bottleneck and utterance-to-frame modeling in order to learn frame-level noise representations. To the same end, we perform fine-grained prosody modeling via a Fully Hierarchical Variational AutoEncoder (FVAE) which additionally results in more expressive speech synthesis.

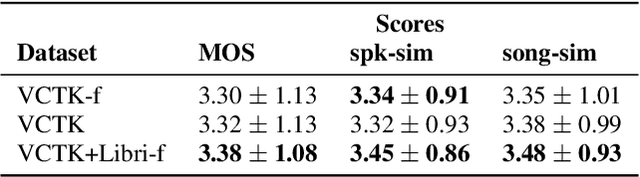

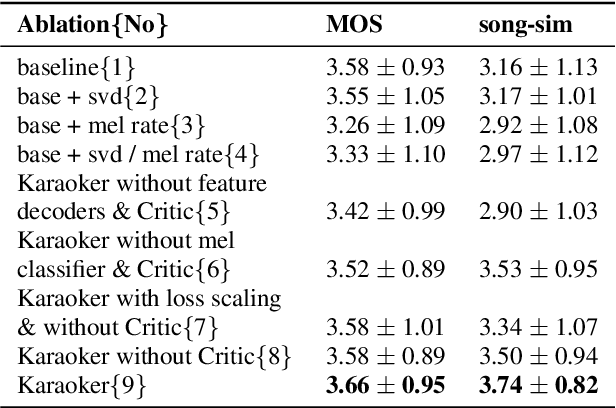

Karaoker: Alignment-free singing voice synthesis with speech training data

Apr 08, 2022

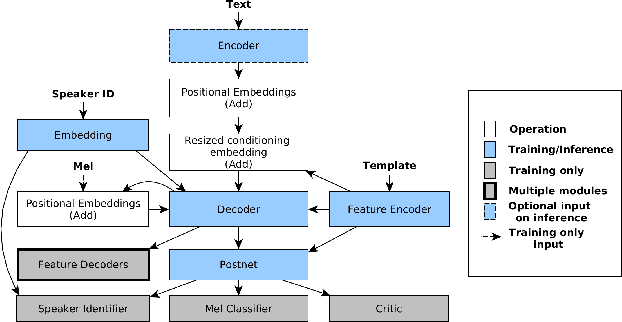

Abstract: Existing singing voice synthesis (SVS) models are usually trained on singing data and depend on either error-prone time-alignment and duration features or explicit music score information. In this paper, we propose Karaoker, a multispeaker Tacotron-based model conditioned on voice characteristic features that is trained exclusively on spoken data without requiring time-alignments. Karaoker synthesizes singing voice following a multi-dimensional template extracted from a source waveform of an unseen speaker/singer. The model is jointly conditioned with a single deep convolutional encoder on continuous data including pitch, intensity, harmonicity, formants, cepstral peak prominence and octaves. We extend the text-to-speech training objective with feature reconstruction, classification and speaker identification tasks that guide the model to an accurate result. In addition to multi-tasking, we also employ a Wasserstein GAN training scheme as well as new losses on the acoustic model's output to further refine the quality of the model.

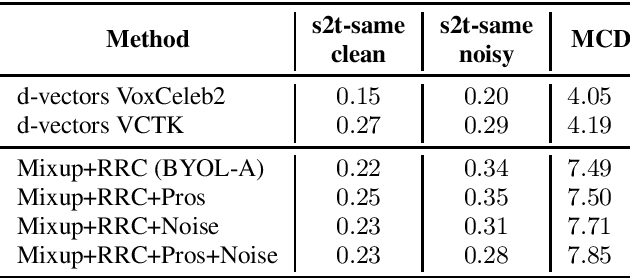

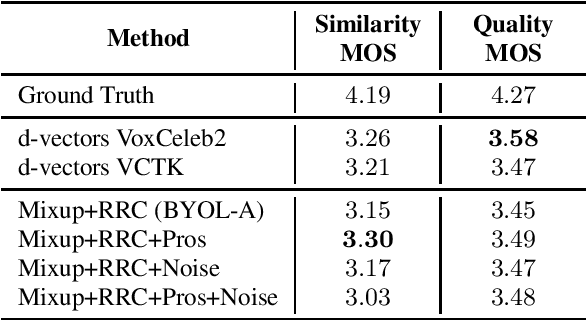

Self supervised learning for robust voice cloning

Apr 07, 2022

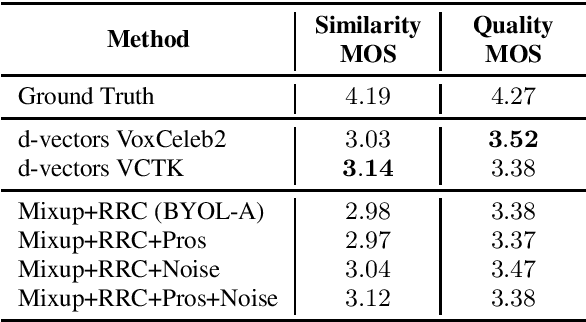

Abstract: Voice cloning is a difficult task which requires robust and informative features incorporated in a high quality TTS system in order to effectively copy an unseen speaker's voice. In our work, we utilize features learned in a self-supervised framework via the Bootstrap Your Own Latent (BYOL) method, which is shown to produce high quality speech representations when specific audio augmentations are applied to the vanilla algorithm. We further extend the augmentations in the training procedure to help the resulting features capture the speaker identity and to make them robust to noise and acoustic conditions. The learned features are used as pre-trained utterance-level embeddings and as inputs to a Non-Attentive Tacotron based architecture, aiming to achieve multispeaker speech synthesis without utilizing additional speaker features. This method enables us to train our model on an unlabeled multispeaker dataset as well as use unseen speaker embeddings to copy a speaker's voice. Subjective and objective evaluations are used to validate the proposed model, as well as the robustness to the acoustic conditions of the target utterance.

SOMOS: The Samsung Open MOS Dataset for the Evaluation of Neural Text-to-Speech Synthesis

Apr 06, 2022

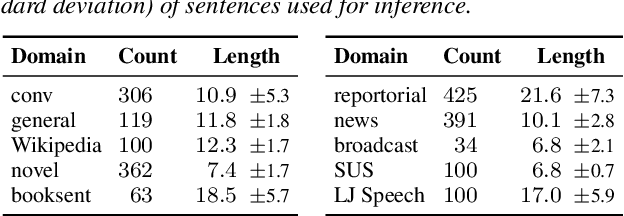

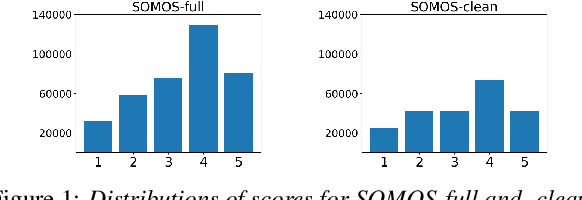

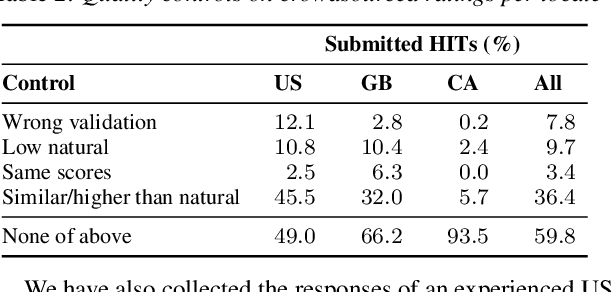

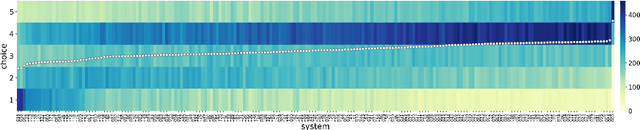

Abstract: In this work, we present the SOMOS dataset, the first large-scale mean opinion scores (MOS) dataset consisting solely of neural text-to-speech (TTS) samples. It can be employed to train automatic MOS prediction systems focused on the assessment of modern synthesizers, and can stimulate advancements in acoustic model evaluation. It consists of 20K synthetic utterances of the LJ Speech voice, a public domain speech dataset which is a common benchmark for building neural acoustic models and vocoders. Utterances are generated from 200 TTS systems including vanilla neural acoustic models as well as models which allow prosodic variations. An LPCNet vocoder is used for all systems, so that the samples' variation depends only on the acoustic models. The synthesized utterances provide balanced and adequate domain and length coverage. We collect MOS naturalness evaluations on 3 English Amazon Mechanical Turk locales and share practices leading to reliable crowdsourced annotations for this task. Baseline results of state-of-the-art MOS prediction models on the SOMOS dataset are presented, while we show the challenges that such models face when assigned to evaluate synthetic utterances.
