Jinchao Huang

A Practical Two-stage Ranking Framework for Cross-market Recommendation

Apr 27, 2022

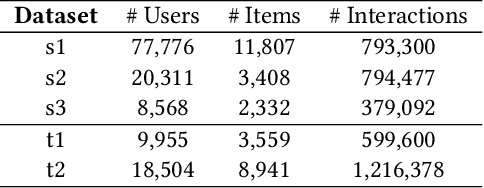

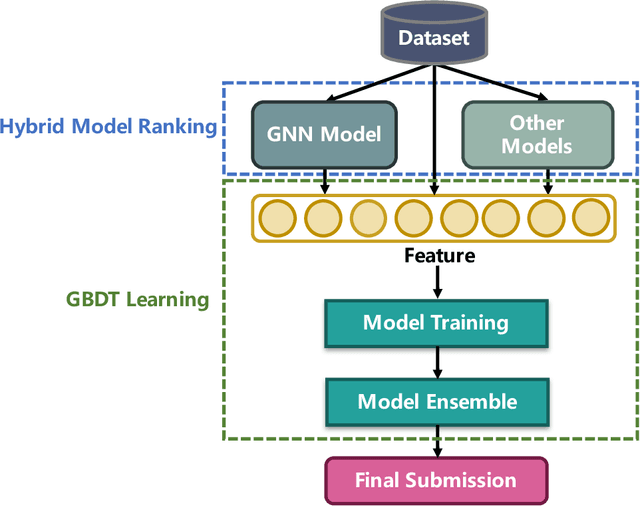

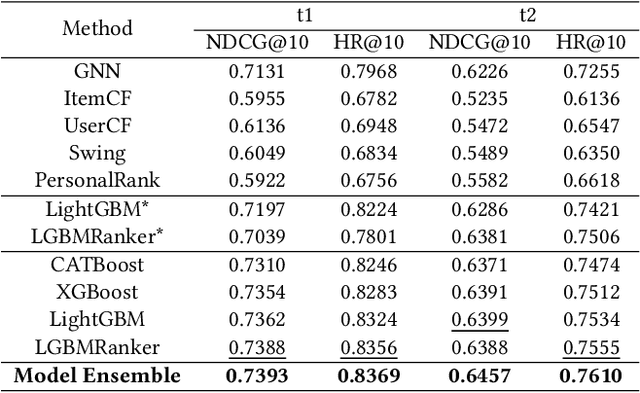

Abstract: Cross-market recommendation aims to recommend products to users in a resource-scarce target market by leveraging user behaviors from similar resource-rich markets. It is crucial for e-commerce companies but has received relatively little research attention. In this paper, we present our detailed solution to the cross-market recommendation contest WSDM CUP 2022. To better utilize collaborative signals and the similarities between target and source markets, we carefully consider multiple features and stack learning models, including deep graph recommendation models (Graph Neural Network, DeepWalk, etc.) and traditional recommendation models (ItemCF, UserCF, Swing, etc.). Furthermore, we adopt tree-based ensembling methods such as LightGBM, which show superior performance on prediction tasks, to generate the final results. We conduct comprehensive experiments on the XMRec dataset, verifying the effectiveness of our model. The proposed solution of our team, WSDM_Coggle_, was selected as the second-place submission.
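
ItemCF is one of the traditional models named above. As a rough illustration only, the sketch below shows item-based collaborative filtering recall in Python. It assumes (our assumption, not the authors' stated pipeline) that source-market interactions are simply pooled with target-market interactions before computing cosine-normalized item co-occurrence, that user ids are disjoint across markets, and that item ids are shared. The function names `item_cf_similarity` and `recall_candidates` and the toy data are hypothetical, and the second-stage LightGBM ranker is omitted.

```python
from collections import defaultdict
from math import sqrt


def item_cf_similarity(user_items):
    """ItemCF similarity: co-occurrence count / sqrt(pop_i * pop_j)."""
    pop = defaultdict(int)                      # number of users per item
    co = defaultdict(lambda: defaultdict(int))  # pairwise co-occurrence counts
    for items in user_items.values():
        uniq = set(items)
        for i in uniq:
            pop[i] += 1
            for j in uniq:
                if i != j:
                    co[i][j] += 1
    return {
        i: {j: c / sqrt(pop[i] * pop[j]) for j, c in row.items()}
        for i, row in co.items()
    }


def recall_candidates(user, user_items, sim, k=10):
    """Score unseen items by summing their similarity to the user's seen items."""
    seen = set(user_items.get(user, []))
    scores = defaultdict(float)
    for i in seen:
        for j, s in sim.get(i, {}).items():
            if j not in seen:
                scores[j] += s
    # Descending score; ties broken by item id so the output is deterministic.
    return sorted(scores.items(), key=lambda kv: (-kv[1], kv[0]))[:k]


if __name__ == "__main__":
    # Hypothetical toy data: user -> interacted item ids.
    target = {"t1": ["a", "b"], "t2": ["b"]}
    source = {"s1": ["a", "b", "c"], "s2": ["b", "c"], "s3": ["a", "c"]}
    pooled = {**source, **target}  # assumption: naive pooling of markets

    print(recall_candidates("t1", target, item_cf_similarity(target)))
    print(recall_candidates("t1", pooled, item_cf_similarity(pooled)))
```

With target-market data alone, user `t1` has already seen every item, so no candidate is recalled; after pooling, item `c` surfaces through its co-occurrence with `a` and `b` in the source market. In a two-stage setup, candidates like these would then be scored by a learned ranker.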

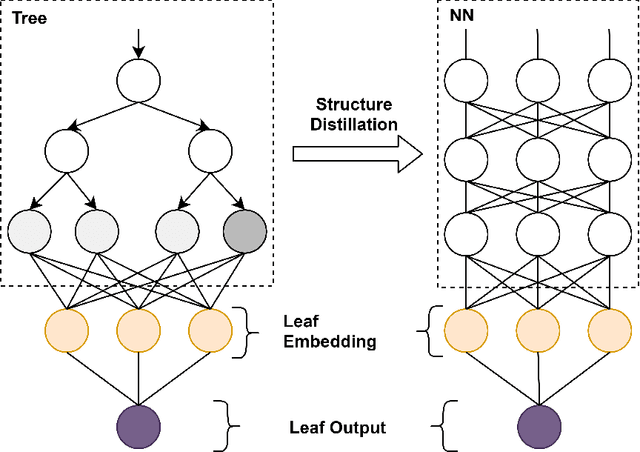

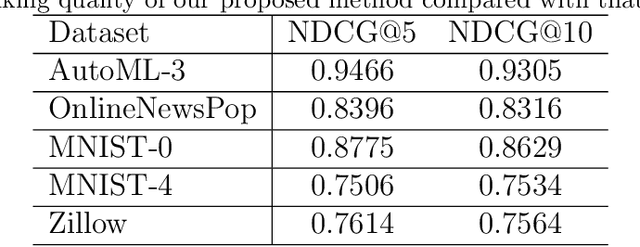

Joint learning of interpretation and distillation

May 24, 2020

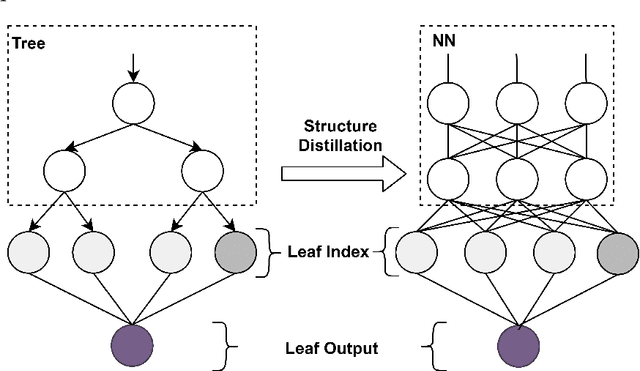

Abstract: The extra trust brought by model interpretation has made it an indispensable part of machine learning systems. But to explain a distilled model's prediction, one may either work with the student model itself or turn to its teacher model. This leads to a more fundamental question: should a distilled model give a similar prediction, for a similar reason, as its teacher model on the same input? This question becomes even more crucial when the two models have dramatically different structures, as in GBDT2NN, which distills a gradient boosted decision tree into a neural network. This paper conducts an empirical study of a new approach to explaining each prediction of GBDT2NN, and of how imitating the explanation can further improve the distillation process as an auxiliary learning task. Experiments on several benchmarks show that the proposed methods achieve better performance on both explanations and predictions.
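
To make the auxiliary-task idea concrete, the following is a minimal sketch, under our own assumptions, of a joint objective: a distillation term (student predictions against teacher predictions) plus a weighted explanation-imitation term (student per-feature attributions against teacher attributions). Mean squared error for both terms, the weight `lam`, and the names `mse` and `joint_distillation_loss` are hypothetical choices for illustration; the abstract does not specify the paper's actual loss or explanation method.

```python
from typing import Sequence


def mse(a: Sequence[float], b: Sequence[float]) -> float:
    """Mean squared error between two non-empty, equal-length vectors."""
    if len(a) != len(b) or not a:
        raise ValueError("inputs must be non-empty and the same length")
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


def joint_distillation_loss(
    student_pred: Sequence[float],
    teacher_pred: Sequence[float],
    student_attr: Sequence[Sequence[float]],
    teacher_attr: Sequence[Sequence[float]],
    lam: float = 0.5,
) -> float:
    """Hypothetical objective: distillation loss + lam * explanation-imitation loss.

    student_attr[k] and teacher_attr[k] are per-feature attributions for sample k.
    """
    if len(student_attr) != len(teacher_attr) or not student_attr:
        raise ValueError("attribution batches must be non-empty and the same size")
    distill = mse(student_pred, teacher_pred)
    # Average the per-sample attribution mismatch over the batch.
    explain = sum(mse(s, t) for s, t in zip(student_attr, teacher_attr)) / len(student_attr)
    return distill + lam * explain


if __name__ == "__main__":
    loss = joint_distillation_loss(
        student_pred=[0.2, 0.8],
        teacher_pred=[0.0, 1.0],
        student_attr=[[0.5, 0.5], [1.0, 0.0]],
        teacher_attr=[[1.0, 0.0], [1.0, 0.0]],
    )
    print(round(loss, 4))  # 0.04 + 0.5 * 0.125 = 0.1025
```

Setting `lam` to zero recovers plain prediction-matching distillation, so the weight controls how strongly the student is pushed to agree with the teacher's reasons as well as its outputs.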
