Jiayue Yu

Self-Adaptive Robust Motion Planning for High DoF Robot Manipulator using Deep MPC

Jul 17, 2024

Abstract:In contemporary control theory, self-adaptive methodologies are highly esteemed for their inherent flexibility and robustness in managing modeling uncertainties. Particularly, robust adaptive control stands out owing to its potent capability of leveraging robust optimization algorithms to approximate cost functions and relax the stringent constraints often associated with conventional self-adaptive control paradigms. Deep learning methods, characterized by their extensive layered architecture, offer significantly enhanced approximation prowess. Notwithstanding, the implementation of deep learning is replete with challenges, particularly the phenomena of vanishing and exploding gradients encountered during the training process. This paper introduces a self-adaptive control scheme integrating a deep MPC, governed by an innovative weight update law designed to mitigate the vanishing and exploding gradient predicament by employing the gradient sign exclusively. The proffered controller is a self-adaptive dynamic inversion mechanism, integrating an augmented state observer within an auxiliary estimation circuit to enhance the training phase. This approach enables the deep MPC to learn the entire plant model in real-time and the efficacy of the controller is demonstrated through simulations involving a high-DoF robot manipulator, wherein the controller adeptly learns the nonlinear plant dynamics expeditiously and exhibits commendable performance in the motion planning task.

Development and Application of a Monte Carlo Tree Search Algorithm for Simulating Da Vinci Code Game Strategies

Mar 15, 2024

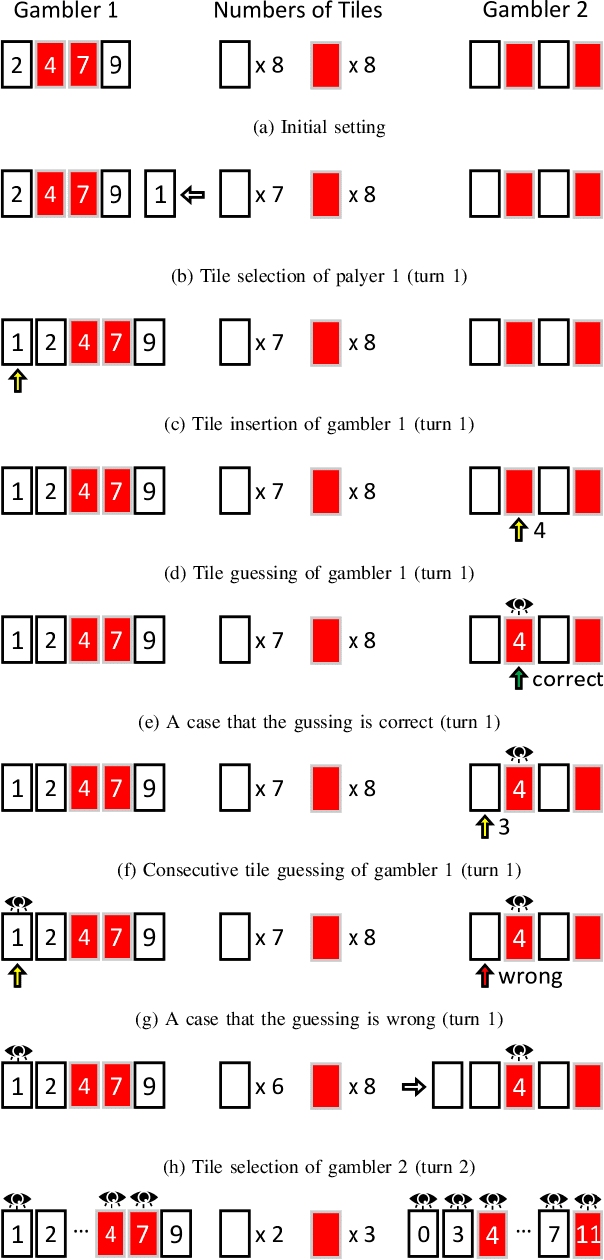

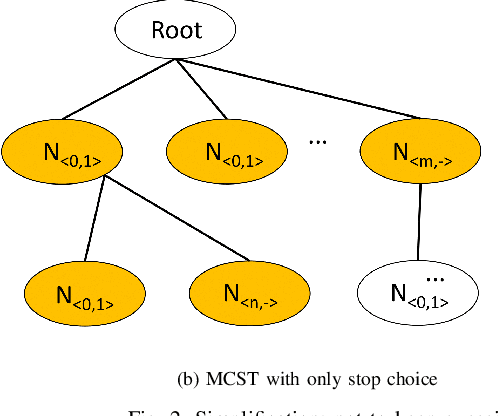

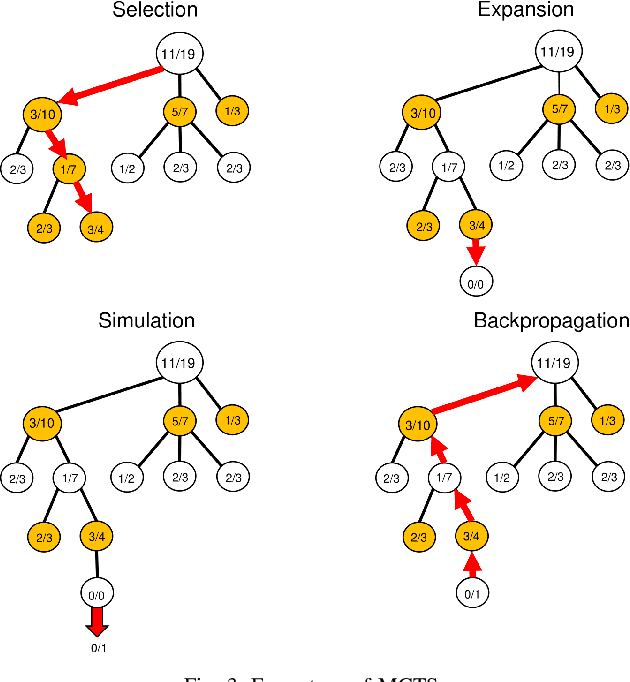

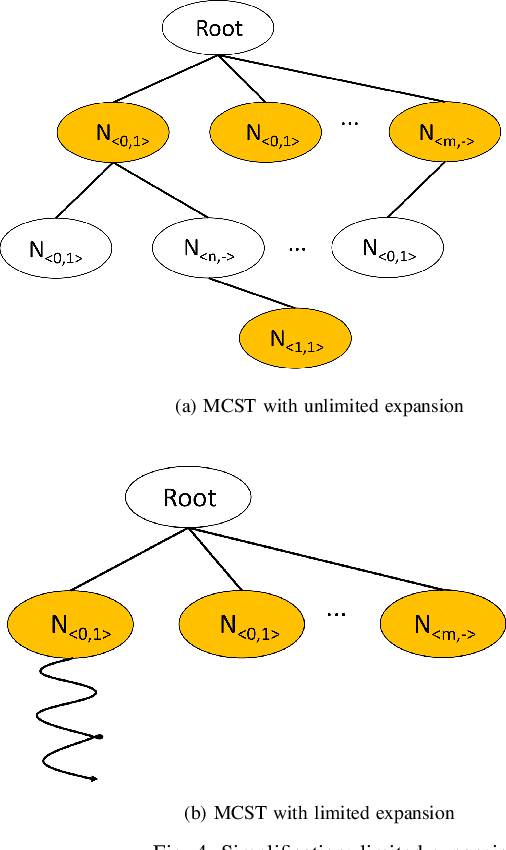

Abstract:In this study, we explore the efficiency of the Monte Carlo Tree Search (MCTS), a prominent decision-making algorithm renowned for its effectiveness in complex decision environments, contingent upon the volume of simulations conducted. Notwithstanding its broad applicability, the algorithm's performance can be adversely impacted in certain scenarios, particularly within the domain of game strategy development. This research posits that the inherent branch divergence within the Da Vinci Code board game significantly impedes parallelism when executed on Graphics Processing Units (GPUs). To investigate this hypothesis, we implemented and meticulously evaluated two variants of the MCTS algorithm, specifically designed to assess the impact of branch divergence on computational performance. Our comparative analysis reveals a linear improvement in performance with the CPU-based implementation, in stark contrast to the GPU implementation, which exhibits a non-linear enhancement pattern and discernible performance troughs. These findings contribute to a deeper understanding of the MCTS algorithm's behavior in divergent branch scenarios, highlighting critical considerations for optimizing game strategy algorithms on parallel computing architectures.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge