Jia Wei Sii

Chirpy3D: Continuous Part Latents for Creative 3D Bird Generation

Jan 07, 2025

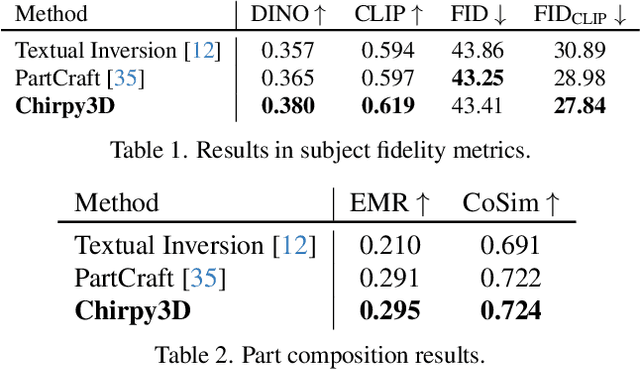

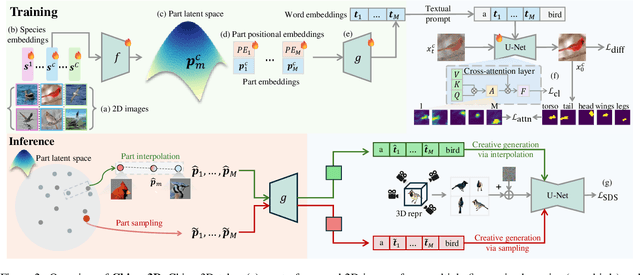

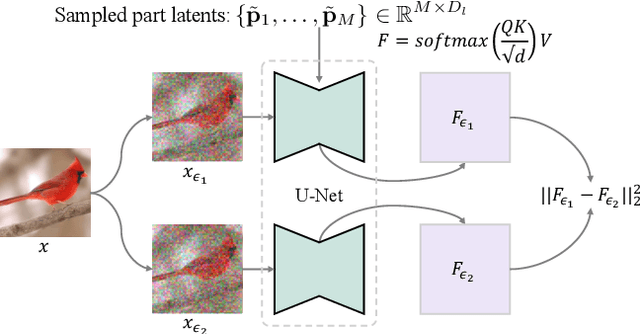

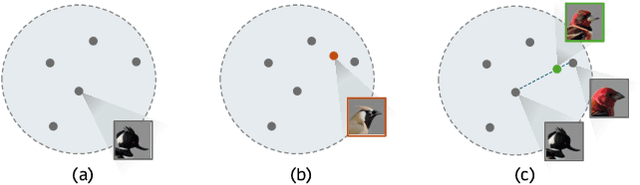

Abstract: In this paper, we push the boundaries of fine-grained 3D generation into truly creative territory. Current methods either lack intricate details or simply mimic existing objects -- we enable both. By lifting 2D fine-grained understanding into 3D through multi-view diffusion and modeling part latents as continuous distributions, we unlock the ability to generate entirely new, yet plausible parts through interpolation and sampling. A self-supervised feature consistency loss further ensures stable generation of these unseen parts. The result is the first system capable of creating novel 3D objects with species-specific details that transcend existing examples. While we demonstrate our approach on birds, the underlying framework extends beyond things that can chirp! Code will be released at https://github.com/kamwoh/chirpy3d.

Protégé: Learn and Generate Basic Makeup Styles with Generative Adversarial Networks (GANs)

Dec 29, 2024

Abstract: Makeup is no longer confined to physical application; people now use mobile apps to digitally apply makeup to their photos, which they then share on social media. However, while this shift has made makeup more accessible, designing diverse makeup styles tailored to individual faces remains a challenge, and this design work must currently still be done manually by humans. Existing systems, such as makeup recommendation engines and makeup transfer techniques, are limited in creating innovative makeup for different individuals "intuitively": they demand significant user effort and knowledge, and the makeup options available in apps are limited. Our motivation is to address this challenge by proposing Protégé, a new makeup application that leverages a recent class of generative models, GANs, to learn and automatically generate makeup styles. This is a task that existing makeup applications (i.e., makeup recommendation systems using expert systems and makeup transfer methods) are unable to perform. Extensive experiments have been conducted to demonstrate the capability of Protégé in learning and creating diverse makeup styles, providing a convenient and intuitive way to design makeup and marking a significant leap in digital makeup technology!

Gorgeous: Create Your Desired Character Facial Makeup from Any Ideas

Apr 22, 2024

Abstract: Contemporary makeup transfer methods primarily focus on replicating makeup from one face to another, considerably limiting their use in creating diverse and creative character makeup essential for visual storytelling. Such methods typically fail to address the need for uniqueness and contextual relevance, specifically aligning with character and story settings as they depend heavily on existing facial makeup in reference images. This approach also presents a significant challenge when attempting to source a perfectly matched facial makeup style, further complicating the creation of makeup designs inspired by various story elements, such as theme, background, and props that do not necessarily feature faces. To address these limitations, we introduce Gorgeous, a novel diffusion-based makeup application method that goes beyond simple transfer by innovatively crafting unique and thematic facial makeup. Unlike traditional methods, Gorgeous does not require the presence of a face in the reference images. Instead, it draws artistic inspiration from a minimal set of three to five images, which can be of any type, and transforms these elements into practical makeup applications directly on the face. Our comprehensive experiments demonstrate that Gorgeous can effectively generate distinctive character facial makeup inspired by the chosen thematic reference images. This approach opens up new possibilities for integrating broader story elements into character makeup, thereby enhancing the narrative depth and visual impact in storytelling.