Jason K. Johnson

Learning Planar Ising Models

Feb 03, 2015

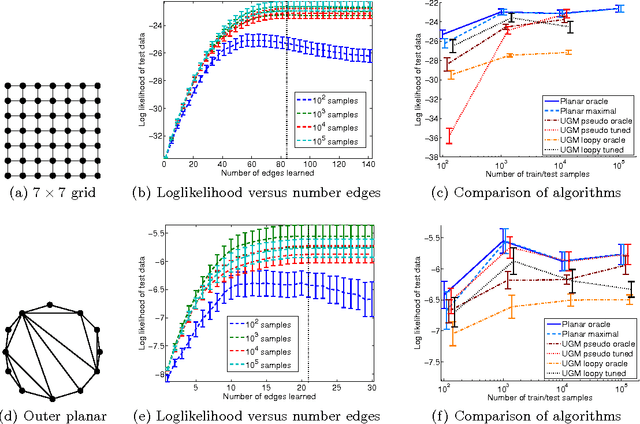

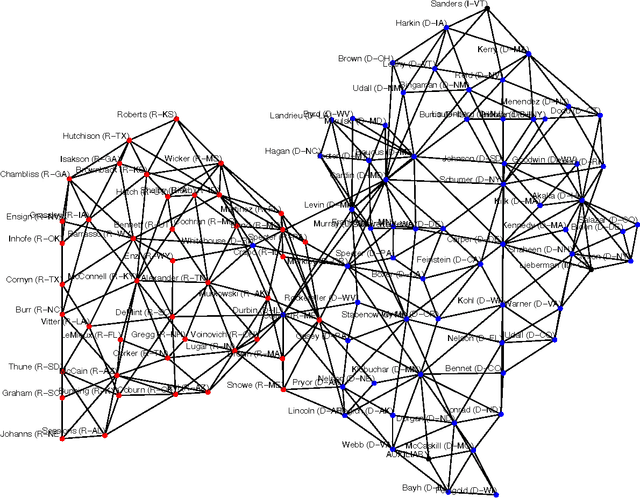

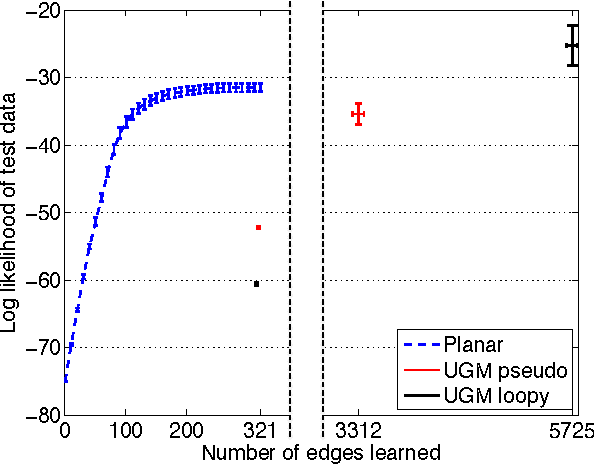

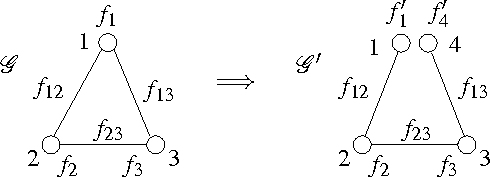

Abstract: Inference and learning of graphical models are both well-studied problems in statistics and machine learning that have found many applications in science and engineering. However, exact inference is intractable in general graphical models, which motivates the problem of seeking the best approximation to a collection of random variables within some tractable family of graphical models. In this paper, we focus on the class of planar Ising models, for which exact inference is tractable using techniques of statistical physics. Based on these techniques and recent methods for planarity testing and planar embedding, we propose a simple greedy algorithm for learning the best planar Ising model to approximate an arbitrary collection of binary random variables (possibly from sample data). Given the set of all pairwise correlations among variables, we select a planar graph and an optimal planar Ising model defined on this graph to best approximate that set of correlations. We demonstrate our method in simulations and for the application of modeling Senate voting records.

Fixing Convergence of Gaussian Belief Propagation

Jul 04, 2009

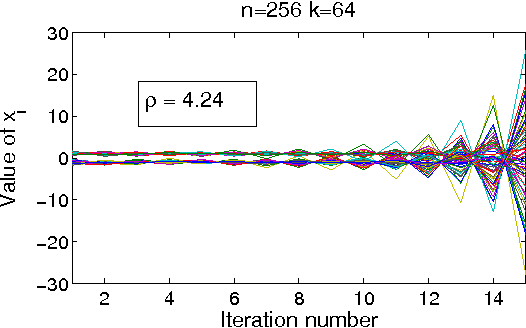

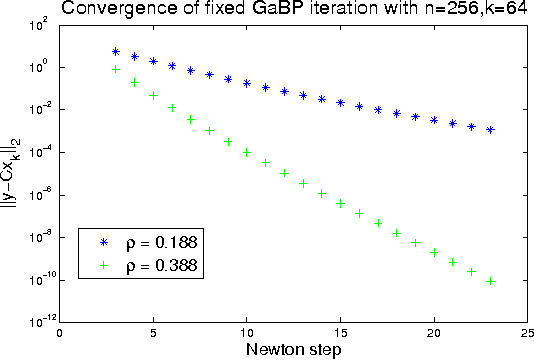

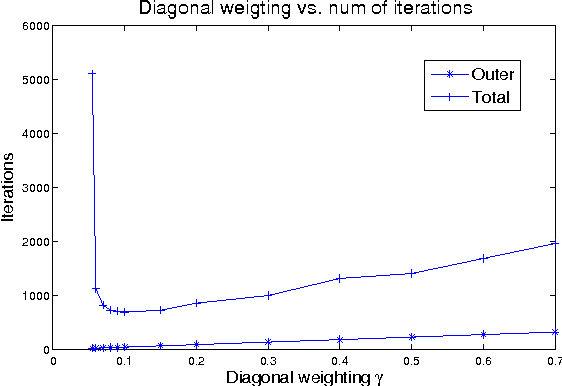

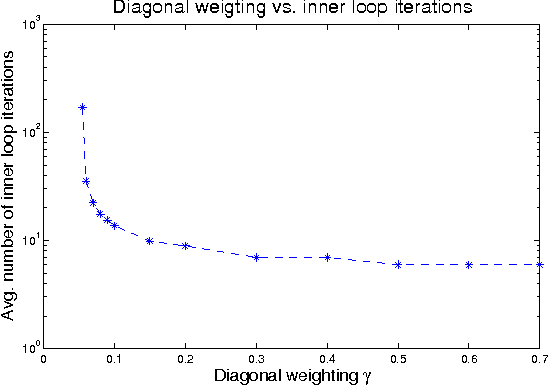

Abstract: Gaussian belief propagation (GaBP) is an iterative message-passing algorithm for inference in Gaussian graphical models. It is known that when GaBP converges, it converges to the correct MAP estimate of the Gaussian random vector, and simple sufficient conditions for its convergence have been established. In this paper we develop a double-loop algorithm for forcing convergence of GaBP. Our method computes the correct MAP estimate even in cases where standard GaBP would not have converged. We further extend this construction to compute least-squares solutions of over-constrained linear systems. We believe that our construction has numerous applications, since the GaBP algorithm is linked to the solution of linear systems of equations, which is a fundamental problem in computer science and engineering. As a case study, we discuss the linear detection problem. We show that using our new construction, we are able to force convergence of Montanari's linear detection algorithm in cases where it would originally fail. As a consequence, we are able to significantly increase the number of users that can transmit concurrently.
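To illustrate the link between GaBP and linear systems mentioned in the abstract, here is a minimal sketch of standard (single-loop) GaBP for solving A x = b with a symmetric matrix A. It is not the double-loop construction of the paper. The function name `gabp_solve`, the dense list-of-lists representation, and the stopping rule are assumptions made for illustration, and convergence is only expected under sufficient conditions such as strict diagonal dominance.

```python
def gabp_solve(A, b, max_iter=200, tol=1e-10):
    """Hypothetical helper: standard GaBP for A x = b, A symmetric.

    Messages are kept in information form: J[i][j] is the precision and
    h[i][j] the potential sent from node i to node j.
    """
    n = len(b)
    J = [[0.0] * n for _ in range(n)]
    h = [[0.0] * n for _ in range(n)]
    # Neighbors of i are the off-diagonal nonzeros in row i.
    nbrs = [[j for j in range(n) if j != i and A[i][j] != 0.0]
            for i in range(n)]

    for _ in range(max_iter):
        new_J = [[0.0] * n for _ in range(n)]
        new_h = [[0.0] * n for _ in range(n)]
        delta = 0.0
        for i in range(n):
            # Aggregate all incoming messages at node i.
            J_tot = A[i][i] + sum(J[k][i] for k in nbrs[i])
            h_tot = b[i] + sum(h[k][i] for k in nbrs[i])
            for j in nbrs[i]:
                # Exclude the message that came from j itself.
                J_cav = J_tot - J[j][i]
                h_cav = h_tot - h[j][i]
                new_J[i][j] = -A[i][j] * A[j][i] / J_cav
                new_h[i][j] = -A[j][i] * h_cav / J_cav
                delta = max(delta,
                            abs(new_J[i][j] - J[i][j]),
                            abs(new_h[i][j] - h[i][j]))
        J, h = new_J, new_h
        if delta < tol:
            break

    # Marginal means give the solution estimate (the MAP estimate).
    x = []
    for i in range(n):
        J_i = A[i][i] + sum(J[k][i] for k in nbrs[i])
        h_i = b[i] + sum(h[k][i] for k in nbrs[i])
        x.append(h_i / J_i)
    return x


# Usage on a small diagonally dominant system with a loop.
A = [[4.0, 1.0, 1.0],
     [1.0, 4.0, 1.0],
     [1.0, 1.0, 4.0]]
b = [6.0, 5.0, 7.0]
x = gabp_solve(A, b)
```

In this sketch the iteration simply stops after `max_iter` sweeps if the messages have not settled. That non-convergent case is the one the double-loop method in the abstract is designed to handle.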

Lagrangian Relaxation for MAP Estimation in Graphical Models

Sep 28, 2007

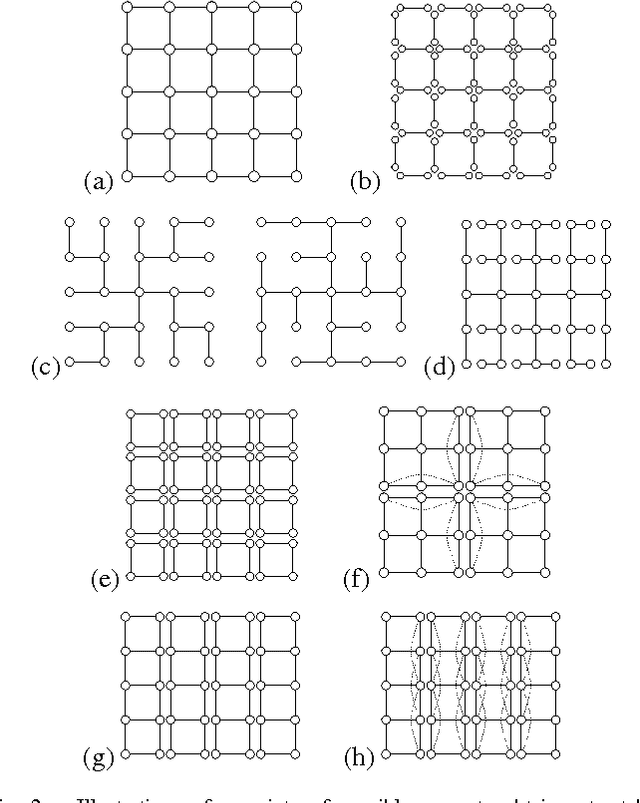

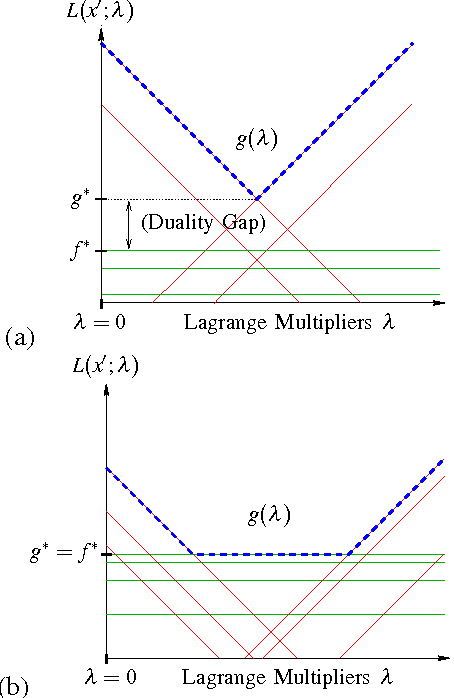

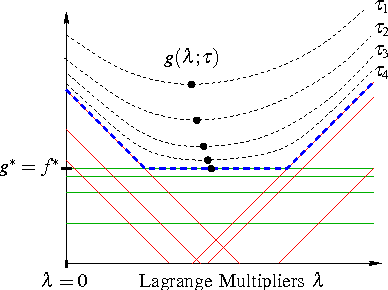

Abstract: We develop a general framework for MAP estimation in discrete and Gaussian graphical models using Lagrangian relaxation techniques. The key idea is to reformulate an intractable estimation problem as one defined on a more tractable graph, but subject to additional constraints. Relaxing these constraints gives a tractable dual problem, one defined by a thin graph, which is then optimized by an iterative procedure. When this iterative optimization leads to a consistent estimate, one which also satisfies the constraints, then it corresponds to an optimal MAP estimate of the original model. Otherwise there is a "duality gap", and we obtain a bound on the optimal solution. Thus, our approach combines convex optimization with dynamic programming techniques applicable for thin graphs. The popular tree-reweighted max-product (TRMP) method may be seen as solving a particular class of such relaxations, where the intractable graph is relaxed to a set of spanning trees. We also consider relaxations to a set of small induced subgraphs, thin subgraphs (e.g. loops), and a connected tree obtained by "unwinding" cycles. In addition, we propose a new class of multiscale relaxations that introduce "summary" variables. The potential benefits of such generalizations include: reducing or eliminating the "duality gap" in hard problems, reducing the number of Lagrange multipliers in the dual problem, and accelerating convergence of the iterative optimization procedure.
