James McCann

Social Gesture Recognition in spHRI: Leveraging Fabric-Based Tactile Sensing on Humanoid Robots

Mar 05, 2025Abstract:Humans are able to convey different messages using only touch. Equipping robots with the ability to understand social touch adds another modality in which humans and robots can communicate. In this paper, we present a social gesture recognition system using a fabric-based, large-scale tactile sensor integrated onto the arms of a humanoid robot. We built a social gesture dataset using multiple participants and extracted temporal features for classification. By collecting real-world data on a humanoid robot, our system provides valuable insights into human-robot social touch, further advancing the development of spHRI systems for more natural and effective communication.

CoFRIDA: Self-Supervised Fine-Tuning for Human-Robot Co-Painting

Feb 21, 2024

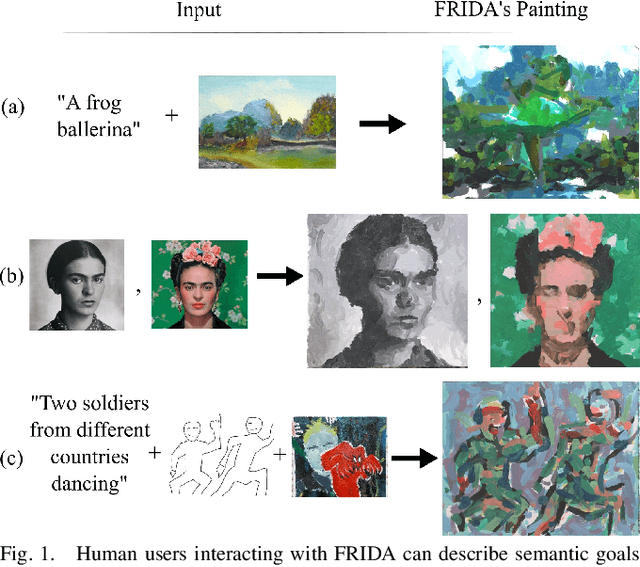

Abstract:Prior robot painting and drawing work, such as FRIDA, has focused on decreasing the sim-to-real gap and expanding input modalities for users, but the interaction with these systems generally exists only in the input stages. To support interactive, human-robot collaborative painting, we introduce the Collaborative FRIDA (CoFRIDA) robot painting framework, which can co-paint by modifying and engaging with content already painted by a human collaborator. To improve text-image alignment, FRIDA's major weakness, our system uses pre-trained text-to-image models; however, pre-trained models in the context of real-world co-painting do not perform well because they (1) do not understand the constraints and abilities of the robot and (2) cannot perform co-painting without making unrealistic edits to the canvas and overwriting content. We propose a self-supervised fine-tuning procedure that can tackle both issues, allowing the use of pre-trained state-of-the-art text-image alignment models with robots to enable co-painting in the physical world. Our open-source approach, CoFRIDA, creates paintings and drawings that match the input text prompt more clearly than FRIDA, both from a blank canvas and one with human created work. More generally, our fine-tuning procedure successfully encodes the robot's constraints and abilities into a foundation model, showcasing promising results as an effective method for reducing sim-to-real gaps.

Customizing Textile and Tactile Skins for Interactive Industrial Robots

Aug 06, 2023

Abstract:Tactile skins made from textiles enhance robot-human interaction by localizing contact points and measuring contact forces. This paper presents a solution for rapidly fabricating, calibrating, and deploying these skins on industrial robot arms. The novel automated skin calibration procedure maps skin locations to robot geometry and calibrates contact force. Through experiments on a FANUC LR Mate 200id/7L industrial robot, we demonstrate that tactile skins made from textiles can be effectively used for human-robot interaction in industrial environments, and can provide unique opportunities in robot control and learning, making them a promising technology for enhancing robot perception and interaction.

RobotSweater: Scalable, Generalizable, and Customizable Machine-Knitted Tactile Skins for Robots

Mar 06, 2023

Abstract:Tactile sensing is essential for robots to perceive and react to the environment. However, it remains a challenge to make large-scale and flexible tactile skins on robots. Industrial machine knitting provides solutions to manufacture customizable fabrics. Along with functional yarns, it can produce highly customizable circuits that can be made into tactile skins for robots. In this work, we present RobotSweater, a machine-knitted pressure-sensitive tactile skin that can be easily applied on robots. We design and fabricate a parameterized multi-layer tactile skin using off-the-shelf yarns, and characterize our sensor on both a flat testbed and a curved surface to show its robust contact detection, multi-contact localization, and pressure sensing capabilities. The sensor is fabricated using a well-established textile manufacturing process with a programmable industrial knitting machine, which makes it highly customizable and low-cost. The textile nature of the sensor also makes it easily fit curved surfaces of different robots and have a friendly appearance. Using our tactile skins, we conduct closed-loop control with tactile feedback for two applications: (1) human lead-through control of a robot arm, and (2) human-robot interaction with a mobile robot.

FRIDA: A Collaborative Robot Painter with a Differentiable, Real2Sim2Real Planning Environment

Oct 03, 2022

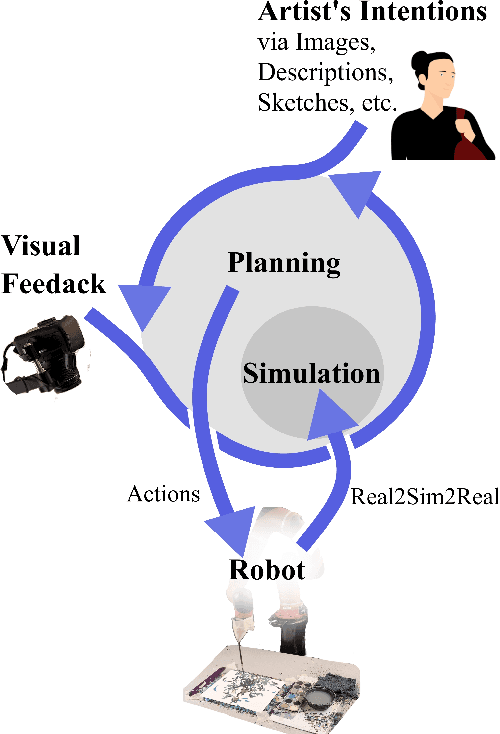

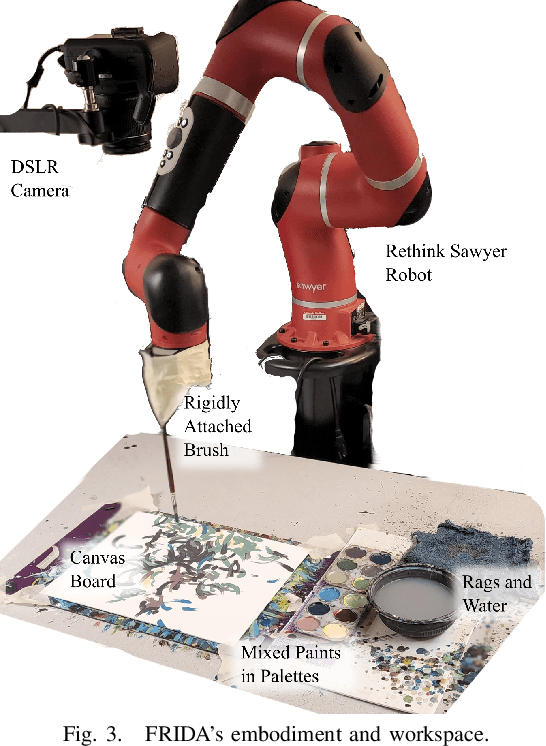

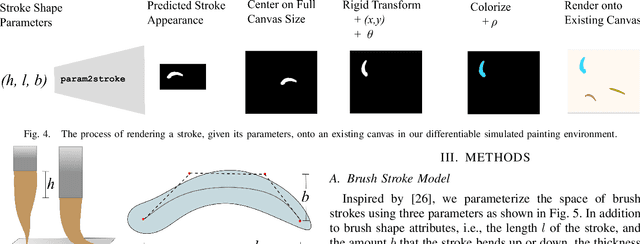

Abstract:Painting is an artistic process of rendering visual content that achieves the high-level communication goals of an artist that may change dynamically throughout the creative process. In this paper, we present a Framework and Robotics Initiative for Developing Arts (FRIDA) that enables humans to produce paintings on canvases by collaborating with a painter robot using simple inputs such as language descriptions or images. FRIDA introduces several technical innovations for computationally modeling a creative painting process. First, we develop a fully differentiable simulation environment for painting, adopting the idea of real to simulation to real (real2sim2real). We show that our proposed simulated painting environment is higher fidelity to reality than existing simulation environments used for robot painting. Second, to model the evolving dynamics of a creative process, we develop a planning approach that can continuously optimize the painting plan based on the evolving canvas with respect to the high-level goals. In contrast to existing approaches where the content generation process and action planning are performed independently and sequentially, FRIDA adapts to the stochastic nature of using paint and a brush by continually re-planning and re-assessing its semantic goals based on its visual perception of the painting progress. We describe the details on the technical approach as well as the system integration.

Structural Design Using Laplacian Shells

Jun 25, 2019

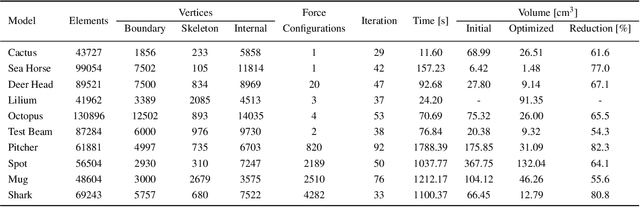

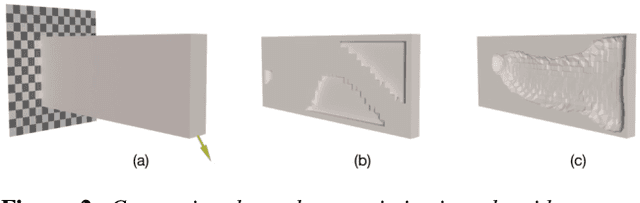

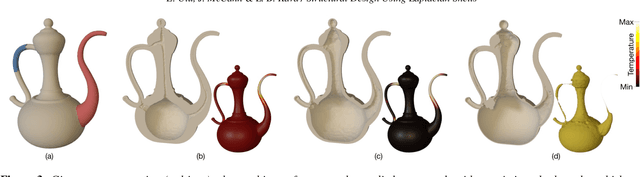

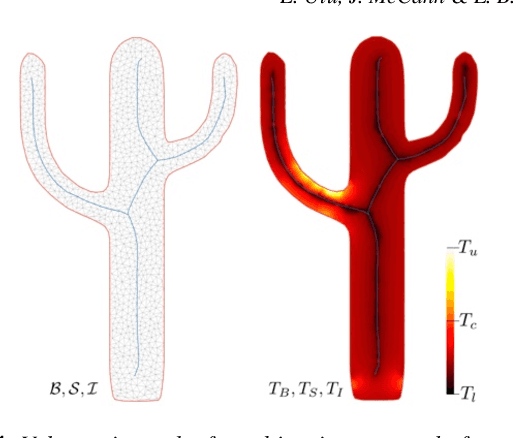

Abstract:We introduce a method to design lightweight shell objects that are structurally robust under the external forces they may experience during use. Given an input 3D model and a general description of the external forces, our algorithm generates a structurally-sound minimum weight shell object. Our approach works by altering the local shell thickness repeatedly based on the stresses that develop inside the object. A key issue in shell design is that large thickness values might result in self-intersections on the inner boundary creating a significant computational challenge during optimization. To address this, we propose a shape parametrization based on the solution to the Laplace's equation that guarantees smooth and intersection-free shell boundaries. Combined with our gradient-free optimization algorithm, our method provides a practical solution to the structural design of hollow objects with a single inner cavity. We demonstrate our method on a variety of problems with arbitrary 3D models under complex force configurations and validate its performance with physical experiments.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge