Ivan Fung

Configurable Multilingual ASR with Speech Summary Representations

Oct 06, 2024

Abstract: Approximately half of the world's population is multilingual, making multilingual ASR (MASR) essential. Deploying multiple monolingual models is challenging when the ground-truth language is unknown in advance. This motivates research on configurable MASR models that can be prompted manually or adapted automatically to recognise specific languages. In this paper, we present the Configurable MASR model with Summary Vector (csvMASR), a novel architecture designed to enhance configurability. Our approach leverages adapters and introduces speech summary vector representations, inspired by conversational summary representations in speech diarization, to combine outputs from language-specific components at the utterance level. We also incorporate an auxiliary language classification loss to further improve configurability. Using data from 7 languages in the Multilingual Librispeech (MLS) dataset, csvMASR outperforms existing MASR models and reduces the word error rate (WER) from 10.33% to 9.95% when compared with the baseline. Additionally, csvMASR demonstrates superior performance in language classification and prompting tasks.
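The abstract describes combining the outputs of language-specific components at the utterance level. One plausible reading, sketched below, is a softmax-weighted sum in which a single set of per-language weights is applied to every frame of the utterance. This is an illustrative assumption, not the csvMASR architecture itself: the function names, the dictionary layout, and the idea that the weights come from per-language logits are all hypothetical.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def combine_adapter_outputs(adapter_outputs, language_logits):
    """Mix per-language adapter outputs with one weight per language.

    adapter_outputs: dict mapping language -> list of frames (lists of floats).
    language_logits: dict mapping language -> utterance-level score
                     (hypothetical; assumed to be derived from a summary vector).
    Returns (weights per language, combined frames).
    """
    langs = sorted(adapter_outputs)
    weights = softmax([language_logits[lang] for lang in langs])
    n_frames = len(adapter_outputs[langs[0]])
    dim = len(adapter_outputs[langs[0]][0])
    combined = [[0.0] * dim for _ in range(n_frames)]
    # The same utterance-level weight is applied to every frame.
    for weight, lang in zip(weights, langs):
        for t, frame in enumerate(adapter_outputs[lang]):
            for d, value in enumerate(frame):
                combined[t][d] += weight * value
    return dict(zip(langs, weights)), combined


# Toy example: two languages, two frames, two feature dimensions.
outputs = {"en": [[1.0, 0.0], [1.0, 0.0]], "de": [[0.0, 1.0], [0.0, 1.0]]}
weights, mixed = combine_adapter_outputs(outputs, {"en": 2.0, "de": 0.0})
print(weights, mixed)
```

Under this reading, manual prompting would amount to fixing the weights by hand (for example, all mass on one language) instead of predicting them.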

Robust End-to-End Diarization with Domain Adaptive Training and Multi-Task Learning

Dec 12, 2023

Abstract: Due to the scarcity of publicly available diarization data, model performance can be improved by training a single model with data from different domains. In this work, we propose to incorporate domain information to train a single end-to-end diarization model for multiple domains. First, we employ domain adaptive training with parameter-efficient adapters for on-the-fly model reconfiguration. Second, we introduce an auxiliary domain classification task to make the diarization model more domain-aware. For seen domains, the combination of our proposed methods reduces the absolute DER from 17.66% to 16.59% when compared with the baseline. During inference, adapters from ground-truth domains are not available for unseen domains. We demonstrate that our model generalizes better to unseen domains when adapters are removed. For two unseen domains, this improves the DER from 39.91% to 23.09% and from 25.32% to 18.76% over the baseline, respectively.
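An auxiliary classification task of this kind is commonly trained by adding a weighted cross-entropy term to the main loss. The sketch below shows that generic multi-task pattern only. The abstract does not state the loss formulation, so the additive form, the `aux_weight` value, and the function names are assumptions made for illustration.

```python
import math


def cross_entropy(logits, target_index):
    """Cross-entropy of a single example, computed from raw logits."""
    peak = max(logits)
    log_sum_exp = peak + math.log(sum(math.exp(x - peak) for x in logits))
    return log_sum_exp - logits[target_index]


def multitask_loss(diarization_loss, domain_logits, domain_index, aux_weight=0.1):
    """Main diarization loss plus a weighted domain-classification loss.

    aux_weight is a hypothetical hyperparameter; the abstract gives no value.
    """
    return diarization_loss + aux_weight * cross_entropy(domain_logits, domain_index)


# Toy example: three candidate domains, the true domain is index 1.
print(multitask_loss(0.8, [0.2, 1.5, -0.3], 1))
```

Setting `aux_weight` to zero recovers the plain diarization objective, which makes the contribution of the auxiliary task easy to ablate.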

Transformer Attractors for Robust and Efficient End-to-End Neural Diarization

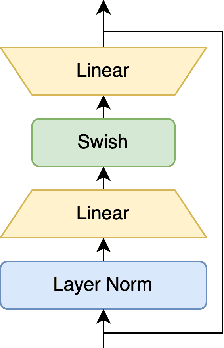

Dec 11, 2023

Abstract: End-to-end neural diarization with encoder-decoder based attractors (EEND-EDA) is a method to perform diarization in a single neural network. EDA handles the diarization of a flexible number of speakers by using an LSTM-based encoder-decoder that generates a set of speaker-wise attractors in an autoregressive manner. In this paper, we propose to replace EDA with a transformer-based attractor calculation (TA) module. TA is composed of a Combiner block and a Transformer decoder. The main function of the Combiner block is to generate conversational dependent (CD) embeddings by incorporating learned conversational information into a global set of embeddings. These CD embeddings then serve as the input for the Transformer decoder. Results on public datasets show that EEND-TA achieves 2.68% absolute DER improvement over EEND-EDA. EEND-TA inference is 1.28 times faster than that of EEND-EDA.
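For readers unfamiliar with attractors, the sketch below shows how attractor-based diarization typically turns them into speaker activity: each frame embedding is compared with each attractor by a dot product, and a sigmoid gives a per-frame, per-speaker probability. This illustrates the general EEND-EDA-style output step, assuming EEND-TA keeps the same step; it does not model the Combiner block or the Transformer decoder, and the function names and threshold are hypothetical.

```python
import math


def sigmoid(x):
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


def speaker_activity(frame_embeddings, attractors, threshold=0.5):
    """Per-frame, per-speaker activity from embedding/attractor dot products.

    frame_embeddings: list of T frames, each a list of D floats.
    attractors: list of S attractors (one per speaker), each a list of D floats.
    Returns (posteriors, decisions), both shaped T x S.
    """
    posteriors = [
        [sigmoid(sum(e * a for e, a in zip(frame, attractor))) for attractor in attractors]
        for frame in frame_embeddings
    ]
    decisions = [[p > threshold for p in row] for row in posteriors]
    return posteriors, decisions


# Toy example: three frames, two speakers; the last frame is overlapped speech.
frames = [[2.0, -2.0], [-2.0, 2.0], [2.0, 2.0]]
attractors = [[3.0, 0.0], [0.0, 3.0]]
posteriors, decisions = speaker_activity(frames, attractors)
print(decisions)
```

Because each speaker gets an independent sigmoid rather than a shared softmax, several speakers can be active in the same frame, which is how this family of models represents overlap.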

LostNet: A smart way for lost and find

Jan 05, 2023

Abstract: Due to the enormous population growth of cities in recent years, objects are frequently lost and left unclaimed on public transportation, in restaurants, or in other public areas. While services like Find My iPhone can easily locate lost electronic devices, more valuable objects cannot be tracked in an intelligent manner, making it impossible for administrators to return a large number of lost and found items in a timely manner. We present a method that significantly reduces the complexity of searching by comparing previous images of lost items provided by the owner with photos taken when registered lost and found items are received. In this research, we design a photo matching network by combining a fine-tuned MobileNetv2 with CBAM attention, and use a web framework to develop an online lost and found image identification system. Our implementation achieves a testing accuracy of 96.8% using only 665.12M GFLOPs and 3.5M training parameters. It can recognize real-world images and can be run on a regular laptop.
