Igor Ganichev

Auto-Vectorizing TensorFlow Graphs: Jacobians, Auto-Batching And Beyond

Mar 08, 2019

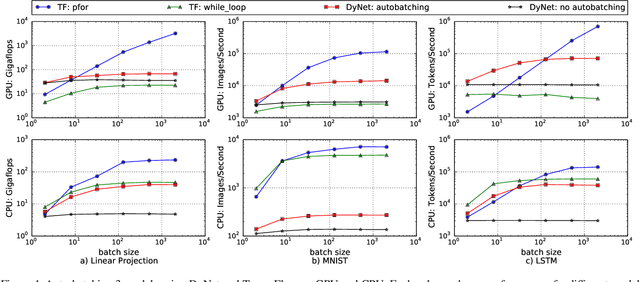

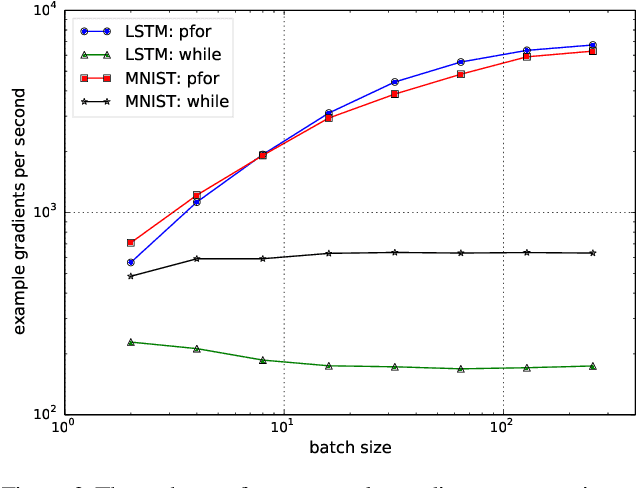

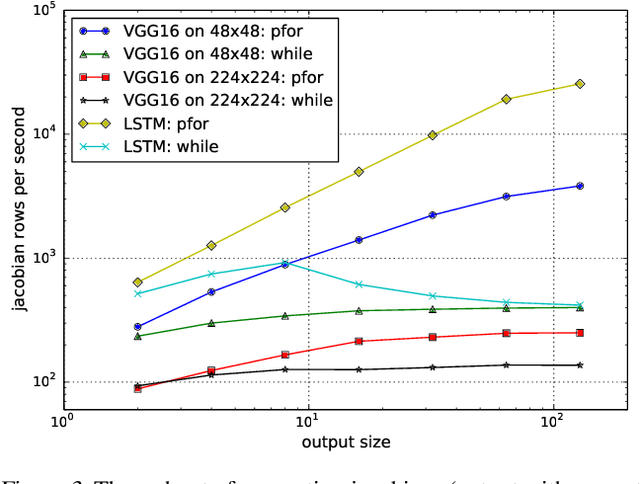

Abstract:We propose a static loop vectorization optimization on top of high level dataflow IR used by frameworks like TensorFlow. A new statically vectorized parallel-for abstraction is provided on top of TensorFlow, and used for applications ranging from auto-batching and per-example gradients, to jacobian computation, optimized map functions and input pipeline optimization. We report huge speedups compared to both loop based implementations, as well as run-time batching adopted by the DyNet framework.

TensorFlow Eager: A Multi-Stage, Python-Embedded DSL for Machine Learning

Feb 27, 2019

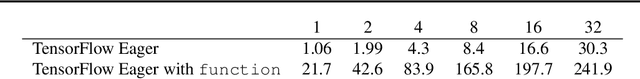

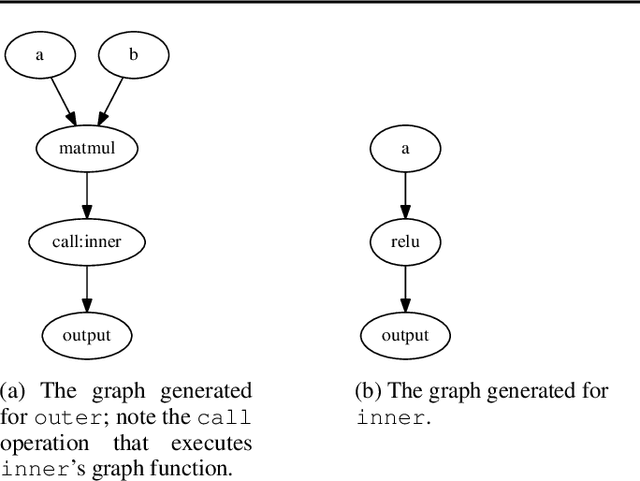

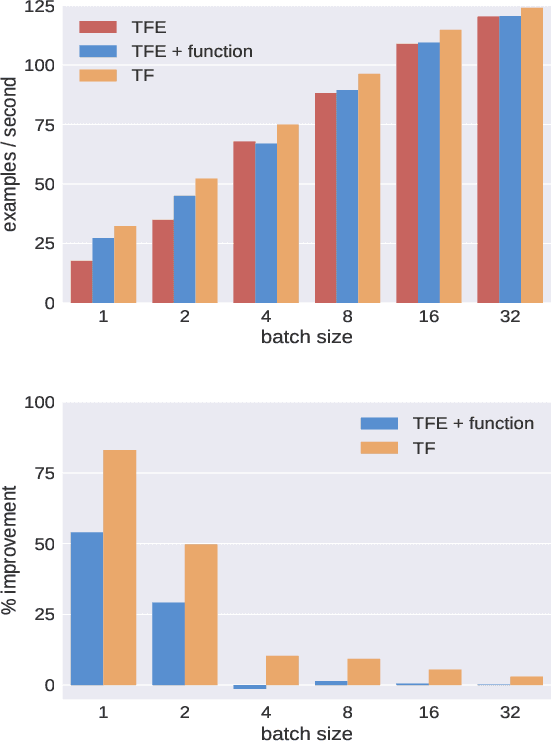

Abstract:TensorFlow Eager is a multi-stage, Python-embedded domain-specific language for hardware-accelerated machine learning, suitable for both interactive research and production. TensorFlow, which TensorFlow Eager extends, requires users to represent computations as dataflow graphs; this permits compiler optimizations and simplifies deployment but hinders rapid prototyping and run-time dynamism. TensorFlow Eager eliminates these usability costs without sacrificing the benefits furnished by graphs: It provides an imperative front-end to TensorFlow that executes operations immediately and a JIT tracer that translates Python functions composed of TensorFlow operations into executable dataflow graphs. TensorFlow Eager thus offers a multi-stage programming model that makes it easy to interpolate between imperative and staged execution in a single package.

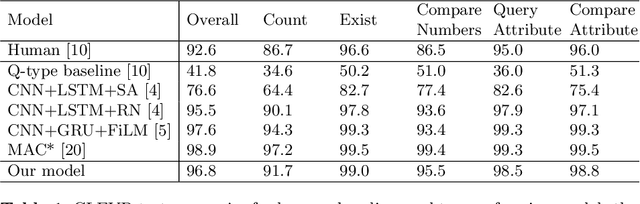

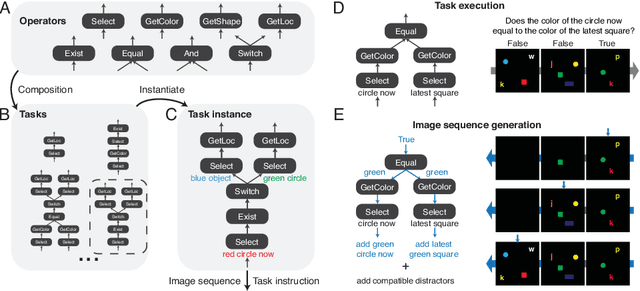

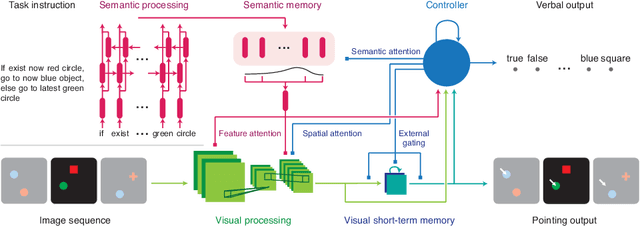

A Dataset and Architecture for Visual Reasoning with a Working Memory

Jul 20, 2018

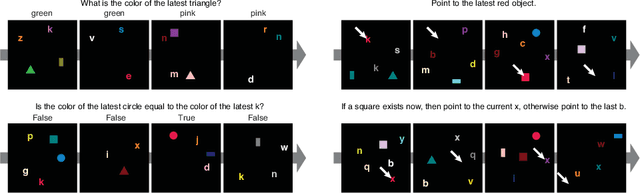

Abstract:A vexing problem in artificial intelligence is reasoning about events that occur in complex, changing visual stimuli such as in video analysis or game play. Inspired by a rich tradition of visual reasoning and memory in cognitive psychology and neuroscience, we developed an artificial, configurable visual question and answer dataset (COG) to parallel experiments in humans and animals. COG is much simpler than the general problem of video analysis, yet it addresses many of the problems relating to visual and logical reasoning and memory -- problems that remain challenging for modern deep learning architectures. We additionally propose a deep learning architecture that performs competitively on other diagnostic VQA datasets (i.e. CLEVR) as well as easy settings of the COG dataset. However, several settings of COG result in datasets that are progressively more challenging to learn. After training, the network can zero-shot generalize to many new tasks. Preliminary analyses of the network architectures trained on COG demonstrate that the network accomplishes the task in a manner interpretable to humans.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge