Iakovos S. Venieris

Flexible Intelligent Metasurface for Downlink Communications under Statistical CSI

Dec 28, 2025

Abstract:Flexible intelligent metasurface (FIM) is a recently developed, groundbreaking hardware technology with promising potential for 6G wireless systems. Unlike conventional rigid antenna array (RAA)-based transmitters, FIM-assisted transmitters can dynamically alter their physical surface through morphing, offering new degrees of freedom to enhance system performance. In this letter, we depart from prior works that rely on instantaneous channel state information (CSI) and instead address the problem of average sum spectral efficiency maximization under statistical CSI in a FIM-assisted downlink multiuser multiple-input single-output setting. To this end, we first derive the spatial correlation matrix for the FIM-aided transmitter and then propose an iterative FIM optimization algorithm based on the gradient projection method. Simulation results show that with statistical CSI, the FIM-aided system provides a significant performance gain over its RAA-based counterpart in scenarios with strong spatial channel correlation, whereas the gain diminishes when the channels are weakly correlated.
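As a rough illustration of the gradient projection idea named in the abstract above, the sketch below runs projected gradient ascent on a toy concave objective with a box constraint. It is not the letter's algorithm: the objective, the box bounds (standing in for a limited morphing range), the step size, and the names `project_box` and `projected_gradient_ascent` are all assumptions made for this example.

```python
import numpy as np

def project_box(x, lo, hi):
    """Euclidean projection onto the box [lo, hi] (element-wise clipping)."""
    return np.clip(x, lo, hi)

def projected_gradient_ascent(grad, x0, lo, hi, step=0.1, iters=200):
    """Repeat: take a gradient ascent step, then project back onto the feasible box."""
    x = project_box(np.asarray(x0, dtype=float), lo, hi)
    for _ in range(iters):
        x = project_box(x + step * grad(x), lo, hi)
    return x

# Toy concave objective f(x) = -||x - target||^2 (hypothetical, not spectral efficiency).
target = np.array([0.5, 2.0, -3.0])
grad = lambda x: -2.0 * (x - target)

x_opt = projected_gradient_ascent(grad, np.zeros(3), lo=-1.0, hi=1.0)
print(x_opt)
```

In this toy case the iterate settles at the point of the box closest to `target`: coordinates whose unconstrained optimum lies outside the box end up on its boundary.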

ML-Enabled Eavesdropper Detection in Beyond 5G IIoT Networks

May 05, 2025

Abstract:Advanced fifth generation (5G) and beyond (B5G) communication networks have revolutionized wireless technologies, supporting ultra-high data rates, low latency, and massive connectivity. However, they also introduce vulnerabilities, particularly in decentralized Industrial Internet of Things (IIoT) environments. Traditional cryptographic methods struggle with scalability and complexity, leading researchers to explore Artificial Intelligence (AI)-driven physical layer techniques for secure communications. In this context, this paper focuses on the use of Machine and Deep Learning (ML/DL) techniques to tackle the common problem of eavesdropping detection. To this end, a simulated industrial B5G heterogeneous wireless network is used to evaluate the performance of various ML/DL models, including Random Forests (RF), Deep Convolutional Neural Networks (DCNN), and Long Short-Term Memory (LSTM) networks. These models classify users as either legitimate or malicious based on channel state information (CSI), position data, and transmission power. According to the presented numerical results, DCNN and RF models achieve a detection accuracy approaching 100% in identifying eavesdroppers with zero false alarms. In general, this work underlines the great potential of combining AI and Physical Layer Security (PLS) for next-generation wireless networks in order to address evolving security threats.

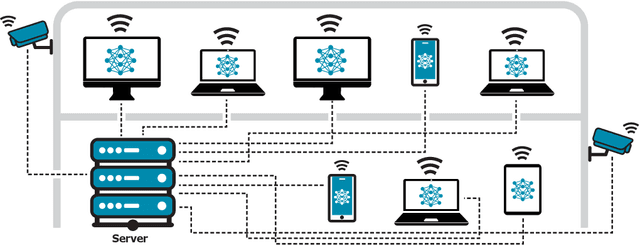

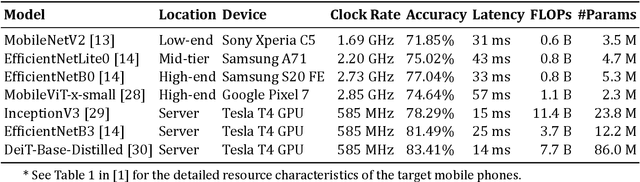

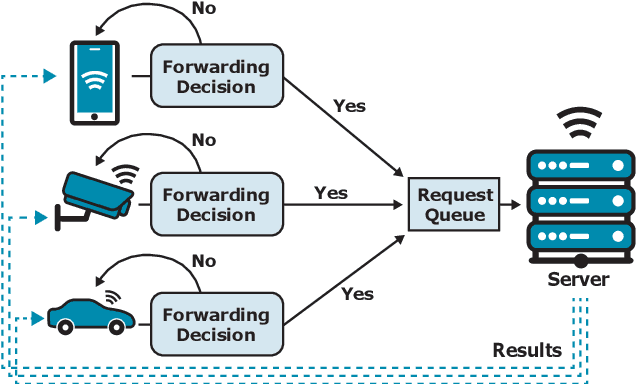

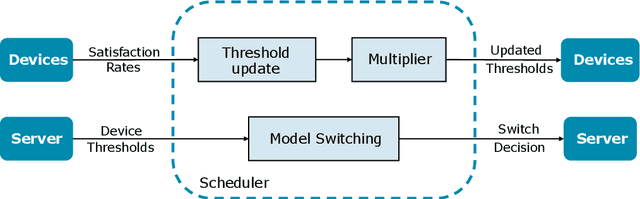

MultiTASC++: A Continuously Adaptive Scheduler for Edge-Based Multi-Device Cascade Inference

Dec 05, 2024

Abstract:Cascade systems, consisting of a lightweight model processing all samples and a heavier, high-accuracy model refining challenging samples, have become a widely-adopted distributed inference approach to achieving high accuracy and maintaining a low computational burden for mobile and IoT devices. As intelligent indoor environments, like smart homes, continue to expand, a new scenario emerges, the multi-device cascade. In this setting, multiple diverse devices simultaneously utilize a shared heavy model hosted on a server, often situated within or close to the consumer environment. This work introduces MultiTASC++, a continuously adaptive multi-tenancy-aware scheduler that dynamically controls the forwarding decision functions of devices to optimize system throughput while maintaining high accuracy and low latency. Through extensive experimentation in diverse device environments and with varying server-side models, we demonstrate the scheduler's efficacy in consistently maintaining a targeted satisfaction rate while providing the highest available accuracy across different device tiers and workloads of up to 100 devices. This demonstrates its scalability and efficiency in addressing the unique challenges of collaborative DNN inference in dynamic and diverse IoT environments.
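To make the cascade idea in the abstract above concrete, here is a minimal sketch of a confidence-thresholded forwarding decision. The abstract does not specify the decision function, so the max-softmax-probability criterion, the fixed `threshold`, and the `cascade_predict` and `heavy_predict` names are assumptions for illustration only. A scheduler in the spirit of MultiTASC++ would adjust such thresholds at runtime, which this sketch does not attempt.

```python
import numpy as np

def softmax(logits):
    """Numerically stable softmax over the last axis."""
    z = logits - logits.max(axis=-1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)

def cascade_predict(light_logits, heavy_predict, threshold):
    """Keep the light model's label when it is confident; otherwise forward the sample."""
    probs = softmax(light_logits)
    confidence = probs.max(axis=-1)
    preds = probs.argmax(axis=-1)
    forward = confidence < threshold          # samples sent to the heavy model
    if forward.any():
        preds[forward] = heavy_predict(np.flatnonzero(forward))
    return preds, forward

# Hypothetical light-model logits for three samples and a stand-in heavy model
# that always answers class 1 for whatever indices it receives.
light_logits = np.array([[4.0, 0.0], [0.2, 0.0], [0.0, 3.0]])
heavy_predict = lambda idx: np.ones(len(idx), dtype=int)

preds, forward = cascade_predict(light_logits, heavy_predict, threshold=0.8)
print(preds, forward)
```

Raising `threshold` forwards more samples (higher accuracy, more server load), while lowering it keeps more work on the device. That trade-off is the knob a multi-device scheduler would tune.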

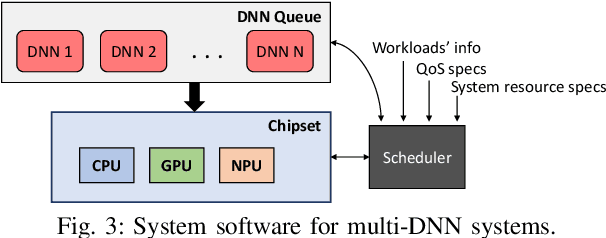

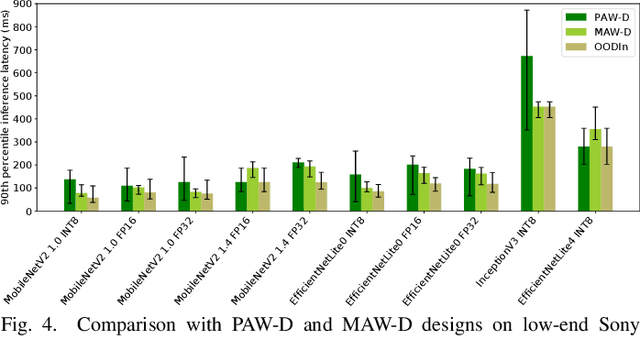

CARIn: Constraint-Aware and Responsive Inference on Heterogeneous Devices for Single- and Multi-DNN Workloads

Sep 02, 2024

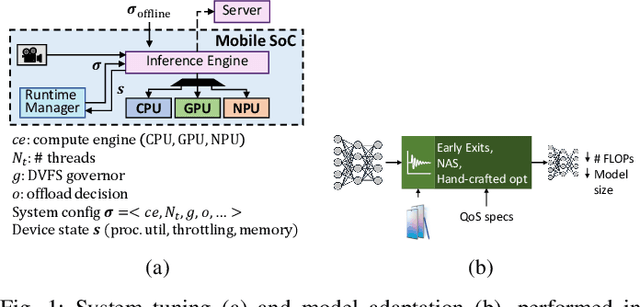

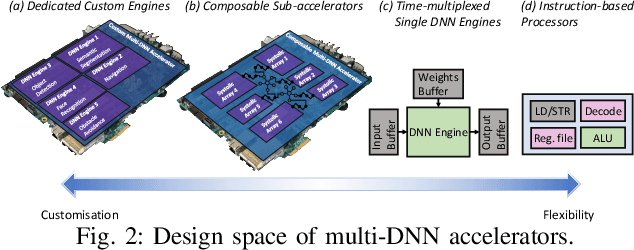

Abstract:The relentless expansion of deep learning applications in recent years has prompted a pivotal shift toward on-device execution, driven by the urgent need for real-time processing, heightened privacy concerns, and reduced latency across diverse domains. This article addresses the challenges inherent in optimising the execution of deep neural networks (DNNs) on mobile devices, with a focus on device heterogeneity, multi-DNN execution, and dynamic runtime adaptation. We introduce CARIn, a novel framework designed for the optimised deployment of both single- and multi-DNN applications under user-defined service-level objectives. Leveraging an expressive multi-objective optimisation framework and a runtime-aware sorting and search algorithm (RASS) as the MOO solver, CARIn facilitates efficient adaptation to dynamic conditions while addressing resource contention issues associated with multi-DNN execution. Notably, RASS generates a set of configurations, anticipating subsequent runtime adaptation, ensuring rapid, low-overhead adjustments in response to environmental fluctuations. Extensive evaluation across diverse tasks, including text classification, scene recognition, and face analysis, showcases the versatility of CARIn across various model architectures, such as Convolutional Neural Networks and Transformers, and realistic use cases. We observe a substantial enhancement in the fair treatment of the problem's objectives, reaching 1.92x when compared to single-model designs and up to 10.69x in contrast to the state-of-the-art OODIn framework. Additionally, we achieve a significant gain of up to 4.06x over hardware-unaware designs in multi-DNN applications. Finally, our framework sustains its performance while effectively eliminating the time overhead associated with identifying the optimal design in response to environmental challenges.
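The multi-objective flavour of the problem described above can be illustrated with a simple Pareto filter over candidate configurations. This is not RASS, whose details the abstract does not give. It is a generic dominance check, and the `Config` fields, candidate names, and numbers are invented for the example.

```python
from typing import NamedTuple

class Config(NamedTuple):
    name: str
    latency_ms: float   # lower is better
    accuracy: float     # higher is better

def dominates(a: Config, b: Config) -> bool:
    """True if a is at least as good as b on both objectives and strictly better on one."""
    no_worse = a.latency_ms <= b.latency_ms and a.accuracy >= b.accuracy
    strictly_better = a.latency_ms < b.latency_ms or a.accuracy > b.accuracy
    return no_worse and strictly_better

def pareto_front(configs):
    """Keep only configurations that no other configuration dominates."""
    return [c for c in configs if not any(dominates(o, c) for o in configs)]

# Hypothetical (model, processor) candidates with made-up measurements.
candidates = [
    Config("small-cpu", 12.0, 0.81),
    Config("small-gpu", 7.0, 0.81),
    Config("large-gpu", 30.0, 0.92),
    Config("large-cpu", 55.0, 0.92),
    Config("medium-gpu", 15.0, 0.88),
]
front = pareto_front(candidates)
print([c.name for c in front])
```

Keeping a set of non-dominated configurations, rather than a single optimum, loosely mirrors the abstract's point that several designs are prepared ahead of time so that switching at runtime is cheap.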

Near-Field Beamforming for Stacked Intelligent Metasurfaces-assisted MIMO Networks

Aug 03, 2024

Abstract:Stacked intelligent metasurfaces (SIMs) have recently gained significant interest since they enable precoding in the wave domain, which comes with increased processing capability and reduced energy consumption. The combination of SIMs and high-frequency propagation makes the study of near-field performance crucially important. Hence, in this work, we focus on SIM-assisted multiuser multiple-input multiple-output (MIMO) systems operating in the near-field region. To this end, we formulate the weighted sum rate maximisation problem in terms of the transmit power and the phase shifts of the SIM. We solve this problem with a block coordinate descent (BCD)-based algorithm, and numerical results show the enhanced performance of the SIM in the near field relative to the far field.
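For readers unfamiliar with block coordinate descent, the sketch below applies it to a toy two-variable convex function, updating one block at a time while the other is held fixed. The function and its closed-form block updates are assumptions chosen for clarity. The paper's actual blocks are the transmit power and the SIM phase shifts, and its objective is the weighted sum rate.

```python
def objective(x, y):
    """Toy strictly convex function standing in for the real (negated) objective."""
    return (x - 1.0) ** 2 + (y + 2.0) ** 2 + x * y

def block_coordinate_descent(x=0.0, y=0.0, iters=50):
    """Alternate exact minimisation over x (y fixed) and over y (x fixed)."""
    for _ in range(iters):
        x = 1.0 - y / 2.0    # solves d/dx: 2(x - 1) + y = 0
        y = -2.0 - x / 2.0   # solves d/dy: 2(y + 2) + x = 0
    return x, y

x_star, y_star = block_coordinate_descent()
print(x_star, y_star, objective(x_star, y_star))
```

Each update can only decrease the objective, so the iterates approach the joint minimiser, here x = 8/3 and y = -10/3. For non-convex problems such as sum rate maximisation, the same scheme generally guarantees only a stationary point.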

Achievable Rate Optimization for Large Stacked Intelligent Metasurfaces Based on Statistical CSI

May 29, 2024

Abstract:Stacked intelligent metasurface (SIM) is an emerging design that consists of multiple layers of metasurfaces. A SIM enables holographic multiple-input multiple-output (HMIMO) precoding in the wave domain, which reduces energy consumption and hardware cost. In the context of multiuser beamforming, this letter focuses on the downlink achievable rate and its maximization. Contrary to previous works on multiuser SIM, we consider statistical channel state information (CSI) rather than instantaneous CSI to overcome challenges such as large overhead. We also examine the performance of large surfaces. We apply an alternating optimization (AO) algorithm over the phase shifts of the SIM and the allocated transmit power. Simulations illustrate the performance of the considered large SIM-assisted design and compare the different CSI assumptions.

MultiTASC: A Multi-Tenancy-Aware Scheduler for Cascaded DNN Inference at the Consumer Edge

Jun 22, 2023

Abstract:Cascade systems comprise a two-model sequence, with a lightweight model processing all samples and a heavier, higher-accuracy model conditionally refining harder samples to improve accuracy. By placing the light model on the device side and the heavy model on a server, model cascades constitute a widely used distributed inference approach. With the rapid expansion of intelligent indoor environments, such as smart homes, the new setting of Multi-Device Cascade is emerging where multiple and diverse devices are to simultaneously use a shared heavy model on the same server, typically located within or close to the consumer environment. This work presents MultiTASC, a multi-tenancy-aware scheduler that adaptively controls the forwarding decision functions of the devices in order to maximize the system throughput, while sustaining high accuracy and low latency. By explicitly considering device heterogeneity, our scheduler improves the latency service-level objective (SLO) satisfaction rate by 20-25 percentage points (pp) over state-of-the-art cascade methods in highly heterogeneous setups, while serving over 40 devices, showcasing its scalability.

Exploring the Performance and Efficiency of Transformer Models for NLP on Mobile Devices

Jun 20, 2023

Abstract:Deep learning (DL) is characterised by its dynamic nature, with new deep neural network (DNN) architectures and approaches emerging every few years, driving the field's advancement. At the same time, the ever-increasing use of mobile devices (MDs) has resulted in a surge of DNN-based mobile applications. Although traditional architectures, like CNNs and RNNs, have been successfully integrated into MDs, this is not the case for Transformers, a relatively new model family that has achieved new levels of accuracy across AI tasks, but poses significant computational challenges. In this work, we aim to make steps towards bridging this gap by examining the current state of Transformers' on-device execution. To this end, we construct a benchmark of representative models and thoroughly evaluate their performance across MDs with different computational capabilities. Our experimental results show that Transformers are not accelerator-friendly and indicate the need for software and hardware optimisations to achieve efficient deployment.

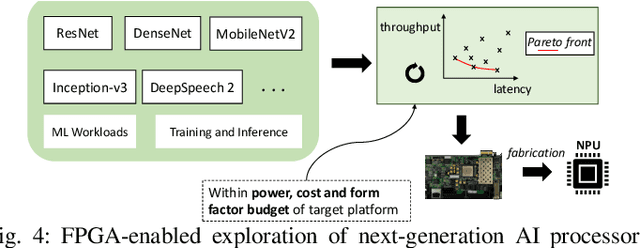

How to Reach Real-Time AI on Consumer Devices? Solutions for Programmable and Custom Architectures

Jun 21, 2021

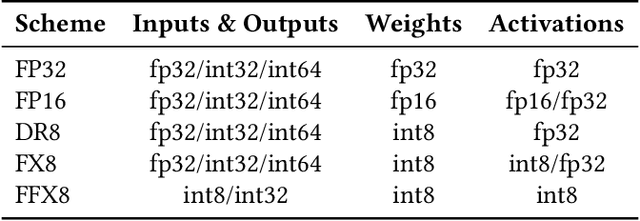

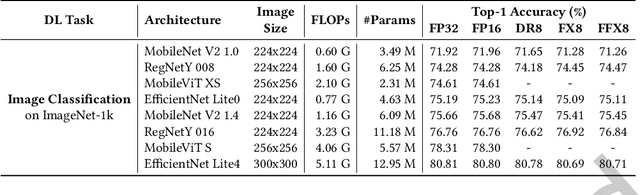

Abstract:The unprecedented performance of deep neural networks (DNNs) has led to large strides in various Artificial Intelligence (AI) inference tasks, such as object and speech recognition. Nevertheless, deploying such AI models across commodity devices faces significant challenges: large computational cost, multiple performance objectives, hardware heterogeneity and a common need for high accuracy, together pose critical problems to the deployment of DNNs across the various embedded and mobile devices in the wild. As such, we have yet to witness the mainstream usage of state-of-the-art deep learning algorithms across consumer devices. In this paper, we provide preliminary answers to this potentially game-changing question by presenting an array of design techniques for efficient AI systems. We start by examining the major roadblocks when targeting both programmable processors and custom accelerators. Then, we present diverse methods for achieving real-time performance following a cross-stack approach. These span model-, system- and hardware-level techniques, and their combination. Our findings provide illustrative examples of AI systems that do not overburden mobile hardware, while also indicating how they can improve inference accuracy. Moreover, we showcase how custom ASIC- and FPGA-based accelerators can be an enabling factor for next-generation AI applications, such as multi-DNN systems. Collectively, these results highlight the critical need for further exploration as to how the various cross-stack solutions can be best combined in order to bring the latest advances in deep learning close to users, in a robust and efficient manner.

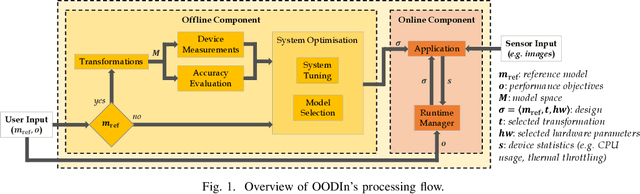

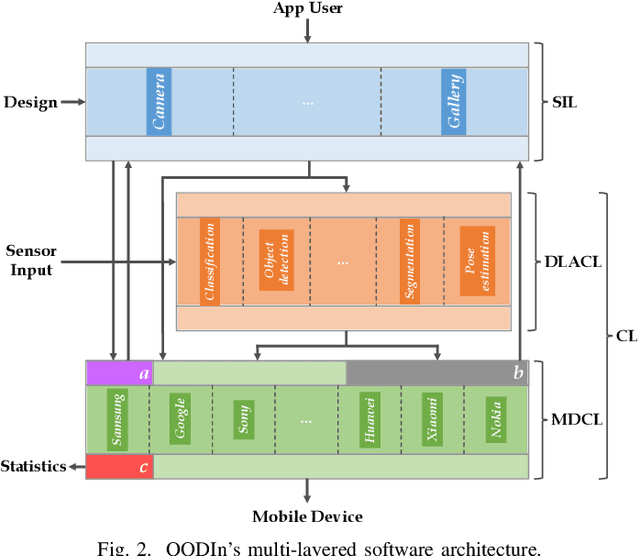

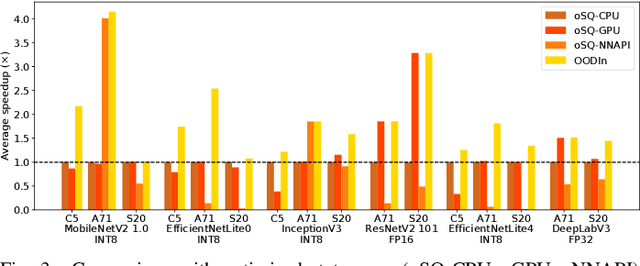

OODIn: An Optimised On-Device Inference Framework for Heterogeneous Mobile Devices

Jun 08, 2021

Abstract:Radical progress in the field of deep learning (DL) has led to unprecedented accuracy in diverse inference tasks. As such, deploying DL models across mobile platforms is vital to enable the development and broad availability of the next-generation intelligent apps. Nevertheless, the wide and optimised deployment of DL models is currently hindered by the vast system heterogeneity of mobile devices, the varying computational cost of different DL models and the variability of performance needs across DL applications. This paper proposes OODIn, a framework for the optimised deployment of DL apps across heterogeneous mobile devices. OODIn comprises a novel DL-specific software architecture together with an analytical framework for modelling DL applications that: (1) counteract the variability in device resources and DL models by means of a highly parametrised multi-layer design; and (2) perform a principled optimisation of both model- and system-level parameters through a multi-objective formulation, designed for DL inference apps, in order to adapt the deployment to the user-specified performance requirements and device capabilities. Quantitative evaluation shows that the proposed framework consistently outperforms status-quo designs across heterogeneous devices and delivers up to 4.3x and 3.5x performance gain over highly optimised platform- and model-aware designs respectively, while effectively adapting execution to dynamic changes in resource availability.
