Hyoung Suk Park

An Iterative Reconstruction Method for Dental Cone-Beam Computed Tomography with a Truncated Field of View

Aug 11, 2025

Abstract:In dental cone-beam computed tomography (CBCT), compact and cost-effective system designs often use small detectors, resulting in a truncated field of view (FOV) that does not fully encompass the patient's head. In iterative reconstruction approaches, the discrepancy between the actual projection and the forward projection within the truncated FOV accumulates over iterations, leading to significant degradation in the reconstructed image quality. In this study, we propose a two-stage approach to mitigate truncation artifacts in dental CBCT. In the first stage, we employ an implicit neural representation (INR), leveraging its strong representation power, to generate a prior image over an extended region so that its forward projection fully covers the patient's head. To reduce the computational and memory burden, the INR reconstruction is performed with a coarse voxel size. The forward projection of this prior image is then used to estimate the discrepancy in the measured projection data caused by the truncated FOV. In the second stage, the discrepancy-corrected projection data are used in a conventional iterative reconstruction within the truncated region. Our numerical results demonstrate that the proposed two-grid approach effectively suppresses truncation artifacts and improves CBCT image quality.
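
The two-stage idea can be illustrated on a toy linear model. The sketch below is not the authors' implementation: it assumes a random dense system matrix split into columns for voxels inside and outside the truncated FOV, uses plain Landweber iteration as a stand-in for "conventional iterative reconstruction", and replaces the coarse INR prior with a noisy copy of the true outside values. It only shows why subtracting the estimated outside contribution from the measured data helps.

```python
import numpy as np


def landweber(A, p, n_iter=3000):
    """Landweber iteration x <- x + step * A^T (p - A x), started from zero."""
    step = 1.0 / np.linalg.norm(A, 2) ** 2  # any 0 < step < 2/sigma_max^2 converges
    x = np.zeros(A.shape[1])
    for _ in range(n_iter):
        x += step * (A.T @ (p - A @ x))
    return x


rng = np.random.default_rng(0)
n_rays, n_in, n_out = 80, 20, 30

# Hypothetical toy system matrix split into voxels inside / outside the truncated FOV.
A_in = rng.random((n_rays, n_in))
A_out = rng.random((n_rays, n_out))
x_in_true = rng.random(n_in)
x_out_true = rng.random(n_out)

# Measured rays also pass through tissue outside the truncated FOV.
p_meas = A_in @ x_in_true + A_out @ x_out_true

# Naive reconstruction: the outside contribution is simply ignored.
x_naive = landweber(A_in, p_meas)

# Stage 1 stand-in: a coarse, slightly wrong estimate of the outside region
# (assumed here to play the role of the extended-domain prior image).
x_out_prior = x_out_true + 0.05 * rng.standard_normal(n_out)

# Stage 2: remove the estimated outside contribution, then reconstruct inside the FOV.
p_corr = p_meas - A_out @ x_out_prior
x_two_stage = landweber(A_in, p_corr)

err_naive = np.linalg.norm(x_naive - x_in_true)
err_two_stage = np.linalg.norm(x_two_stage - x_in_true)
print(f"naive error: {err_naive:.3f}, two-stage error: {err_two_stage:.3f}")
```

In this toy setting the naive reconstruction absorbs the unmodelled outside contribution into the inside voxels, while the corrected data leave only the small error of the prior.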

Adaptive Multi-resolution Hash-Encoding Framework for INR-based Dental CBCT Reconstruction with Truncated FOV

Jun 14, 2025

Abstract:Implicit neural representation (INR), particularly in combination with hash encoding, has recently emerged as a promising approach for computed tomography (CT) image reconstruction. However, directly applying INR techniques to 3D dental cone-beam CT (CBCT) with a truncated field of view (FOV) is challenging. During training, if the FOV does not fully encompass the patient's head, a discrepancy arises between the measured projections and the forward projections computed within the truncated domain. This mismatch causes the network to estimate attenuation values inaccurately, producing severe artifacts in the reconstructed images. In this study, we propose a computationally efficient INR-based reconstruction framework that leverages multi-resolution hash encoding for 3D dental CBCT with a truncated FOV. To mitigate truncation artifacts, we train the network over an expanded reconstruction domain that fully encompasses the patient's head. For computational efficiency, we adopt an adaptive training strategy on a multi-resolution grid: finer resolution levels and denser sampling inside the truncated FOV, and coarser resolution levels with sparser sampling outside it. To keep the input dimensionality of the network consistent across spatially varying resolutions, we introduce an adaptive hash encoder that activates only the lower-level features of the hash hierarchy for points outside the truncated FOV. Training over the extended FOV effectively mitigates truncation artifacts. Compared with a naive domain extension using fixed resolution levels and a fixed sampling rate, the adaptive strategy reduces computation time by more than 60% for an 800 × 800 × 600 image volume while preserving the PSNR within the truncated FOV.
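
A minimal sketch of the adaptive encoder idea, under stated assumptions: it uses an Instant-NGP-style spatial hash, looks up only the nearest lower grid vertex (no trilinear interpolation), and zero-fills the deactivated fine levels for points outside the FOV so every point yields a feature vector of the same length. The zero-filling, `base_res`, `growth`, and `n_coarse` are hypothetical choices; the abstract does not specify how the inactive levels are represented.

```python
import numpy as np

# Spatial-hash primes used by Instant-NGP-style hash encoders.
PRIMES = np.array([1, 2654435761, 805459861], dtype=np.uint64)


def hash_index(ijk, table_size):
    """XOR-of-products spatial hash of integer vertices (N, 3) -> table indices (N,)."""
    ijk = ijk.astype(np.uint64)
    h = (ijk[:, 0] * PRIMES[0]) ^ (ijk[:, 1] * PRIMES[1]) ^ (ijk[:, 2] * PRIMES[2])
    return (h % np.uint64(table_size)).astype(np.int64)


def adaptive_encode(x, inside_fov, tables, base_res=16, growth=1.5, n_coarse=4):
    """Concatenate per-level hash features; fine levels are looked up only inside the FOV.

    x: (N, 3) points in [0, 1]^3; inside_fov: (N,) bool mask;
    tables: list of (T, F) feature tables, one per resolution level.
    Points outside the FOV get zeros for levels >= n_coarse, so every point
    yields a vector of the same length (levels * F).
    """
    n = x.shape[0]
    feats = []
    for level, table in enumerate(tables):
        res = int(np.floor(base_res * growth ** level))
        active = np.ones(n, dtype=bool) if level < n_coarse else inside_fov
        f = np.zeros((n, table.shape[1]), dtype=table.dtype)
        # Nearest lower grid vertex only (no trilinear interpolation, for brevity).
        ijk = np.floor(x[active] * res).astype(np.int64)
        f[active] = table[hash_index(ijk, table.shape[0])]
        feats.append(f)
    return np.concatenate(feats, axis=1)


rng = np.random.default_rng(0)
n_levels, table_size, n_feat = 8, 2 ** 14, 2
tables = [rng.normal(scale=1e-2, size=(table_size, n_feat)) for _ in range(n_levels)]
points = rng.random((5, 3))
inside = np.array([True, True, False, True, False])
enc = adaptive_encode(points, inside, tables)
print(enc.shape)  # (5, 16)
```

Skipping the fine-level lookups for outside points, rather than computing and then discarding them, is where the saving in this sketch comes from.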

Neural Representation-Based Method for Metal-induced Artifact Reduction in Dental CBCT Imaging

Jul 27, 2023

Abstract:This study introduces a novel reconstruction method for dental cone-beam computed tomography (CBCT) that focuses on reducing the metal-induced artifacts commonly encountered in the presence of metallic implants. Despite significant progress in metal artifact reduction techniques, challenges persist owing to the intricate physical interactions between polychromatic X-ray beams and metal objects, which are further compounded by metal-tooth interactions and factors specific to the dental CBCT data environment. To overcome these limitations, we propose an implicit neural network that generates two distinct and informative tomographic images. One image represents the monochromatic attenuation distribution at a specific energy level, whereas the other captures the nonlinear beam-hardening factor arising from the polychromatic nature of X-ray beams. In contrast to existing CT reconstruction techniques, the proposed method relies exclusively on the Beer–Lambert law, thereby avoiding the metal-induced artifacts generated by the backprojection process commonly used in conventional methods. Extensive experimental evaluations demonstrate that the proposed method effectively reduces metal artifacts while providing high-quality image reconstructions, and they highlight the importance of the second image in capturing the nonlinear beam-hardening factor.
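
Why a single monochromatic image cannot explain polychromatic data can be seen from the Beer–Lambert law summed over a spectrum. The sketch assumes a hypothetical two-bin spectrum and a uniform object; it is a generic beam-hardening illustration, not the paper's two-image parameterisation, which the abstract does not spell out.

```python
import numpy as np

# Hypothetical two-bin spectrum and attenuation coefficients (1/cm), illustration only.
weights = np.array([0.6, 0.4])  # normalised spectrum weights S(E), summing to 1
mu = np.array([0.5, 0.2])       # attenuation at the low- and high-energy bins


def poly_projection(length_cm):
    """-ln(I/I0) for a polychromatic beam through a uniform object of the given length."""
    transmitted = np.sum(weights * np.exp(-mu * length_cm))
    return -np.log(transmitted)


lengths = np.array([1.0, 2.0, 4.0, 8.0])
p_poly = np.array([poly_projection(L) for L in lengths])
# Effective attenuation per cm; a monochromatic beam would keep this constant.
mu_eff = p_poly / lengths
print(mu_eff)
```

The effective attenuation drops as the path lengthens because the low-energy part of the beam is removed first; this nonlinearity in path length is what a separate beam-hardening term is meant to absorb.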

Automatic 3D Registration of Dental CBCT and Face Scan Data using 2D Projection images

May 17, 2023

Abstract:This paper presents a fully automatic method for registering dental cone-beam computed tomography (CBCT) and face scan data. It can be used as a digital platform for 3D jaw-teeth-face models in a variety of applications, including 3D digital treatment planning and orthognathic surgery. Accurately merging facial scans and CBCT images is difficult because of the different image acquisition methods and the limited area of correspondence between the two facial surfaces. In addition, machine learning techniques are difficult to apply because they require face-related 3D medical data, which involve radiation exposure and are hard to obtain for training. The proposed method addresses these problems by reusing an existing machine-learning-based 2D landmark detection algorithm from an open-source library and developing a novel mathematical algorithm that identifies paired 3D landmarks from the corresponding 2D landmarks. A main contribution of this study is that the proposed method does not require annotated training data of facial landmarks, because it uses a pre-trained facial landmark detection algorithm that is known to be robust and to generalize to various 2D face image models. This reduces the 3D landmark detection problem to a 2D problem of identifying corresponding landmarks on two 2D projection images generated from two different projection angles. The 3D landmarks for registration were selected from the sub-surfaces with the least geometric change between the CBCT and face scan environments. For the final fine-tuning of the registration, the Iterative Closest Point method was applied, using the geometric information around the 3D landmarks. The experimental results show that the proposed method achieved an average surface distance error of 0.74 mm for three pairs of CBCT and face scan datasets.
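
How two 2D projections pin down a 3D landmark can be sketched with a simplified geometry. The example assumes orthographic renderings from two angles rotated about the vertical axis; the actual rendering geometry and the paper's landmark-matching algorithm may differ, and `project` and `triangulate` are hypothetical helpers.

```python
import numpy as np


def project(point, theta):
    """Orthographic projection onto an image plane rotated by theta about the vertical (z) axis."""
    x, y, z = point
    return np.array([x * np.cos(theta) + y * np.sin(theta), z])


def triangulate(uv1, theta1, uv2, theta2):
    """Recover (x, y, z) from one landmark seen in two orthographic projections."""
    M = np.array([[np.cos(theta1), np.sin(theta1)],
                  [np.cos(theta2), np.sin(theta2)]])
    # Horizontal image coordinates give two linear equations in (x, y);
    # solvable as long as theta1 and theta2 differ (mod pi).
    x, y = np.linalg.solve(M, [uv1[0], uv2[0]])
    z = 0.5 * (uv1[1] + uv2[1])  # both views measure the same height
    return np.array([x, y, z])


landmark = np.array([12.0, -5.0, 30.0])
t1, t2 = np.deg2rad(-30.0), np.deg2rad(30.0)
recovered = triangulate(project(landmark, t1), t1, project(landmark, t2), t2)
print(recovered)
```

In practice the 2D coordinates would come from the pre-trained detector, so the two views give noisy estimates; averaging the vertical coordinate is one simple way to reconcile them.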

A robust multi-domain network for short-scanning amyloid PET reconstruction

May 17, 2023

Abstract:This paper presents a robust multi-domain network designed to restore low-quality amyloid PET images acquired with short scan times. The proposed method is trained on pairs of PET images with short (2 minutes) and standard (20 minutes) scanning times, sourced from multiple domains. Learning the relevant image features across these domains with a single network is challenging. Our key contribution is the introduction of a mapping label, which enables effective learning of domain-specific representations. The network, trained with various mapping labels, can efficiently correct amyloid PET datasets from multiple training domains as well as from unseen domains, such as those obtained with new radiotracers, acquisition protocols, or PET scanners. Internal, temporal, and external validations demonstrate the effectiveness of the proposed method. Notably, for external validation datasets from unseen domains, the proposed method achieved results comparable or superior to those of methods trained on these datasets, in terms of quantitative metrics such as the normalized root-mean-square error and the structural similarity index measure. Two nuclear medicine physicians rated the amyloid status of the external validation datasets as positive or negative, with accuracies of 0.970 and 0.930 for readers 1 and 2, respectively.
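
The abstract does not say how the mapping label enters the network. One common option in label-conditioned image-to-image models is to append the label as constant one-hot channels; the sketch below assumes that scheme, and `condition_on_label` is a hypothetical helper.

```python
import numpy as np


def condition_on_label(image, label, n_labels):
    """Append a one-hot mapping label as constant channels: (C, H, W) -> (C + n_labels, H, W)."""
    c, h, w = image.shape
    onehot = np.zeros((n_labels, h, w), dtype=image.dtype)
    onehot[label] = 1.0  # every pixel of the chosen label channel is 1
    return np.concatenate([image, onehot], axis=0)


short_scan = np.random.default_rng(0).random((1, 64, 64)).astype(np.float32)
net_input = condition_on_label(short_scan, label=2, n_labels=4)
print(net_input.shape)  # (5, 64, 64)
```

Changing only the label channels lets a single network switch between domain mappings at inference time, including reusing a trained label for data from an unseen domain.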

Nonlinear ill-posed problem in low-dose dental cone-beam computed tomography

Mar 03, 2023

Abstract:This paper describes the mathematical structure of the ill-posed nonlinear inverse problem of low-dose dental cone-beam computed tomography (CBCT) and explains the advantages of a deep learning-based approach to the reconstruction of computed tomography images over conventional regularization methods. This paper explains the underlying reasons why dental CBCT is more ill-posed than standard computed tomography. Despite this severe ill-posedness, the demand for dental CBCT systems is rapidly growing because of their cost competitiveness and low radiation dose. We then describe the limitations of existing methods in the accurate restoration of the morphological structures of teeth using dental CBCT data severely damaged by metal implants. We further discuss the usefulness of panoramic images generated from CBCT data for accurate tooth segmentation. We also discuss the possibility of utilizing radiation-free intra-oral scan data as prior information in CBCT image reconstruction to compensate for the damage to data caused by metal implants.

Metal Artifact Reduction with Intra-Oral Scan Data for 3D Low Dose Maxillofacial CBCT Modeling

Feb 08, 2022

Abstract:Low-dose dental cone-beam computed tomography (CBCT) has been increasingly used for maxillofacial modeling. However, metallic inserts such as implants, crowns, and dental fillings cause severe streaking and shading artifacts in CBCT images and loss of the morphological structures of the teeth, which consequently prevents accurate segmentation of bones. A two-stage metal artifact reduction method is proposed for accurate 3D low-dose maxillofacial CBCT modeling, where the key idea is to use explicit tooth shape prior information from intra-oral scan data, whose acquisition does not require any extra radiation exposure. In the first stage, an image-to-image deep learning network is employed to mitigate metal-related artifacts. To improve its learning ability, the network is designed to take the intra-oral scan data as side inputs and to perform multi-task learning with auxiliary tooth segmentation. In the second stage, a 3D maxillofacial model is constructed by segmenting the bones from the dental CBCT image corrected in the first stage. For accurate bone segmentation, weighted thresholding is applied, where the weighting region is determined by the geometry of the intra-oral scan data. Because acquiring a paired training dataset of metal-artifact-free and metal-artifact-affected dental CBCT images is challenging in clinical practice, an automatic method for generating a realistic dataset according to the CBCT physics model is introduced. Numerical simulations and clinical experiments show the feasibility of the proposed method, which exploits tooth surface information from intra-oral scan data in 3D low-dose maxillofacial CBCT modeling.
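
Weighted thresholding can be sketched as a spatially varying threshold. The sketch assumes the weighting region is a boolean mask derived from the intra-oral scan geometry and that the weight simply scales the threshold there; the mask, the base threshold, and the weight value are all hypothetical.

```python
import numpy as np


def weighted_threshold(volume, base_thr, weight_region, weight=1.5):
    """Binary bone mask using a threshold scaled by `weight` inside `weight_region`."""
    thr = np.where(weight_region, base_thr * weight, base_thr)
    return volume > thr


ct = np.array([[300.0, 500.0], [700.0, 900.0]])  # toy 2x2 slice, arbitrary units
near_teeth = np.array([[False, True], [True, False]])  # hypothetical IOS-derived region
mask = weighted_threshold(ct, base_thr=400.0, weight_region=near_teeth)
print(mask)
```

Here the effective threshold is 600 inside the region and 400 elsewhere, so the resulting mask is [[False, False], [True, True]]: the 500 voxel near the teeth is rejected even though it exceeds the global threshold.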

Unpaired image denoising using a generative adversarial network in X-ray CT

Mar 04, 2019

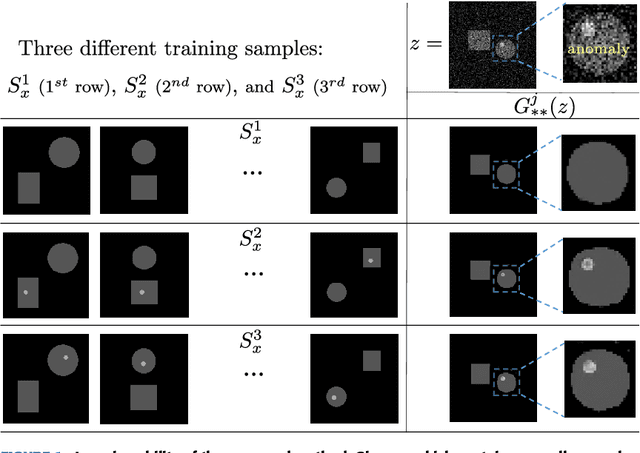

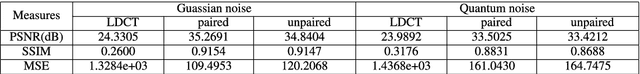

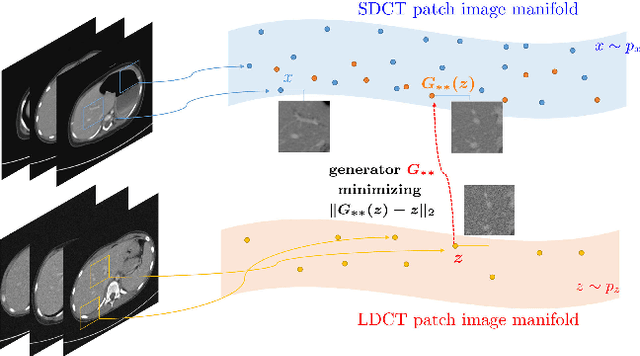

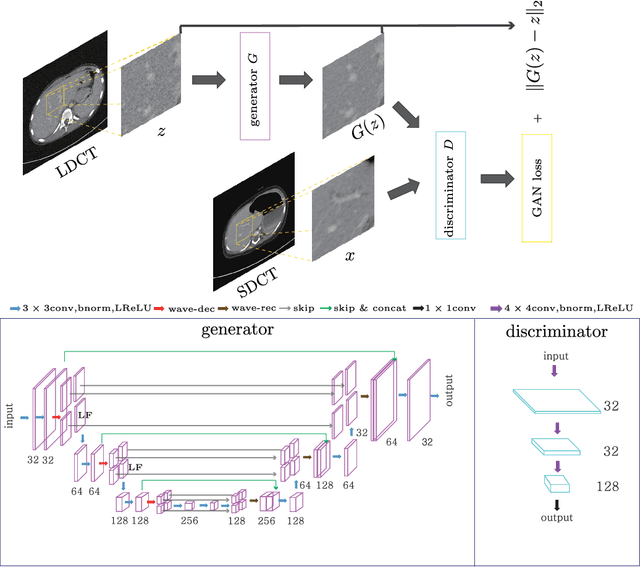

Abstract:This paper proposes a deep learning-based denoising method for noisy low-dose computerized tomography (CT) images in the absence of paired training data. The proposed method uses a fidelity-embedded generative adversarial network (GAN) to learn a denoising function from unpaired training data of low-dose CT (LDCT) and standard-dose CT (SDCT) images, where the denoising function is the optimal generator in the GAN framework. Given an optimal discriminator in the GAN, the generator is optimized by minimizing a weighted sum of two losses: the Kullback-Leibler divergence between the SDCT data distribution and the generated distribution, and the $\ell_2$ loss between each LDCT image and the corresponding generated (denoised) image. The experimental results show that the proposed method trained on unpaired datasets performs comparably to a method trained on paired datasets. A clinical experiment was also performed to demonstrate the validity of the proposed method for the non-Gaussian noise arising in low-dose X-ray CT.
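
The generator objective can be sketched as follows. With the optimal discriminator $D^* = p_{\mathrm{SD}} / (p_{\mathrm{SD}} + p_g)$, the ratio $(1 - D^*)/D^*$ equals $p_g / p_{\mathrm{SD}}$, so averaging $\log((1 - D)/D)$ over generated images estimates $\mathrm{KL}(p_g \,\|\, p_{\mathrm{SD}})$. The KL direction, the weight `lam`, and the array shapes are assumptions; the abstract does not fix them.

```python
import numpy as np


def generator_loss(d_fake, denoised, ldct, lam=0.1, eps=1e-12):
    """Fidelity-embedded generator objective (sketch).

    d_fake: discriminator outputs D(G(x)) in (0, 1) for generated images.
    Under the optimal discriminator, mean(log((1 - D) / D)) estimates KL(p_g || p_SD).
    """
    d = np.clip(d_fake, eps, 1 - eps)  # avoid log(0) and division by zero
    kl_term = np.mean(np.log((1 - d) / d))
    fidelity = np.mean((denoised - ldct) ** 2)  # l2 fidelity to the LDCT input
    return kl_term + lam * fidelity


rng = np.random.default_rng(0)
ldct = rng.random((2, 8, 8))
denoised = ldct - 0.01  # stand-in generator output
d_fake = np.array([0.3, 0.4])  # stand-in discriminator scores
print(generator_loss(d_fake, denoised, ldct))
```

The fidelity term keeps the denoised image close to its own LDCT input, which is what allows training without paired SDCT targets.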
