Huthaifa I Ashqar

Object Detection using Oriented Window Learning Vision Transformer: Roadway Assets Recognition

Jun 15, 2024

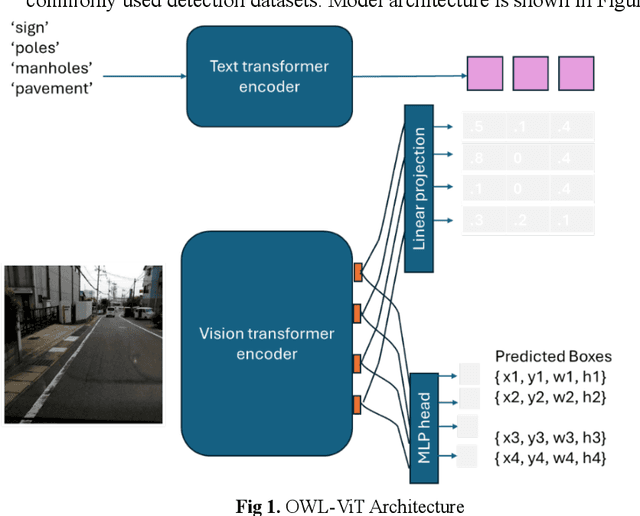

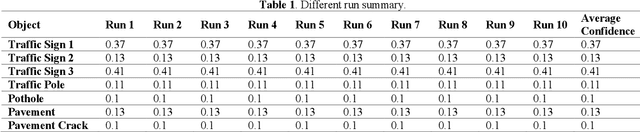

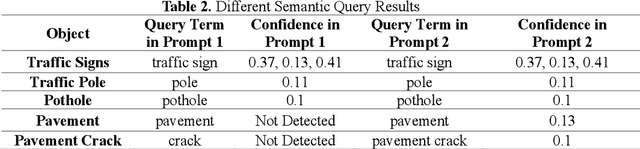

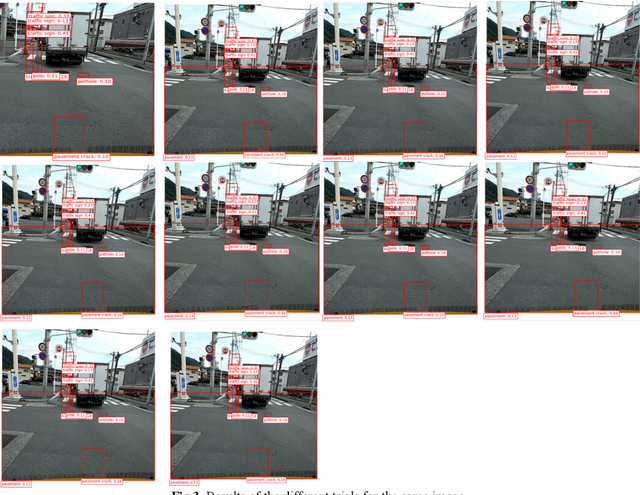

Abstract: Object detection is a critical component of transportation systems, particularly for applications such as autonomous driving, traffic monitoring, and infrastructure maintenance. Traditional object detection methods often struggle with limited data and variability in object appearance. The Oriented Window Learning Vision Transformer (OWL-ViT) offers a novel approach by adapting window orientations to the geometry and existence of objects, making it highly suitable for detecting diverse roadway assets. This study presents a novel method for roadway asset detection that leverages OWL-ViT within a one-shot learning framework to recognize transportation infrastructure components such as traffic signs, poles, pavement, and cracks. We conducted a series of experiments to evaluate the model's performance in terms of detection consistency, semantic flexibility, visual context adaptability, resolution robustness, and the impact of non-maximum suppression. The results demonstrate the high efficiency and reliability of OWL-ViT across various scenarios, underscoring its potential to enhance the safety and efficiency of intelligent transportation systems.
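
The abstract lists non-maximum suppression (NMS) as one of the factors evaluated. As a reminder of what that post-processing step does, here is a minimal sketch of greedy NMS in plain Python. It is not the paper's implementation: the box format `(x_min, y_min, x_max, y_max)`, the function names, and the default threshold of 0.5 are all assumptions chosen for illustration.

```python
from typing import List, Sequence, Tuple

# Assumed box format for this sketch: (x_min, y_min, x_max, y_max)
Box = Tuple[float, float, float, float]


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def non_max_suppression(
    boxes: Sequence[Box],
    scores: Sequence[float],
    iou_threshold: float = 0.5,  # illustrative default, not taken from the paper
) -> List[int]:
    """Return indices of the boxes kept by greedy NMS, highest score first."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep: List[int] = []
    for i in order:
        # Keep a box only if it does not overlap too much with any kept box.
        if all(iou(boxes[i], boxes[j]) <= iou_threshold for j in keep):
            keep.append(i)
    return keep
```

Lowering `iou_threshold` suppresses more overlapping detections, while raising it lets near-duplicate boxes through, which is the kind of trade-off an NMS sensitivity experiment would probe.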

Eyeballing Combinatorial Problems: A Case Study of Using Multimodal Large Language Models to Solve Traveling Salesman Problems

Jun 11, 2024

Abstract: Multimodal Large Language Models (MLLMs) have demonstrated proficiency in processing diverse modalities, including text, images, and audio. These models leverage extensive pre-existing knowledge, enabling them to address complex problems with minimal to no specific training examples, as evidenced in few-shot and zero-shot in-context learning scenarios. This paper investigates the use of MLLMs' visual capabilities to 'eyeball' solutions for the Traveling Salesman Problem (TSP) by analyzing images of point distributions on a two-dimensional plane. Our experiments aimed to validate the hypothesis that MLLMs can effectively 'eyeball' viable TSP routes. The results from zero-shot, few-shot, self-ensemble, and self-refine zero-shot evaluations show promising outcomes. We anticipate that these findings will inspire further exploration into MLLMs' visual reasoning abilities to tackle other combinatorial problems.
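
To make "viable TSP route" concrete, the sketch below shows one way a proposed route could be checked and scored: confirm that it visits every point exactly once, compute its closed-tour length, and compare it against a simple nearest-neighbor heuristic. This is an illustrative assumption, not the evaluation protocol used in the paper, and the function names are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float]


def is_valid_tour(tour: Sequence[int], n_points: int) -> bool:
    """A tour is valid if it visits each of the n points exactly once."""
    return sorted(tour) == list(range(n_points))


def tour_length(points: Sequence[Point], tour: Sequence[int]) -> float:
    """Euclidean length of the closed tour, returning to the starting point."""
    return sum(
        math.dist(points[tour[i]], points[tour[(i + 1) % len(tour)]])
        for i in range(len(tour))
    )


def nearest_neighbor_tour(points: Sequence[Point], start: int = 0) -> List[int]:
    """Greedy baseline: always move to the closest unvisited point."""
    unvisited = [i for i in range(len(points)) if i != start]
    tour = [start]
    while unvisited:
        current = points[tour[-1]]
        nxt = min(unvisited, key=lambda i: math.dist(current, points[i]))
        unvisited.remove(nxt)
        tour.append(nxt)
    return tour
```

A route read off from a model's answer (a list of point indices) can be passed to `is_valid_tour` and `tour_length`, and its length divided by the baseline's length gives a rough quality ratio.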
