Hung The Nguyen

Disturbances in Influence of a Shepherding Agent is More Impactful than Sensorial Noise During Swarm Guidance

Oct 03, 2020

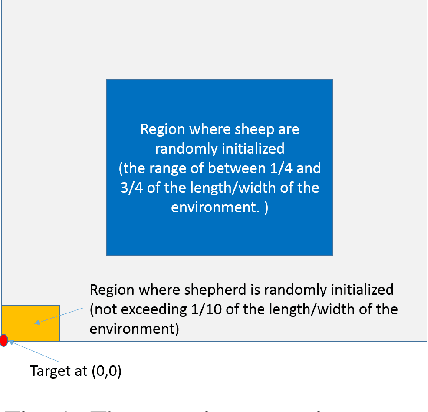

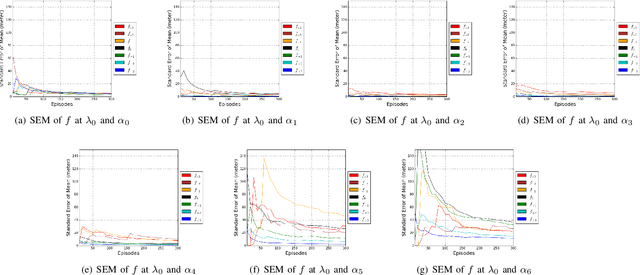

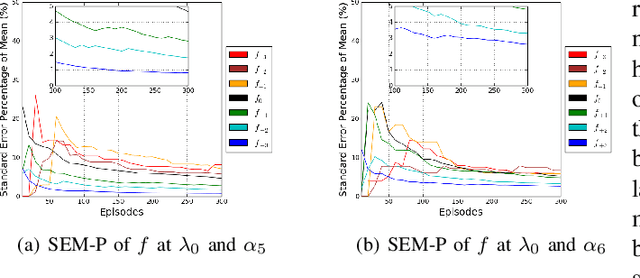

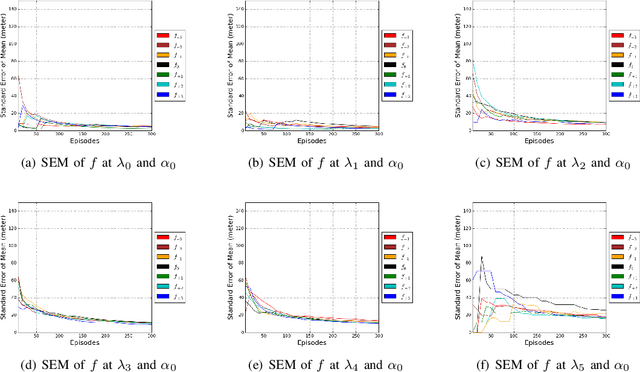

Abstract: Guiding a large swarm is a challenging control problem. Shepherding offers one approach, using a few shepherding agents (sheepdogs) to guide the swarm. Although noise is inherent in many real-world problems, its impact on shepherding is not well studied. We study two forms of noise. First, we evaluate noise in the sensorial information the shepherd receives about the location of the sheep (perception noise). Second, we evaluate noise in the sheepdog's ability to influence the sheep, caused by disturbance forces occurring during actuation (actuation noise). We investigate the performance of Strömbom's approach under both. To ensure that the parameterisation of the algorithm yields stable performance, we run a large number of simulations, increasing the number of random episodes until stability is achieved. We then systematically study the impact of sensorial and actuation noise on performance. Strömbom's approach is found to be more sensitive to actuation noise than to perception noise. This implies that it is more important for the shepherding agent to influence the sheep accurately by reducing actuation noise than to reduce noise in its sensors. Moreover, different levels of noise required different parameterisations of the shepherding agent: the threshold the agent uses to decide whether or not to collect astray sheep differs across noise levels.
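The distinction between the two noise types in the abstract above can be illustrated with a minimal sketch. It assumes additive zero-mean Gaussian noise, which the abstract does not specify; the function names `noisy_observation` and `noisy_influence` and the parameters `sigma_perception` and `sigma_actuation` are hypothetical and not taken from the paper.

```python
import random

def noisy_observation(sheep_positions, sigma_perception, rng):
    """Perception noise (assumed Gaussian): the shepherd sees each
    sheep at a perturbed location, while the true positions are unchanged."""
    return [
        (x + rng.gauss(0.0, sigma_perception),
         y + rng.gauss(0.0, sigma_perception))
        for x, y in sheep_positions
    ]

def noisy_influence(force, sigma_actuation, rng):
    """Actuation noise (assumed Gaussian): a disturbance is added to the
    force the sheepdog actually exerts on a sheep."""
    fx, fy = force
    return (fx + rng.gauss(0.0, sigma_actuation),
            fy + rng.gauss(0.0, sigma_actuation))

rng = random.Random(0)                      # fixed seed for repeatability
sheep = [(1.0, 2.0), (3.0, 4.0)]            # true sheep positions
observed = noisy_observation(sheep, 0.1, rng)   # what the shepherd perceives
applied = noisy_influence((0.5, -0.5), 0.1, rng)  # what the sheep experience
```

In this sketch, perception noise corrupts only the shepherd's input, whereas actuation noise corrupts the effect of its action on the sheep, which is the distinction the abstract draws.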

Continuous Deep Hierarchical Reinforcement Learning for Ground-Air Swarm Shepherding

Apr 27, 2020

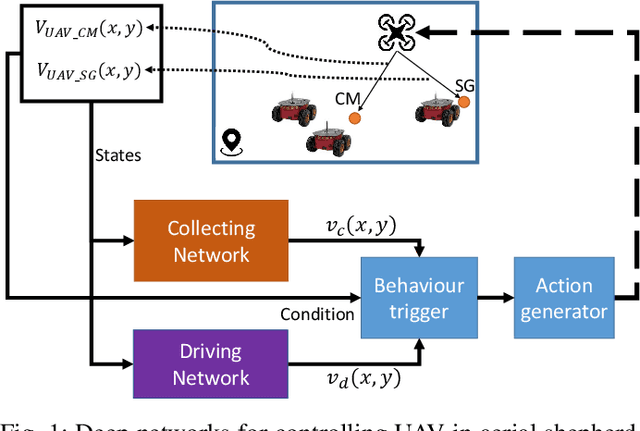

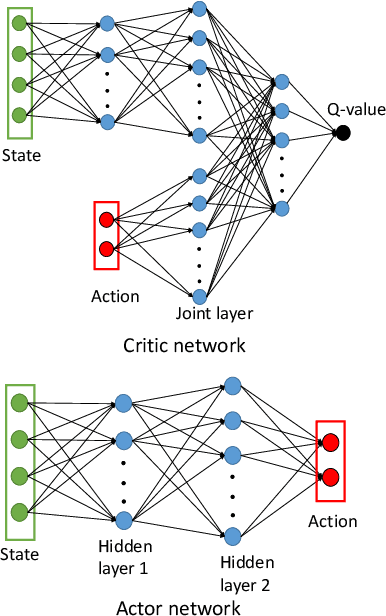

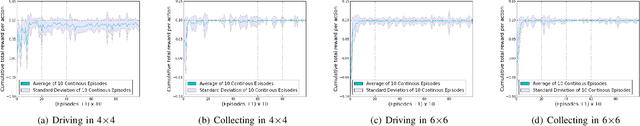

Abstract: The control and guidance of multiple robots (a swarm) is a non-trivial problem due to the complexity inherent in the coupled interactions among the group. Whether the swarm is cooperative or non-cooperative, lessons can be learnt from sheepdogs herding sheep. Biomimicry of shepherding offers computational methods for swarm control with the potential to generalize and scale across different environments. However, learning to shepherd is complex due to the large search space that a machine learner faces. We present a deep hierarchical reinforcement learning approach for shepherding, whereby an unmanned aerial vehicle (UAV) learns to act as an aerial sheepdog to control and guide a swarm of unmanned ground vehicles (UGVs). The approach extends our previous work on machine education to decompose the search space into a hierarchically organized curriculum. Each lesson in the curriculum is learnt by a deep reinforcement learning model. The hierarchy is formed by fusing the outputs of the model. The approach is demonstrated first in a high-fidelity Robot Operating System (ROS)-based simulation environment, then with physical UGVs and a UAV in an indoor testing facility. We investigate the ability of the method to generalize as the models move from simulation to the real world and from one scale to another.
