Houston Claure

Dynamic Fairness Perceptions in Human-Robot Interaction

Sep 11, 2024

Abstract: People care deeply about how fairly they are treated by robots. The established paradigm for probing fairness in Human-Robot Interaction (HRI) involves measuring the perceived fairness of a robot at the conclusion of an interaction. However, such an approach is limited because interactions vary over time, potentially causing fairness perceptions to change as well. To validate this idea, we conducted a 2x2 user study with a mixed design (N=40) in which we investigated two factors: the timing of unfair robot actions (early or late in an interaction) and the beneficiary of those actions (either another robot or the participant). Our results show that fairness judgments are not static; they can shift based on the timing of unfair robot actions. Further, we explored using perceptions of three key factors (reduced welfare, conduct, and moral transgression) proposed by Fairness Theory from organizational justice to predict momentary perceptions of fairness in our study. Interestingly, we found that the reduced welfare and moral transgression factors together were better predictors than all three factors combined. Our findings reinforce the idea that unfair robot behavior can shape perceptions of group dynamics and trust towards a robot, and they pave the way for future research on moment-to-moment fairness perceptions.

Designing for Fairness in Human-Robot Interactions

May 31, 2024

Abstract: Successful human collaboration is deeply rooted in the principles of fairness. As robots become increasingly prevalent in parts of society where they work alongside groups and teams of humans, their ability to understand and act according to principles of fairness becomes crucial for their effective integration. This is especially critical when robots are part of multi-human teams, where they must make continuous decisions regarding the allocation of resources. These resources can be material, such as tools, or communicative, such as gaze direction, and must be distributed fairly among team members to ensure optimal team performance and healthy group dynamics. Therefore, our research focuses on understanding how robots can effectively participate within human groups by making fair decisions while contributing positively to group dynamics and outcomes. In this paper, I discuss advances toward ensuring that robots are capable of considering human notions of fairness in their decision-making.

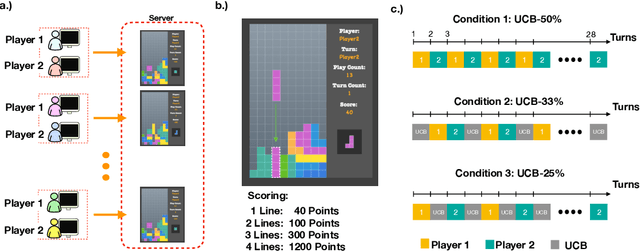

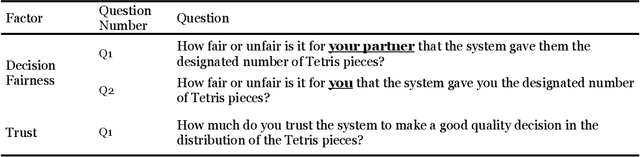

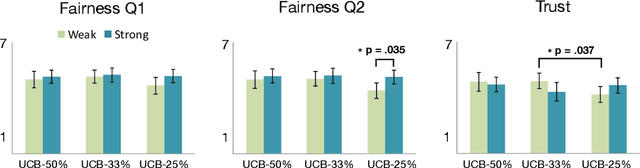

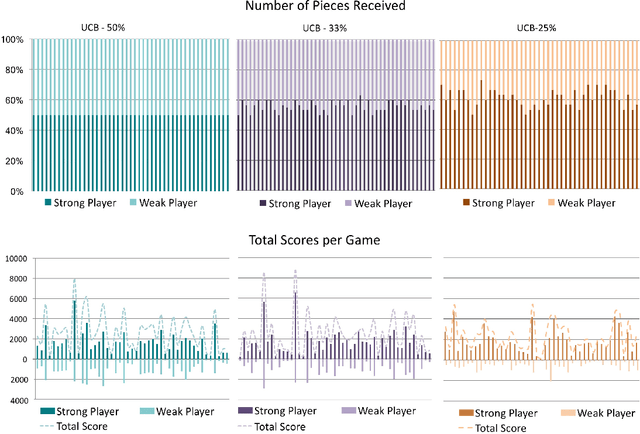

Reinforcement Learning with Fairness Constraints for Resource Distribution in Human-Robot Teams

Jul 08, 2019

Abstract: Much work in robotics and operations research has focused on optimal resource distribution, where an agent dynamically decides how to sequentially distribute resources among different candidates. However, most work ignores the notion of fairness in candidate selection. In the case where a robot distributes resources to human team members, disproportionately favoring the highest-performing teammate can have negative effects on team dynamics and system acceptance. We introduce a multi-armed bandit algorithm with fairness constraints, where a robot distributes resources to human teammates of different skill levels. In this problem, the robot does not know the skill level of each human teammate, but learns it by observing their performance over time. We define fairness as a constraint on the minimum rate at which each human teammate is selected throughout the task. We provide theoretical guarantees on performance and perform a large-scale user study, where we adjust the level of fairness in our algorithm. Results show that fairness in resource distribution has a significant effect on users' trust in the system.
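The fairness constraint in this abstract (a minimum selection rate per teammate) can be illustrated with a small sketch. This is not the paper's algorithm: it is a plain UCB1 bandit with a simple "serve whoever is behind their quota" rule added on top, and the function name `fair_ucb`, the parameter names, and the Bernoulli reward model are all assumptions made for illustration.

```python
import math
import random


def fair_ucb(success_probs, horizon, min_rate, seed=0):
    """Illustrative UCB1 bandit with a minimum selection rate per teammate.

    success_probs: hypothetical per-teammate success probabilities
                   (unknown to the learner; used only to simulate rewards).
    horizon:       number of resources to hand out.
    min_rate:      fairness level; each teammate should receive at least
                   this fraction of the selections.
    Returns the number of times each teammate was selected.
    """
    k = len(success_probs)
    if min_rate < 0 or min_rate * k > 1:
        raise ValueError("min_rate must lie in [0, 1/k]")

    rng = random.Random(seed)
    counts = [0] * k    # selections per teammate
    means = [0.0] * k   # running estimate of each teammate's skill

    def ucb_index(i, t):
        # Teammates never tried get an infinite index so they are explored.
        if counts[i] == 0:
            return math.inf
        return means[i] + math.sqrt(2.0 * math.log(t) / counts[i])

    for t in range(1, horizon + 1):
        # How far each teammate is behind the quota min_rate * t.
        deficits = [min_rate * t - counts[i] for i in range(k)]
        neediest = max(range(k), key=lambda i: deficits[i])
        if deficits[neediest] > 0:
            arm = neediest  # fairness step: serve the teammate furthest behind
        else:
            arm = max(range(k), key=lambda i: ucb_index(i, t))  # usual UCB1 step

        # Simulated Bernoulli outcome of giving the resource to this teammate.
        reward = 1.0 if rng.random() < success_probs[arm] else 0.0
        counts[arm] += 1
        means[arm] += (reward - means[arm]) / counts[arm]

    return counts


if __name__ == "__main__":
    print(fair_ucb([0.9, 0.5, 0.2], horizon=400, min_rate=0.25))
```

Setting `min_rate` to 0 reduces the sketch to ordinary UCB1, while raising it toward `1/k` pushes the allocation toward an even split, which mirrors the idea of adjusting the level of fairness mentioned in the abstract.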

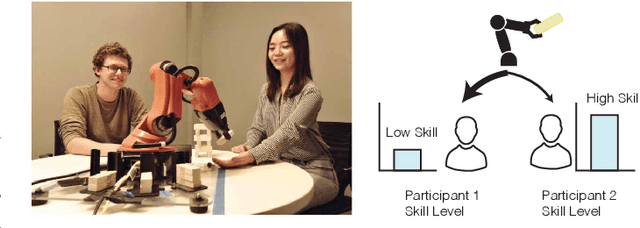

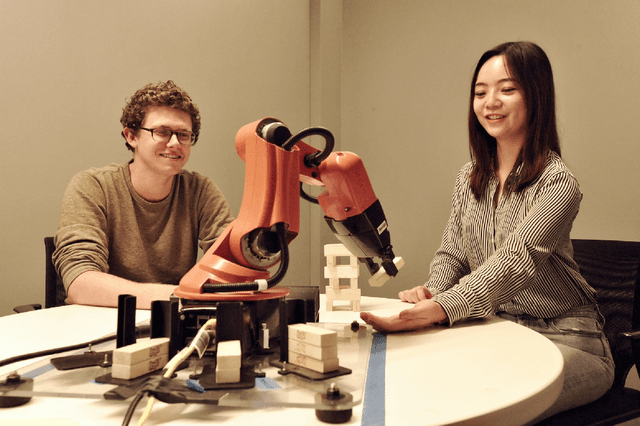

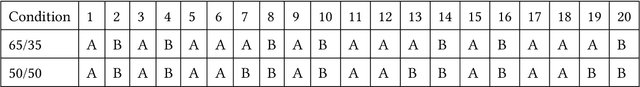

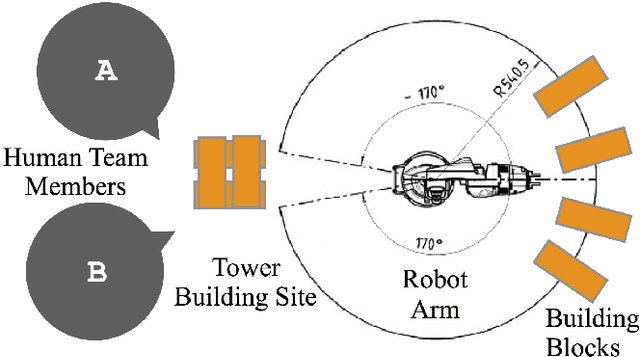

Robot Assisted Tower Construction - A Resource Distribution Task to Study Human-Robot Collaboration and Interaction with Groups of People

Dec 22, 2018

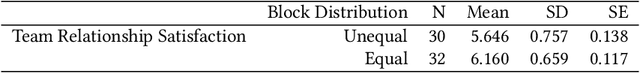

Abstract: Research on human-robot collaboration, or human-robot teaming, has focused predominantly on understanding and enabling collaboration between a single robot and a single human. Extending human-robot collaboration research beyond the dyad raises novel questions about how a robot should distribute resources among group members and about the social and task-related consequences of that distribution. Methodological advances are needed to allow researchers to collect data about human-robot collaboration that involves multiple people. This paper presents Tower Construction, a novel resource distribution task that allows researchers to examine collaboration between a robot and groups of people. By focusing on the question of whether and how a robot's distribution of resources (wooden blocks required for a building task) affects collaboration dynamics and outcomes, we provide a case of how this task can be applied in a laboratory study with 124 participants to collect data about human-robot collaboration that involves multiple humans. We highlight the kinds of insights the task can yield. In particular, we find that the distribution of resources affects perceptions of performance and interpersonal dynamics between human team members.
