Hongbing Hu

Bridging the Gap: Transforming Natural Language Questions into SQL Queries via Abstract Query Pattern and Contextual Schema Markup

Feb 20, 2025Abstract:Large language models have demonstrated excellent performance in many tasks, including Text-to-SQL, due to their powerful in-context learning capabilities. They are becoming the mainstream approach for Text-to-SQL. However, these methods still have a significant gap compared to human performance, especially on complex questions. As the complexity of questions increases, the gap between questions and SQLs increases. We identify two important gaps: the structural mapping gap and the lexical mapping gap. To tackle these two gaps, we propose PAS-SQL, an efficient SQL generation pipeline based on LLMs, which alleviates gaps through Abstract Query Pattern (AQP) and Contextual Schema Markup (CSM). AQP aims to obtain the structural pattern of the question by removing database-related information, which enables us to find structurally similar demonstrations. CSM aims to associate database-related text span in the question with specific tables or columns in the database, which alleviates the lexical mapping gap. Experimental results on the Spider and BIRD datasets demonstrate the effectiveness of our proposed method. Specifically, PAS-SQL + GPT-4o sets a new state-of-the-art on the Spider benchmark with an execution accuracy of 87.9\%, and achieves leading results on the BIRD dataset with an execution accuracy of 64.67\%.

Trifo-VIO: Robust and Efficient Stereo Visual Inertial Odometry using Points and Lines

Sep 18, 2018

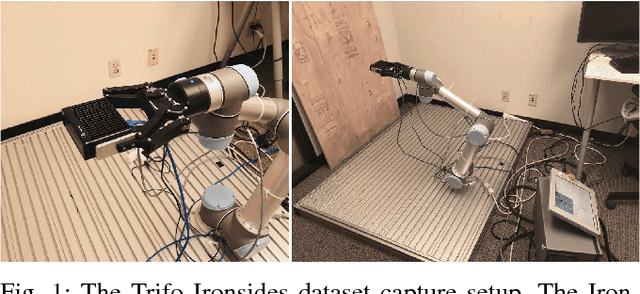

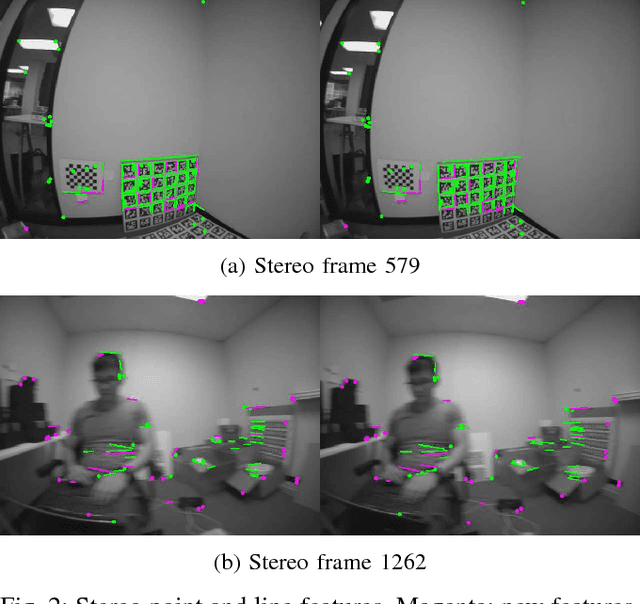

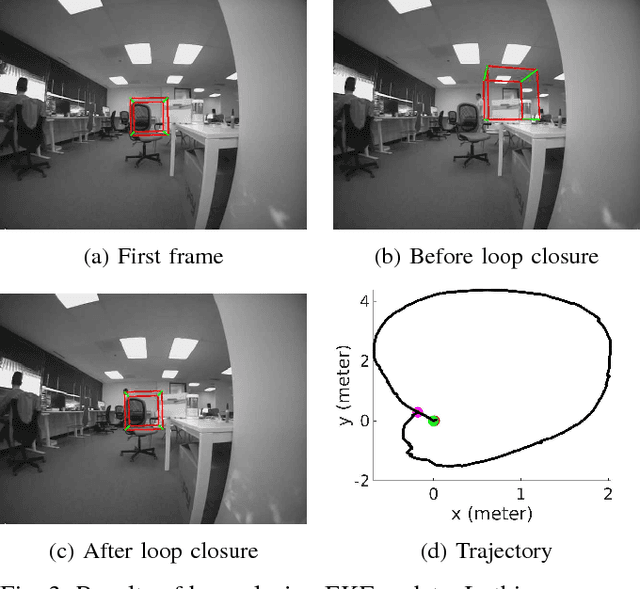

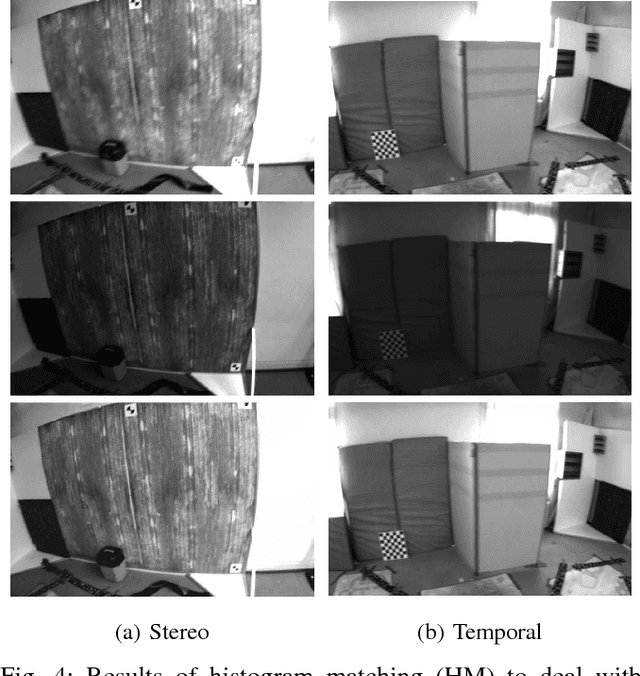

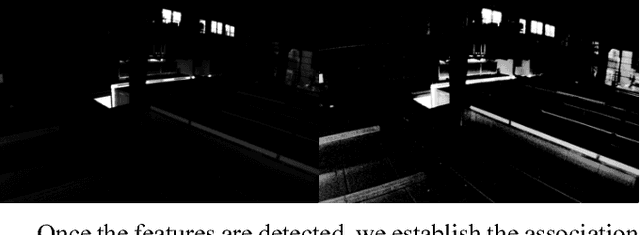

Abstract:In this paper, we present the Trifo Visual Inertial Odometry (Trifo-VIO), a tightly-coupled filtering-based stereo VIO system using both points and lines. Line features help improve system robustness in challenging scenarios when point features cannot be reliably detected or tracked, e.g. low-texture environment or lighting change. In addition, we propose a novel lightweight filtering-based loop closing technique to reduce accumulated drift without global bundle adjustment or pose graph optimization. We formulate loop closure as EKF updates to optimally relocate the current sliding window maintained by the filter to past keyframes. We also present the Trifo Ironsides dataset, a new visual-inertial dataset, featuring high-quality synchronized stereo camera and IMU data from the Ironsides sensor [3] with various motion types and textures and millimeter-accuracy groundtruth. To validate the performance of the proposed system, we conduct extensive comparison with state-of-the-art approaches (OKVIS, VINS-MONO and S-MSCKF) using both the public EuRoC dataset and the Trifo Ironsides dataset.

PIRVS: An Advanced Visual-Inertial SLAM System with Flexible Sensor Fusion and Hardware Co-Design

Oct 02, 2017

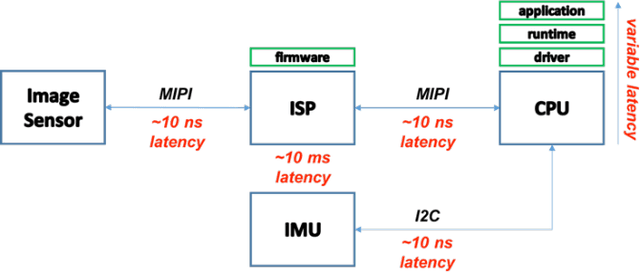

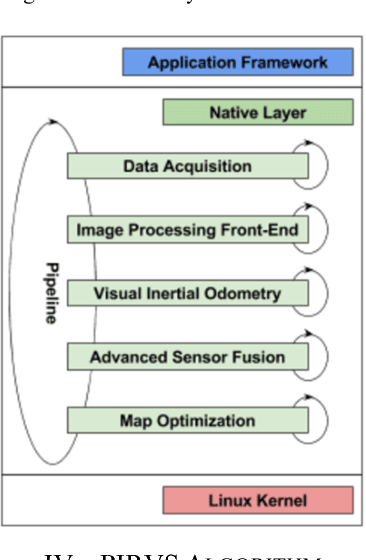

Abstract:In this paper, we present the PerceptIn Robotics Vision System (PIRVS) system, a visual-inertial computing hardware with embedded simultaneous localization and mapping (SLAM) algorithm. The PIRVS hardware is equipped with a multi-core processor, a global-shutter stereo camera, and an IMU with precise hardware synchronization. The PIRVS software features a novel and flexible sensor fusion approach to not only tightly integrate visual measurements with inertial measurements and also to loosely couple with additional sensor modalities. It runs in real-time on both PC and the PIRVS hardware. We perform a thorough evaluation of the proposed system using multiple public visual-inertial datasets. Experimental results demonstrate that our system reaches comparable accuracy of state-of-the-art visual-inertial algorithms on PC, while being more efficient on the PIRVS hardware.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge