Hifsa Asif

A Recent Survey of Vision Transformers for Medical Image Segmentation

Dec 01, 2023

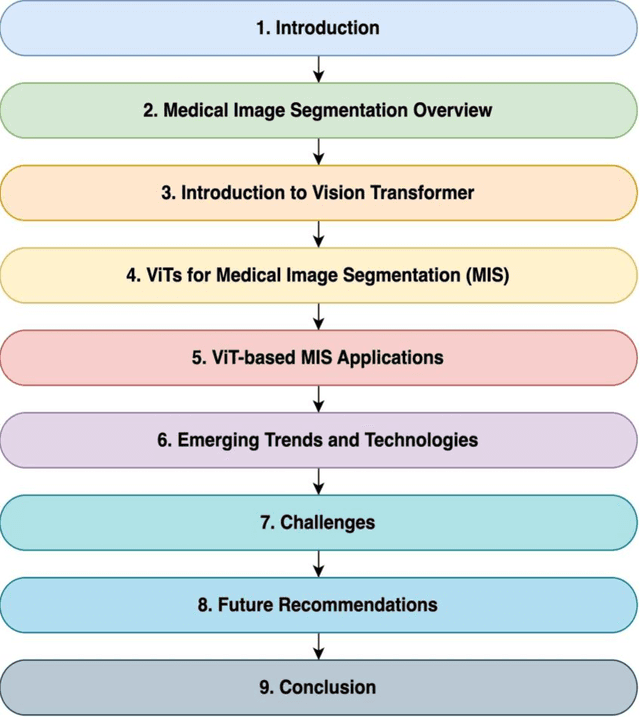

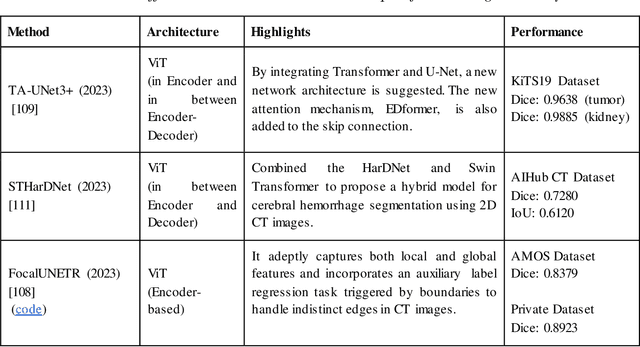

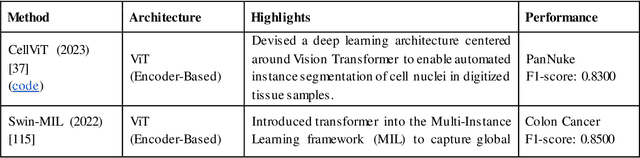

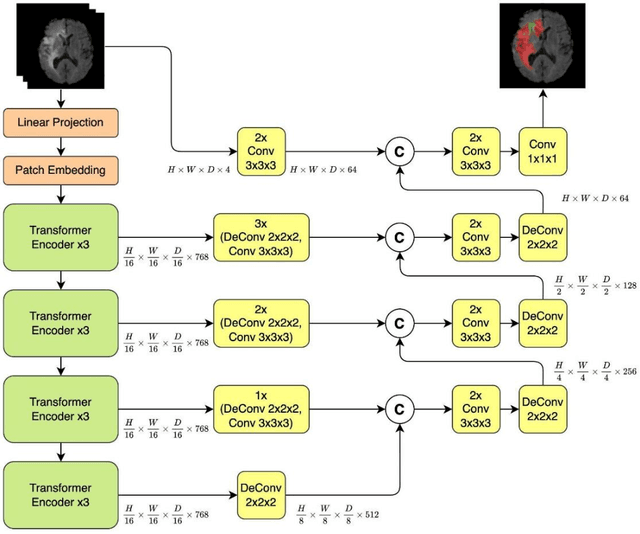

Abstract: Medical image segmentation plays a crucial role in various healthcare applications, enabling accurate diagnosis, treatment planning, and disease monitoring. In recent years, Vision Transformers (ViTs) have emerged as a promising technique for addressing the challenges in medical image segmentation. In medical images, structures are usually highly interconnected and globally distributed. ViTs utilize their multi-scale attention mechanism to model the long-range relationships in the images. However, they do lack image-related inductive bias and translational invariance, potentially impacting their performance. Recently, researchers have come up with various ViT-based approaches that incorporate CNNs in their architectures, known as Hybrid Vision Transformers (HVTs), to capture local correlation in addition to the global information in the images. This survey paper provides a detailed review of the recent advancements in ViTs and HVTs for medical image segmentation. Along with the categorization of ViT and HVT-based medical image segmentation approaches, we also present a detailed overview of their real-time applications in several medical image modalities. This survey may serve as a valuable resource for researchers, healthcare practitioners, and students in understanding the state-of-the-art approaches for ViT-based medical image segmentation.

A survey of the Vision Transformers and its CNN-Transformer based Variants

May 25, 2023

Abstract: Vision transformers have recently become popular as a possible alternative to convolutional neural networks (CNNs) for a variety of computer vision applications. These vision transformers due to their ability to focus on global relationships in images have large capacity, but may result in poor generalization as compared to CNNs. Very recently, the hybridization of convolution and self-attention mechanisms in vision transformers is gaining popularity due to their ability of exploiting both local and global image representations. These CNN-Transformer architectures also known as hybrid vision transformers have shown remarkable results for vision applications. Recently, due to the rapidly growing number of these hybrid vision transformers, there is a need for a taxonomy and explanation of these architectures. This survey presents a taxonomy of the recent vision transformer architectures, and more specifically that of the hybrid vision transformers. Additionally, the key features of each architecture such as the attention mechanisms, positional embeddings, multi-scale processing, and convolution are also discussed. This survey highlights the potential of hybrid vision transformers to achieve outstanding performance on a variety of computer vision tasks. Moreover, it also points towards the future directions of this rapidly evolving field.
