Herman Herman

UGV-UAV Object Geolocation in Unstructured Environments

Jan 14, 2022

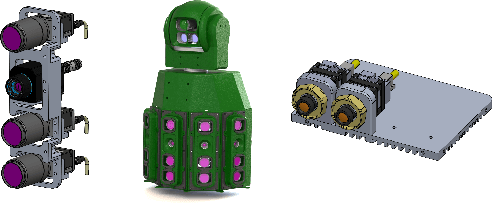

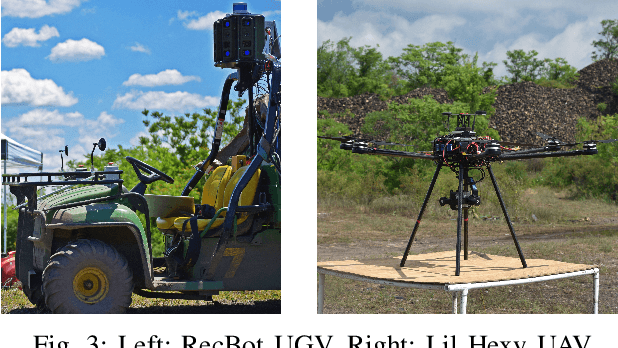

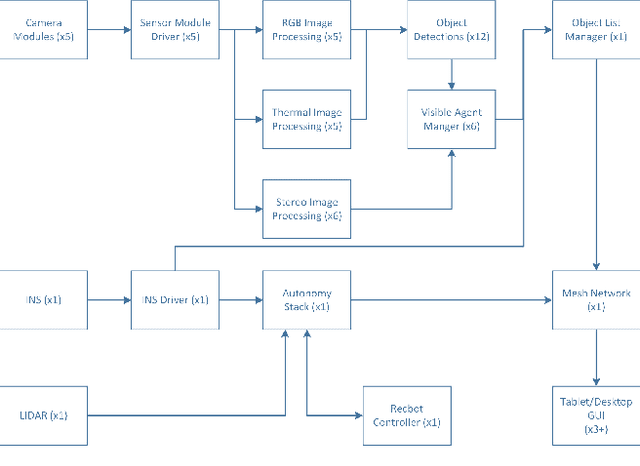

Abstract: A robotic system of multiple unmanned ground vehicles (UGVs) and unmanned aerial vehicles (UAVs) has the potential to advance autonomous object geolocation performance. Much research has focused on algorithmic improvements to individual components, such as navigation, motion planning, and perception. In this paper, we present a UGV-UAV object detection and geolocation system, which performs perception, navigation, and planning autonomously at real scale in unstructured environments. We designed novel sensor pods equipped with a multispectral (visible, near-infrared, thermal), high-resolution (181.6 megapixels), stereo (near-infrared pair), wide field-of-view (192-degree HFOV) array. We developed a novel on-board software-hardware architecture to process the high-volume sensor data in real time, and we built a custom AI subsystem composed of detection, tracking, navigation, and planning for autonomous object geolocation in real time. This research is the first real-scale demonstration of such high-speed data processing capability. Our novel modular sensor pod can boost relevant computer vision and machine learning research. Our novel hardware-software architecture is a solid foundation for system-level and component-level research. Our system is validated through data-driven offline tests as well as a series of field tests in unstructured environments. We present quantitative results as well as discussions of key robotic system-level challenges that manifest when building and testing the system. This system is the first step toward a future UGV-UAV cooperative reconnaissance system.

Comparing Apples and Oranges: Off-Road Pedestrian Detection on the NREC Agricultural Person-Detection Dataset

Oct 26, 2017

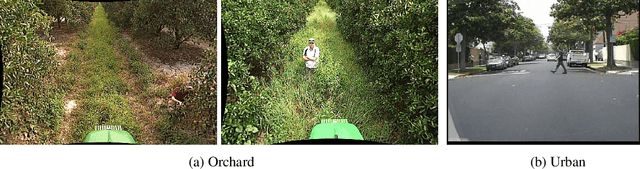

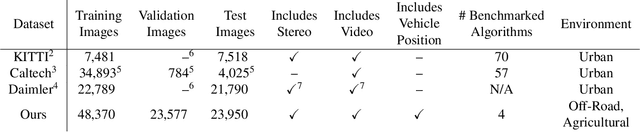

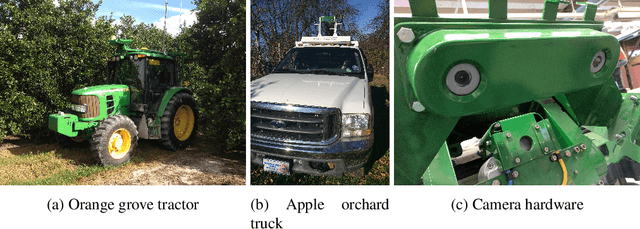

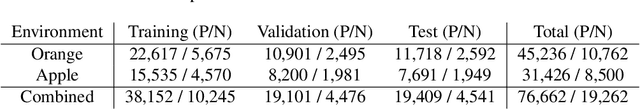

Abstract: Person detection from vehicles has made rapid progress recently with the advent of multiple high-quality datasets of urban and highway driving, yet no large-scale benchmark is available for the same problem in off-road or agricultural environments. Here we present the NREC Agricultural Person-Detection Dataset to spur research in these environments. It consists of labeled stereo video of people in orange and apple orchards taken from two perception platforms (a tractor and a pickup truck), along with vehicle position data from RTK GPS. We define a benchmark on part of the dataset that combines a total of 76k labeled person images and 19k sampled person-free images. The dataset highlights several key challenges of the domain, including varying environment, substantial occlusion by vegetation, people in motion and in non-standard poses, and people seen from a variety of distances; metadata are included to allow targeted evaluation of each of these effects. Finally, we present baseline detection performance results for three leading approaches from urban pedestrian detection and our own convolutional neural network approach that benefits from the incorporation of additional image context. We show that the success of existing approaches on urban data does not transfer directly to this domain.
