David Guttendorf

Decentralized Uncertainty-Aware Active Search with a Team of Aerial Robots

Oct 11, 2024

Abstract: Rapid search and rescue is critical to maximizing survival rates following natural disasters. However, these efforts are challenged by the need to search large disaster zones, unreliable communications infrastructure, and an a priori unknown number of objects of interest (OOIs), such as injured survivors. Aerial robots are increasingly being deployed for search and rescue due to their high mobility, but there remains a gap in deploying multi-robot autonomous aerial systems for methodical search of large environments. Prior work has relied on paths preprogrammed by human operators or has been evaluated only in simulation. We bridge these gaps in the state of the art by developing and demonstrating a decentralized active search system, which biases its trajectories to take additional views of uncertain OOIs. The methodology leverages stochasticity for rapid coverage in communication-denied scenarios. When communications are available, robots share poses, goals, and OOI information to accelerate the rate of search. Extensive simulations and hardware experiments in Bloomingdale, OH, are conducted to validate the approach. The results demonstrate that the active search approach outperforms greedy coverage-based planning in communication-denied scenarios while maintaining comparable performance in communication-enabled scenarios.

UGV-UAV Object Geolocation in Unstructured Environments

Jan 14, 2022

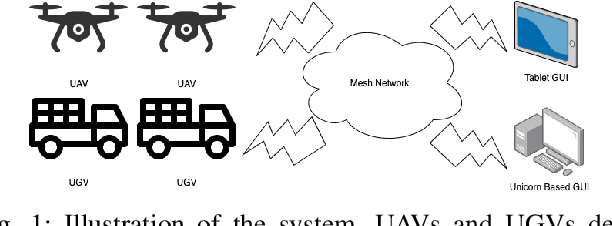

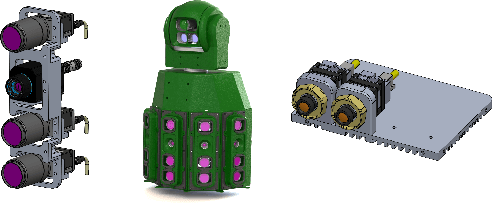

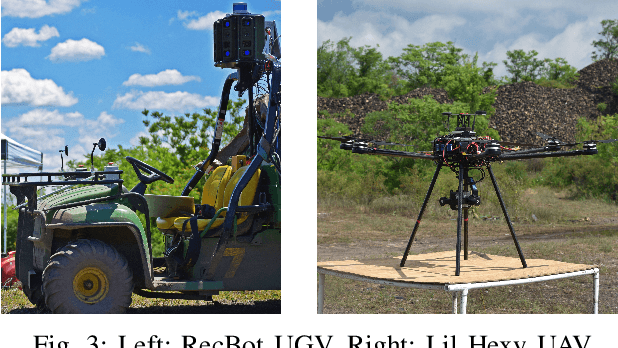

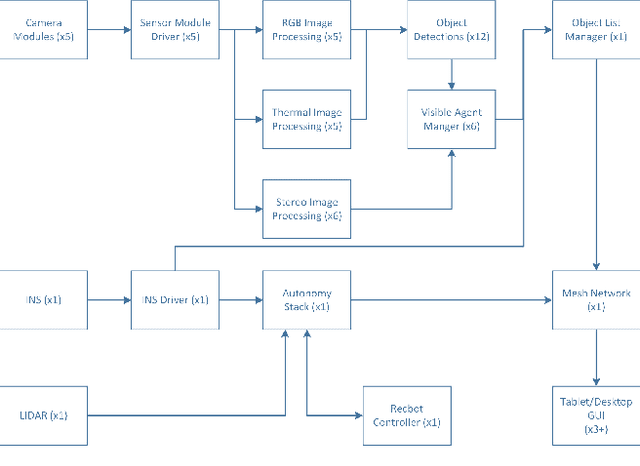

Abstract: A robotic system of multiple unmanned ground vehicles (UGVs) and unmanned aerial vehicles (UAVs) has the potential to advance autonomous object geolocation performance. Much research has focused on algorithmic improvements to individual components, such as navigation, motion planning, and perception. In this paper, we present a UGV-UAV object detection and geolocation system that performs perception, navigation, and planning autonomously at real scale in unstructured environments. We designed novel sensor pods equipped with a multispectral (visible, near-infrared, thermal), high-resolution (181.6 megapixels), stereo (near-infrared pair), wide field-of-view (192-degree HFOV) array. We developed a novel on-board software-hardware architecture to process the high-volume sensor data in real time, and we built a custom AI subsystem composed of detection, tracking, navigation, and planning for autonomous object geolocation in real time. This research is the first real-scale demonstration of such high-speed data processing capability. Our novel modular sensor pod can boost relevant computer vision and machine learning research. Our novel hardware-software architecture is a solid foundation for system-level and component-level research. Our system is validated through data-driven offline tests as well as a series of field tests in unstructured environments. We present quantitative results as well as a discussion of key robotic system-level challenges that emerged when we built and tested the system. This system is the first step toward a future UGV-UAV cooperative reconnaissance system.
