Haoxiang Zhong

Anno-incomplete Multi-dataset Detection

Aug 29, 2024

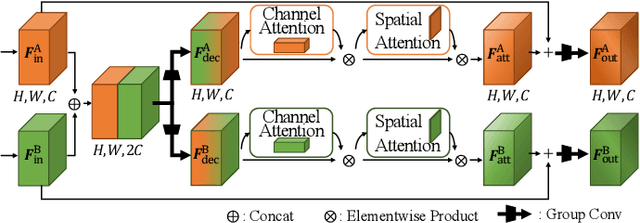

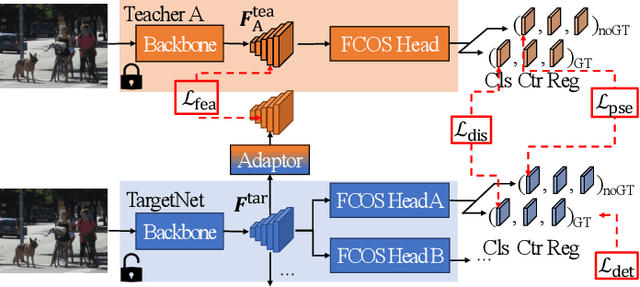

Abstract: Object detectors have shown outstanding performance on various public datasets. However, annotating a new dataset for a new task is usually unavoidable in practice, since 1) a single existing dataset usually does not contain all the object categories needed, and 2) combining multiple datasets usually suffers from incomplete annotations and heterogeneous features. We formulate a novel problem, "Annotation-incomplete Multi-dataset Detection", and develop an end-to-end multi-task learning architecture that can accurately detect all object categories using multiple partially annotated datasets. Specifically, we propose an attention feature extractor that helps mine the relations among different datasets. In addition, a knowledge amalgamation training strategy is incorporated to accommodate heterogeneous features from different sources. Extensive experiments on different object detection datasets demonstrate the effectiveness of our methods, with mAP improvements of 2.17% and 2.10% on COCO and VOC, respectively.
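The abstract does not spell out how supervision is merged across partially annotated datasets. As a rough illustration of the underlying problem, here is a minimal Python sketch assuming a knowledge-amalgamation-style setup in which per-dataset teachers supply soft scores for classes that an image's source dataset leaves unlabeled. The function names (`build_targets`, `bce`, `amalgamated_loss`), the class names, and the teacher score are hypothetical; this is not the paper's attention feature extractor or its actual training strategy.

```python
"""Toy target construction for training on partially annotated datasets.

Hypothetical setup, not the paper's implementation: each source dataset
annotates only a subset of the unified label space. For one region from an
image of dataset D, classes that D annotates take their ground-truth label;
classes D does not annotate take soft scores from a teacher trained on a
dataset that does annotate them, instead of being treated as negatives.
"""
import math
from typing import Dict, List, Set


def build_targets(gt_labels: Set[str], annotated: Set[str],
                  teacher_scores: Dict[str, float],
                  all_classes: List[str]) -> Dict[str, float]:
    targets: Dict[str, float] = {}
    for cls in all_classes:
        if cls in annotated:
            # Trusted label: 1 if present, 0 if the dataset marks it absent
            targets[cls] = 1.0 if cls in gt_labels else 0.0
        else:
            # Unannotated class: borrow the teacher's soft score (0 if no teacher covers it)
            targets[cls] = teacher_scores.get(cls, 0.0)
    return targets


def bce(p: float, t: float, eps: float = 1e-7) -> float:
    # Binary cross-entropy with a soft target t in [0, 1]; p is clamped for log safety
    p = min(max(p, eps), 1.0 - eps)
    return -(t * math.log(p) + (1.0 - t) * math.log(1.0 - p))


def amalgamated_loss(student: Dict[str, float], targets: Dict[str, float]) -> float:
    # Mean per-class BCE between the student's scores and the merged targets
    return sum(bce(student[c], targets[c]) for c in targets) / len(targets)


if __name__ == "__main__":
    classes = ["person", "car", "dog"]
    # Image from a hypothetical dataset that labels only "person" and "car";
    # a hypothetical "dog" teacher scores this region at 0.8.
    targets = build_targets({"person"}, {"person", "car"}, {"dog": 0.8}, classes)
    print(targets)  # {'person': 1.0, 'car': 0.0, 'dog': 0.8}
```

The design choice being illustrated is that a class the source dataset never labels is not silently treated as background, which would teach the detector to suppress objects that are present but unannotated.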

Few-Shot Backdoor Attacks on Visual Object Tracking

Jan 31, 2022

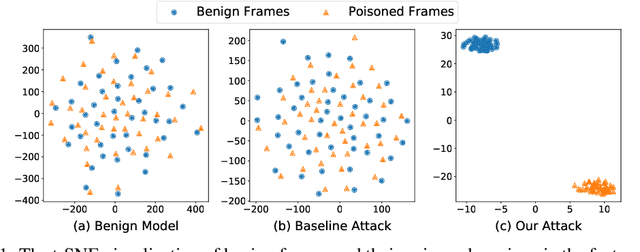

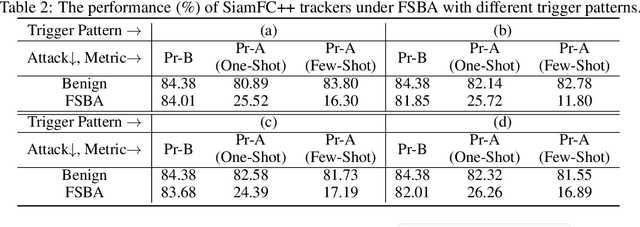

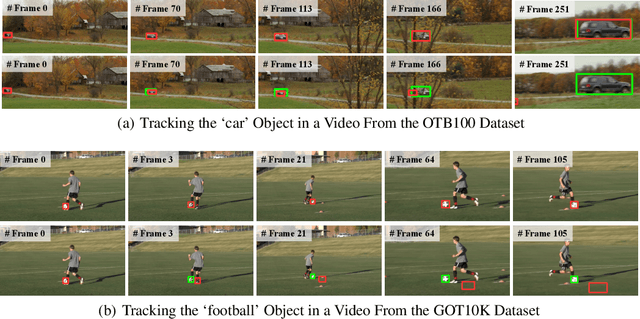

Abstract: Visual object tracking (VOT) has been widely adopted in mission-critical applications, such as autonomous driving and intelligent surveillance systems. In current practice, third-party resources such as datasets, backbone networks, and training platforms are frequently used to train high-performance VOT models. While these resources bring convenience, they also introduce new security threats into VOT models. In this paper, we reveal one such threat, in which an adversary can easily implant hidden backdoors into VOT models by tampering with the training process. Specifically, we propose a simple yet effective few-shot backdoor attack (FSBA) that alternately optimizes two losses: 1) a *feature loss* defined in the hidden feature space, and 2) the standard *tracking loss*. We show that, once the backdoor is embedded into the target model by FSBA, it can trick the model into losing track of specific objects even when the *trigger* appears in only one or a few frames. We examine our attack in both digital and physical-world settings and show that it can significantly degrade the performance of state-of-the-art VOT trackers. We also show that our attack is resistant to potential defenses, highlighting the vulnerability of VOT models to backdoor attacks.
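To make "alternately optimizes two losses" concrete, here is a toy Python sketch with hand-derived gradients. It assumes a 2x2 linear "backbone", a trigger modeled as an extra input channel, a squared-error tracking loss on clean frames, and a hinge-style feature loss that pushes triggered features away from clean ones. All of these, and the names `features`, `tracking_loss`, `feature_gap`, and `train`, are hypothetical stand-ins, not FSBA's actual losses, tracker, or training code.

```python
"""Toy sketch of alternating two-loss optimization in the spirit of FSBA.

Assumptions (hypothetical, not the paper's code): the "backbone" is a 2x2
linear map W, a clean frame is x_clean = (1, 0), and a triggered frame adds a
trigger channel, x_trig = (1, 1). The tracking loss keeps clean features at a
benign target; the feature loss max(0, margin - gap) pushes the squared
distance between triggered and clean features up to at least `margin`.
"""
from typing import List, Tuple

Matrix = List[List[float]]
Vec = Tuple[float, float]

X_CLEAN: Vec = (1.0, 0.0)
X_TRIG: Vec = (1.0, 1.0)


def features(w: Matrix, x: Vec) -> Vec:
    # h = W x for a 2x2 weight matrix
    return (w[0][0] * x[0] + w[0][1] * x[1],
            w[1][0] * x[0] + w[1][1] * x[1])


def tracking_loss(w: Matrix, target: Vec) -> float:
    # Squared error between clean-frame features and the benign target
    h = features(w, X_CLEAN)
    return (h[0] - target[0]) ** 2 + (h[1] - target[1]) ** 2


def feature_gap(w: Matrix) -> float:
    # Squared distance between triggered and clean features (= ||second column||^2)
    hc, ht = features(w, X_CLEAN), features(w, X_TRIG)
    return (ht[0] - hc[0]) ** 2 + (ht[1] - hc[1]) ** 2


def train(steps: int = 200, lr: float = 0.1, margin: float = 1.0,
          target: Vec = (0.5, -0.5)) -> Matrix:
    # Small non-zero trigger weights so the hinge has a non-zero gradient at the start
    w = [[0.0, 0.1], [0.0, 0.1]]
    for step in range(steps):
        if step % 2 == 0:
            # Tracking step: for x_clean, h is the first column of W
            w[0][0] -= lr * 2 * (w[0][0] - target[0])
            w[1][0] -= lr * 2 * (w[1][0] - target[1])
        elif feature_gap(w) < margin:
            # Feature step: gradient of (margin - gap) w.r.t. the second column is -2 * column
            w[0][1] += lr * 2 * w[0][1]
            w[1][1] += lr * 2 * w[1][1]
    return w


if __name__ == "__main__":
    w = train()
    print(tracking_loss(w, (0.5, -0.5)), feature_gap(w))
```

After training, the clean-frame tracking loss is close to zero while the triggered features sit at least `margin` away from the clean ones, which mirrors the "hidden" nature of a backdoor. In this toy the two losses touch disjoint columns of `W`, so they never conflict; in a real tracker both would act on shared backbone weights.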
