Haocong Rao

Motif Guided Graph Transformer with Combinatorial Skeleton Prototype Learning for Skeleton-Based Person Re-Identification

Dec 12, 2024Abstract:Person re-identification (re-ID) via 3D skeleton data is a challenging task with significant value in many scenarios. Existing skeleton-based methods typically assume virtual motion relations between all joints, and adopt average joint or sequence representations for learning. However, they rarely explore key body structure and motion such as gait to focus on more important body joints or limbs, while lacking the ability to fully mine valuable spatial-temporal sub-patterns of skeletons to enhance model learning. This paper presents a generic Motif guided graph transformer with Combinatorial skeleton prototype learning (MoCos) that exploits structure-specific and gait-related body relations as well as combinatorial features of skeleton graphs to learn effective skeleton representations for person re-ID. In particular, motivated by the locality within joints' structure and the body-component collaboration in gait, we first propose the motif guided graph transformer (MGT) that incorporates hierarchical structural motifs and gait collaborative motifs, which simultaneously focuses on multi-order local joint correlations and key cooperative body parts to enhance skeleton relation learning. Then, we devise the combinatorial skeleton prototype learning (CSP) that leverages random spatial-temporal combinations of joint nodes and skeleton graphs to generate diverse sub-skeleton and sub-tracklet representations, which are contrasted with the most representative features (prototypes) of each identity to learn class-related semantics and discriminative skeleton representations. Extensive experiments validate the superior performance of MoCos over existing state-of-the-art models. We further show its generality under RGB-estimated skeletons, different graph modeling, and unsupervised scenarios.

A Survey of Artificial Intelligence in Gait-Based Neurodegenerative Disease Diagnosis

May 21, 2024

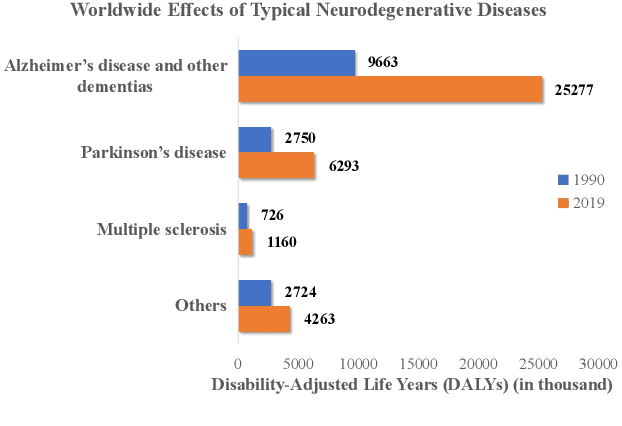

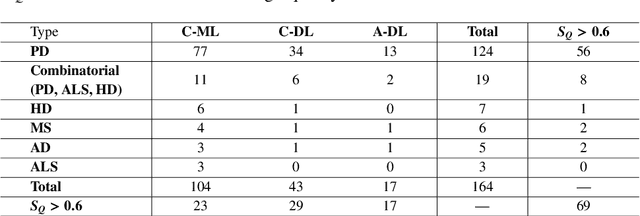

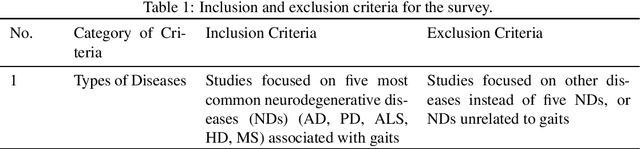

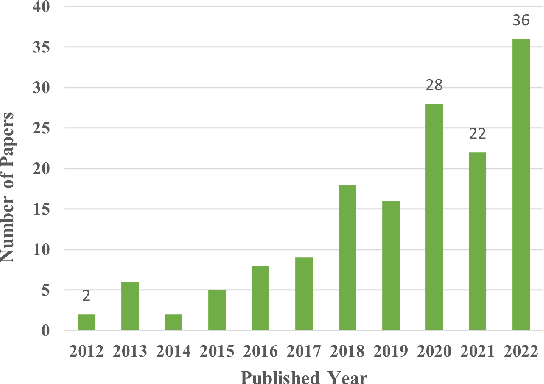

Abstract:Recent years have witnessed an increasing global population affected by neurodegenerative diseases (NDs), which traditionally require extensive healthcare resources and human effort for medical diagnosis and monitoring. As a crucial disease-related motor symptom, human gait can be exploited to characterize different NDs. The current advances in artificial intelligence (AI) models enable automatic gait analysis for NDs identification and classification, opening a new avenue to facilitate faster and more cost-effective diagnosis of NDs. In this paper, we provide a comprehensive survey on recent progress of machine learning and deep learning based AI techniques applied to diagnosis of five typical NDs through gait. We provide an overview of the process of AI-assisted NDs diagnosis, and present a systematic taxonomy of existing gait data and AI models. Through an extensive review and analysis of 164 studies, we identify and discuss the challenges, potential solutions, and future directions in this field. Finally, we envision the prospective utilization of 3D skeleton data for human gait representation and the development of more efficient AI models for NDs diagnosis. We provide a public resource repository to track and facilitate developments in this emerging field: https://github.com/Kali-Hac/AI4NDD-Survey.

A Survey on 3D Skeleton Based Person Re-Identification: Approaches, Designs, Challenges, and Future Directions

Jan 27, 2024Abstract:Person re-identification via 3D skeletons is an important emerging research area that triggers great interest in the pattern recognition community. With distinctive advantages for many application scenarios, a great diversity of 3D skeleton based person re-identification (SRID) methods have been proposed in recent years, effectively addressing prominent problems in skeleton modeling and feature learning. Despite recent advances, to the best of our knowledge, little effort has been made to comprehensively summarize these studies and their challenges. In this paper, we attempt to fill this gap by providing a systematic survey on current SRID approaches, model designs, challenges, and future directions. Specifically, we first formulate the SRID problem, and propose a taxonomy of SRID research with a summary of benchmark datasets, commonly-used model architectures, and an analytical review of different methods' characteristics. Then, we elaborate on the design principles of SRID models from multiple aspects to offer key insights for model improvement. Finally, we identify critical challenges confronting current studies and discuss several promising directions for future research of SRID.

Hierarchical Skeleton Meta-Prototype Contrastive Learning with Hard Skeleton Mining for Unsupervised Person Re-Identification

Jul 26, 2023Abstract:With rapid advancements in depth sensors and deep learning, skeleton-based person re-identification (re-ID) models have recently achieved remarkable progress with many advantages. Most existing solutions learn single-level skeleton features from body joints with the assumption of equal skeleton importance, while they typically lack the ability to exploit more informative skeleton features from various levels such as limb level with more global body patterns. The label dependency of these methods also limits their flexibility in learning more general skeleton representations. This paper proposes a generic unsupervised Hierarchical skeleton Meta-Prototype Contrastive learning (Hi-MPC) approach with Hard Skeleton Mining (HSM) for person re-ID with unlabeled 3D skeletons. Firstly, we construct hierarchical representations of skeletons to model coarse-to-fine body and motion features from the levels of body joints, components, and limbs. Then a hierarchical meta-prototype contrastive learning model is proposed to cluster and contrast the most typical skeleton features ("prototypes") from different-level skeletons. By converting original prototypes into meta-prototypes with multiple homogeneous transformations, we induce the model to learn the inherent consistency of prototypes to capture more effective skeleton features for person re-ID. Furthermore, we devise a hard skeleton mining mechanism to adaptively infer the informative importance of each skeleton, so as to focus on harder skeletons to learn more discriminative skeleton representations. Extensive evaluations on five datasets demonstrate that our approach outperforms a wide variety of state-of-the-art skeleton-based methods. We further show the general applicability of our method to cross-view person re-ID and RGB-based scenarios with estimated skeletons.

TranSG: Transformer-Based Skeleton Graph Prototype Contrastive Learning with Structure-Trajectory Prompted Reconstruction for Person Re-Identification

Mar 28, 2023Abstract:Person re-identification (re-ID) via 3D skeleton data is an emerging topic with prominent advantages. Existing methods usually design skeleton descriptors with raw body joints or perform skeleton sequence representation learning. However, they typically cannot concurrently model different body-component relations, and rarely explore useful semantics from fine-grained representations of body joints. In this paper, we propose a generic Transformer-based Skeleton Graph prototype contrastive learning (TranSG) approach with structure-trajectory prompted reconstruction to fully capture skeletal relations and valuable spatial-temporal semantics from skeleton graphs for person re-ID. Specifically, we first devise the Skeleton Graph Transformer (SGT) to simultaneously learn body and motion relations within skeleton graphs, so as to aggregate key correlative node features into graph representations. Then, we propose the Graph Prototype Contrastive learning (GPC) to mine the most typical graph features (graph prototypes) of each identity, and contrast the inherent similarity between graph representations and different prototypes from both skeleton and sequence levels to learn discriminative graph representations. Last, a graph Structure-Trajectory Prompted Reconstruction (STPR) mechanism is proposed to exploit the spatial and temporal contexts of graph nodes to prompt skeleton graph reconstruction, which facilitates capturing more valuable patterns and graph semantics for person re-ID. Empirical evaluations demonstrate that TranSG significantly outperforms existing state-of-the-art methods. We further show its generality under different graph modeling, RGB-estimated skeletons, and unsupervised scenarios.

Can ChatGPT Assess Human Personalities? A General Evaluation Framework

Mar 07, 2023Abstract:Large Language Models (LLMs) especially ChatGPT have produced impressive results in various areas, but their potential human-like psychology is still largely unexplored. Existing works study the virtual personalities of LLMs but rarely explore the possibility of analyzing human personalities via LLMs. This paper presents a generic evaluation framework for LLMs to assess human personalities based on Myers Briggs Type Indicator (MBTI) tests. Specifically, we first devise unbiased prompts by randomly permuting options in MBTI questions and adopt the average testing result to encourage more impartial answer generation. Then, we propose to replace the subject in question statements to enable flexible queries and assessments on different subjects from LLMs. Finally, we re-formulate the question instructions in a manner of correctness evaluation to facilitate LLMs to generate clearer responses. The proposed framework enables LLMs to flexibly assess personalities of different groups of people. We further propose three evaluation metrics to measure the consistency, robustness, and fairness of assessment results from state-of-the-art LLMs including ChatGPT and InstructGPT. Our experiments reveal ChatGPT's ability to assess human personalities, and the average results demonstrate that it can achieve more consistent and fairer assessments in spite of lower robustness against prompt biases compared with InstructGPT.

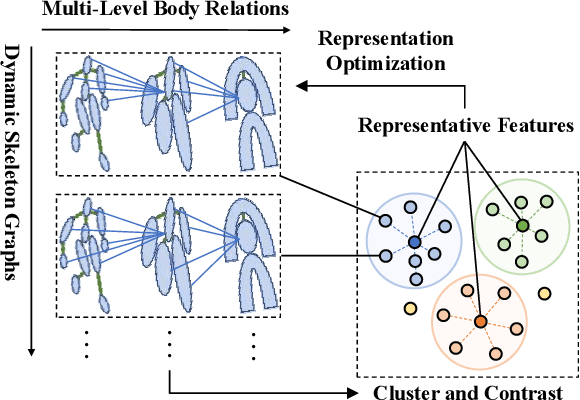

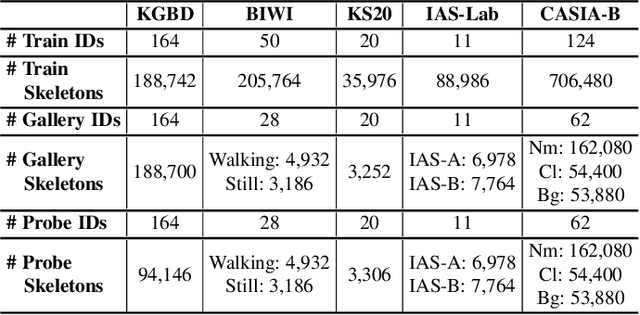

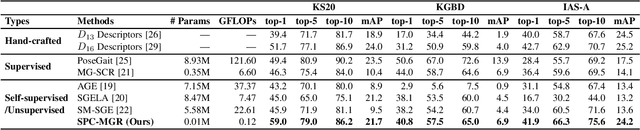

Skeleton Prototype Contrastive Learning with Multi-Level Graph Relation Modeling for Unsupervised Person Re-Identification

Aug 25, 2022

Abstract:Person re-identification (re-ID) via 3D skeletons is an important emerging topic with many merits. Existing solutions rarely explore valuable body-component relations in skeletal structure or motion, and they typically lack the ability to learn general representations with unlabeled skeleton data for person re-ID. This paper proposes a generic unsupervised Skeleton Prototype Contrastive learning paradigm with Multi-level Graph Relation learning (SPC-MGR) to learn effective representations from unlabeled skeletons to perform person re-ID. Specifically, we first construct unified multi-level skeleton graphs to fully model body structure within skeletons. Then we propose a multi-head structural relation layer to comprehensively capture relations of physically-connected body-component nodes in graphs. A full-level collaborative relation layer is exploited to infer collaboration between motion-related body parts at various levels, so as to capture rich body features and recognizable walking patterns. Lastly, we propose a skeleton prototype contrastive learning scheme that clusters feature-correlative instances of unlabeled graph representations and contrasts their inherent similarity with representative skeleton features ("skeleton prototypes") to learn discriminative skeleton representations for person re-ID. Empirical evaluations show that SPC-MGR significantly outperforms several state-of-the-art skeleton-based methods, and it also achieves highly competitive person re-ID performance for more general scenarios.

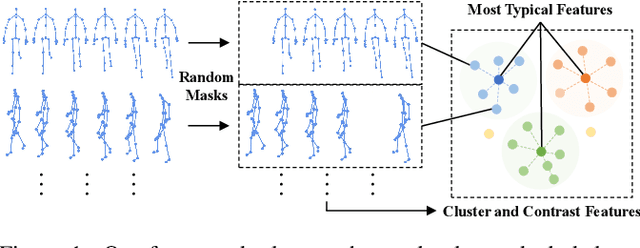

SimMC: Simple Masked Contrastive Learning of Skeleton Representations for Unsupervised Person Re-Identification

Apr 28, 2022

Abstract:Recent advances in skeleton-based person re-identification (re-ID) obtain impressive performance via either hand-crafted skeleton descriptors or skeleton representation learning with deep learning paradigms. However, they typically require skeletal pre-modeling and label information for training, which leads to limited applicability of these methods. In this paper, we focus on unsupervised skeleton-based person re-ID, and present a generic Simple Masked Contrastive learning (SimMC) framework to learn effective representations from unlabeled 3D skeletons for person re-ID. Specifically, to fully exploit skeleton features within each skeleton sequence, we first devise a masked prototype contrastive learning (MPC) scheme to cluster the most typical skeleton features (skeleton prototypes) from different subsequences randomly masked from raw sequences, and contrast the inherent similarity between skeleton features and different prototypes to learn discriminative skeleton representations without using any label. Then, considering that different subsequences within the same sequence usually enjoy strong correlations due to the nature of motion continuity, we propose the masked intra-sequence contrastive learning (MIC) to capture intra-sequence pattern consistency between subsequences, so as to encourage learning more effective skeleton representations for person re-ID. Extensive experiments validate that the proposed SimMC outperforms most state-of-the-art skeleton-based methods. We further show its scalability and efficiency in enhancing the performance of existing models. Our codes are available at https://github.com/Kali-Hac/SimMC.

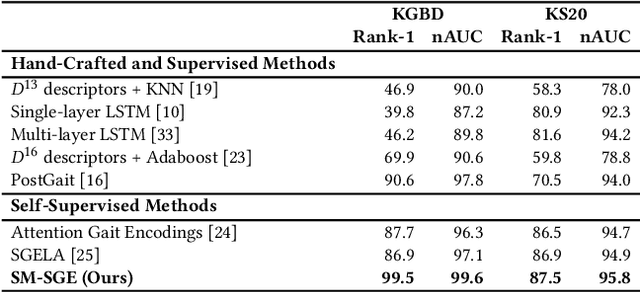

SM-SGE: A Self-Supervised Multi-Scale Skeleton Graph Encoding Framework for Person Re-Identification

Jul 05, 2021

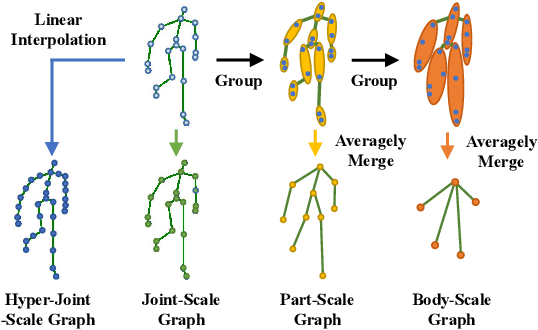

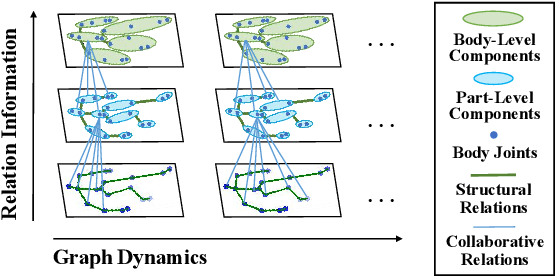

Abstract:Person re-identification via 3D skeletons is an emerging topic with great potential in security-critical applications. Existing methods typically learn body and motion features from the body-joint trajectory, whereas they lack a systematic way to model body structure and underlying relations of body components beyond the scale of body joints. In this paper, we for the first time propose a Self-supervised Multi-scale Skeleton Graph Encoding (SM-SGE) framework that comprehensively models human body, component relations, and skeleton dynamics from unlabeled skeleton graphs of various scales to learn an effective skeleton representation for person Re-ID. Specifically, we first devise multi-scale skeleton graphs with coarse-to-fine human body partitions, which enables us to model body structure and skeleton dynamics at multiple levels. Second, to mine inherent correlations between body components in skeletal motion, we propose a multi-scale graph relation network to learn structural relations between adjacent body-component nodes and collaborative relations among nodes of different scales, so as to capture more discriminative skeleton graph features. Last, we propose a novel multi-scale skeleton reconstruction mechanism to enable our framework to encode skeleton dynamics and high-level semantics from unlabeled skeleton graphs, which encourages learning a discriminative skeleton representation for person Re-ID. Extensive experiments show that SM-SGE outperforms most state-of-the-art skeleton-based methods. We further demonstrate its effectiveness on 3D skeleton data estimated from large-scale RGB videos. Our codes are open at https://github.com/Kali-Hac/SM-SGE.

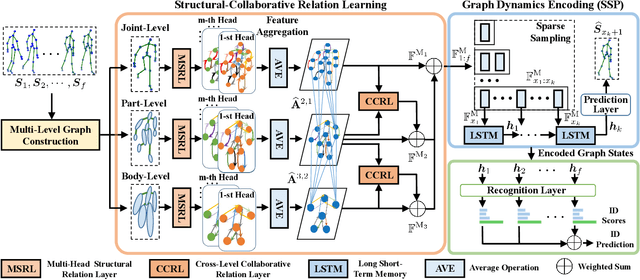

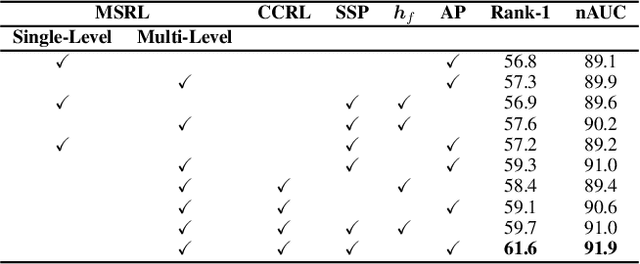

Multi-Level Graph Encoding with Structural-Collaborative Relation Learning for Skeleton-Based Person Re-Identification

Jun 06, 2021

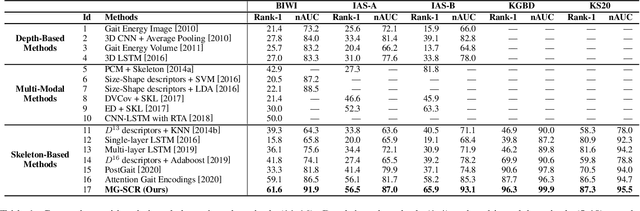

Abstract:Skeleton-based person re-identification (Re-ID) is an emerging open topic providing great value for safety-critical applications. Existing methods typically extract hand-crafted features or model skeleton dynamics from the trajectory of body joints, while they rarely explore valuable relation information contained in body structure or motion. To fully explore body relations, we construct graphs to model human skeletons from different levels, and for the first time propose a Multi-level Graph encoding approach with Structural-Collaborative Relation learning (MG-SCR) to encode discriminative graph features for person Re-ID. Specifically, considering that structurally-connected body components are highly correlated in a skeleton, we first propose a multi-head structural relation layer to learn different relations of neighbor body-component nodes in graphs, which helps aggregate key correlative features for effective node representations. Second, inspired by the fact that body-component collaboration in walking usually carries recognizable patterns, we propose a cross-level collaborative relation layer to infer collaboration between different level components, so as to capture more discriminative skeleton graph features. Finally, to enhance graph dynamics encoding, we propose a novel self-supervised sparse sequential prediction task for model pre-training, which facilitates encoding high-level graph semantics for person Re-ID. MG-SCR outperforms state-of-the-art skeleton-based methods, and it achieves superior performance to many multi-modal methods that utilize extra RGB or depth features. Our codes are available at https://github.com/Kali-Hac/MG-SCR.

* Accepted at IJCAI 2021 Main Track. Sole copyright holder is IJCAI. Codes are available at https://github.com/Kali-Hac/MG-SCR

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge