Han Su

LIRA: A Learning-based Query-aware Partition Framework for Large-scale ANN Search

Mar 30, 2025

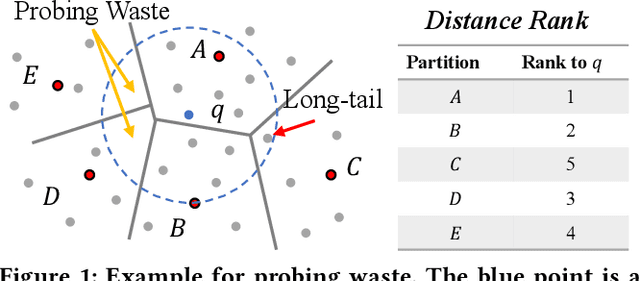

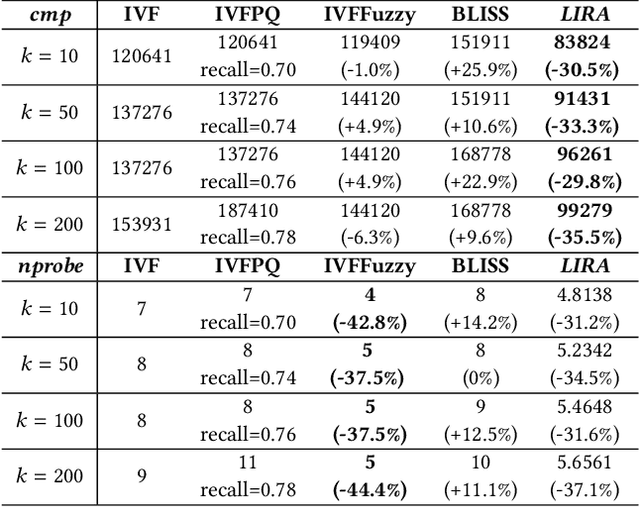

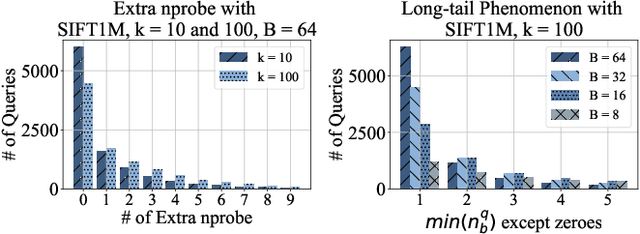

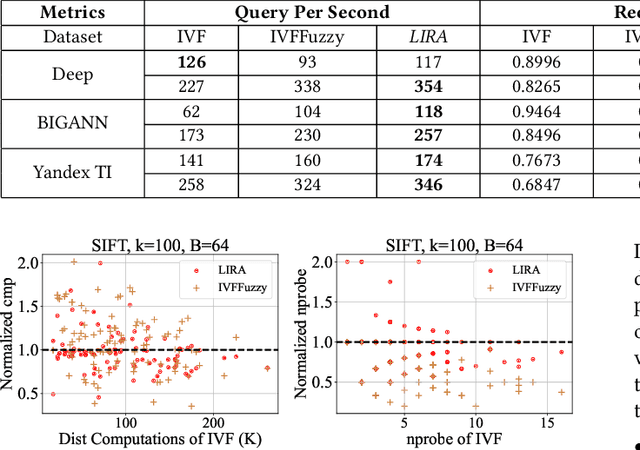

Abstract: Approximate nearest neighbor search is fundamental in information retrieval. Previous partition-based methods enhance search efficiency by probing only a subset of partitions, yet they face two common issues. In the query phase, a common strategy is to probe partitions based on the distance ranks of a query to partition centroids, which inevitably probes irrelevant partitions because it ignores the data distribution. In the partition construction phase, all partition-based methods face the boundary problem, which scatters a query's nearest neighbors across multiple partitions, resulting in a long-tailed kNN distribution and degrading the optimal nprobe (i.e., the number of partitions to probe). To address these issues, we propose LIRA, a LearnIng-based queRy-aware pArtition framework. Specifically, we propose a probing model that directly probes the partitions containing the kNN of a query, which reduces probing waste and allows query-aware probing with an individual nprobe for each query. Moreover, we incorporate the probing model into a learning-based redundancy strategy to mitigate the adverse impact of the long-tailed kNN distribution on search efficiency. Extensive experiments on real-world vector datasets demonstrate the superiority of LIRA in the trade-off among accuracy, latency, and query fan-out. The code is available at https://github.com/SimoneZeng/LIRA-ANN-search.
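As a rough illustration of the baseline this abstract criticizes (not LIRA itself), the sketch below probes partitions purely by centroid distance rank. The function names, the toy 2-D data, and the partition layout are all assumptions made for the example; they show how a neighbor sitting just across a partition boundary is missed when nprobe is 1.

```python
def squared_distance(a, b):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def probe_by_centroid_rank(query, centroids, nprobe):
    """Return the ids of the nprobe partitions whose centroids are closest to the query."""
    ranked = sorted(range(len(centroids)),
                    key=lambda i: squared_distance(query, centroids[i]))
    return ranked[:nprobe]


def search(query, centroids, partitions, nprobe, k):
    """Scan only the probed partitions and return the k closest vectors found."""
    candidates = []
    for pid in probe_by_centroid_rank(query, centroids, nprobe):
        for vec in partitions[pid]:
            candidates.append((squared_distance(query, vec), vec))
    candidates.sort(key=lambda pair: pair[0])
    return [vec for _, vec in candidates[:k]]


# Hypothetical toy data: two partitions, each vector stored with its nearest centroid.
centroids = [(0.0, 0.0), (10.0, 0.0)]
partitions = {
    0: [(0.0, 0.0), (1.0, 0.0)],
    1: [(5.2, 0.0), (10.0, 0.0)],
}
query = (4.9, 0.0)  # slightly closer to centroid 0, but its true neighbor is in partition 1

missed = search(query, centroids, partitions, nprobe=1, k=1)
found = search(query, centroids, partitions, nprobe=2, k=1)
```

With nprobe set to 1 the search scans only partition 0 and never sees the vector at (5.2, 0.0); raising nprobe to 2 finds it at the cost of scanning every partition. A query-aware probing model, as described in the abstract, aims to pick the relevant partitions directly instead of relying on this fixed rank cutoff.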

A New Era in Human Factors Engineering: A Survey of the Applications and Prospects of Large Multimodal Models

May 22, 2024

Abstract: In recent years, the potential applications of Large Multimodal Models (LMMs) in fields such as healthcare, social psychology, and industrial design have attracted wide research attention, providing new directions for human factors research. For instance, LMM-based smart systems have become novel research subjects of human factors studies, and LMMs introduce new research paradigms and methodologies to this field. Therefore, this paper aims to explore the applications, challenges, and future prospects of LMMs in the domain of human factors and ergonomics through a literature review conducted collaboratively by experts and LMMs. Specifically, a novel literature review method is proposed, and research studies of LMM-based accident analysis, human modelling, and intervention design are introduced. Subsequently, the paper discusses future trends of the research paradigm and challenges of human factors and ergonomics studies in the era of LMMs. It is expected that this study can provide a valuable perspective and serve as a reference for integrating human factors with artificial intelligence.

Parameterized Decision-making with Multi-modal Perception for Autonomous Driving

Dec 19, 2023

Abstract: Autonomous driving is an emerging technology that has advanced rapidly over the last decade. Modern transportation is expected to benefit greatly from a sound decision-making framework for autonomous vehicles, including improved mobility and reduced risks and travel time. However, existing methods either ignore the complexity of environments and fit only straight roads, or ignore the impact on surrounding vehicles during optimization, leading to weak environmental adaptability and incomplete optimization objectives. To address these limitations, we propose a parameterized decision-making framework with multi-modal perception based on deep reinforcement learning, called AUTO. We conduct a comprehensive perception to capture the state features of various traffic participants around the autonomous vehicle, based on which we design a graph-based model to learn a state representation of the multi-modal semantic features. To distinguish between lane-following and lane-changing, we decompose an action of the autonomous vehicle into a parameterized action structure that first decides whether to change lanes and then computes an exact action to execute. A hybrid reward function takes into account safety, traffic efficiency, passenger comfort, and impact on surrounding vehicles to guide the framework to generate optimal actions. In addition, we design a regularization term and a multi-worker paradigm to enhance the training. Extensive experiments offer evidence that AUTO can advance the state of the art in terms of both macroscopic and microscopic effectiveness.
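The two-stage parameterized action described above can be pictured with a minimal sketch. Everything here is an assumption for illustration: the maneuver names, the use of a single acceleration value as the continuous parameter, and the fixed score dictionaries standing in for learned network outputs. It is not the AUTO implementation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ParameterizedAction:
    maneuver: str        # discrete choice, e.g. "keep", "left", "right" (hypothetical labels)
    acceleration: float  # continuous parameter attached to the chosen maneuver


def select_action(maneuver_scores, acceleration_by_maneuver):
    """Pick the highest-scoring maneuver first, then look up its continuous parameter."""
    maneuver = max(maneuver_scores, key=maneuver_scores.get)
    return ParameterizedAction(maneuver, acceleration_by_maneuver[maneuver])


# Hypothetical outputs standing in for what a learned policy might produce.
scores = {"keep": 0.2, "left": 0.7, "right": 0.1}
accelerations = {"keep": 0.5, "left": -0.3, "right": 0.0}

action = select_action(scores, accelerations)
```

Splitting the decision this way keeps the discrete lane choice separate from the continuous control value, so each maneuver can carry its own parameter rather than sharing one flat action space.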

Giant Panda Face Recognition Using Small Dataset

May 27, 2019

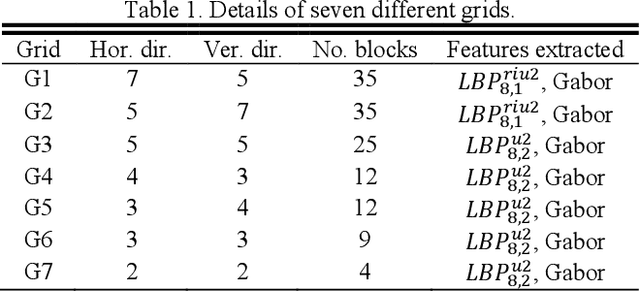

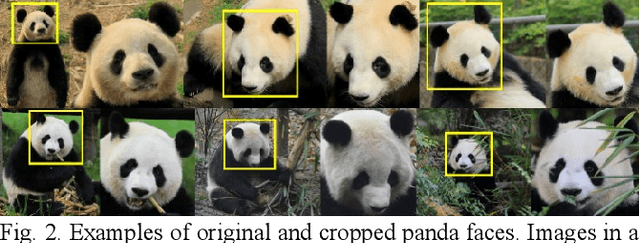

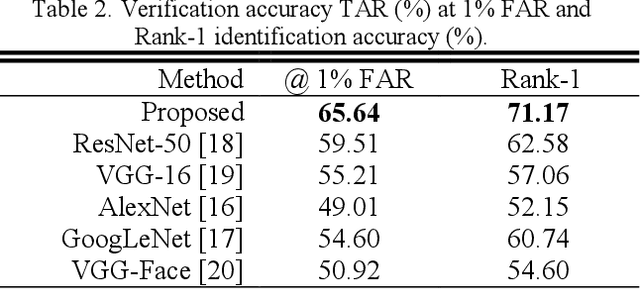

Abstract: The giant panda (panda) is a highly endangered animal. Significant efforts and resources have been put into panda conservation. To measure the effectiveness of conservation schemes, estimating the panda population size in the wild is an important task. The current population estimation approaches, including capture-recapture, human visual identification, and collection of DNA from hair or feces, are invasive, subjective, costly, or even dangerous to the workers who perform these tasks in the wild. Cameras have been widely installed in the regions where pandas live, which opens a new possibility for non-invasive, image-based panda recognition. Panda face recognition is naturally a small dataset problem because of the small number of pandas in the world and the small number of qualified images captured by the cameras in each encounter. In this paper, a panda face recognition algorithm, which includes alignment, large feature set extraction, and matching, is proposed and evaluated on a dataset consisting of 163 images. The experimental results are encouraging.

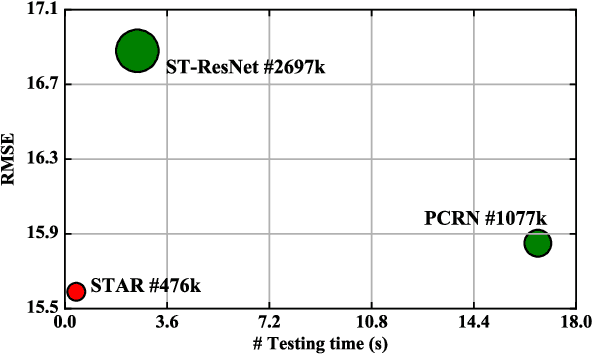

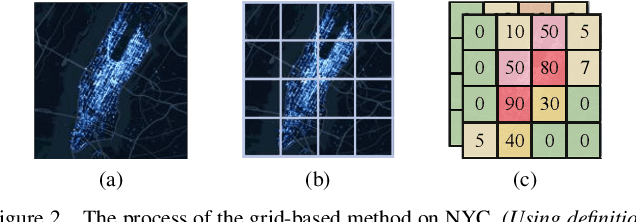

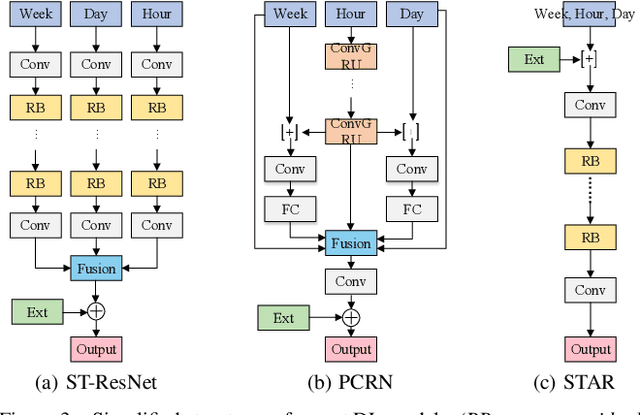

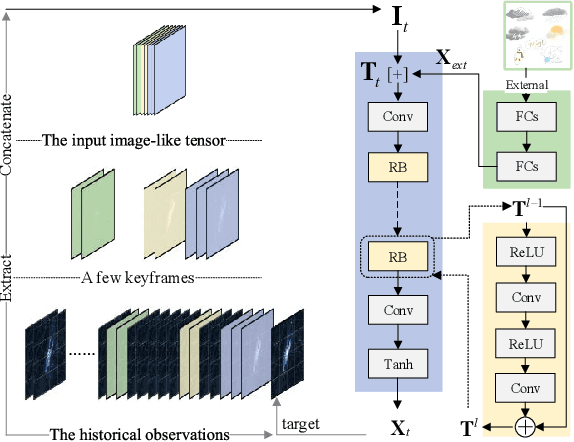

STAR: A Concise Deep Learning Framework for Citywide Human Mobility Prediction

May 16, 2019

Abstract: Human mobility forecasting in a city is of utmost importance to transportation and public safety, but with ongoing urbanization and the generation of big data, intensive computation and the determination of mobility patterns have become challenging. This study focuses on how to improve the accuracy and efficiency of predicting citywide human mobility via a simpler solution. A spatio-temporal mobility event prediction framework based on a single fully-convolutional residual network (STAR) is proposed. STAR is a simple, general, and effective method for learning a single tensor representing the mobility event. Residual learning is utilized for training the deep network to derive the detailed result for scenarios of citywide prediction. Extensive benchmark evaluation results on real-world data demonstrate that STAR outperforms state-of-the-art approaches in single- and multi-step prediction while utilizing fewer parameters and achieving higher efficiency.
