Gongze Cao

Sparsely Grouped Multi-task Generative Adversarial Networks for Facial Attribute Manipulation

Oct 19, 2018

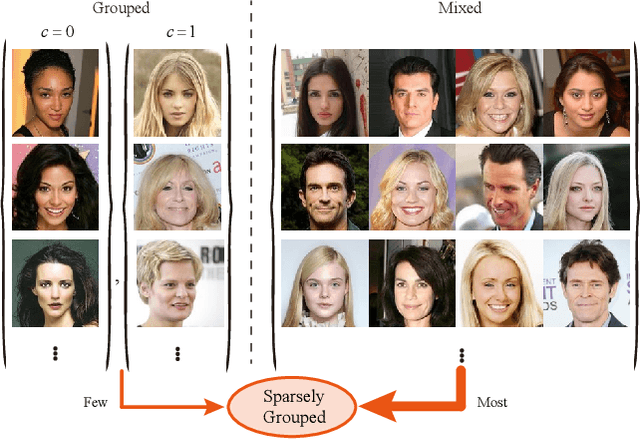

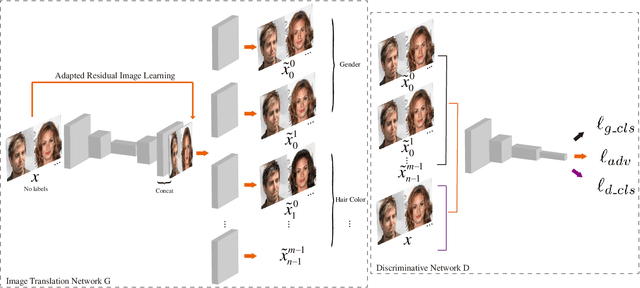

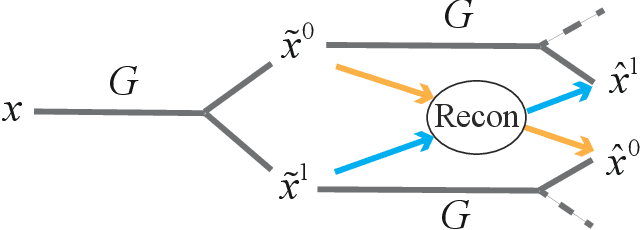

Abstract: Recently, Image-to-Image Translation (IIT) has made great progress in image style transfer and semantic context manipulation. However, existing approaches require exhaustively labelled training data, which is labor-intensive to produce, difficult to scale up, and hard to adapt to a new domain. To overcome this key limitation, we propose Sparsely Grouped Generative Adversarial Networks (SG-GAN), a novel approach that can translate images in sparsely grouped datasets where only a few training samples are labelled. Using a one-input multi-output architecture, SG-GAN is well suited to multi-task learning and sparsely grouped learning tasks. The new model can translate images among multiple groups using only a single trained model. To validate the advantages of the new model experimentally, we apply the proposed method to a series of attribute manipulation tasks for facial images as a case study. Experimental results show that SG-GAN achieves results comparable with state-of-the-art methods on adequately labelled datasets while attaining superior image translation quality on sparsely grouped datasets.

TripletGAN: Training Generative Model with Triplet Loss

Nov 14, 2017

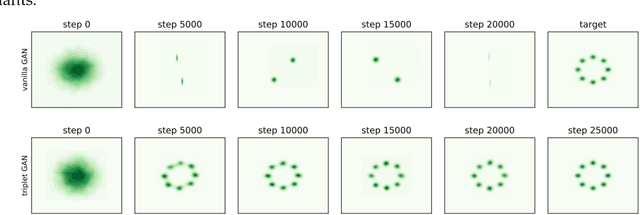

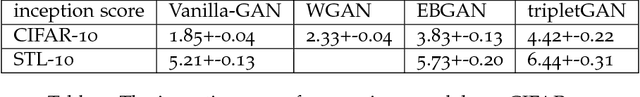

Abstract: As an effective approach to metric learning, triplet loss has been widely used in many deep learning tasks, including face recognition and person re-identification (person-ReID), leading to many state-of-the-art results. The main innovation of triplet loss is to use a feature map in place of softmax in the classification task. Inspired by this concept, we propose a new adversarial modeling method that substitutes the discriminator's classification loss with triplet loss. A theoretical proof based on the integral probability metric (IPM) demonstrates that, under some conditions, this setting helps the generator converge to the given distribution. Moreover, since triplet loss requires the generator to maximize distance within a class, we show through both theory and experiment that TripletGAN also helps prevent mode collapse.
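
For readers unfamiliar with the triplet loss named in the abstract above, the following is a minimal sketch of the standard margin-based formulation used in metric learning. It is not the discriminator loss from the paper, whose exact form the abstract does not give; the function names, the Euclidean distance, and the default `margin` are assumptions chosen for illustration.

```python
import math


def euclidean(u, v):
    """Euclidean distance between two equal-length feature vectors."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))


def triplet_loss(anchor, positive, negative, margin=1.0):
    """Standard margin-based triplet loss (illustrative, not the paper's loss).

    The loss is zero once the anchor is closer to the positive than to the
    negative by at least `margin`; otherwise it grows linearly with the gap.
    """
    d_pos = euclidean(anchor, positive)  # distance to same-class sample
    d_neg = euclidean(anchor, negative)  # distance to other-class sample
    return max(0.0, d_pos - d_neg + margin)


# Hypothetical 2-D embeddings for a quick check.
well_separated = triplet_loss([0.0, 0.0], [0.0, 1.0], [3.0, 4.0])
violating = triplet_loss([0.0, 0.0], [3.0, 4.0], [0.0, 1.0])
```

In the first call the negative is already far enough from the anchor, so the loss vanishes; in the second the roles are swapped and the loss is positive.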
