Germán Mora Martín

Video super-resolution for single-photon LIDAR

Oct 19, 2022

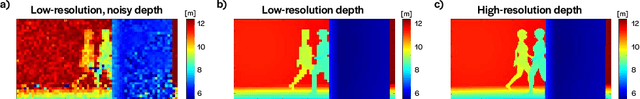

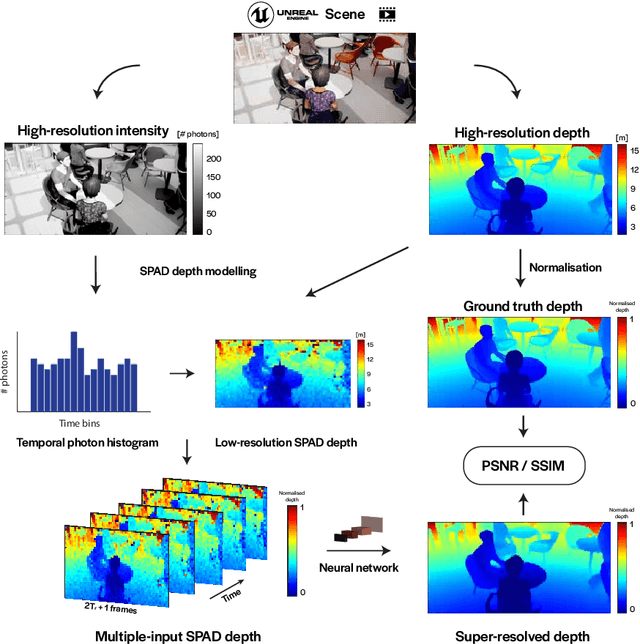

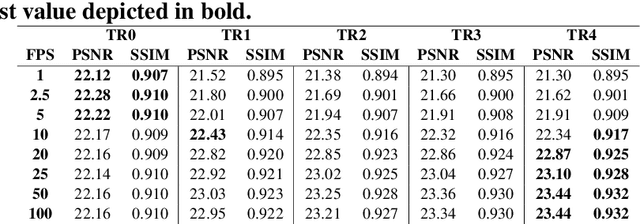

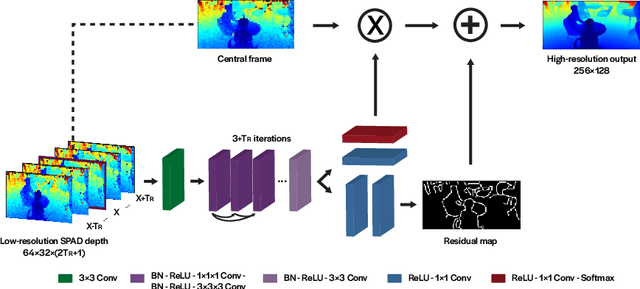

Abstract: 3D Time-of-Flight (ToF) image sensors are used widely in applications such as self-driving cars, Augmented Reality (AR) and robotics. When implemented with Single-Photon Avalanche Diodes (SPADs), compact, array-format sensors can be made that offer accurate depth maps over long distances, without the need for mechanical scanning. However, array sizes tend to be small, leading to low lateral resolution, which, combined with low Signal-to-Noise Ratio (SNR) levels under high ambient illumination, may lead to difficulties in scene interpretation. In this paper, we use synthetic depth sequences to train a 3D Convolutional Neural Network (CNN) for denoising and upscaling (×4) depth data. Experimental results, based on synthetic as well as real ToF data, are used to demonstrate the effectiveness of the scheme. With GPU acceleration, frames are processed at >30 frames per second, making the approach suitable for low-latency imaging, as required for obstacle avoidance.

A direct time-of-flight image sensor with in-pixel surface detection and dynamic vision

Sep 23, 2022

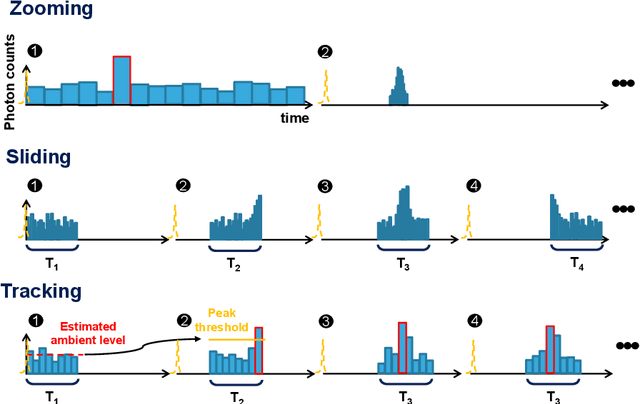

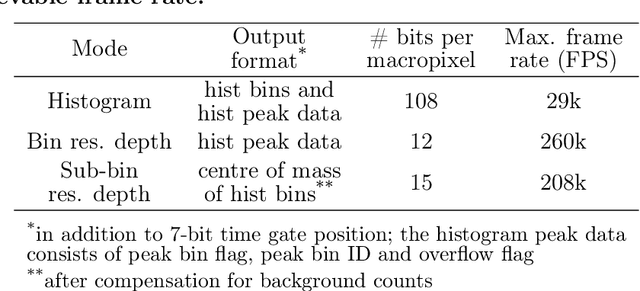

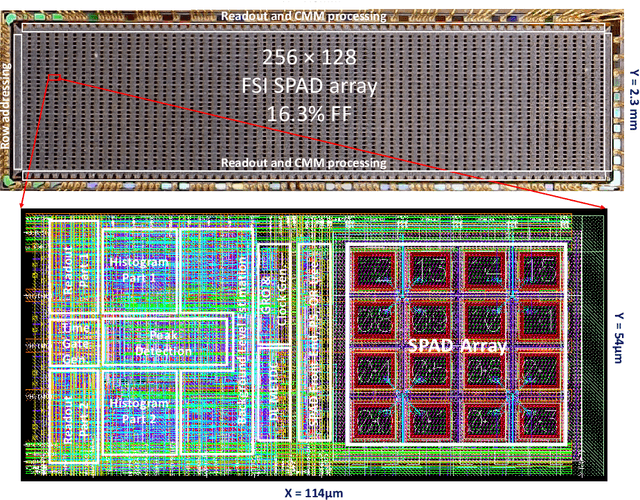

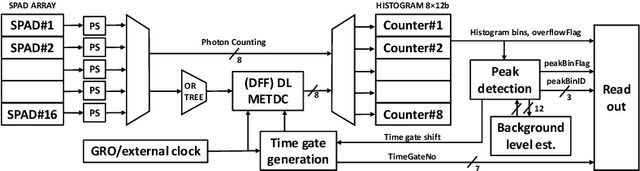

Abstract: 3D flash LIDAR is an alternative to traditional scanning LIDAR systems, promising precise depth imaging in a compact form factor, free of moving parts, for applications such as self-driving cars, robotics and augmented reality (AR). Typically implemented using single-photon, direct time-of-flight (dToF) receivers in image sensor format, these devices can be hindered by the large number of photon events that need to be processed and compressed in outdoor scenarios, limiting frame rates and scalability to larger arrays. Here we present a 64x32 pixel (256x128 SPAD) dToF imager that overcomes these limitations by using pixels with embedded histogramming, which lock onto and track the return signal. This reduces the size of output data frames considerably, enabling maximum frame rates in the 10 kFPS range, or 100 kFPS for direct depth readings. The sensor offers selective readout of pixels detecting surfaces, or those sensing motion, leading to reduced power consumption and off-chip processing requirements. We demonstrate the application of the sensor in mid-range LIDAR.
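
As a rough illustration of the dToF principle the abstract refers to, the sketch below takes a photon-arrival histogram, finds its peak bin, and converts that bin to a distance using d = c·t/2. It is not the sensor's in-pixel logic: the function names, the 1 ns bin width, and the histogram values are all assumptions made up for this example.

```python
# Illustrative sketch only: names, bin width, and counts are assumptions,
# not taken from the paper or the sensor.
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def peak_bin(histogram):
    """Return the index of the bin holding the most photon counts."""
    return max(range(len(histogram)), key=lambda i: histogram[i])


def bin_to_depth_m(bin_index, bin_width_s):
    """Convert a time bin to distance, halving for the round trip."""
    time_of_flight_s = (bin_index + 0.5) * bin_width_s  # use the bin centre
    return SPEED_OF_LIGHT_M_PER_S * time_of_flight_s / 2.0


# Hypothetical 16-bin histogram: ambient background plus a return in bin 9.
histogram = [3, 4, 2, 5, 3, 4, 3, 2, 4, 41, 6, 3, 4, 2, 3, 4]
BIN_WIDTH_S = 1e-9  # assumed 1 ns bins

index = peak_bin(histogram)
depth_m = bin_to_depth_m(index, BIN_WIDTH_S)
print(f"peak bin {index}, depth ~{depth_m:.2f} m")
```

Reading out only a peak position or depth value per pixel, rather than a full histogram, is one way to see why the output frames described above can be much smaller.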

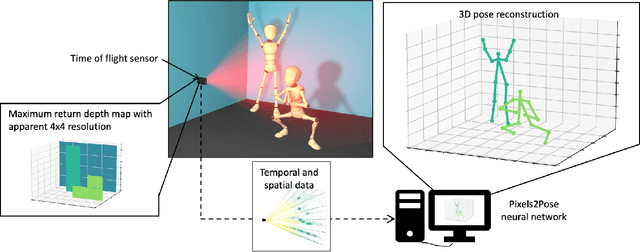

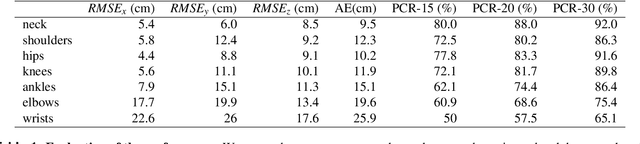

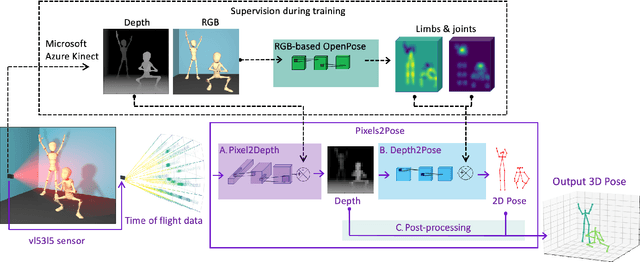

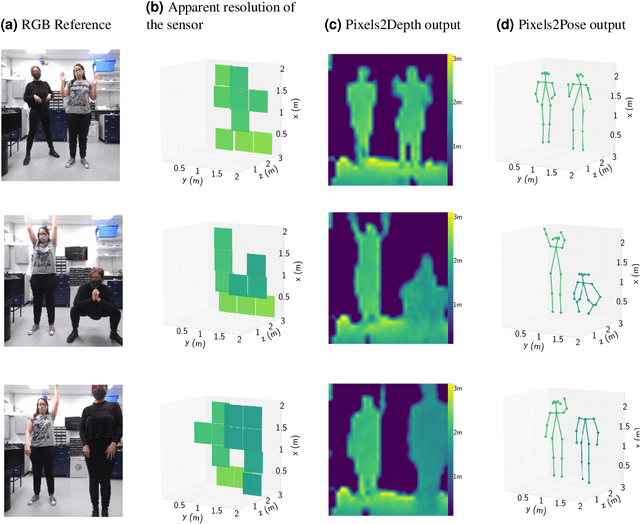

Real-time, low-cost multi-person 3D pose estimation

Oct 11, 2021

Abstract: The process of tracking human anatomy in computer vision is referred to as pose estimation, and it is used in fields ranging from gaming to surveillance. Three-dimensional pose estimation traditionally requires advanced equipment, such as multiple linked intensity cameras or high-resolution time-of-flight cameras to produce depth images. However, there are applications, e.g. consumer electronics, where significant constraints are placed on the size, power consumption, weight and cost of the usable technology. Here, we demonstrate that computational imaging methods can achieve accurate pose estimation and overcome the apparent limitations of time-of-flight sensors designed for much simpler tasks. The sensor we use is already widely integrated in consumer-grade mobile devices, and despite its low spatial resolution, only 4$\times$4 pixels, our proposed Pixels2Pose system transforms its data into accurate depth maps and 3D pose data of multiple people up to a distance of 3 m from the sensor. We are able to generate depth maps at a resolution of 32$\times$32 and 3D localization of body parts with an error of only $\approx$10 cm at a frame rate of 7 fps. This work opens up promising real-life applications in scenarios that were previously restricted by the advanced hardware requirements and cost of time-of-flight technology.
