Video super-resolution for single-photon LIDAR


Oct 19, 2022

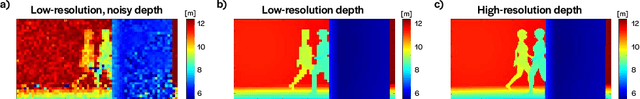

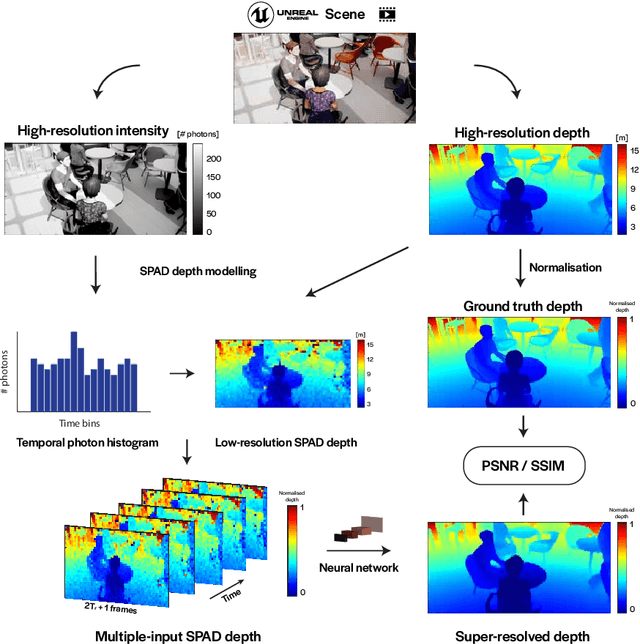

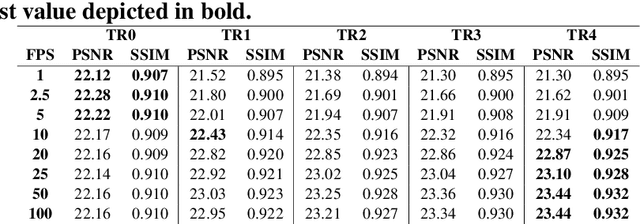

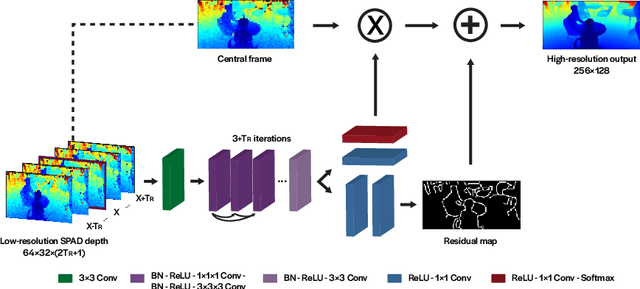

3D Time-of-Flight (ToF) image sensors are widely used in applications such as self-driving cars, Augmented Reality (AR), and robotics. When implemented with Single-Photon Avalanche Diodes (SPADs), compact, array-format sensors can be made that offer accurate depth maps over long distances without the need for mechanical scanning. However, array sizes tend to be small, leading to low lateral resolution, which, combined with low Signal-to-Noise Ratio (SNR) levels under high ambient illumination, may make scene interpretation difficult. In this paper, we use synthetic depth sequences to train a 3D Convolutional Neural Network (CNN) for denoising and upscaling (×4) depth data. Experimental results, based on synthetic as well as real ToF data, demonstrate the effectiveness of the scheme. With GPU acceleration, frames are processed at >30 frames per second, making the approach suitable for low-latency imaging, as required for obstacle avoidance.
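To make the task concrete, the sketch below shows the input/output contract the abstract describes: a short sequence of noisy low-resolution depth frames goes in, and a single denoised frame at four times the lateral resolution comes out. It is a naive, non-learned baseline (per-pixel temporal median followed by nearest-neighbour upscaling), not the trained 3D CNN from the paper. The function names, the 2×2 frame size, and the depth values are illustrative assumptions.

```python
from statistics import median


def temporal_median(frames):
    """Per-pixel median over a list of equally sized 2D depth frames.

    Stand-in for the denoising step; the paper uses a learned 3D CNN instead.
    """
    rows, cols = len(frames[0]), len(frames[0][0])
    return [[median(f[r][c] for f in frames) for c in range(cols)]
            for r in range(rows)]


def upscale_nearest(frame, factor=4):
    """Repeat every pixel factor x factor times (nearest-neighbour upscaling)."""
    out = []
    for row in frame:
        wide = [v for v in row for _ in range(factor)]
        out.extend(list(wide) for _ in range(factor))
    return out


def frame_budget_ms(fps):
    """Maximum processing time per frame, in milliseconds, at a given frame rate."""
    return 1000.0 / fps


# Three hypothetical 2x2 depth frames in metres; frame 2 has one outlier pixel.
frames = [
    [[1.0, 2.0], [3.0, 4.0]],
    [[1.0, 9.0], [3.0, 4.0]],
    [[1.0, 2.0], [3.0, 4.0]],
]
denoised = temporal_median(frames)             # outlier suppressed
upscaled = upscale_nearest(denoised, factor=4)  # 2x2 -> 8x8
```

The `frame_budget_ms` helper spells out the latency claim: processing at more than 30 frames per second means each frame must be handled in under roughly 33.3 ms.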
