Francisco Casacuberta

DEEP: Docker-based Execution and Evaluation Platform

Feb 23, 2026

Abstract:Comparative evaluation of several systems is a recurrent task in research. It is a key step before deciding which system to use for our work, or, once our research has been conducted, to demonstrate the potential of the resulting model. Furthermore, it is the main task in the evaluation of competitive, public challenges. Our proposed software (DEEP) automates both the execution and scoring of machine translation and optical character recognition models. Furthermore, it is easily extensible to other tasks. DEEP is prepared to receive dockerized systems, run them (extracting information at the same time), and assess their hypotheses against references. With this approach, evaluators can achieve a better understanding of the performance of each model. Moreover, the software uses a clustering algorithm based on a statistical analysis of the significance of the results yielded by each model, according to the evaluation metrics. As a result, evaluators are able to identify clusters of performance among the swarm of proposals and have a better understanding of the significance of their differences. Additionally, we offer a visualization web-app to ensure that the results can be adequately understood and interpreted. Finally, we present an exemplary use case of DEEP.
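The abstract does not say which significance test or clustering rule DEEP uses, so the following is only a minimal sketch of the general idea, assuming a one-sided paired bootstrap over per-segment scores and a greedy grouping of ranked systems. The function names `paired_bootstrap_p` and `cluster_systems`, the `alpha` threshold, and the sample scores are all hypothetical and are not taken from DEEP.

```python
import random
from statistics import mean


def paired_bootstrap_p(scores_a, scores_b, n_resamples=1000, seed=0):
    """Estimate a one-sided p-value for 'system A scores higher than system B'.

    Both lists hold per-segment scores for the same test segments.
    Returns the fraction of resamples in which A does not beat B.
    """
    if len(scores_a) != len(scores_b):
        raise ValueError("score lists must cover the same segments")
    rng = random.Random(seed)  # seeded so the result is reproducible
    n = len(scores_a)
    not_better = 0
    for _ in range(n_resamples):
        # Resample segment indices with replacement, keeping pairs aligned.
        idx = [rng.randrange(n) for _ in range(n)]
        diff = sum(scores_a[i] - scores_b[i] for i in idx)
        if diff <= 0:
            not_better += 1
    return not_better / n_resamples


def cluster_systems(system_scores, alpha=0.05):
    """Greedily group systems whose scores are not significantly different.

    Systems are ranked by mean score; each one joins the current cluster
    unless the cluster's best system beats it significantly (p < alpha).
    """
    ranked = sorted(system_scores, key=lambda s: mean(system_scores[s]), reverse=True)
    clusters = []
    for name in ranked:
        if clusters:
            leader = clusters[-1][0]
            p = paired_bootstrap_p(system_scores[leader], system_scores[name])
            if p >= alpha:
                clusters[-1].append(name)
                continue
        clusters.append([name])
    return clusters


# Hypothetical per-segment scores for three submitted systems.
example_scores = {
    "system_a": [0.90, 0.80, 0.85, 0.95],
    "system_b": [0.90, 0.80, 0.85, 0.95],
    "system_c": [0.10, 0.20, 0.15, 0.05],
}
example_clusters = cluster_systems(example_scores)
```

In this toy input the first two systems are identical and end up in the same cluster, while the third is clearly worse and forms its own. A real evaluation would need many more segments per system for the test to be meaningful.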

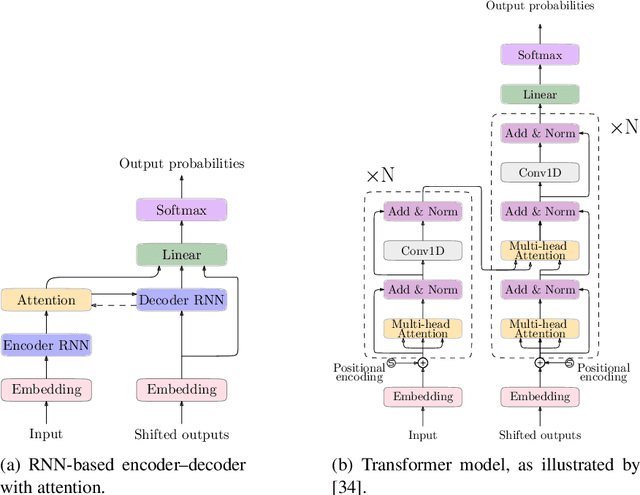

Segment-Based Interactive Machine Translation for Pre-trained Models

Jul 09, 2024

Abstract:Pre-trained large language models (LLMs) are starting to be widely used in many applications. In this work, we explore the use of these models in interactive machine translation (IMT) environments. In particular, we have chosen mBART (multilingual Bidirectional and Auto-Regressive Transformer) and mT5 (multilingual Text-to-Text Transfer Transformer) as the LLMs to perform our experiments. The system generates perfect translations interactively using the feedback provided by the user at each iteration. The Neural Machine Translation (NMT) model generates a preliminary hypothesis with the feedback, and the user validates new correct segments and performs a word correction, repeating the process until the sentence is correctly translated. We compared the performance of mBART, mT5, and a state-of-the-art (SoTA) machine translation model on a benchmark dataset with respect to user effort, measured by Word Stroke Ratio (WSR), Key Stroke Ratio (KSR), and Mouse Action Ratio (MAR). The experimental results indicate that mBART performed comparably with SoTA models, suggesting that it is a viable option for this field of IMT. The implications of this finding extend to the development of new machine translation models for interactive environments, as it indicates that some novel pre-trained models exhibit SoTA performance in this domain, highlighting the potential benefits of adapting these models to specific needs.
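The abstract names the effort metrics without defining them. As a rough illustration, the sketch below assumes the definitions commonly used in the IMT literature: word strokes normalized by the number of reference words (WSR), and key strokes and mouse actions each normalized by the number of reference characters (KSR, MAR). The `SessionCounts` class, the `effort_ratios` function, and the sample counts are hypothetical and may differ from what the paper actually computes.

```python
from dataclasses import dataclass


@dataclass
class SessionCounts:
    """Hypothetical tallies collected over a simulated IMT session."""
    word_strokes: int      # words the user had to type to correct hypotheses
    key_strokes: int       # individual keys pressed by the user
    mouse_actions: int     # clicks or cursor placements made by the user
    reference_words: int   # total words in the final translations
    reference_chars: int   # total characters in the final translations


def effort_ratios(counts: SessionCounts) -> dict:
    """Return WSR, KSR and MAR under the assumed normalizations."""
    if counts.reference_words <= 0 or counts.reference_chars <= 0:
        raise ValueError("reference lengths must be positive")
    return {
        "WSR": counts.word_strokes / counts.reference_words,
        "KSR": counts.key_strokes / counts.reference_chars,
        "MAR": counts.mouse_actions / counts.reference_chars,
    }


# Made-up counts for a single short sentence.
example = effort_ratios(
    SessionCounts(
        word_strokes=2,
        key_strokes=10,
        mouse_actions=4,
        reference_words=8,
        reference_chars=40,
    )
)
```

Lower values mean less user effort, which is why these ratios are used to compare models in an interactive setting.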

AutoNMT: A Framework to Streamline the Research of Seq2Seq Models

Feb 09, 2023

Abstract:We present AutoNMT, a framework to streamline the research of seq-to-seq models by automating the data pipeline (i.e., file management, data preprocessing, and exploratory analysis), automating experimentation in a toolkit-agnostic manner, which allows users to use either their own models or existing seq-to-seq toolkits such as Fairseq or OpenNMT, and finally, automating the report generation (plots and summaries). Furthermore, this library comes with its own seq-to-seq toolkit so that users can easily customize it for non-standard tasks.

Findings of the Covid-19 MLIA Machine Translation Task

Nov 14, 2022

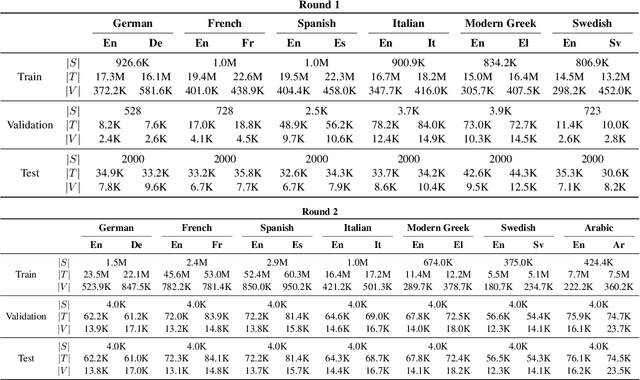

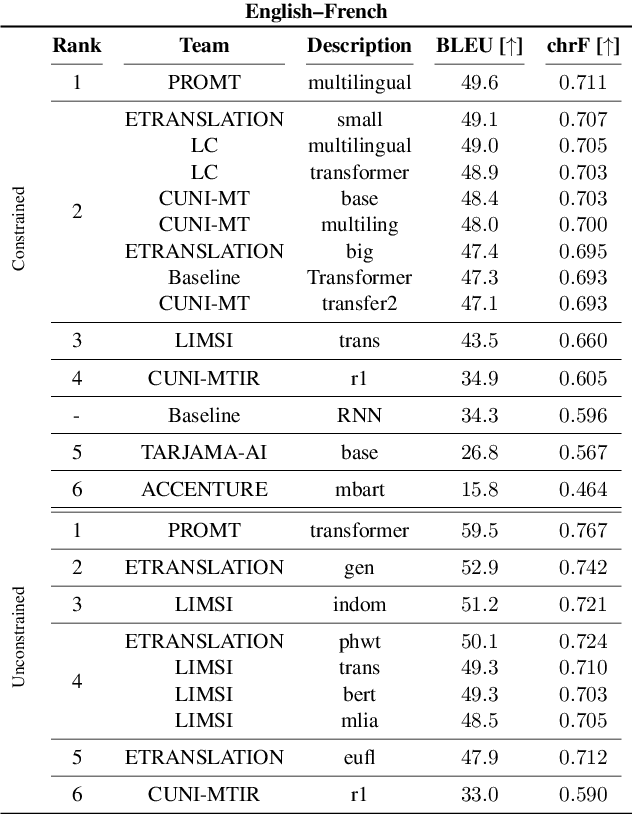

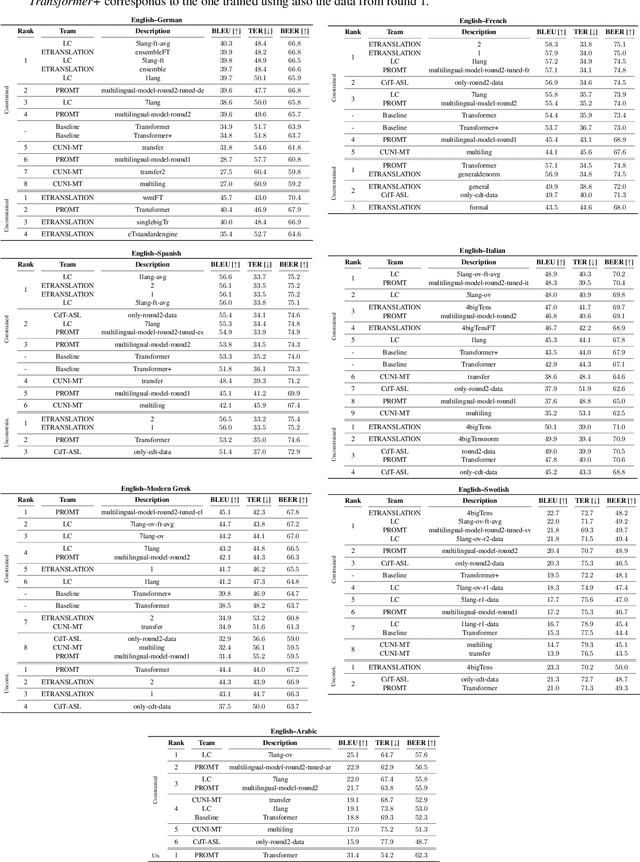

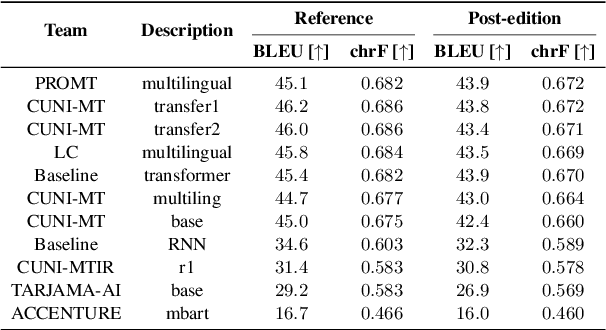

Abstract:This work presents the results of the machine translation (MT) task from the Covid-19 MLIA @ Eval initiative, a community effort to improve the generation of MT systems focused on the current Covid-19 crisis. Nine teams took part in this event, which was divided into two rounds and involved seven different language pairs. Two different scenarios were considered: one in which only the provided data was allowed, and a second one in which the use of external resources was allowed. Overall, the best approaches were based on multilingual models and transfer learning, with an emphasis on the importance of applying a cleaning process to the training data.

Two Demonstrations of the Machine Translation Applications to Historical Documents

Feb 02, 2021

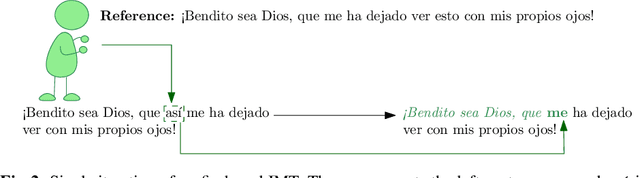

Abstract:We present our demonstration of two machine translation applications to historical documents. The first application consists of generating a new version of a historical document, written in the modern version of its original language. The second application is limited to a document's orthography. It adapts the document's spelling to modern standards in order to achieve orthographic consistency and account for the lack of spelling conventions. We followed an interactive, adaptive framework that allows the user to introduce corrections to the system's hypothesis. The system reacts to these corrections by generating a new hypothesis that takes them into account. Once the user is satisfied with the system's hypothesis and validates it, the system adapts its model following an online learning strategy. This system is implemented following a client-server architecture. We developed a website which communicates with the neural models. All code is open-source and publicly available. The demonstration is hosted at http://demosmt.prhlt.upv.es/mthd/.

An Interactive Machine Translation Framework for Modernizing Historical Documents

Oct 08, 2019

Abstract:Due to the nature of human language, historical documents are hard for contemporary readers to comprehend. This limits their accessibility to scholars specialized in the time period in which the documents were written. Modernization aims at breaking this language barrier by generating a new version of a historical document, written in the modern version of the document's original language. However, while it is able to increase the document's comprehension, modernization is still far from producing an error-free version. In this work, we propose a collaborative framework in which a scholar can work together with the machine to generate the new version. We tested our approach in a simulated environment, achieving significant reductions of the human effort needed to produce the modernized version of the document.

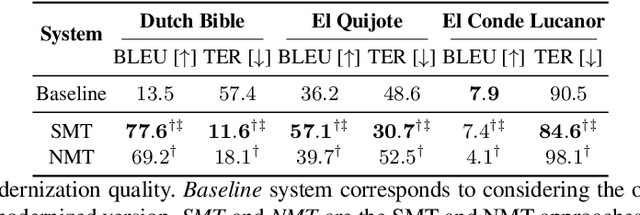

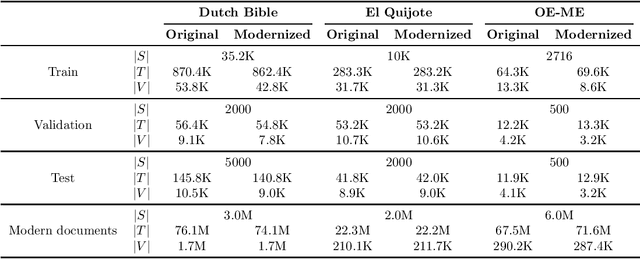

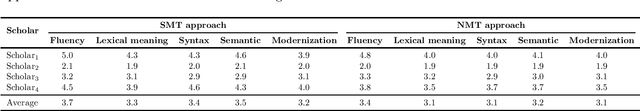

Modernizing Historical Documents: a User Study

Jul 01, 2019

Abstract:Accessibility to historical documents is mostly limited to scholars. This is due to the language barrier inherent in human language and the linguistic properties of these documents. Given a historical document, modernization aims to generate a new version of it, written in the modern version of the document's language. Its goal is to tackle the language barrier, decreasing the comprehension difficulty and making historical documents accessible to a broader audience. In this work, we proposed a new neural machine translation approach that profits from modern documents to enrich its systems. We tested this approach with both automatic and human evaluation, and conducted a user study. Results showed that modernization is successfully reaching its goal, although it still has room for improvement.

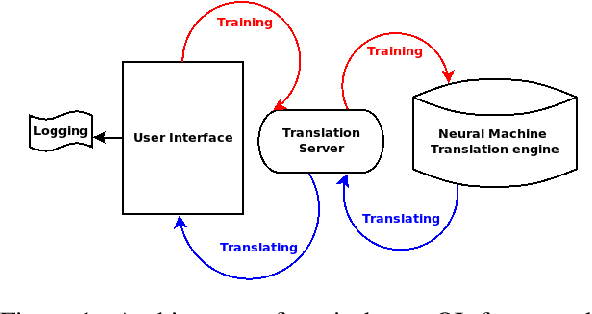

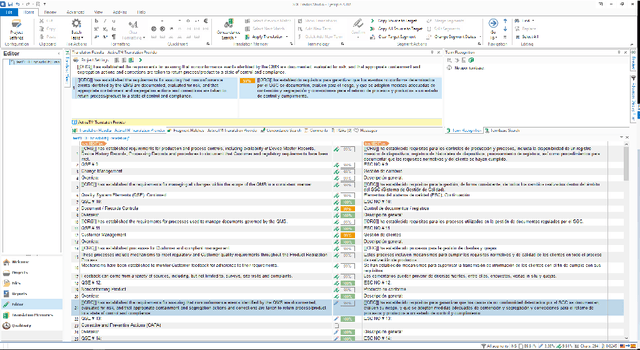

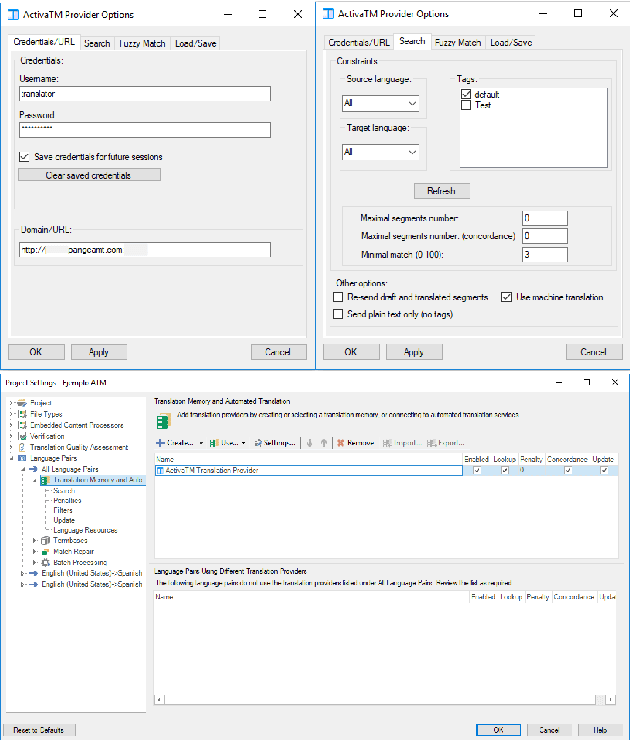

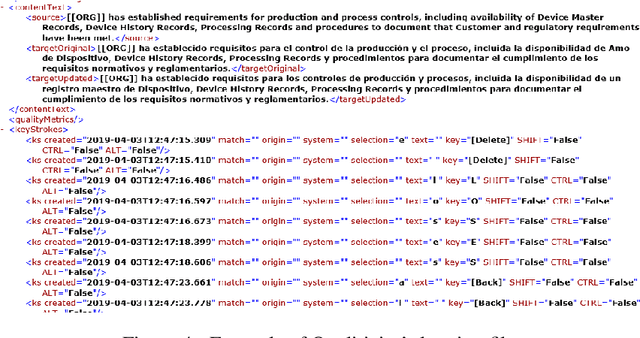

Demonstration of a Neural Machine Translation System with Online Learning for Translators

Jun 21, 2019

Abstract:We introduce a demonstration of our system, which implements online learning for neural machine translation in a production environment. These techniques allow the system to continuously learn from the corrections provided by the translators. We implemented an end-to-end platform integrating our machine translation servers with one of the most common user interfaces for professional translators: SDL Trados Studio. Our objective was to save post-editing effort as the machine is continuously learning from human choices and adapting the models to a specific domain or user style.

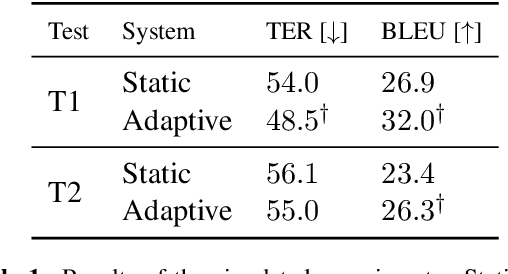

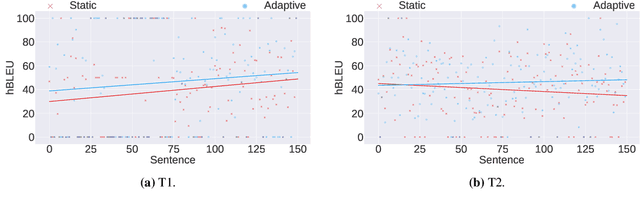

Incremental Adaptation of NMT for Professional Post-editors: A User Study

Jun 21, 2019

Abstract:A common use of machine translation in the industry is providing initial translation hypotheses, which are later supervised and post-edited by a human expert. During this revision process, new bilingual data are continuously generated. Machine translation systems can benefit from these new data, incrementally updating the underlying models under an online learning paradigm. We conducted a user study on this scenario, for a neural machine translation system. The experimentation was carried out by professional translators with vast experience in machine translation post-editing. The results showed a reduction in the amount of human effort needed when post-editing the outputs of the system, improvements in the translation quality, and a positive perception of the adaptive system by the users.

Interactive-predictive neural multimodal systems

May 30, 2019

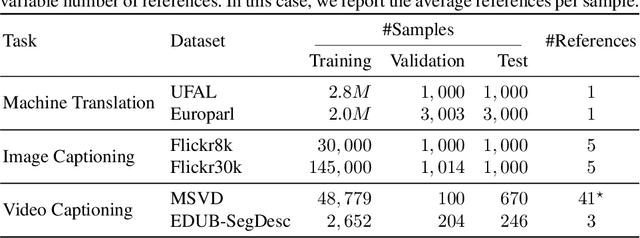

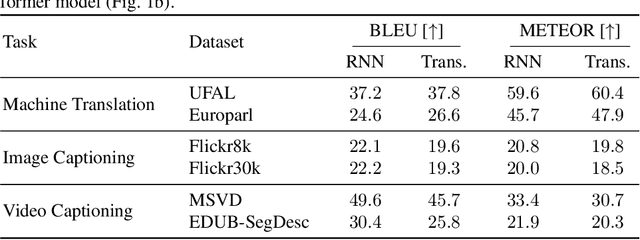

Abstract:Despite the advances achieved by neural models in sequence-to-sequence learning, exploited in a variety of tasks, they still make errors. In many use cases, these are corrected by a human expert in a posterior revision process. The interactive-predictive framework aims to minimize the human effort spent on this process by considering partial corrections for iteratively refining the hypothesis. In this work, we generalize the interactive-predictive approach, typically applied in the machine translation field, to tackle other multimodal problems, namely image and video captioning. We study the application of this framework to multimodal neural sequence-to-sequence models. We show that, following this framework, we approximately halve the effort spent on correcting the outputs generated by the automatic systems. Moreover, we deploy our systems in a publicly accessible demonstration, which allows a better understanding of the behavior of the interactive-predictive framework.
