Filipe R. Cordeiro

ANNE: Adaptive Nearest Neighbors and Eigenvector-based Sample Selection for Robust Learning with Noisy Labels

Nov 03, 2024

Abstract:An important stage of most state-of-the-art (SOTA) noisy-label learning methods consists of a sample selection procedure that classifies samples from the noisy-label training set into noisy-label or clean-label subsets. The process of sample selection typically follows one of two approaches: loss-based sampling, where high-loss samples are considered to have noisy labels, or feature-based sampling, where samples from the same class tend to cluster together in the feature space and noisy-label samples are identified as anomalies within those clusters. Empirically, loss-based sampling is robust to a wide range of noise rates, while feature-based sampling tends to work effectively in particular scenarios, e.g., the filtering of noisy instances via their eigenvectors (FINE) sampling exhibits greater robustness in scenarios with low noise rates, and the K nearest neighbor (KNN) sampling better mitigates high noise-rate problems. This paper introduces the Adaptive Nearest Neighbors and Eigenvector-based (ANNE) sample selection methodology, a novel approach that integrates loss-based sampling with the feature-based sampling methods FINE and Adaptive KNN to optimize performance across a wide range of noise rate scenarios. ANNE achieves this integration by first partitioning the training set into high-loss and low-loss sub-groups using loss-based sampling. Subsequently, within the low-loss subset, sample selection is performed using FINE, while the high-loss subset employs Adaptive KNN for effective sample selection. We integrate ANNE into the SOTA noisy-label learning method SSR+, and test it on CIFAR-10/-100 (with symmetric, asymmetric and instance-dependent noise), WebVision and ANIMAL-10, where our method shows better accuracy than the SOTA in most experiments, with a competitive training time.
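The two-branch routing described in the ANNE abstract can be illustrated with a minimal sketch. This is not the authors' implementation: the function names, the fixed loss threshold, and the pluggable selector callables are all assumptions made for illustration, and the selectors here are simple stand-ins rather than FINE or Adaptive KNN.

```python
from typing import Callable, List, Sequence, Tuple

# A selector takes candidate sample indices and returns those it deems clean.
Selector = Callable[[List[int]], List[int]]


def split_by_loss(losses: Sequence[float], threshold: float) -> Tuple[List[int], List[int]]:
    """Partition sample indices into (low_loss, high_loss) by a threshold.

    Assumption: a fixed threshold is used here purely for illustration.
    """
    low = [i for i, loss in enumerate(losses) if loss <= threshold]
    high = [i for i, loss in enumerate(losses) if loss > threshold]
    return low, high


def route_selection(
    losses: Sequence[float],
    threshold: float,
    low_selector: Selector,
    high_selector: Selector,
) -> List[int]:
    """Apply one selector to the low-loss subset and another to the high-loss subset."""
    low, high = split_by_loss(losses, threshold)
    return sorted(low_selector(low) + high_selector(high))


# Hypothetical stand-in selectors (NOT FINE or Adaptive KNN).
keep_all: Selector = lambda idx: list(idx)
keep_none: Selector = lambda idx: []

example_losses = [0.1, 2.3, 0.4, 1.9]
selected = route_selection(example_losses, 1.0, keep_all, keep_none)
```

In a fuller version, `low_selector` would be replaced by an eigenvector-based filter and `high_selector` by a nearest-neighbor filter operating on feature embeddings.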

Recognizing Handwritten Mathematical Expressions of Vertical Addition and Subtraction

Aug 10, 2023

Abstract:Handwritten Mathematical Expression Recognition (HMER) is a challenging task with many educational applications. Recent methods for HMER have been developed for complex mathematical expressions in standard horizontal format. However, solutions for elementary mathematical expressions, such as vertical addition and subtraction, have not been explored in the literature. This work proposes a new handwritten elementary mathematical expression dataset composed of addition and subtraction expressions in a vertical format. We also extended the MNIST dataset to generate artificial images with this structure. Furthermore, we proposed a solution for offline HMER, able to recognize vertical addition and subtraction expressions. Our analysis evaluated the object detection algorithms YOLO v7, YOLO v8, YOLO-NAS, NanoDet and FCOS for identifying the mathematical symbols. We also proposed a transcription method to map the bounding boxes from the object detection stage to a mathematical expression in the LaTeX markup sequence. Results show that our approach is efficient, achieving a high expression recognition rate. The code and dataset are available at https://github.com/Danielgol/HME-VAS

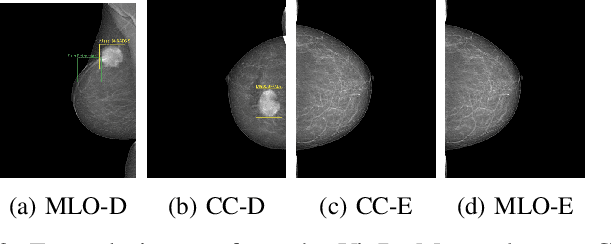

Improving Mass Detection in Mammography Images: A Study of Weakly Supervised Learning and Class Activation Map Methods

Aug 07, 2023

Abstract:In recent years, weakly supervised models have aided in mass detection using mammography images, decreasing the need for pixel-level annotations. However, most existing models in the literature rely on Class Activation Maps (CAM) as the activation method, overlooking the potential benefits of exploring other activation techniques. This work presents a study that explores and compares different activation maps in conjunction with state-of-the-art methods for weakly supervised training in mammography images. Specifically, we investigate CAM, GradCAM, GradCAM++, XGradCAM, and LayerCAM methods within the framework of the GMIC model for mass detection in mammography images. The evaluation is conducted on the VinDr-Mammo dataset, utilizing the metrics Accuracy, True Positive Rate (TPR), False Negative Rate (FNR), and False Positives Per Image (FPPI). Results show that using different activation map strategies during the training and test stages improves the model. With this strategy, we improve the results of the GMIC method, decreasing the FPPI value and increasing TPR.
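For readers unfamiliar with the detection metrics named above, the following sketch shows their standard definitions computed from raw counts. The function name and the count-based interface are assumptions for illustration; how detections are matched to ground-truth masses is not specified in the abstract and is left out here.

```python
from typing import Dict


def detection_metrics(tp: int, fn: int, fp: int, num_images: int) -> Dict[str, float]:
    """Compute TPR, FNR and FPPI from detection counts.

    tp: ground-truth masses that were detected
    fn: ground-truth masses that were missed
    fp: detections that match no ground-truth mass
    num_images: number of images evaluated
    """
    positives = tp + fn
    tpr = tp / positives if positives else 0.0
    fnr = fn / positives if positives else 0.0
    fppi = fp / num_images if num_images else 0.0
    return {"TPR": tpr, "FNR": fnr, "FPPI": fppi}


# Made-up counts: 8 masses found, 2 missed, 5 false alarms over 10 images.
example = detection_metrics(tp=8, fn=2, fp=5, num_images=10)
```

TPR and FNR always sum to one when there is at least one ground-truth mass, so a method that raises TPR lowers FNR by the same amount; FPPI is independent of both.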

A Study on the Impact of Data Augmentation for Training Convolutional Neural Networks in the Presence of Noisy Labels

Aug 23, 2022

Abstract:Label noise is common in large real-world datasets, and its presence harms the training process of deep neural networks. Although several works have focused on the training strategies to address this problem, there are few studies that evaluate the impact of data augmentation as a design choice for training deep neural networks. In this work, we analyse the model robustness when using different data augmentations and their improvement on the training with the presence of noisy labels. We evaluate state-of-the-art and classical data augmentation strategies with different levels of synthetic noise for the datasets MNIST, CIFAR-10, CIFAR-100, and the real-world dataset Clothing1M. We evaluate the methods using the accuracy metric. Results show that the appropriate selection of data augmentation can drastically improve the model robustness to label noise, with a relative increase of up to 177.84% in best test accuracy compared to the baseline with no augmentation, and an increase of up to 6% in absolute value with the state-of-the-art DivideMix training strategy.
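As a rough illustration of two ideas in this abstract, the sketch below injects synthetic symmetric label noise and computes a relative accuracy gain. It is a sketch under stated assumptions, not the paper's protocol: the function names are hypothetical, and this variant of symmetric noise always flips to a different class, whereas some definitions allow the original class to be redrawn.

```python
import random
from typing import List, Sequence


def add_symmetric_noise(
    labels: Sequence[int], num_classes: int, noise_rate: float, seed: int = 0
) -> List[int]:
    """Flip each label with probability noise_rate to a uniformly chosen other class.

    Assumption: the replacement class always differs from the original label.
    Requires num_classes >= 2.
    """
    rng = random.Random(seed)
    noisy = []
    for y in labels:
        if rng.random() < noise_rate:
            candidates = [c for c in range(num_classes) if c != y]
            noisy.append(rng.choice(candidates))
        else:
            noisy.append(y)
    return noisy


def relative_gain(baseline_acc: float, improved_acc: float) -> float:
    """Relative improvement in percent, as opposed to the absolute difference."""
    return (improved_acc - baseline_acc) / baseline_acc * 100.0


clean_labels = [0, 1, 2, 3, 4] * 4
noisy_labels = add_symmetric_noise(clean_labels, num_classes=5, noise_rate=0.4)
```

The distinction between relative and absolute gains matters when baselines are low: going from 20% to 30% accuracy is a 10-point absolute gain but a 50% relative gain.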

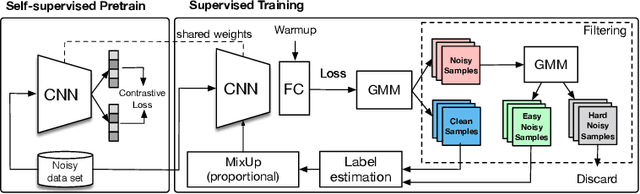

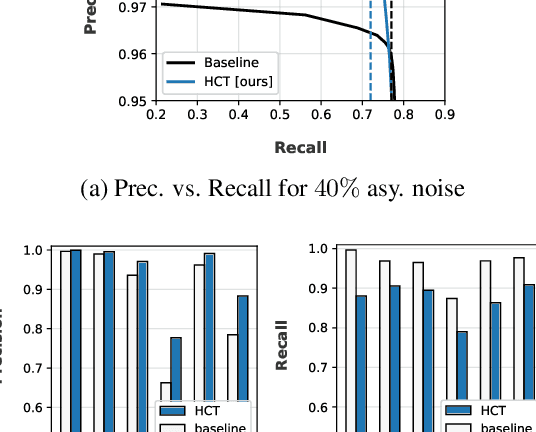

PropMix: Hard Sample Filtering and Proportional MixUp for Learning with Noisy Labels

Oct 22, 2021

Abstract:The most competitive noisy label learning methods rely on an unsupervised classification of clean and noisy samples, where samples classified as noisy are re-labelled and "MixMatched" with the clean samples. These methods have two issues in large noise rate problems: 1) the noisy set is more likely to contain hard samples that are incorrectly re-labelled, and 2) the number of samples produced by MixMatch tends to be reduced because it is constrained by the small clean set size. In this paper, we introduce the learning algorithm PropMix to handle the issues above. PropMix filters out hard noisy samples, with the goal of increasing the likelihood of correctly re-labelling the easy noisy samples. Also, PropMix places clean and re-labelled easy noisy samples in a training set that is augmented with MixUp, removing the clean set size constraint and including a large proportion of correctly re-labelled easy noisy samples. We also include self-supervised pre-training to improve robustness to high noisy label scenarios. Our experiments show that PropMix has state-of-the-art (SOTA) results on CIFAR-10/-100 (with symmetric, asymmetric and semantic label noise), Red Mini-ImageNet (from the Controlled Noisy Web Labels), Clothing1M and WebVision. In severe label noise benchmarks, our results are substantially better than other methods. The code is available at https://github.com/filipe-research/PropMix.
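The MixUp augmentation mentioned in the abstract forms convex combinations of pairs of inputs and their label vectors. The sketch below shows only that generic interpolation step; it does not reproduce PropMix's filtering or re-labelling, and the function names and the `alpha` default are assumptions for illustration.

```python
import random
from typing import List, Sequence, Tuple


def sample_lambda(alpha: float, rng: random.Random) -> float:
    """Draw the mixing coefficient from a Beta(alpha, alpha) distribution."""
    return rng.betavariate(alpha, alpha)


def mixup(
    x_a: Sequence[float],
    y_a: Sequence[float],
    x_b: Sequence[float],
    y_b: Sequence[float],
    lam: float,
) -> Tuple[List[float], List[float]]:
    """Interpolate two samples and their (one-hot or soft) label vectors."""
    x = [lam * a + (1.0 - lam) * b for a, b in zip(x_a, x_b)]
    y = [lam * a + (1.0 - lam) * b for a, b in zip(y_a, y_b)]
    return x, y


rng = random.Random(0)
lam = sample_lambda(alpha=4.0, rng=rng)  # alpha value is illustrative only
mixed_x, mixed_y = mixup([1.0, 0.0], [1.0, 0.0], [0.0, 1.0], [0.0, 1.0], lam)
```

Because inputs and labels are mixed with the same coefficient, the mixed label vector still sums to one whenever both input label vectors do.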

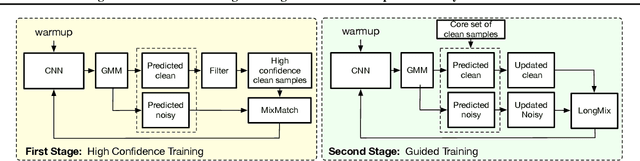

LongReMix: Robust Learning with High Confidence Samples in a Noisy Label Environment

Mar 06, 2021

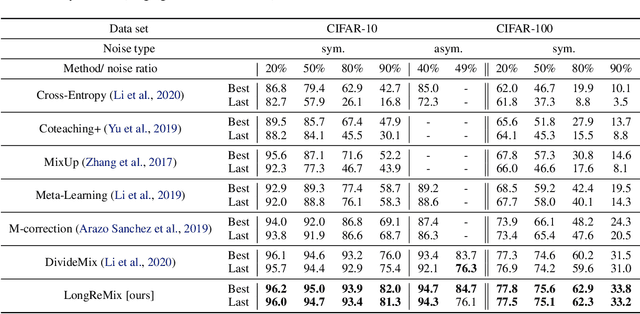

Abstract:Deep neural network models are robust to a limited amount of label noise, but their ability to memorise noisy labels in high noise rate problems is still an open issue. The most competitive noisy-label learning algorithms rely on a 2-stage process comprising an unsupervised learning to classify training samples as clean or noisy, followed by a semi-supervised learning that minimises the empirical vicinal risk (EVR) using a labelled set formed by samples classified as clean, and an unlabelled set with samples classified as noisy. In this paper, we hypothesise that the generalisation of such 2-stage noisy-label learning methods depends on the precision of the unsupervised classifier and the size of the training set to minimise the EVR. We empirically validate these two hypotheses and propose the new 2-stage noisy-label training algorithm LongReMix. We test LongReMix on the noisy-label benchmarks CIFAR-10, CIFAR-100, WebVision, Clothing1M, and Food101-N. The results show that our LongReMix generalises better than competing approaches, particularly in high label noise problems. Furthermore, our approach achieves state-of-the-art performance in most datasets. The code will be available upon paper acceptance.

Noisy Label Learning for Large-scale Medical Image Classification

Mar 06, 2021

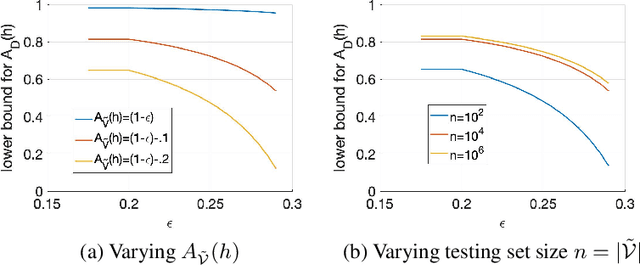

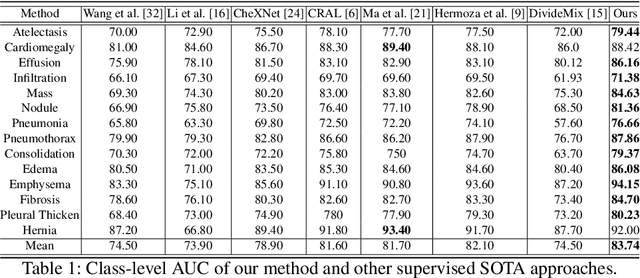

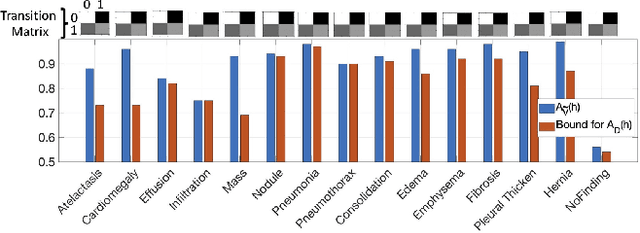

Abstract:The classification accuracy of deep learning models depends not only on the size of their training sets, but also on the quality of their labels. In medical image classification, large-scale datasets are becoming abundant, but their labels will be noisy when they are automatically extracted from radiology reports using natural language processing tools. Given that deep learning models can easily overfit these noisy-label samples, it is important to study training approaches that can handle label noise. In this paper, we adapt a state-of-the-art (SOTA) noisy-label multi-class training approach to learn a multi-label classifier for the dataset Chest X-ray14, which is a large-scale dataset known to contain label noise in the training set. Given that this dataset also has label noise in the testing set, we propose a new theoretically sound method to estimate the performance of the model on hidden clean testing data, given the result on the noisy testing data. Using our clean data performance estimation, we notice that the majority of label noise on Chest X-ray14 is present in the class 'No Finding', which is intuitively correct because this is the most likely class to contain one or more of the 14 diseases due to labelling mistakes.

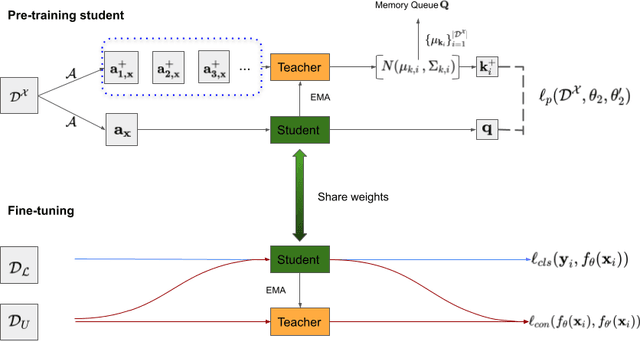

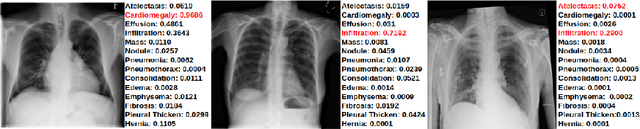

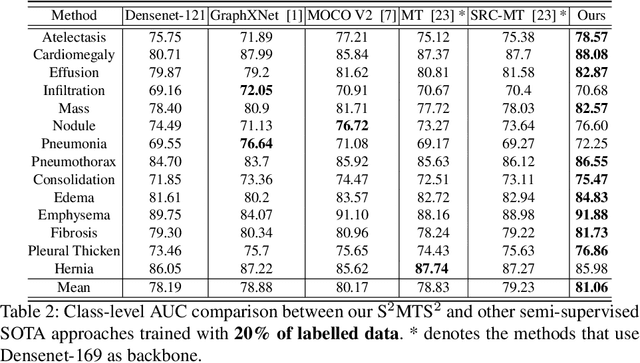

Self-supervised Mean Teacher for Semi-supervised Chest X-ray Classification

Mar 05, 2021

Abstract:The training of deep learning models generally requires a large amount of annotated data for effective convergence and generalisation. However, obtaining high-quality annotations is a laborious and expensive process due to the need for expert radiologists for the labelling task. The study of semi-supervised learning in medical image analysis is then of crucial importance given that it is much less expensive to obtain unlabelled images than to acquire images labelled by expert radiologists. Essentially, semi-supervised methods leverage large sets of unlabelled data to enable better training convergence and generalisation than if we use only the small set of labelled images. In this paper, we propose the Self-supervised Mean Teacher for Semi-supervised (S$^2$MTS$^2$) learning that combines self-supervised mean-teacher pre-training with semi-supervised fine-tuning. The main innovation of S$^2$MTS$^2$ is the self-supervised mean-teacher pre-training based on the joint contrastive learning, which uses an infinite number of pairs of positive query and key features to improve the mean-teacher representation. The model is then fine-tuned using the exponential moving average teacher framework trained with semi-supervised learning. We validate S$^2$MTS$^2$ on the thorax disease multi-label classification problem from the dataset Chest X-ray14, where we show that it outperforms the previous SOTA semi-supervised learning methods by a large margin.
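The exponential moving average (EMA) teacher referred to in the abstract is commonly updated by blending the teacher's parameters with the student's after each training step. The sketch below shows that generic update on a flat list of parameters; the function name and the decay value are assumptions, and nothing here reproduces the contrastive pre-training of S$^2$MTS$^2$.

```python
from typing import List, Sequence


def ema_update(
    teacher: Sequence[float], student: Sequence[float], decay: float = 0.99
) -> List[float]:
    """Return new teacher parameters: decay * teacher + (1 - decay) * student."""
    return [decay * t + (1.0 - decay) * s for t, s in zip(teacher, student)]


# Toy example: the teacher drifts slowly towards a fixed student.
teacher_params = [1.0, -1.0]
student_params = [0.0, 0.0]
for _ in range(3):
    teacher_params = ema_update(teacher_params, student_params, decay=0.5)
```

A decay close to one makes the teacher a slowly varying average of past student weights, which is the property mean-teacher methods rely on for stable targets.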

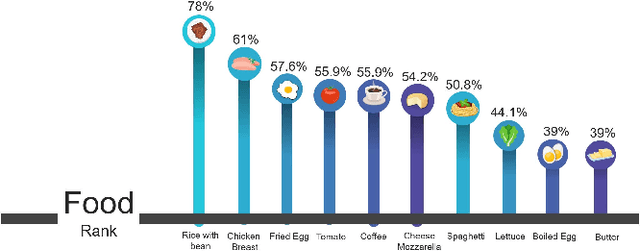

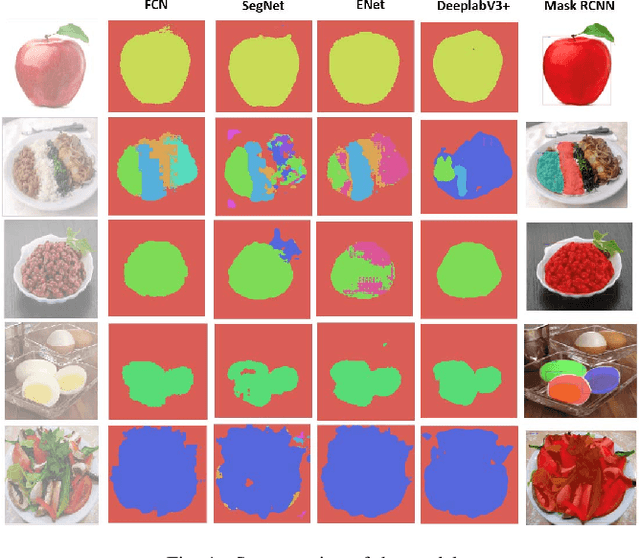

MyFood: A Food Segmentation and Classification System to Aid Nutritional Monitoring

Dec 05, 2020

Abstract:The absence of food monitoring has contributed significantly to the increase in the population's weight. Due to the lack of time and busy routines, most people do not control and record what is consumed in their diet. Some solutions have been proposed in computer vision to recognize food images, but few are specialized in nutritional monitoring. This work presents the development of an intelligent system that classifies and segments food presented in images to help the automatic monitoring of user diet and nutritional intake. This work shows a comparative study of state-of-the-art methods for image classification and segmentation, applied to food recognition. In our methodology, we compare the FCN, ENet, SegNet, DeepLabV3+, and Mask RCNN algorithms. We build a dataset composed of the most consumed Brazilian food types, containing nine classes and a total of 1250 images. The models were evaluated using the following metrics: Intersection over Union, Sensitivity, Specificity, Balanced Precision, and Positive Predefined Value. We also propose a system integrated into a mobile application that automatically recognizes and estimates the nutrients in a meal, assisting people with better nutritional monitoring. The proposed solution showed better results than the existing ones in the market. The dataset is publicly available at the following link http://doi.org/10.5281/zenodo.4041488
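Three of the segmentation metrics listed above have standard pixel-wise definitions, sketched here for a single binary mask. The function name and the flat-list mask representation are assumptions for illustration; the abstract does not say how the metrics are aggregated across classes or images.

```python
from typing import Dict, Sequence


def segmentation_metrics(pred: Sequence[int], truth: Sequence[int]) -> Dict[str, float]:
    """Compute IoU, sensitivity and specificity for flattened binary masks."""
    tp = sum(1 for p, t in zip(pred, truth) if p and t)
    fp = sum(1 for p, t in zip(pred, truth) if p and not t)
    fn = sum(1 for p, t in zip(pred, truth) if not p and t)
    tn = sum(1 for p, t in zip(pred, truth) if not p and not t)
    union = tp + fp + fn
    return {
        "IoU": tp / union if union else 0.0,
        "Sensitivity": tp / (tp + fn) if (tp + fn) else 0.0,
        "Specificity": tn / (tn + fp) if (tn + fp) else 0.0,
    }


# Four-pixel toy masks: one pixel each of TP, FP, FN and TN.
toy = segmentation_metrics(pred=[1, 1, 0, 0], truth=[1, 0, 1, 0])
```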

* Paper published at SIBGRAPI 2020 (camera-ready version)

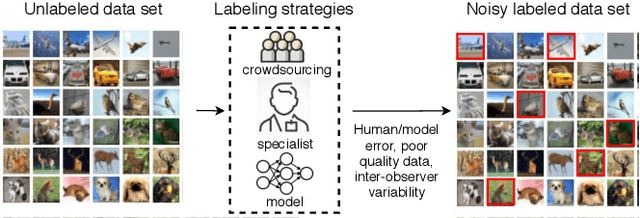

A Survey on Deep Learning with Noisy Labels: How to train your model when you cannot trust on the annotations?

Dec 05, 2020

Abstract:Noisy labels are commonly present in data sets automatically collected from the internet, mislabeled by non-specialist annotators, or even by specialists in a challenging task, such as in the medical field. Although deep learning models have shown significant improvements in different domains, an open issue is their ability to memorize noisy labels during training, reducing their generalization potential. As deep learning models depend on correctly labeled data sets and label correctness is difficult to guarantee, it is crucial to consider the presence of noisy labels for deep learning training. Several approaches have been proposed in the literature to improve the training of deep learning models in the presence of noisy labels. This paper presents a survey on the main techniques in the literature, in which we classify the algorithms into the following groups: robust losses, sample weighting, sample selection, meta-learning, and combined approaches. We also present the commonly used experimental setup, data sets, and results of the state-of-the-art models.

* Paper published at SIBGRAPI 2020 (camera-ready version)
