Fenghua Li

Training Data Attribution: Was Your Model Secretly Trained On Data Created By Mine?

Sep 24, 2024

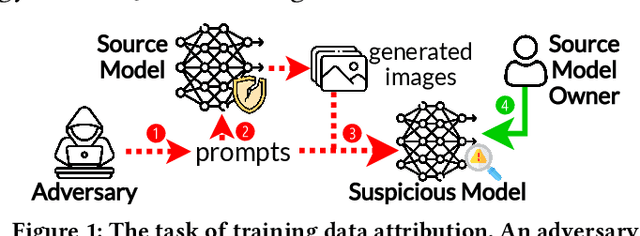

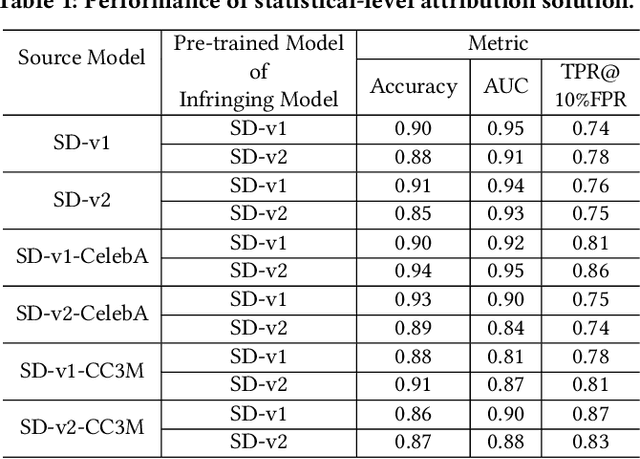

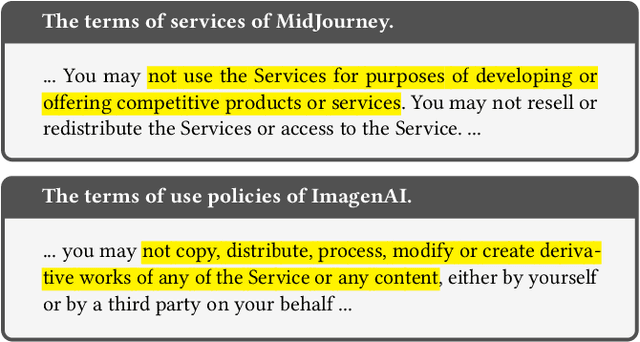

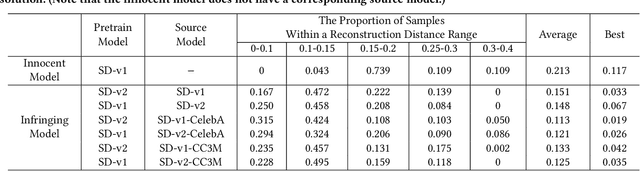

Abstract:The emergence of text-to-image models has recently sparked significant interest, but the attendant is a looming shadow of potential infringement by violating the user terms. Specifically, an adversary may exploit data created by a commercial model to train their own without proper authorization. To address such risk, it is crucial to investigate the attribution of a suspicious model's training data by determining whether its training data originates, wholly or partially, from a specific source model. To trace the generated data, existing methods require applying extra watermarks during either the training or inference phases of the source model. However, these methods are impractical for pre-trained models that have been released, especially when model owners lack security expertise. To tackle this challenge, we propose an injection-free training data attribution method for text-to-image models. It can identify whether a suspicious model's training data stems from a source model, without additional modifications on the source model. The crux of our method lies in the inherent memorization characteristic of text-to-image models. Our core insight is that the memorization of the training dataset is passed down through the data generated by the source model to the model trained on that data, making the source model and the infringing model exhibit consistent behaviors on specific samples. Therefore, our approach involves developing algorithms to uncover these distinct samples and using them as inherent watermarks to verify if a suspicious model originates from the source model. Our experiments demonstrate that our method achieves an accuracy of over 80\% in identifying the source of a suspicious model's training data, without interfering the original training or generation process of the source model.

Monitoring-based Differential Privacy Mechanism Against Query-Flooding Parameter Duplication Attack

Nov 01, 2020

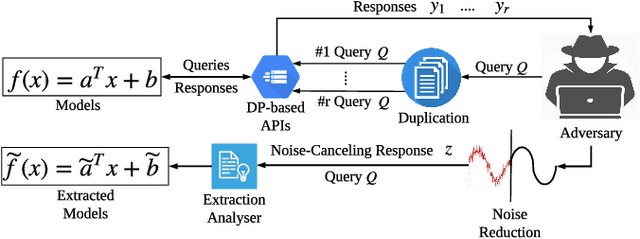

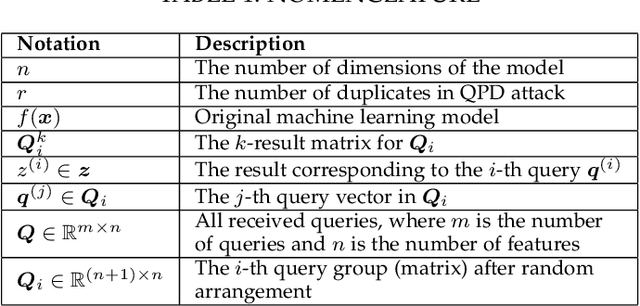

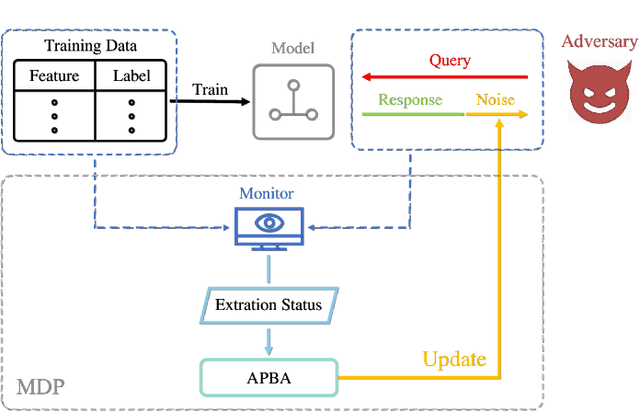

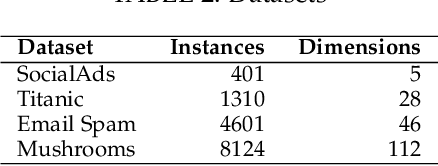

Abstract:Public intelligent services enabled by machine learning algorithms are vulnerable to model extraction attacks that can steal confidential information of the learning models through public queries. Though there are some protection options such as differential privacy (DP) and monitoring, which are considered promising techniques to mitigate this attack, we still find that the vulnerability persists. In this paper, we propose an adaptive query-flooding parameter duplication (QPD) attack. The adversary can infer the model information with black-box access and no prior knowledge of any model parameters or training data via QPD. We also develop a defense strategy using DP called monitoring-based DP (MDP) against this new attack. In MDP, we first propose a novel real-time model extraction status assessment scheme called Monitor to evaluate the situation of the model. Then, we design a method to guide the differential privacy budget allocation called APBA adaptively. Finally, all DP-based defenses with MDP could dynamically adjust the amount of noise added in the model response according to the result from Monitor and effectively defends the QPD attack. Furthermore, we thoroughly evaluate and compare the QPD attack and MDP defense performance on real-world models with DP and monitoring protection.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge