Felix Krones

Combining Hough Transform and Deep Learning Approaches to Reconstruct ECG Signals From Printouts

Oct 18, 2024

Abstract: This work presents our team's (SignalSavants) winning contribution to the 2024 George B. Moody PhysioNet Challenge. The Challenge had two goals: reconstruct ECG signals from printouts and classify them for cardiac diseases. Our focus was the first task. Although many ECGs are digitally recorded today, paper ECGs remain common throughout the world. Digitising them could help build more diverse datasets and enable automated analyses. However, varying recording standards and poor image quality require a data-centric approach for developing robust models that generalise effectively. Our approach combines the creation of a diverse training set, a Hough transform to rotate images, a U-Net based segmentation model to identify individual signals, and mask vectorisation to reconstruct the signals. We assessed the performance of our models using the 10-fold stratified cross-validation (CV) split of 21,799 recordings provided with the PTB-XL dataset. On the digitisation task, our model achieved an average CV signal-to-noise ratio of 17.02 and an official Challenge score of 12.15 on the hidden set, securing first place in the competition. Our study shows the challenges of building robust, generalisable digitisation approaches. Such models require large amounts of resources (data, time, and computational power) but have great potential for diversifying the data available.
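
The last stage of the pipeline, mask vectorisation, and the signal-to-noise ratio used for evaluation can be illustrated with a minimal Python sketch. It assumes a binary mask for a single lead that has already been segmented and de-rotated, takes the mean row of the foreground pixels in each column, and converts pixels to millivolts using a baseline row and a pixels-per-mV scale. The names `vectorise_mask`, `baseline_row`, `pixels_per_mv`, and `snr_db` are hypothetical and not taken from the authors' code. The SNR follows the standard definition (10·log10 of signal power over error power); the official Challenge scoring may differ, for example in how it aligns signals or averages across leads.

```python
import numpy as np


def vectorise_mask(mask: np.ndarray, baseline_row: float, pixels_per_mv: float) -> np.ndarray:
    """Turn a binary mask (rows x columns) for one lead into a 1-D signal in mV.

    Hypothetical helper: each column's value is the mean row index of its
    foreground pixels. Image rows grow downwards, so the sign is flipped to
    make upward deflections positive. Empty columns are filled by linear
    interpolation from their neighbours.
    """
    mask = mask.astype(bool)
    rows = np.arange(mask.shape[0], dtype=float)[:, None]
    counts = mask.sum(axis=0)
    # Mean foreground row per column; empty columns give 0/0 and become NaN.
    with np.errstate(invalid="ignore", divide="ignore"):
        mean_rows = (mask * rows).sum(axis=0) / counts
    signal = (baseline_row - mean_rows) / pixels_per_mv

    # Fill gaps (columns with no foreground pixels) by linear interpolation.
    valid = ~np.isnan(signal)
    if valid.any() and not valid.all():
        idx = np.arange(signal.size)
        signal = np.interp(idx, idx[valid], signal[valid])
    return signal


def snr_db(reference: np.ndarray, reconstruction: np.ndarray) -> float:
    """Signal-to-noise ratio in dB: signal power over reconstruction-error power."""
    noise = reference - reconstruction
    return float(10 * np.log10(np.sum(reference**2) / np.sum(noise**2)))


# Usage on a toy 3x4 mask: column 2 is empty and gets interpolated.
toy_mask = np.array([
    [0, 0, 0, 1],
    [0, 1, 0, 0],
    [1, 0, 0, 0],
])
# Values: 0.0, 1.0, 1.5 (interpolated), 2.0
print(vectorise_mask(toy_mask, baseline_row=2, pixels_per_mv=1))
```

Averaging the foreground rows is only one simple choice; thick or overlapping traces might call for the median or a connectivity-aware method instead.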

Review of multimodal machine learning approaches in healthcare

Feb 12, 2024

Abstract: Machine learning methods in healthcare have traditionally focused on using data from a single modality, limiting their ability to effectively replicate the clinical practice of integrating multiple sources of information for improved decision making. Clinicians typically rely on a variety of data sources including patients' demographic information, laboratory data, vital signs and various imaging data modalities to make informed decisions and contextualise their findings. Recent advances in machine learning have facilitated the more efficient incorporation of multimodal data, resulting in applications that better represent the clinician's approach. Here, we provide a review of multimodal machine learning approaches in healthcare, offering a comprehensive overview of recent literature. We discuss the various data modalities used in clinical diagnosis, with a particular emphasis on imaging data. We evaluate fusion techniques, explore existing multimodal datasets and examine common training strategies.

Exploring the Landscape of Large Language Models In Medical Question Answering: Observations and Open Questions

Oct 11, 2023

Abstract: Large Language Models (LLMs) have shown promise in medical question answering by achieving passing scores in standardised exams and have been suggested as tools for supporting healthcare workers. Deploying LLMs into such a high-risk context requires a clear understanding of the limitations of these models. With the rapid development and release of new LLMs, it is especially valuable to identify patterns which exist across models and may, therefore, continue to appear in newer versions. In this paper, we evaluate a wide range of popular LLMs on their knowledge of medical questions in order to better understand their properties as a group. From this comparison, we provide preliminary observations and raise open questions for further research.

Dual Bayesian ResNet: A Deep Learning Approach to Heart Murmur Detection

May 26, 2023

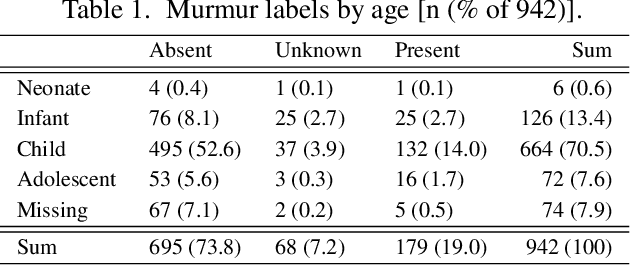

Abstract: This study presents our team PathToMyHeart's contribution to the George B. Moody PhysioNet Challenge 2022. Two models are implemented. The first model is a Dual Bayesian ResNet (DBRes), where each patient's recording is segmented into overlapping log mel spectrograms. These undergo two binary classifications: present versus unknown or absent, and unknown versus present or absent. The classifications are aggregated to give a patient's final classification. The second model integrates the output of DBRes with demographic data and signal features using XGBoost. DBRes achieved our best weighted accuracy of 0.771 on the hidden test set for murmur classification, which placed us fourth for the murmur task. (On the clinical outcome task, which we neglected, we placed 17th with a cost of 12637.) On our held-out subset of the training set, integrating the demographic data and signal features improved DBRes's accuracy from 0.762 to 0.820. However, this decreased DBRes's weighted accuracy from 0.780 to 0.749. Our results demonstrate that log mel spectrograms are an effective representation of heart sound recordings, Bayesian networks provide strong supervised classification performance, and treating the ternary classification as two binary classifications improves weighted accuracy.
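
The idea of treating the ternary murmur label as two binary decisions, together with the weighted accuracy metric, can be sketched in a few lines of Python. The abstract does not say how segment outputs or the two binary decisions are aggregated, so the averaging-and-threshold rule below (`classify_patient`, with a 0.5 threshold and Present checked first) is a hypothetical illustration, not DBRes's actual rule. The weights of 5, 3, and 1 for Present, Unknown, and Absent reflect our understanding of the 2022 Challenge murmur metric and should be checked against the official scoring code.

```python
from statistics import mean

# Assumed 2022 Challenge weights, indexed by the true murmur label.
WEIGHTS = {"Present": 5, "Unknown": 3, "Absent": 1}


def classify_patient(present_probs, unknown_probs, threshold=0.5):
    """Hypothetical aggregation of segment-level outputs into one patient label.

    present_probs: per-segment P(present) from the 'present vs unknown or absent' model.
    unknown_probs: per-segment P(unknown) from the 'unknown vs present or absent' model.
    """
    p_present = mean(present_probs)  # average over overlapping segments
    p_unknown = mean(unknown_probs)
    # Check Present first, since it carries the largest weight in the metric.
    if p_present >= threshold:
        return "Present"
    if p_unknown >= threshold:
        return "Unknown"
    return "Absent"


def weighted_accuracy(y_true, y_pred):
    """Correct predictions weighted by true class, over weighted class counts."""
    correct = sum(WEIGHTS[t] for t, p in zip(y_true, y_pred) if t == p)
    total = sum(WEIGHTS[t] for t in y_true)
    return correct / total


labels = ["Present", "Absent", "Unknown"]
preds = [
    classify_patient([0.6, 0.8], [0.9, 0.9]),  # mean P(present) 0.7 -> Present
    classify_patient([0.1, 0.3], [0.2, 0.2]),  # both means below 0.5 -> Absent
    classify_patient([0.2, 0.4], [0.1, 0.3]),  # both means below 0.5 -> Absent
]
print(weighted_accuracy(labels, preds))  # (5 + 1) / (5 + 1 + 3)
```

Prioritising Present over Unknown is one way to favour the highest-weight class; the threshold could instead be tuned on held-out data to maximise weighted accuracy.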

* 5 pages, 3 figures