Fabrizio Russo

Leveraging Large Language Models for Causal Discovery: a Constraint-based, Argumentation-driven Approach

Feb 18, 2026

Abstract: Causal discovery seeks to uncover causal relations from data, typically represented as causal graphs, and is essential for predicting the effects of interventions. While expert knowledge is required to construct principled causal graphs, many statistical methods have been proposed to leverage observational data with varying formal guarantees. Causal Assumption-based Argumentation (ABA) is a framework that uses symbolic reasoning to ensure correspondence between input constraints and output graphs, while offering a principled way to combine data and expertise. We explore the use of large language models (LLMs) as imperfect experts for Causal ABA, eliciting semantic structural priors from variable names and descriptions and integrating them with conditional-independence evidence. Experiments on standard benchmarks and semantically grounded synthetic graphs demonstrate state-of-the-art performance, and we additionally introduce an evaluation protocol to mitigate memorisation bias when assessing LLMs for causal discovery.
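Conditional-independence evidence of the kind mentioned above typically comes from a statistical test. As a minimal sketch, assuming jointly Gaussian data (so that conditional independence reduces to zero partial correlation), the Fisher z-test below returns a p-value for X ⟂ Y | S. The function name `fisher_z_pvalue` and the toy chain X → Z → Y are illustrative assumptions, not the test configuration used in the paper.

```python
import math

import numpy as np


def fisher_z_pvalue(data, x, y, cond):
    """Two-sided p-value for H0: column x is independent of column y given the columns in cond.

    Assumes jointly Gaussian data, so conditional independence means zero partial correlation.
    """
    idx = [x, y] + list(cond)
    corr = np.corrcoef(data[:, idx], rowvar=False)
    prec = np.linalg.pinv(corr)
    # Partial correlation of x and y given cond, read off the precision matrix.
    r = -prec[0, 1] / math.sqrt(prec[0, 0] * prec[1, 1])
    r = min(max(r, -0.999999), 0.999999)  # keep the log below finite
    n = data.shape[0]
    z = 0.5 * math.log((1 + r) / (1 - r))  # Fisher z-transform
    stat = math.sqrt(n - len(cond) - 3) * abs(z)
    # Two-sided standard-normal tail: 2 * (1 - Phi(stat)) == erfc(stat / sqrt(2)).
    return math.erfc(stat / math.sqrt(2))


# Toy chain X -> Z -> Y: X and Y are dependent, but independent given Z.
rng = np.random.default_rng(0)
n = 2000
x_col = rng.normal(size=n)
z_col = 2.0 * x_col + rng.normal(size=n)
y_col = 2.0 * z_col + rng.normal(size=n)
data = np.column_stack([x_col, z_col, y_col])

p_marg = fisher_z_pvalue(data, 0, 2, [])   # test X indep Y
p_cond = fisher_z_pvalue(data, 0, 2, [1])  # test X indep Y | Z
print(p_marg, p_cond)
```

On this toy data the marginal test rejects independence decisively, while the conditional test's p-value behaves like a draw under the null, which is the pattern a constraint-based method reads as "Z separates X and Y".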

Argumentative Causal Discovery

May 18, 2024

Abstract: Causal discovery amounts to unearthing causal relationships amongst features in data. It is a crucial companion to causal inference, necessary to build scientific knowledge without resorting to expensive or impossible randomised controlled trials. In this paper, we explore how reasoning with symbolic representations can support causal discovery. Specifically, we deploy assumption-based argumentation (ABA), a well-established and powerful knowledge representation formalism, in combination with causality theories, to learn graphs which reflect causal dependencies in the data. We prove that our method exhibits desirable properties, notably that, under natural conditions, it can retrieve ground-truth causal graphs. We also conduct experiments with an implementation of our method in answer set programming (ASP) on four datasets from standard benchmarks in causal discovery, showing that our method compares well against established baselines.
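Argumentation frameworks are evaluated under semantics that single out acceptable sets of arguments, and stable semantics is one common choice that ASP solvers handle naturally. As a hedged illustration only, the sketch below enumerates the stable extensions of a small abstract argumentation framework by brute force. It is a simplification (abstract rather than assumption-based argumentation, with no causal rules), and the names `stable_extensions`, `a`, `b` and `c` are hypothetical.

```python
from itertools import combinations


def stable_extensions(args, attacks):
    """Enumerate stable extensions of an abstract argumentation framework by brute force.

    args: iterable of argument names; attacks: set of (attacker, target) pairs.
    A set S is stable if it is conflict-free and attacks every argument outside S.
    """
    args = sorted(args)
    result = []
    for k in range(len(args) + 1):
        for subset in combinations(args, k):
            s = set(subset)
            conflict_free = not any((a, b) in attacks for a in s for b in s)
            attacks_rest = all(
                any((a, b) in attacks for a in s) for b in args if b not in s
            )
            if conflict_free and attacks_rest:
                result.append(s)
    return result


# Hypothetical framework: a and b attack each other, and a also attacks c.
exts = stable_extensions({"a", "b", "c"}, {("a", "b"), ("b", "a"), ("a", "c")})
print(exts)  # two stable extensions: {a} and {b, c}
```

Brute force is exponential in the number of arguments; a solver-based encoding is what makes this kind of reasoning practical at realistic sizes.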

Contestable AI needs Computational Argumentation

May 17, 2024

Abstract: AI has become pervasive in recent years, but state-of-the-art approaches predominantly neglect the need for AI systems to be contestable. Yet contestability is advocated by AI guidelines (e.g. by the OECD) and regulation of automated decision-making (e.g. GDPR). In this position paper we explore how contestability can be achieved computationally in and for AI. We argue that contestable AI requires dynamic (human-machine and/or machine-machine) explainability and decision-making processes, whereby machines can (i) interact with humans and/or other machines to progressively explain their outputs and/or their reasoning as well as assess grounds for contestation provided by these humans and/or other machines, and (ii) revise their decision-making processes to redress any issues successfully raised during contestation. Given that much of the current AI landscape is tailored to static AIs, the need to accommodate contestability will require a radical rethinking which, we argue, computational argumentation is ideally suited to support.

Shapley-PC: Constraint-based Causal Structure Learning with Shapley Values

Dec 18, 2023

Abstract: Causal Structure Learning (CSL), amounting to extracting causal relations among the variables in a dataset, is widely perceived as an important step towards robust and transparent models. Constraint-based CSL leverages conditional independence tests to perform causal discovery. We propose Shapley-PC, a novel method to improve constraint-based CSL algorithms by using Shapley values over the possible conditioning sets to decide which variables are responsible for the observed conditional (in)dependences. We prove soundness and asymptotic consistency and demonstrate that it can outperform state-of-the-art constraint-based, search-based and functional causal model-based methods, according to standard metrics in CSL.
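To make "Shapley values over the possible conditioning sets" concrete, one can treat each candidate conditioning variable as a player and the p-value of the test X ⟂ Y | S as the payoff of coalition S. The sketch below computes exact Shapley values under that assumption. The p-values are made-up toy numbers, and how Shapley-PC turns these scores into separating sets and edge orientations is not reproduced here.

```python
from itertools import combinations
from math import factorial


def shapley_values(players, value):
    """Exact Shapley value of each player for a set function `value` (frozenset -> float)."""
    n = len(players)
    phi = {}
    for i in players:
        others = [p for p in players if p != i]
        total = 0.0
        for k in range(n):
            for coalition in combinations(others, k):
                s = frozenset(coalition)
                # Standard Shapley weight |S|! (n - |S| - 1)! / n!
                weight = factorial(k) * factorial(n - k - 1) / factorial(n)
                total += weight * (value(s | {i}) - value(s))
        phi[i] = total
    return phi


# Toy p-values for testing X indep Y given each subset of the candidates {Z, W}.
p_values = {
    frozenset(): 0.001,
    frozenset({"Z"}): 0.60,
    frozenset({"W"}): 0.002,
    frozenset({"Z", "W"}): 0.55,
}
phi = shapley_values(["Z", "W"], lambda s: p_values[s])
print(phi)  # Z ≈ 0.5735, W ≈ -0.0245
```

In this toy, Z receives almost all the credit for making X and Y look independent, and the values sum to p({Z, W}) - p(∅) by the efficiency property of Shapley values.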

Causal Discovery and Injection for Feed-Forward Neural Networks

May 23, 2022

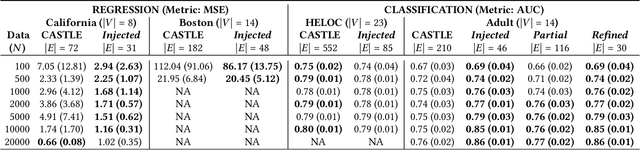

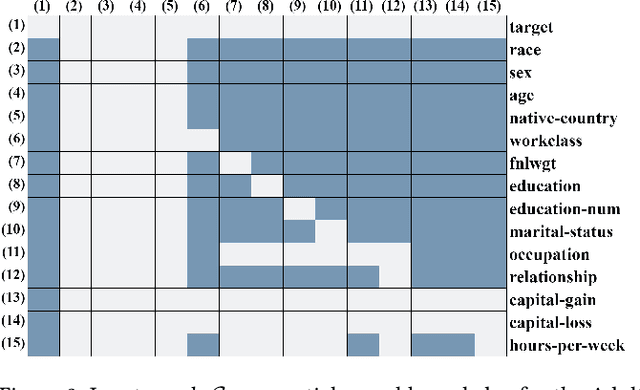

Abstract: Neural networks have proven to be effective at solving a wide range of problems, but it is often unclear whether they learn any meaningful causal relationship: this poses a problem for the robustness of neural network models and their use for high-stakes decisions. We propose a novel method that addresses this issue by injecting knowledge in the form of (possibly partial) causal graphs into feed-forward neural networks, so that the learnt model is guaranteed to conform to the graph, hence adhering to expert knowledge. This knowledge may be given up-front or during the learning process, to improve the model through human-AI collaboration. We apply our method to synthetic and real (tabular) data, showing that it is robust against noise and can improve causal discovery and prediction performance in low-data regimes.
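One simple way to guarantee that a learnt model conforms to a (possibly partial) causal graph is to mask weights so that each output can only read from the inputs the graph allows. The sketch below applies that idea to a single linear layer trained by gradient descent on mean squared error. It is a hypothetical, deliberately minimal stand-in rather than the paper's injection method, and the names `fit_masked_linear` and `adjacency` are assumptions.

```python
import numpy as np


def fit_masked_linear(x, y, adjacency, lr=0.1, steps=500):
    """Fit Y ≈ X @ W by gradient descent on mean squared error, keeping W[i, j] = 0 wherever adjacency[i, j] == 0."""
    w = np.zeros(adjacency.shape)
    n = x.shape[0]
    for _ in range(steps):
        grad = (2.0 / n) * x.T @ (x @ w - y)
        # Masked update: weights for edges absent from the graph start at 0 and never move.
        w -= lr * grad * adjacency
    return w


rng = np.random.default_rng(0)
n = 1000
x = rng.normal(size=(n, 2))  # inputs: columns A, B
y = np.column_stack([
    2.0 * x[:, 0] + 0.1 * rng.normal(size=n),   # C depends on A
    -1.0 * x[:, 1] + 0.1 * rng.normal(size=n),  # D depends on B
])
adjacency = np.array([[1.0, 0.0],   # A -> C allowed, A -> D forbidden
                      [0.0, 1.0]])  # B -> C forbidden, B -> D allowed
w = fit_masked_linear(x, y, adjacency)
print(w)
```

Because the mask is applied to every update, conformance to the graph holds by construction regardless of what the data suggest, which is the property the abstract emphasises; deeper networks need a more careful construction to preserve it across hidden layers.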
