Eric Kildebeck

Understanding and Estimating Domain Complexity Across Domains

Dec 20, 2023

Abstract: Artificial Intelligence (AI) systems trained in controlled environments often struggle with real-world complexity. We propose a general framework for estimating domain complexity across diverse environments, such as open-world learning and real-world applications. This framework distinguishes between intrinsic complexity (inherent to the domain) and extrinsic complexity (dependent on the AI agent). By analyzing dimensionality, sparsity, and diversity within these categories, we offer a comprehensive view of domain challenges. This approach enables quantitative predictions of AI difficulty during environment transitions, avoids bias in novel situations, and helps navigate the vast search spaces of open-world domains.

A Framework for Characterizing Novel Environment Transformations in General Environments

May 07, 2023

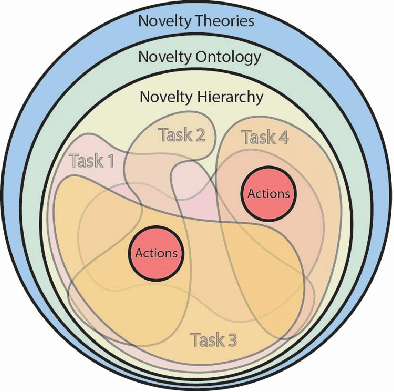

Abstract: To be robust to surprising developments, an intelligent agent must be able to respond to many different types of unexpected change in the world. To date, there are no general frameworks for defining and characterizing the types of environment changes that are possible. We introduce a formal and theoretical framework for defining and categorizing environment transformations, that is, changes to the world an agent inhabits. We introduce two types of environment transformation: R-transformations, which modify environment dynamics, and T-transformations, which modify the generation process that produces scenarios. We present a new language for describing domains, scenario generators, and transformations, called the Transformation and Simulator Abstraction Language (T-SAL), and a logical formalism that rigorously defines these concepts. Then, we offer the first formal and computational set of tests for eight categories of environment transformations. This domain-independent framework paves the way for describing unambiguous classes of novelty, constrained and domain-independent random generation of environment transformations, replication of environment transformation studies, and fair evaluation of agent robustness.

Toward Defining a Domain Complexity Measure Across Domains

Mar 07, 2023

Abstract: Artificial Intelligence (AI) systems planned for deployment in real-world applications are frequently researched and developed in closed simulation environments, where all variables are controlled and known to the simulator, or on labeled benchmark datasets. Transition from these simulators, testbeds, and benchmark datasets to more open-world domains poses significant challenges to AI systems, including significant increases in the complexity of the domain and the inclusion of real-world novelties; the open-world environment contains numerous out-of-distribution elements that are not part of the AI systems' training set. Here, we propose a path to a general, domain-independent measure of domain complexity level. We distinguish two aspects of domain complexity: intrinsic and extrinsic. The intrinsic domain complexity is the complexity that exists by itself without any action or interaction from an AI agent performing a task on that domain. This is an agent-independent aspect of the domain complexity. The extrinsic domain complexity is agent- and task-dependent. Intrinsic and extrinsic elements combined capture the overall complexity of the domain. We frame the components that define and impact domain complexity levels in a domain-independent light. Domain-independent measures of complexity could enable quantitative predictions of the difficulty posed to AI systems when transitioning from one testbed or environment to another, when facing out-of-distribution data in open-world tasks, and when navigating the rapidly expanding solution and search spaces encountered in open-world domains.
