Elias Najarro

Solving Inverse Problems in Stochastic Self-Organising Systems through Invariant Representations

Jun 13, 2025Abstract:Self-organising systems demonstrate how simple local rules can generate complex stochastic patterns. Many natural systems rely on such dynamics, making self-organisation central to understanding natural complexity. A fundamental challenge in modelling such systems is solving the inverse problem: finding the unknown causal parameters from macroscopic observations. This task becomes particularly difficult when observations have a strong stochastic component, yielding diverse yet equivalent patterns. Traditional inverse methods fail in this setting, as pixel-wise metrics cannot capture feature similarities between variable outcomes. In this work, we introduce a novel inverse modelling method specifically designed to handle stochasticity in the observable space, leveraging the capacity of visual embeddings to produce robust representations that capture perceptual invariances. By mapping the pattern representations onto an invariant embedding space, we can effectively recover unknown causal parameters without the need for handcrafted objective functions or heuristics. We evaluate the method on two canonical models--a reaction-diffusion system and an agent-based model of social segregation--and show that it reliably recovers parameters despite stochasticity in the outcomes. We further apply the method to real biological patterns, highlighting its potential as a tool for both theorists and experimentalists to investigate the dynamics underlying complex stochastic pattern formation.

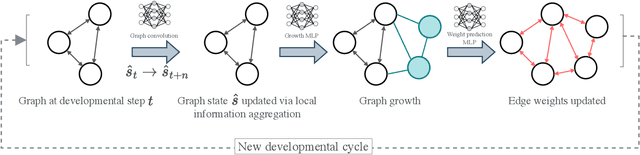

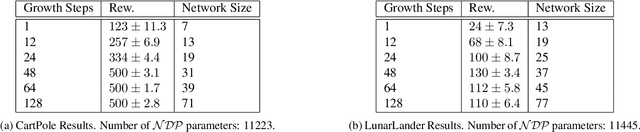

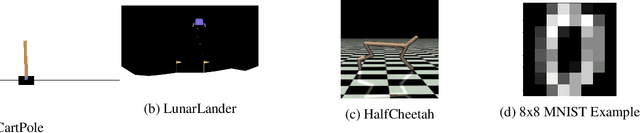

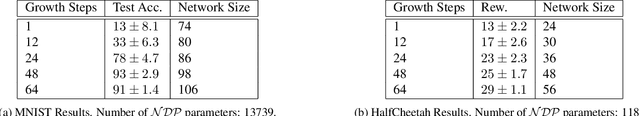

Towards Self-Assembling Artificial Neural Networks through Neural Developmental Programs

Jul 17, 2023

Abstract:Biological nervous systems are created in a fundamentally different way than current artificial neural networks. Despite its impressive results in a variety of different domains, deep learning often requires considerable engineering effort to design high-performing neural architectures. By contrast, biological nervous systems are grown through a dynamic self-organizing process. In this paper, we take initial steps toward neural networks that grow through a developmental process that mirrors key properties of embryonic development in biological organisms. The growth process is guided by another neural network, which we call a Neural Developmental Program (NDP) and which operates through local communication alone. We investigate the role of neural growth on different machine learning benchmarks and different optimization methods (evolutionary training, online RL, offline RL, and supervised learning). Additionally, we highlight future research directions and opportunities enabled by having self-organization driving the growth of neural networks.

MarioGPT: Open-Ended Text2Level Generation through Large Language Models

Feb 12, 2023

Abstract:Procedural Content Generation (PCG) algorithms provide a technique to generate complex and diverse environments in an automated way. However, while generating content with PCG methods is often straightforward, generating meaningful content that reflects specific intentions and constraints remains challenging. Furthermore, many PCG algorithms lack the ability to generate content in an open-ended manner. Recently, Large Language Models (LLMs) have shown to be incredibly effective in many diverse domains. These trained LLMs can be fine-tuned, re-using information and accelerating training for new tasks. In this work, we introduce MarioGPT, a fine-tuned GPT2 model trained to generate tile-based game levels, in our case Super Mario Bros levels. We show that MarioGPT can not only generate diverse levels, but can be text-prompted for controllable level generation, addressing one of the key challenges of current PCG techniques. As far as we know, MarioGPT is the first text-to-level model. We also combine MarioGPT with novelty search, enabling it to generate diverse levels with varying play-style dynamics (i.e. player paths). This combination allows for the open-ended generation of an increasingly diverse range of content.

HyperNCA: Growing Developmental Networks with Neural Cellular Automata

Apr 25, 2022

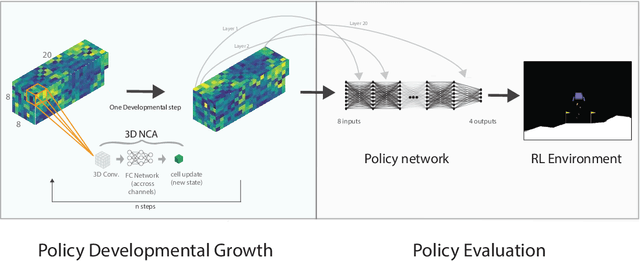

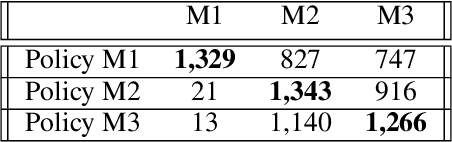

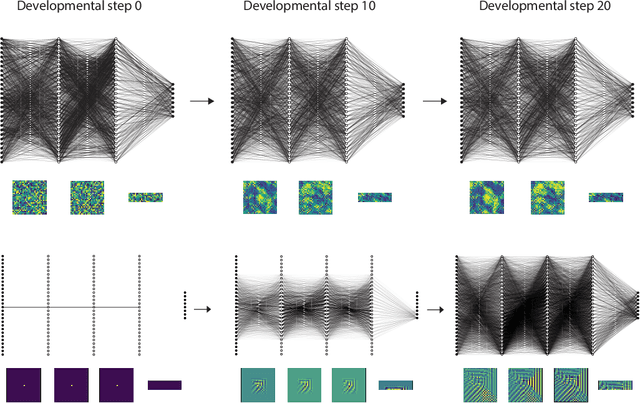

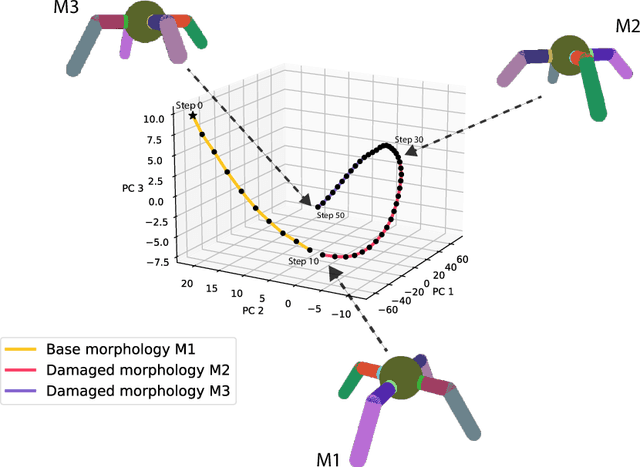

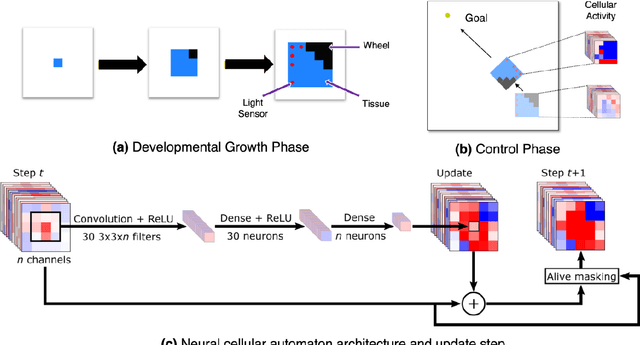

Abstract:In contrast to deep reinforcement learning agents, biological neural networks are grown through a self-organized developmental process. Here we propose a new hypernetwork approach to grow artificial neural networks based on neural cellular automata (NCA). Inspired by self-organising systems and information-theoretic approaches to developmental biology, we show that our HyperNCA method can grow neural networks capable of solving common reinforcement learning tasks. Finally, we explore how the same approach can be used to build developmental metamorphosis networks capable of transforming their weights to solve variations of the initial RL task.

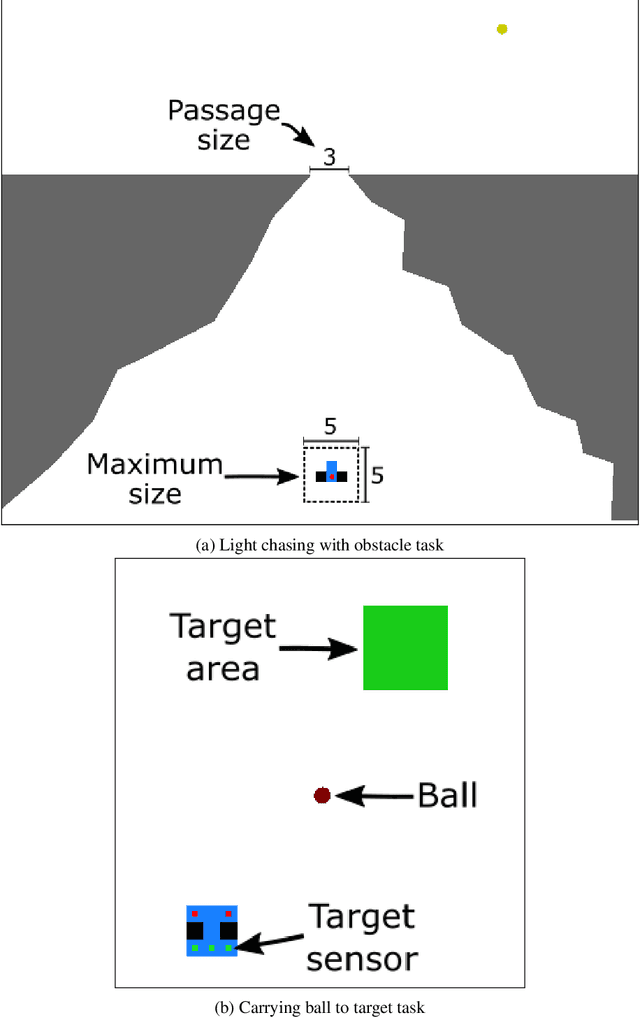

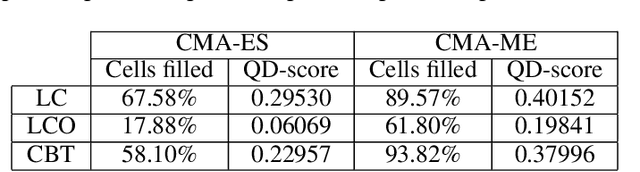

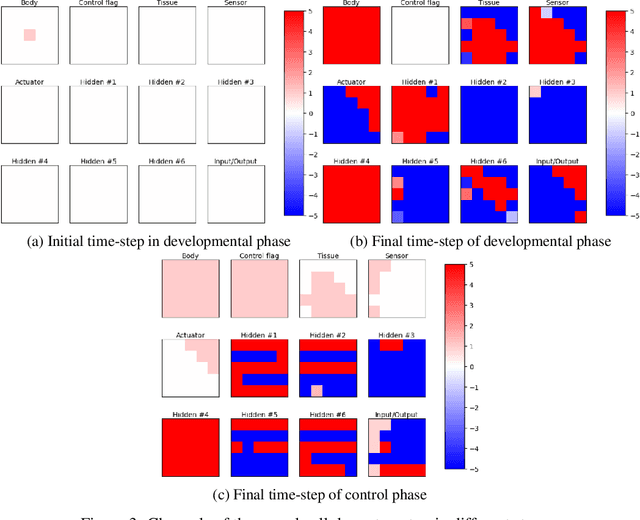

A Unified Substrate for Body-Brain Co-evolution

Mar 22, 2022

Abstract:A successful development of a complex multicellular organism took millions of years of evolution. The genome of such a multicellular organism guides the development of its body from a single cell, including its control system. Our goal is to imitate this natural process using a single neural cellular automaton (NCA) as a genome for modular robotic agents. In the introduced approach, called Neural Cellular Robot Substrate (NCRS), a single NCA guides the growth of a robot and the cellular activity which controls the robot during deployment. We also introduce three benchmark environments, which test the ability of the approach to grow different robot morphologies. We evolve the NCRS with covariance matrix adaptation evolution strategy (CMA-ES), and covariance matrix adaptation MAP-Elites (CMA-ME) for quality diversity and observe that CMA-ME generates more diverse robot morphologies with higher fitness scores. While the NCRS is able to solve the easier tasks in the benchmark, the success rate reduces when the difficulty of the task increases. We discuss directions for future work that may facilitate the use of the NCRS approach for more complex domains.

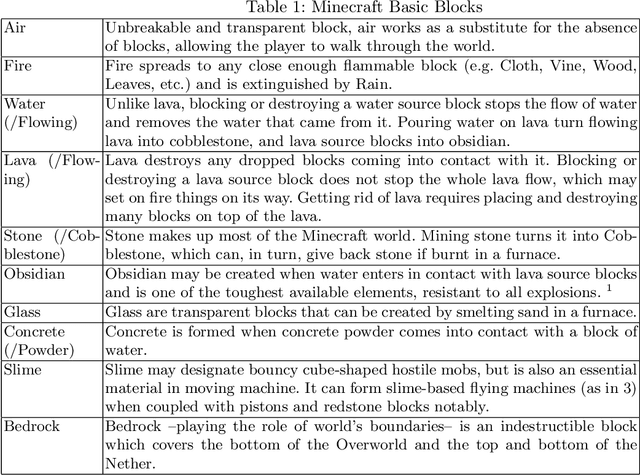

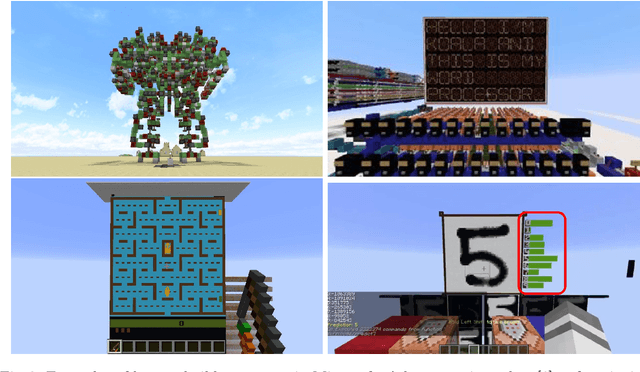

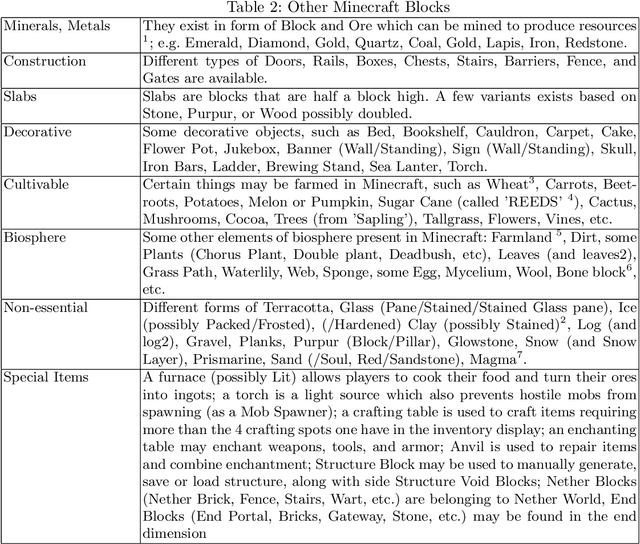

Growing 3D Artefacts and Functional Machines with Neural Cellular Automata

Mar 15, 2021

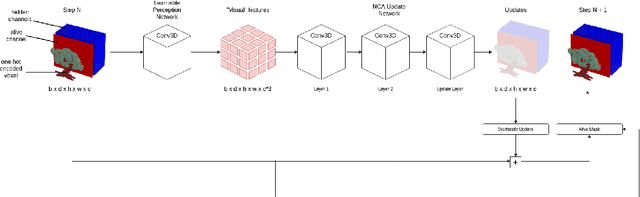

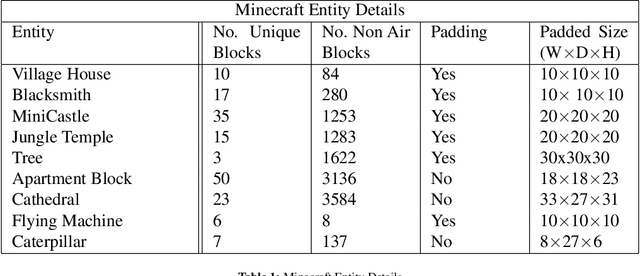

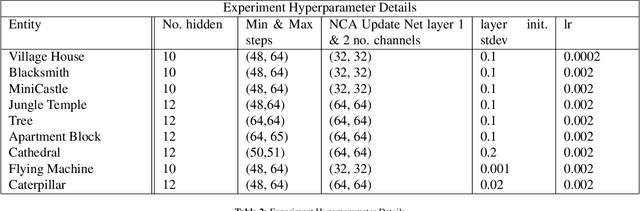

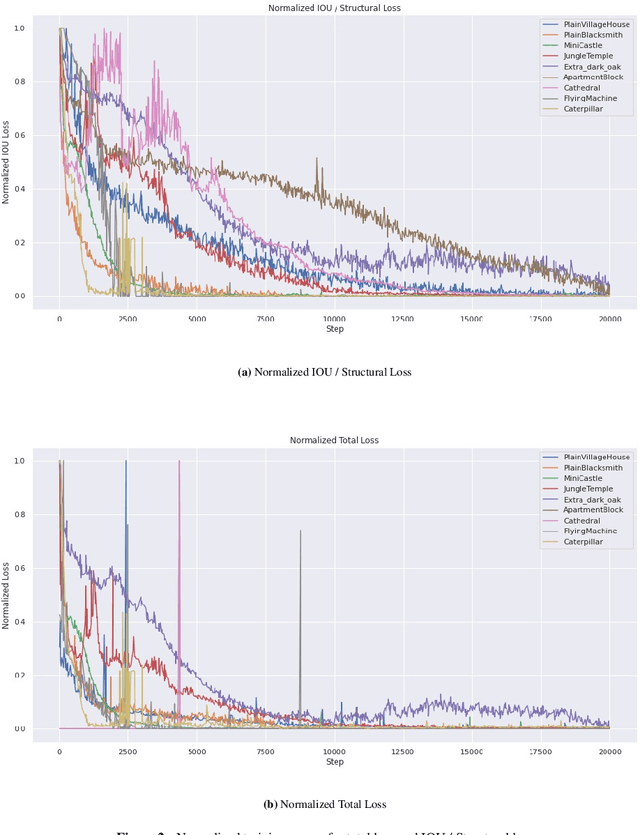

Abstract:Neural Cellular Automata (NCAs) have been proven effective in simulating morphogenetic processes, the continuous construction of complex structures from very few starting cells. Recent developments in NCAs lie in the 2D domain, namely reconstructing target images from a single pixel or infinitely growing 2D textures. In this work, we propose an extension of NCAs to 3D, utilizing 3D convolutions in the proposed neural network architecture. Minecraft is selected as the environment for our automaton since it allows the generation of both static structures and moving machines. We show that despite their simplicity, NCAs are capable of growing complex entities such as castles, apartment blocks, and trees, some of which are composed of over 3,000 blocks. Additionally, when trained for regeneration, the system is able to regrow parts of simple functional machines, significantly expanding the capabilities of simulated morphogenetic systems.

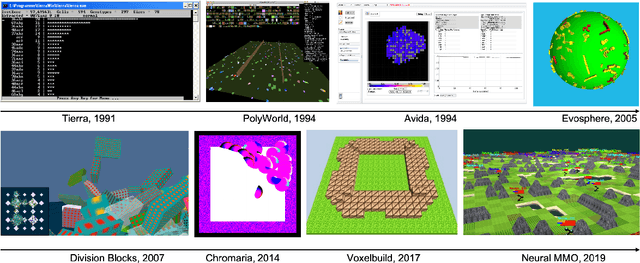

EvoCraft: A New Challenge for Open-Endedness

Dec 08, 2020

Abstract:This paper introduces EvoCraft, a framework for Minecraft designed to study open-ended algorithms. We introduce an API that provides an open-source Python interface for communicating with Minecraft to place and track blocks. In contrast to previous work in Minecraft that focused on learning to play the game, the grand challenge we pose here is to automatically search for increasingly complex artifacts in an open-ended fashion. Compared to other environments used to study open-endedness, Minecraft allows the construction of almost any kind of structure, including actuated machines with circuits and mechanical components. We present initial baseline results in evolving simple Minecraft creations through both interactive and automated evolution. While evolution succeeds when tasked to grow a structure towards a specific target, it is unable to find a solution when rewarded for creating a simple machine that moves. Thus, EvoCraft offers a challenging new environment for automated search methods (such as evolution) to find complex artifacts that we hope will spur the development of more open-ended algorithms. A Python implementation of the EvoCraft framework is available at: https://github.com/real-itu/Evocraft-py.

Testing the Genomic Bottleneck Hypothesis in Hebbian Meta-Learning

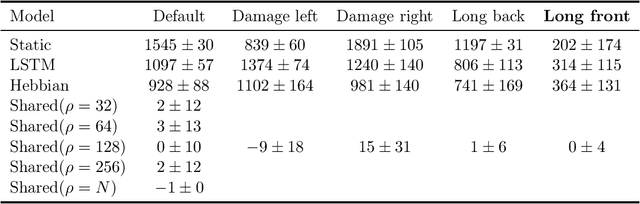

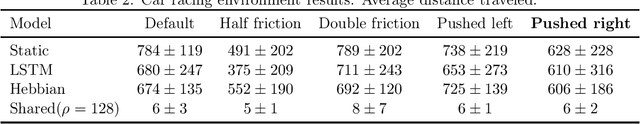

Nov 13, 2020

Abstract:Recent work has shown promising results using Hebbian meta-learning to solve hard reinforcement learning problems and adapt-to a limited degree-to changes in the environment. In previous works each synapse has its own learning rule. This allows each synapse to learn very specific learning rules and we hypothesize this limits the ability to discover generally useful Hebbian learning rules. We hypothesize that limiting the number of Hebbian learning rules through a "genomic bottleneck" can act as a regularizer leading to better generalization across changes to the environment. We test this hypothesis by decoupling the number of Hebbian learning rules from the number of synapses and systematically varying the number of Hebbian learning rules. We thoroughly explore how well these Hebbian meta-learning networks adapt to changes in their environment.

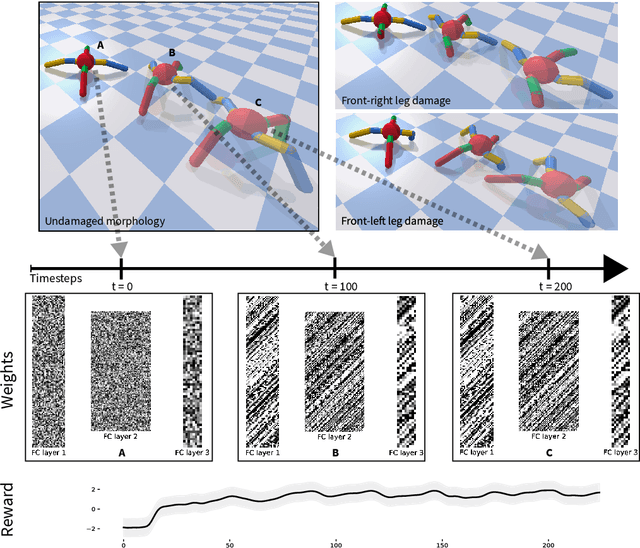

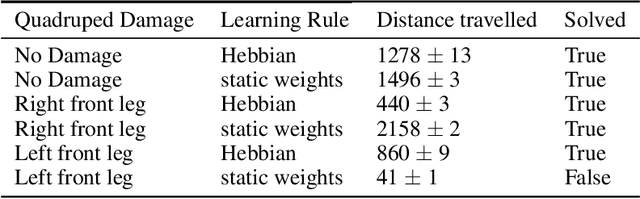

Meta-Learning through Hebbian Plasticity in Random Networks

Jul 06, 2020

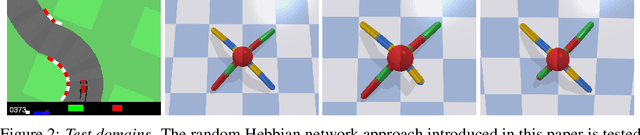

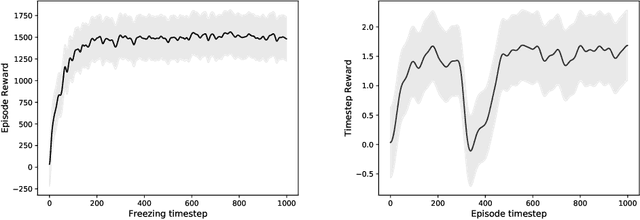

Abstract:Lifelong learning and adaptability are two defining aspects of biological agents. Modern reinforcement learning (RL) approaches have shown significant progress in solving complex tasks, however once training is concluded, the found solutions are typically static and incapable of adapting to new information or perturbations. While it is still not completely understood how biological brains learn and adapt so efficiently from experience, it is believed that synaptic plasticity plays a prominent role in this process. Inspired by this biological mechanism, we propose a search method that, instead of optimizing the weight parameters of neural networks directly, only searches for synapse-specific Hebbian learning rules that allow the network to continuously self-organize its weights during the lifetime of the agent. We demonstrate our approach on several reinforcement learning tasks with different sensory modalities and more than 450K trainable plasticity parameters. We find that starting from completely random weights, the discovered Hebbian rules enable an agent to navigate a dynamical 2D-pixel environment; likewise they allow a simulated 3D quadrupedal robot to learn how to walk while adapting to different morphological damage in the absence of any explicit reward or error signal.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge