Ehsan Nekouei

Survey of Distributed Algorithms for Resource Allocation over Multi-Agent Systems

Jan 28, 2024

Abstract: Resource allocation and scheduling in multi-agent systems present challenges due to complex interactions and decentralization. This survey paper provides a comprehensive analysis of distributed algorithms for addressing the distributed resource allocation (DRA) problem over multi-agent systems. It covers a significant area of research at the intersection of optimization, multi-agent systems, and distributed consensus-based computing. The paper begins by presenting a mathematical formulation of the DRA problem, establishing a solid foundation for further exploration. Real-world applications of DRA in various domains are examined to underscore the importance of efficient resource allocation, and relevant distributed optimization formulations are presented. The survey then delves into existing solutions for DRA, encompassing linear, nonlinear, primal-based, and dual-formulation-based approaches. Furthermore, this paper evaluates the features and properties of DRA algorithms, addressing key aspects such as feasibility, convergence rate, and network reliability. The analysis of mathematical foundations, diverse applications, existing solutions, and algorithmic properties contributes to a broader comprehension of the challenges and potential solutions for this domain.
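As an illustration of the kind of problem the survey addresses, the sketch below assumes one common DRA form that the abstract itself does not spell out: minimize the sum of local costs f_i(x_i) subject to the coupling constraint that the allocations x_i add up to a fixed total. The quadratic costs, the ring network, the step size, and the function name `laplacian_gradient_dra` are all assumptions made for this example, and the Laplacian-gradient iteration is one well-known primal method rather than the survey's own algorithm.

```python
import numpy as np

def laplacian_gradient_dra(a, c, x0, adjacency, step=0.05, iters=2000):
    """Laplacian-gradient iteration for the assumed problem
        min sum_i 0.5 * a_i * (x_i - c_i)^2   s.t.   sum_i x_i = sum_i x0_i.
    Each agent only uses the gradients of its graph neighbors."""
    a = np.asarray(a, dtype=float)
    c = np.asarray(c, dtype=float)
    x = np.array(x0, dtype=float)
    A = np.asarray(adjacency, dtype=float)
    L = np.diag(A.sum(axis=1)) - A           # graph Laplacian (symmetric A)
    for _ in range(iters):
        grad = a * (x - c)                    # local gradients f_i'(x_i)
        # Agent i moves along sum_j A_ij * (grad_j - grad_i); because the
        # columns of L sum to zero, the total allocation never changes.
        x = x - step * (L @ grad)
    return x

# Hypothetical 4-agent ring network with hand-picked cost parameters.
a = np.array([1.0, 2.0, 3.0, 1.0])
c = np.array([1.0, 2.0, 3.0, 4.0])
x0 = np.full(4, 2.5)                          # feasible start: total = 10
ring = np.array([[0, 1, 0, 1],
                 [1, 0, 1, 0],
                 [0, 1, 0, 1],
                 [1, 0, 1, 0]])
x_opt = laplacian_gradient_dra(a, c, x0, ring)
```

Under these assumptions the iterates stay feasible at every step, and at convergence all local gradients agree, which is the optimality condition for this equality-constrained problem.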

Memory-based Controllers for Efficient Data-driven Control of Soft Robots

Sep 19, 2023

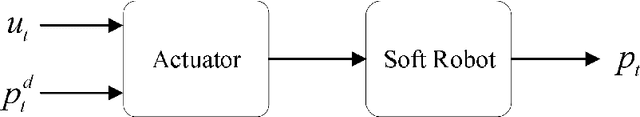

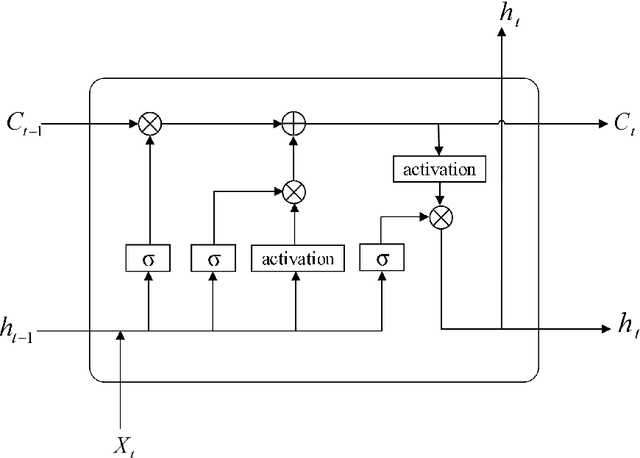

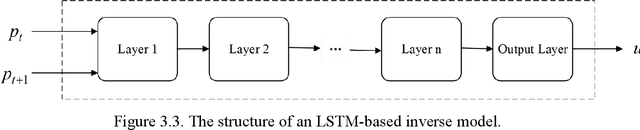

Abstract: Controller design for soft robots is challenging due to the nonlinear deformation and high degrees of freedom of flexible materials. The data-driven approach is a promising solution to the controller design problem for soft robots. However, existing data-driven controller design methods for soft robots suffer from two drawbacks: (i) they require excessively long training times, and (ii) they may result in inefficient controllers. This paper addresses these issues by developing two memory-based controllers for soft robots that can be trained in a data-driven fashion: the finite memory controller (FMC) approach and the long short-term memory (LSTM) based approach. An FMC stores the tracking errors at different time instances and computes the actuation signal as a weighted sum of the stored tracking errors. We develop three reinforcement learning (RL) algorithms for computing the optimal weights of an FMC using the Q-learning, soft actor-critic, and deep deterministic policy gradient (DDPG) methods. An LSTM-based controller is composed of an LSTM network whose inputs are the robot's desired configuration and current configuration. The LSTM network computes the actuation signal required for the soft robot to follow the desired configuration. We study the performance of the proposed approaches in controlling a soft finger, using the existing RL-based controllers and proportional-integral-derivative (PID) controllers as benchmarks. Our numerical results show that the training time of the proposed memory-based controllers is significantly shorter than that of the classical RL-based controllers. Moreover, the proposed controllers achieve a smaller tracking error than the classical RL algorithms and the PID controller.
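The FMC description above (store recent tracking errors, output their weighted sum) can be sketched as follows. This is a minimal illustration under stated assumptions: the tracking error is a scalar, the memory length equals the number of weights, and the weights are hand-picked placeholders, whereas the paper learns them with RL. The class name `FiniteMemoryController` and its interface are hypothetical and are not taken from the paper.

```python
from collections import deque
import numpy as np

class FiniteMemoryController:
    """Hypothetical finite memory controller: the actuation signal is a
    weighted sum of the most recent tracking errors."""

    def __init__(self, weights):
        self.weights = np.asarray(weights, dtype=float)
        n = len(self.weights)
        # Fixed-length memory, newest error first, initialized to zero.
        self.errors = deque([0.0] * n, maxlen=n)

    def step(self, desired, measured):
        # Store the newest error; the oldest one drops off the right end.
        self.errors.appendleft(desired - measured)
        # weights[0] multiplies the newest error, weights[-1] the oldest.
        return float(self.weights @ np.array(self.errors))

# Placeholder weights (assumed, not learned) and a short tracking sequence.
ctrl = FiniteMemoryController([0.5, 0.3, 0.2])
u1 = ctrl.step(desired=1.0, measured=0.0)   # errors: [1.0, 0.0, 0.0]
u2 = ctrl.step(desired=1.0, measured=0.5)   # errors: [0.5, 1.0, 0.0]
u3 = ctrl.step(desired=1.0, measured=0.8)   # errors: [0.2, 0.5, 1.0]
```

In a data-driven setting, the weight vector would be the quantity tuned by the learning algorithm, while the controller structure itself stays fixed.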
