Edmund Koch

Non-rigid Point Cloud Registration for Middle Ear Diagnostics with Endoscopic Optical Coherence Tomography

Apr 26, 2023

Abstract: Purpose: Middle ear infection is the most prevalent inflammatory disease, especially among the pediatric population. Current diagnostic methods are subjective and depend on visual cues from an otoscope, which is limited for otologists to identify pathology. To address this shortcoming, endoscopic optical coherence tomography (OCT) provides both morphological and functional in-vivo measurements of the middle ear. However, due to the shadow of prior structures, interpretation of OCT images is challenging and time-consuming. To facilitate fast diagnosis and measurement, improvement in the readability of OCT data is achieved by merging morphological knowledge from ex-vivo middle ear models with OCT volumetric data, so that OCT applications can be further promoted in daily clinical settings. Methods: We propose C2P-Net: a two-staged non-rigid registration pipeline for complete to partial point clouds, which are sampled from ex-vivo and in-vivo OCT models, respectively. To overcome the lack of labeled training data, a fast and effective generation pipeline in Blender3D is designed to simulate middle ear shapes and extract in-vivo noisy and partial point clouds. Results: We evaluate the performance of C2P-Net through experiments on both synthetic and real OCT datasets. The results demonstrate that C2P-Net is generalized to unseen middle ear point clouds and capable of handling realistic noise and incompleteness in synthetic and real OCT data. Conclusion: In this work, we aim to enable diagnosis of middle ear structures with the assistance of OCT images. We propose C2P-Net: a two-staged non-rigid registration pipeline for point clouds to support the interpretation of in-vivo noisy and partial OCT images for the first time. Code is available at: https://gitlab.com/nct\_tso\_public/c2p-net.

Structural Similarity based Anatomical and Functional Brain Imaging Fusion

Aug 14, 2019

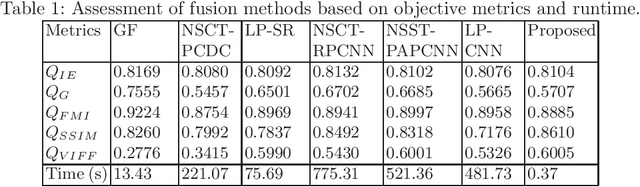

Abstract: Multimodal medical image fusion helps in combining contrasting features from two or more input imaging modalities to represent fused information in a single image. One of the pivotal clinical applications of medical image fusion is the merging of anatomical and functional modalities for fast diagnosis of malignant tissues. In this paper, we present a novel end-to-end unsupervised learning-based Convolutional Neural Network (CNN) for fusing the high and low frequency components of MRI-PET grayscale image pairs, publicly available at ADNI, by exploiting Structural Similarity Index (SSIM) as the loss function during training. We then apply color coding for the visualization of the fused image by quantifying the contribution of each input image in terms of the partial derivatives of the fused image. We find that our fusion and visualization approach results in better visual perception of the fused image, while also comparing favorably to previous methods when applying various quantitative assessment metrics.
