Duckyu Choi

CLOi-Mapper: Consistent, Lightweight, Robust, and Incremental Mapper With Embedded Systems for Commercial Robot Services

Jun 28, 2024

Abstract: In commercial autonomous service robots with several form factors, simultaneous localization and mapping (SLAM) is an essential technology for providing proper services such as cleaning and guidance. Such robots require SLAM algorithms suitable for specific applications and environments. Hence, several SLAM frameworks have been proposed to address various requirements in the past decade. However, we have encountered challenges in implementing recent innovative frameworks when handling service robots with low-end processors and insufficient sensor data, such as low-resolution 2D LiDAR sensors. Specifically, regarding commercial robots, consistent performance in different hardware configurations and environments is more crucial than the performance dedicated to specific sensors or environments. Therefore, we propose a) a multi-stage approach for global pose estimation in embedded systems; b) a graph generation method with zero constraints for synchronized sensors; and c) a robust and memory-efficient method for long-term pose-graph optimization. As verified in in-home and large-scale indoor environments, the proposed method yields consistent global pose estimation for services in commercial fields. Furthermore, the proposed method exhibits potential commercial viability considering the consistent performance verified via mass production and long-term (> 5 years) operation.

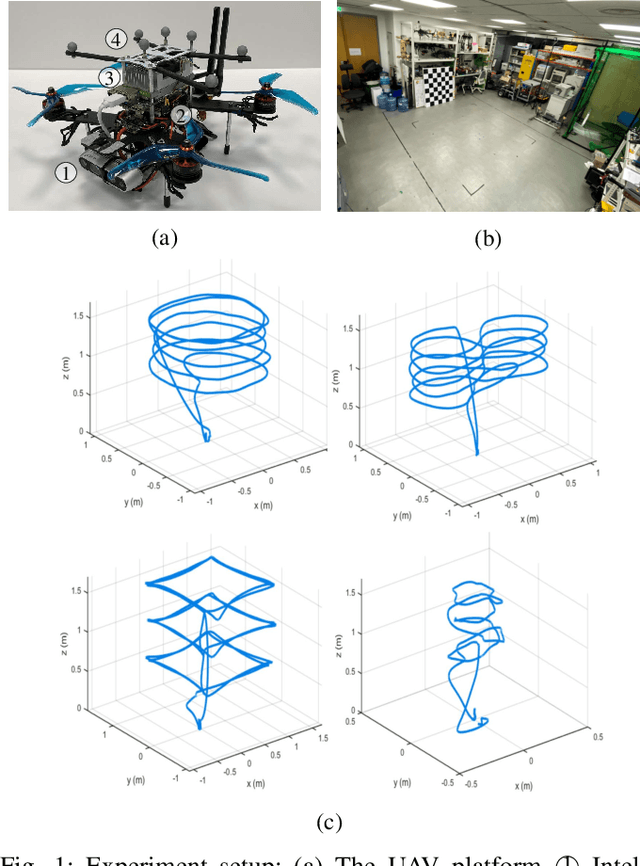

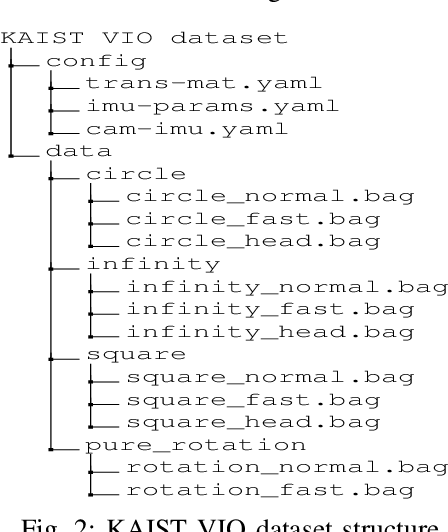

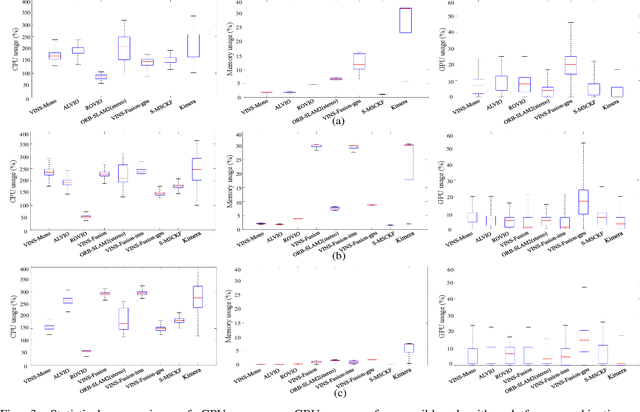

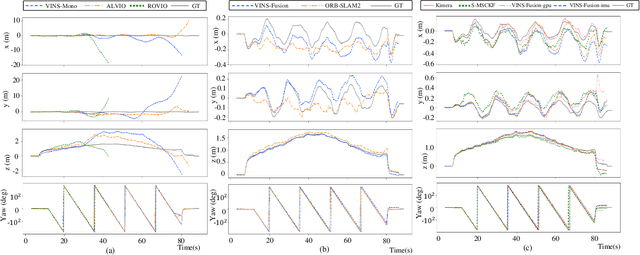

Run Your Visual-Inertial Odometry on NVIDIA Jetson: Benchmark Tests on a Micro Aerial Vehicle

Mar 02, 2021

Abstract: This paper presents benchmark tests of various visual(-inertial) odometry algorithms on NVIDIA Jetson platforms. The compared algorithms include mono and stereo methods, covering Visual Odometry (VO) and Visual-Inertial Odometry (VIO): VINS-Mono, VINS-Fusion, Kimera, ALVIO, Stereo-MSCKF, ORB-SLAM2 stereo, and ROVIO. As these methods are mainly used for unmanned aerial vehicles (UAVs), they must perform well in situations where the size and weight of the processing board are limited. Jetson boards released by NVIDIA satisfy these constraints as they have a sufficiently powerful central processing unit (CPU) and graphics processing unit (GPU) for image processing. However, in existing studies, the performance of Jetson boards as a processing platform for executing VO/VIO has not been compared extensively in terms of the usage of computing resources and accuracy. Therefore, this study compares representative VO/VIO algorithms on several NVIDIA Jetson platforms, namely NVIDIA Jetson TX2, Xavier NX, and AGX Xavier, and introduces a novel dataset, the 'KAIST VIO dataset', for UAVs. The dataset includes pure rotations and several geometric trajectories that are harsh on visual(-inertial) state estimation. The evaluation is performed in terms of the accuracy of estimated odometry, CPU usage, and memory usage on various Jetson boards, algorithms, and trajectories. We present the results of the comprehensive benchmark test and release the dataset for computer vision and robotics applications.

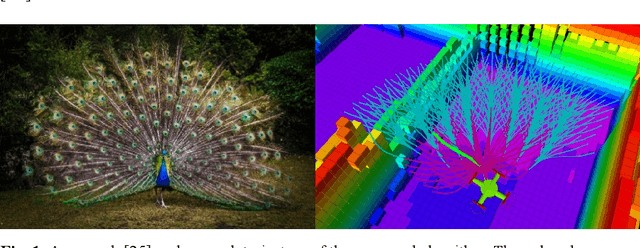

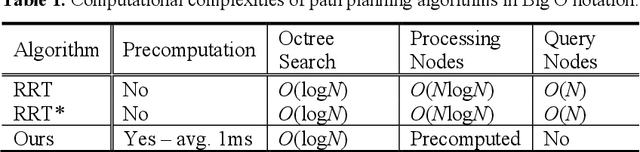

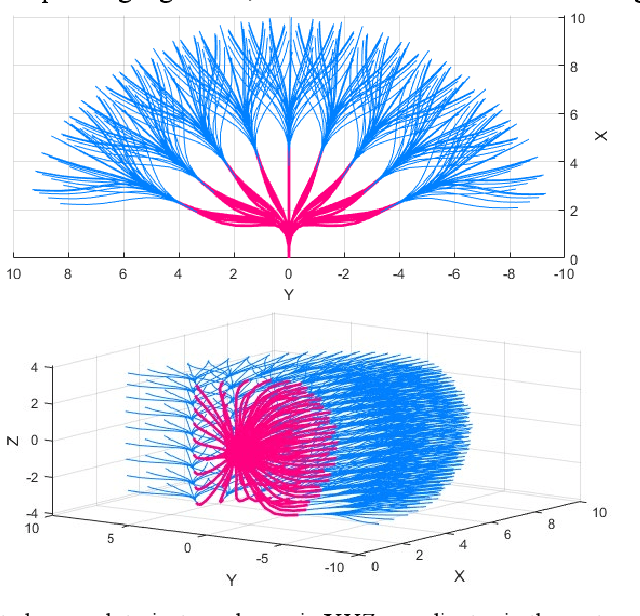

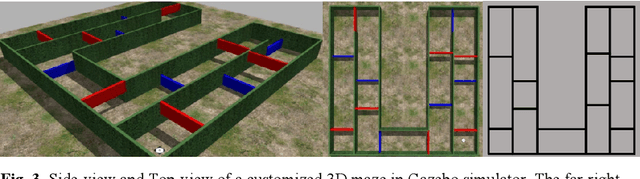

Peacock Exploration: A Lightweight Exploration for UAV using Control-Efficient Trajectory

Dec 29, 2020

Abstract: Unmanned Aerial Vehicles (UAVs) have received much attention in recent years due to their wide range of applications, such as exploring an unknown environment to acquire a 3D map without prior knowledge of it. Existing exploration methods have been largely challenged by computationally heavy probabilistic path planning. Likewise, neither kinodynamic constraints nor sensors appropriate to the payload limits of UAVs were considered. In this paper, to solve those issues and to account for the limited payload and computational resources of UAVs, we propose "Peacock Exploration": a lightweight exploration method for UAVs using precomputed minimum snap trajectories that look like a peacock's tail. Using the widely known, control-efficient minimum snap trajectories and OctoMap, a UAV equipped with an RGB-D camera can explore unknown 3D environments without any prior knowledge or human guidance with only O(log N) computational complexity. The method also adopts the receding horizon approach and simple, heuristic scoring criteria. The proposed algorithm's performance is demonstrated by exploring a challenging 3D maze environment and compared with a state-of-the-art algorithm.
