Donald A. Adjeroh

Rethinking Self-Supervised Learning Within the Framework of Partial Information Decomposition

Dec 03, 2024

Abstract: Self-supervised learning (SSL) has demonstrated its effectiveness in feature learning from unlabeled data. Regarding this success, there has been some debate about the role that mutual information plays within the SSL framework. Some works argue for increasing the mutual information between representations of augmented views. Others suggest decreasing it and increasing task-relevant information instead. We examine this debate and propose to revisit the core idea of SSL within the framework of partial information decomposition (PID). With SSL under PID, we propose replacing traditional mutual information with the more general concept of joint mutual information to resolve the debate. Our investigation of SSL instantiated within the PID framework leads us to upgrade existing pipelines by accounting for the PID components in SSL models for improved representation learning. Accordingly, we propose a general pipeline that can be applied to improve existing baselines. Our pipeline focuses on extracting the unique information component under PID to build upon lower-level supervision for generic feature learning, and on developing higher-level supervisory signals for task-related feature learning. In essence, this can be interpreted as a joint use of local and global clustering. Experiments on four baselines and four datasets show the effectiveness and generality of our approach in improving existing SSL frameworks.
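To make the PID vocabulary concrete, here is a minimal sketch that splits the joint mutual information I(X1, X2; Y) of a small discrete distribution into redundant, unique, and synergistic parts. It assumes the minimum-mutual-information (MMI) redundancy, Red = min(I(X1;Y), I(X2;Y)), which is only one of several PID definitions and not necessarily the estimator used in this work; the function names are hypothetical.

```python
from collections import defaultdict
from math import log2


def mutual_information(joint, a_idx, b_idx):
    """I(A;B) in bits for a joint pmf {outcome_tuple: prob}.

    a_idx / b_idx are tuples of positions selecting the variables in A and B.
    """
    p_ab, p_a, p_b = defaultdict(float), defaultdict(float), defaultdict(float)
    for outcome, p in joint.items():
        a = tuple(outcome[i] for i in a_idx)
        b = tuple(outcome[i] for i in b_idx)
        p_ab[(a, b)] += p
        p_a[a] += p
        p_b[b] += p
    return sum(p * log2(p / (p_a[a] * p_b[b]))
               for (a, b), p in p_ab.items() if p > 0)


def mmi_pid(joint):
    """Decompose I(X1, X2; Y) for outcomes (x1, x2, y).

    Assumption (hypothetical helper): MMI redundancy = min of the single-source
    MIs; other PID definitions would split the same total differently.
    """
    i1 = mutual_information(joint, (0,), (2,))
    i2 = mutual_information(joint, (1,), (2,))
    i12 = mutual_information(joint, (0, 1), (2,))
    red = min(i1, i2)
    unique1, unique2 = i1 - red, i2 - red
    syn = i12 - unique1 - unique2 - red  # whatever only the pair explains
    return {"joint": i12, "redundant": red, "unique_x1": unique1,
            "unique_x2": unique2, "synergistic": syn}


# XOR target: neither view alone tells anything about Y; together they fix it.
xor = {(a, b, a ^ b): 0.25 for a in (0, 1) for b in (0, 1)}
# Copy target: both views carry the same bit, so the information is redundant.
copy = {(0, 0, 0): 0.5, (1, 1, 1): 0.5}
print(mmi_pid(xor))
print(mmi_pid(copy))
```

In the XOR case all of the joint information is synergistic, while in the copy case it is all redundant, which mirrors the distinction the abstract draws between the components.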

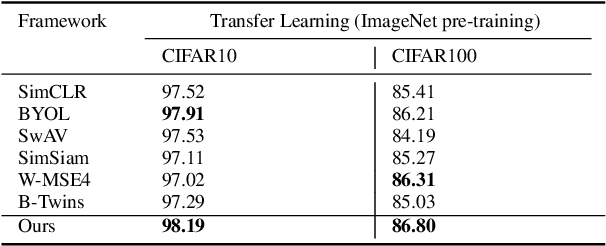

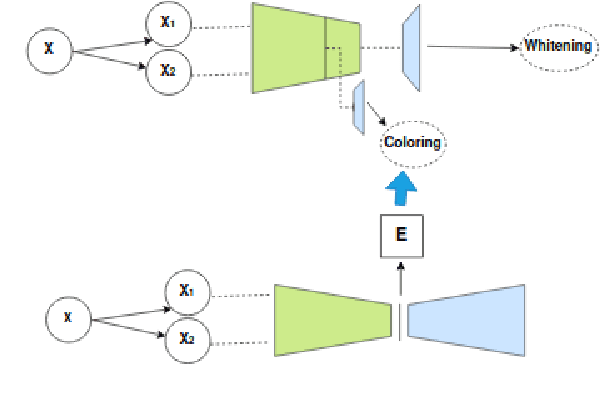

GUESS: Generative Uncertainty Ensemble for Self Supervision

Dec 03, 2024

Abstract: Self-supervised learning (SSL) frameworks consist of a pretext task and a loss function, aiming to learn useful general features from unlabeled data. The basic idea of most SSL baselines revolves around enforcing invariance to a variety of data augmentations via the loss function. However, one main issue is that inattentive or deterministic enforcement of invariance to any kind of data augmentation is generally not only inefficient but also potentially detrimental to performance on downstream tasks. In this work, we investigate the issue from the viewpoint of uncertainty in invariance representation. Uncertainty representation is fairly under-explored in the design of SSL architectures and loss functions. We incorporate uncertainty representation in both the loss function and the architecture design, aiming for more data-dependent invariance enforcement. The former takes the form of data-derived uncertainty in the SSL loss function, resulting in a generative-discriminative loss function. The latter is achieved by feeding slightly different distorted versions of samples to the ensemble, aiming to learn better and more robust representations. Specifically, building upon recent methods that use hard and soft whitening (a.k.a. redundancy reduction), we introduce a new approach, GUESS, a pseudo-whitening framework composed of controlled uncertainty injection, a new architecture, and a new loss function. We include detailed results and ablation analysis establishing GUESS as a new baseline.
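Hard whitening, one of the starting points named above, decorrelates the embedding dimensions of a batch and scales them to unit variance. The sketch below shows generic batch ZCA whitening in NumPy; the `eps` regularizer and the function name are assumptions, and this is not the GUESS pseudo-whitening procedure itself.

```python
import numpy as np


def zca_whiten(z, eps=1e-8):
    """Whiten a batch z of shape (N, D) so its covariance is ~identity.

    Generic ZCA (hypothetical helper, not GUESS): W = U diag(1/sqrt(lam + eps)) U^T
    from the biased batch covariance; eps guards against tiny eigenvalues.
    """
    zc = z - z.mean(axis=0, keepdims=True)
    cov = zc.T @ zc / z.shape[0]
    lam, u = np.linalg.eigh(cov)               # symmetric eigendecomposition
    w = u @ np.diag(1.0 / np.sqrt(lam + eps)) @ u.T
    return zc @ w                              # W is symmetric, so zc @ W == (W @ zc.T).T


rng = np.random.default_rng(0)
mix = np.array([[2.0, 0.0, 0.0], [1.5, 0.5, 0.0], [0.3, 0.2, 1.0]])
z = rng.normal(size=(512, 3)) @ mix            # correlated toy embeddings
zw = zca_whiten(z)
print(np.round(zw.T @ zw / len(zw), 3))        # close to the 3x3 identity
```

ZCA is chosen here because, among all whitening matrices, it keeps the whitened features closest to the originals; soft whitening methods instead penalize off-diagonal covariance in the loss rather than transforming the batch.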

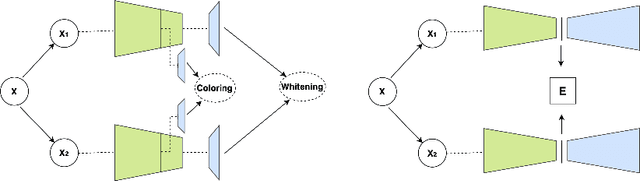

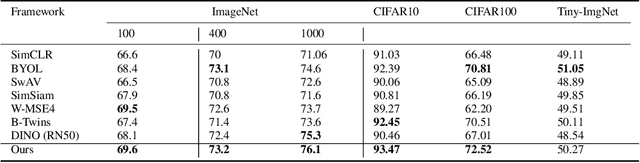

Direct Coloring for Self-Supervised Enhanced Feature Decoupling

Dec 03, 2024

Abstract: The success of self-supervised learning (SSL) has been the focus of multiple recent theoretical and empirical studies, including the role of data augmentation (in feature decoupling) as well as complete and dimensional representation collapse. While complete collapse is well studied and addressed, dimensional collapse has only recently gained attention and has been addressed mostly using variants of redundancy reduction (a.k.a. whitening) techniques. In this paper, we further explore a complementary approach to whitening via feature decoupling for improved representation learning while avoiding representation collapse. In particular, we perform feature decoupling by early promotion of useful features via careful feature coloring. The coloring technique is developed based on a Bayesian prior of the augmented data, which is inherently encoded for feature decoupling. We show that our proposed framework is complementary to state-of-the-art techniques, while outperforming both contrastive and recent non-contrastive methods. We also study the different effects of the coloring approach to formulate it as a general complementary technique alongside other baselines.
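The abstract builds its coloring on a Bayesian prior of the augmented data, which is not specified here, so the sketch below only illustrates the generic coloring transform that inverts whitening: features with zero mean and identity covariance, multiplied by a Cholesky factor of a chosen target covariance, acquire that covariance. The target matrix and the function name are hypothetical placeholders.

```python
import numpy as np


def color(z_white, target_cov, mean=None):
    """Color whitened features z_white (N, D) to have covariance target_cov.

    Generic coloring (hypothetical helper, not the paper's prior-based method).
    Assumes z_white has zero mean and identity (biased) covariance; with
    L = cholesky(target_cov), cov(z_white @ L.T) = L I L^T = target_cov.
    """
    L = np.linalg.cholesky(target_cov)         # lower-triangular, target = L @ L.T
    out = z_white @ L.T
    return out if mean is None else out + mean


# Four samples whose two columns are orthogonal, zero-mean and unit-variance.
z_white = np.array([[1.0, 1.0], [-1.0, 1.0], [1.0, -1.0], [-1.0, -1.0]])
target = np.array([[2.0, 1.0], [1.0, 2.0]])    # hypothetical target covariance
colored = color(z_white, target)
print(colored.T @ colored / len(colored))      # equals target up to rounding
```

In a learned setting the target covariance would come from whatever prior the method encodes; the transform itself only guarantees that the second-order statistics match it.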

TabSeq: A Framework for Deep Learning on Tabular Data via Sequential Ordering

Oct 17, 2024

Abstract: Effective analysis of tabular data still poses a significant problem in deep learning, mainly because features in tabular datasets are often heterogeneous and have different levels of relevance. This work introduces TabSeq, a novel framework for the sequential ordering of features, addressing the need to optimize the learning process. Features are not always equally informative, and for certain deep learning models, their random arrangement can hinder the model's learning capacity. Finding the optimal order for such features could improve the learning process of deep learning models. The novel feature ordering technique we provide in this work is based on clustering and incorporates both local ordering and global ordering. It is designed to be used with a multi-head attention mechanism in a denoising autoencoder network. Our framework uses clustering to align comparable features and improve data organization. Multi-head attention focuses on essential characteristics, whereas the denoising autoencoder highlights important aspects by reconstructing from distorted inputs. This method improves the capability to learn from tabular data while lowering redundancy. Our research, which demonstrates improved performance through appropriate feature rearrangement on a raw antibody microarray dataset and two other real-world biomedical datasets, validates the impact of feature ordering. These results demonstrate that feature ordering can be a viable approach to improved deep learning on tabular data.
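As a rough illustration of clustering-based ordering at a global and a local level, the sketch below groups features whose absolute Pearson correlation exceeds a threshold, orders clusters by their most relevant member (global ordering), and orders features inside each cluster by relevance (local ordering). The greedy clustering rule, the threshold `tau`, and the use of absolute correlation with the target as "relevance" are all assumptions; TabSeq's actual clustering and ordering criteria are not given in the abstract.

```python
import numpy as np


def order_features(X, y, tau=0.8):
    """Return a column permutation for X (N, F) using a two-level ordering.

    Hypothetical helper, not TabSeq's algorithm:
    1. Greedy clustering: a feature joins the first cluster whose seed feature
       has |corr| >= tau with it; otherwise it starts a new cluster.
    2. Relevance of a feature = |corr(feature, y)| (assumed criterion).
    3. Global order: clusters sorted by their most relevant member, descending.
    4. Local order: features within a cluster sorted by relevance, descending.
    """
    n_features = X.shape[1]
    corr = np.abs(np.corrcoef(X, rowvar=False))
    relevance = np.array([abs(np.corrcoef(X[:, j], y)[0, 1])
                          for j in range(n_features)])

    clusters = []  # lists of feature indices; element 0 is the cluster seed
    for j in range(n_features):
        for c in clusters:
            if corr[c[0], j] >= tau:
                c.append(j)
                break
        else:
            clusters.append([j])

    clusters.sort(key=lambda c: relevance[c].max(), reverse=True)
    return [j for c in clusters
            for j in sorted(c, key=lambda k: relevance[k], reverse=True)]


rng = np.random.default_rng(1)
signal, noise = rng.normal(size=(2, 200))
y = signal + 0.1 * rng.normal(size=200)
X = np.column_stack([noise,
                     signal + 0.05 * rng.normal(size=200),
                     noise + 0.05 * rng.normal(size=200),
                     signal])
print(order_features(X, y))  # signal columns {1, 3} come before noise columns {0, 2}
```

The resulting permutation would be applied to the columns before feeding rows, as sequences, to an attention-based model, so that correlated features sit next to each other.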

Cardiovascular Disease Risk Prediction via Social Media

Sep 28, 2023

Abstract: Researchers use Twitter and sentiment analysis to predict Cardiovascular Disease (CVD) risk. We developed a new dictionary of CVD-related keywords by analyzing emotions expressed in tweets. Tweets from eighteen US states, including the Appalachian region, were collected. Using the VADER model for sentiment analysis, users were classified as potentially at CVD risk. Machine Learning (ML) models were employed to classify individuals' CVD risk and were applied to a CDC dataset with demographic information for comparison. Performance evaluation metrics such as Test Accuracy, Precision, Recall, F1 score, Matthews Correlation Coefficient (MCC), and Cohen's Kappa (CK) score were considered. Results demonstrated that analyzing the emotions in tweets surpassed the predictive power of demographic data alone, enabling the identification of individuals at potential risk of developing CVD. This research highlights the potential of Natural Language Processing (NLP) and ML techniques for using tweets to identify individuals with CVD risk, providing an alternative to traditional demographic information for public health monitoring.
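All of the evaluation metrics listed above can be derived from a binary confusion matrix. The plain-Python sketch below uses their standard definitions; the label encoding (1 = potentially at CVD risk) and the function name are assumptions, and this is not the authors' evaluation code.

```python
from math import sqrt


def binary_metrics(y_true, y_pred):
    """Accuracy, precision, recall, F1, MCC and Cohen's kappa for 0/1 labels.

    Hypothetical helper; assumes label 1 = "potentially at CVD risk".
    """
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    n = tp + tn + fp + fn

    accuracy = (tp + tn) / n
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0

    # Matthews correlation coefficient: correlation between truth and prediction.
    denom = sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    mcc = (tp * tn - fp * fn) / denom if denom else 0.0

    # Cohen's kappa: observed agreement corrected for agreement expected by chance.
    p_o = accuracy
    p_e = ((tp + fp) * (tp + fn) + (fn + tn) * (fp + tn)) / (n * n)
    kappa = (p_o - p_e) / (1 - p_e) if p_e != 1 else 0.0  # degenerate case -> 0
    return {"accuracy": accuracy, "precision": precision, "recall": recall,
            "f1": f1, "mcc": mcc, "kappa": kappa}


print(binary_metrics([1, 1, 0, 0, 1, 0], [1, 0, 0, 0, 1, 1]))
```

MCC and kappa are useful alongside accuracy here because at-risk users are likely a minority class, and both penalize a classifier that simply predicts the majority label.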

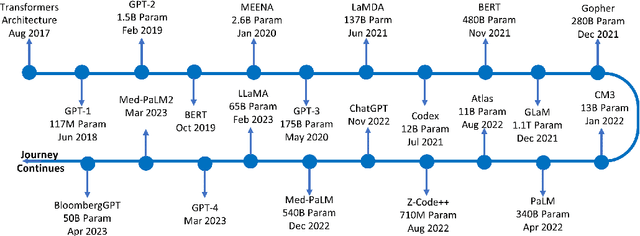

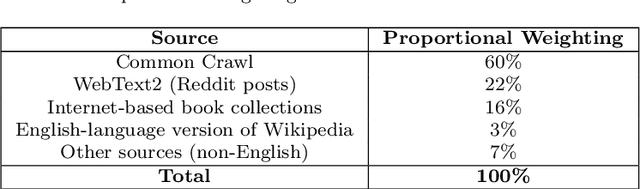

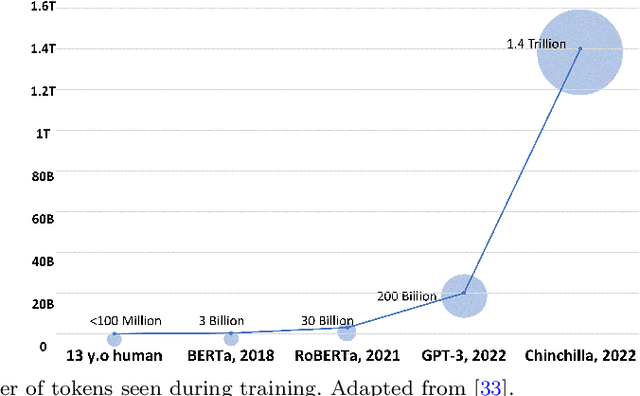

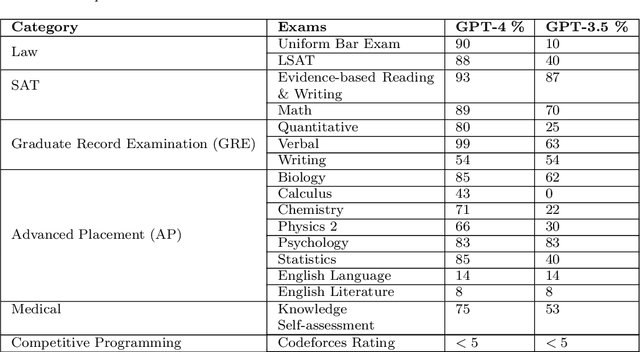

ChatGPT in the Age of Generative AI and Large Language Models: A Concise Survey

Jul 15, 2023

Abstract: ChatGPT is a large language model (LLM) created by OpenAI that has been carefully trained on a large amount of data. It has revolutionized the field of natural language processing (NLP) and has pushed the boundaries of LLM capabilities. ChatGPT has played a pivotal role in enabling large-scale public interaction with generative artificial intelligence (GAI). It has also sparked research interest in developing similar technologies and investigating their applications and implications. In this paper, our primary goal is to provide a concise survey of the current lines of research on ChatGPT and its evolution. We consider both the glass box and black box views of ChatGPT, encompassing the components and foundational elements of the technology, as well as its applications, impacts, and implications. The glass box approach focuses on understanding the inner workings of the technology, while the black box approach treats it as a complex system and thus examines its inputs, outputs, and effects. This paves the way for a comprehensive exploration of the technology and provides a road map for further research and experimentation. We also lay out essential foundational literature on LLMs and GAI in general and their connection with ChatGPT. This overview sheds light on existing and missing research lines in the emerging field of LLMs, benefiting both public users and developers. Furthermore, the paper examines a broad spectrum of applications and significant concerns in fields such as education, research, healthcare, and finance.

More Synergy, Less Redundancy: Exploiting Joint Mutual Information for Self-Supervised Learning

Jul 02, 2023

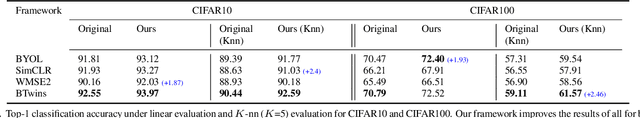

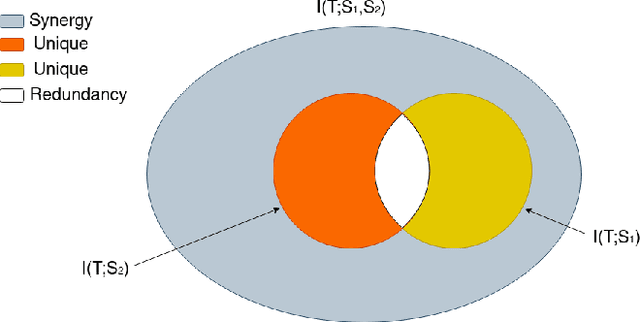

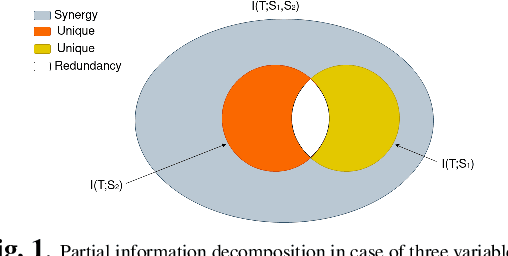

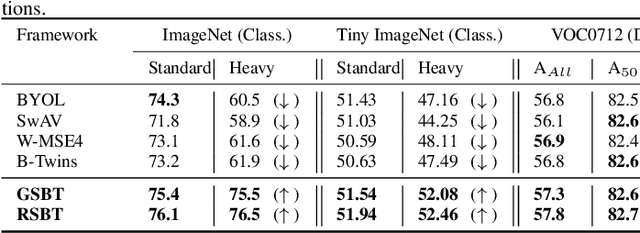

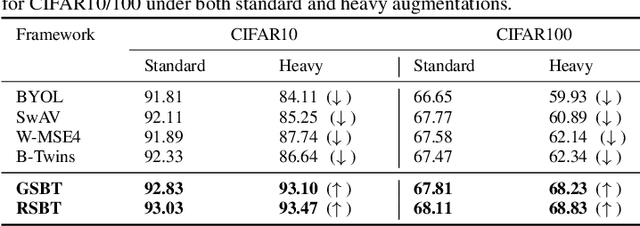

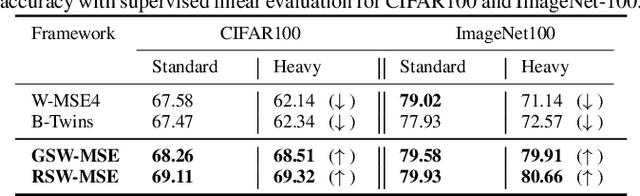

Abstract: Self-supervised learning (SSL) is now a serious competitor to supervised learning, even though it does not require data annotation. Several baselines have attempted to make SSL models exploit information about the data distribution and become less dependent on the augmentation effect. However, there is no clear consensus on whether maximizing or minimizing the mutual information between representations of augmented views practically contributes to improvement or degradation in the performance of SSL models. In this fundamental work, we investigate the role of mutual information in SSL and reformulate the SSL problem from a new perspective on mutual information. To this end, we consider joint mutual information from the perspective of partial information decomposition (PID) as a key step toward reliable multivariate information measurement. PID enables us to decompose joint mutual information into three important components, namely unique information, redundant information, and synergistic information. Our framework aims to minimize the redundant information between views and the desired target representation while simultaneously maximizing the synergistic information. Our experiments lead to a re-calibration of two redundancy reduction baselines and a proposal for a new SSL training protocol. Extensive experimental results on multiple datasets and two downstream tasks show the effectiveness of this framework.
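The abstract does not name the two redundancy reduction baselines it re-calibrates, so Barlow Twins is used below only as a representative example. Its loss standardizes the embeddings of two views, forms their cross-correlation matrix, pushes the diagonal toward 1 (invariance) and the off-diagonal toward 0 (redundancy reduction). The NumPy sketch assumes the commonly cited weight `lam=5e-3`; the function name is hypothetical.

```python
import numpy as np


def barlow_twins_loss(z1, z2, lam=5e-3, eps=1e-9):
    """Barlow Twins-style loss on two (N, D) embedding batches of the same samples.

    Hypothetical helper used as a representative redundancy-reduction baseline;
    lam weights the off-diagonal (redundancy) term.
    """
    n = z1.shape[0]
    z1n = (z1 - z1.mean(0)) / (z1.std(0) + eps)    # standardize each dimension
    z2n = (z2 - z2.mean(0)) / (z2.std(0) + eps)
    c = z1n.T @ z2n / n                            # (D, D) cross-correlation matrix
    on_diag = np.sum((np.diag(c) - 1.0) ** 2)      # invariance term
    off_diag = np.sum(c ** 2) - np.sum(np.diag(c) ** 2)  # redundancy term
    return on_diag + lam * off_diag


# Identical, already decorrelated views: both terms vanish.
z = np.array([[1.0, 1.0], [-1.0, 1.0], [1.0, -1.0], [-1.0, -1.0]])
print(barlow_twins_loss(z, z))
# Second view with a duplicated dimension: its second column no longer matches
# z[:, 1], and its two columns are fully redundant, so the loss grows.
z_dup = np.column_stack([z[:, 0], z[:, 0]])
print(barlow_twins_loss(z, z_dup))
```

In PID terms, the off-diagonal penalty targets shared (redundant) content across embedding dimensions; the abstract's framework additionally asks for synergy to be maximized, which this baseline loss does not model.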

A Robust Likelihood Model for Novelty Detection

Jun 06, 2023

Abstract: Current approaches to novelty or anomaly detection are based on deep neural networks. Despite their effectiveness, neural networks are also vulnerable to imperceptible deformations of the input data. This is a serious issue in critical applications, or when data alterations are generated by an adversarial attack. While this known problem has been studied in recent years for supervised learning, novelty detection has received very limited attention. Indeed, in this latter setting learning is typically unsupervised because outlier data is not available during training, and new approaches for this case need to be investigated. We propose a new prior that aims at learning a robust likelihood for the novelty test, as a defense against attacks. We also integrate the same prior with a state-of-the-art novelty detection approach. Because of the geometric properties of that approach, the resulting robust training is computationally very efficient. An initial evaluation of the method indicates that it is effective at improving performance with respect to the standard models, both in the absence and in the presence of attacks.
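For context, a likelihood-based novelty test fits a density model to normal training data and flags inputs whose likelihood falls below a threshold. The sketch below uses a multivariate Gaussian and a training-set percentile threshold, both assumptions; it does not include the robust prior proposed in this work, and the class name is hypothetical.

```python
import numpy as np


class GaussianNoveltyDetector:
    """Flag inputs whose Gaussian log-likelihood is below a training percentile.

    Hypothetical baseline: no robust prior, no adversarial defense.
    """

    def __init__(self, percentile=5.0, reg=1e-6):
        self.percentile = percentile   # % of training points allowed below threshold
        self.reg = reg                 # ridge added to covariance for stability

    def fit(self, X):
        self.mu = X.mean(axis=0)
        cov = np.cov(X, rowvar=False) + self.reg * np.eye(X.shape[1])
        self.prec = np.linalg.inv(cov)
        _, logdet = np.linalg.slogdet(cov)
        self.log_norm = -0.5 * (X.shape[1] * np.log(2 * np.pi) + logdet)
        self.threshold = np.percentile(self.log_likelihood(X), self.percentile)
        return self

    def log_likelihood(self, X):
        d = X - self.mu
        # Mahalanobis quadratic form d_i^T P d_i for every row i.
        return self.log_norm - 0.5 * np.einsum("ij,jk,ik->i", d, self.prec, d)

    def is_novel(self, X):
        return self.log_likelihood(X) < self.threshold


rng = np.random.default_rng(0)
det = GaussianNoveltyDetector().fit(rng.normal(size=(1000, 2)))
print(det.is_novel(np.array([[0.0, 0.0], [8.0, 8.0]])))  # origin typical, (8, 8) flagged
```

An adversary who nudges an outlier toward high-likelihood regions can defeat such a test, which is the vulnerability the abstract's robust prior is meant to address.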

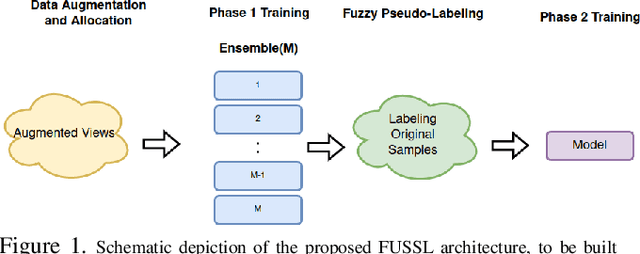

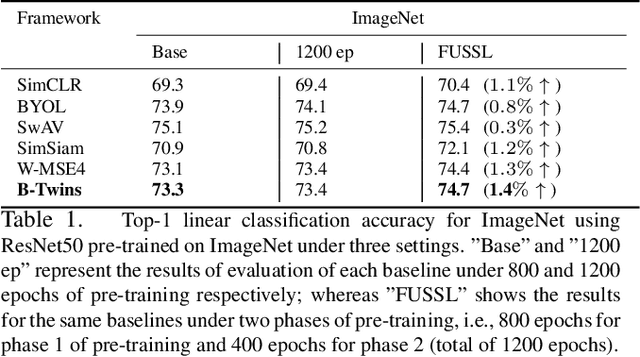

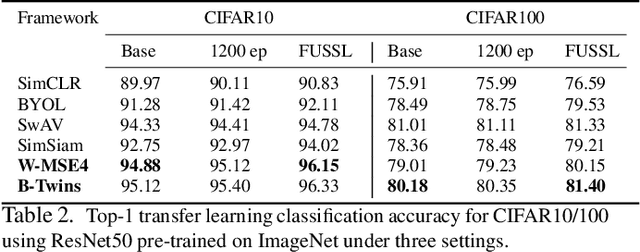

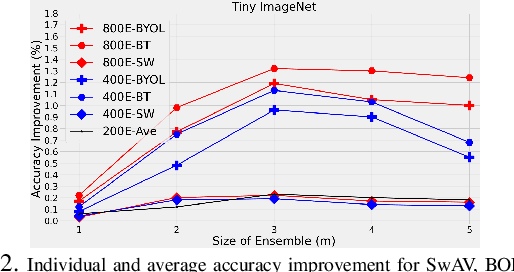

FUSSL: Fuzzy Uncertain Self Supervised Learning

Oct 28, 2022

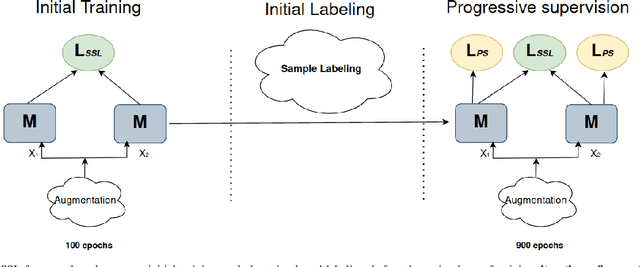

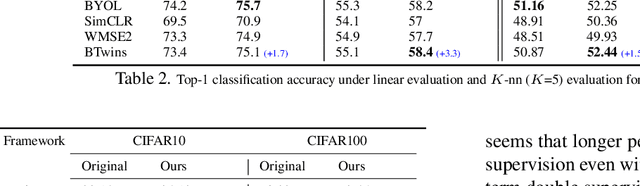

Abstract: Self-supervised learning (SSL) has become a very successful technique to harness the power of unlabeled data, with no annotation effort. A number of approaches are being developed with the goal of outperforming supervised alternatives, and they have been relatively successful. One main issue in SSL is the robustness of the approaches under different settings. In this paper, for the first time, we recognize the fundamental limits of SSL that come from the use of a single supervisory signal. To address this limitation, we leverage the power of uncertainty representation to devise a robust and general standard hierarchical learning/training protocol for any SSL baseline, regardless of its assumptions and approach. Essentially, using the information bottleneck principle, we decompose feature learning into a two-stage training procedure, each stage with a distinct supervision signal. This double supervision approach is captured in two key steps: 1) invariance enforcement to data augmentation, and 2) fuzzy pseudo labeling (both hard and soft annotation). This simple yet effective protocol, which enables cross-class/cluster feature learning, is instantiated by first training an ensemble of models through invariance enforcement to data augmentation, and then assigning fuzzy labels to the original samples for the second training phase. We consider multiple alternative scenarios with double supervision and evaluate the effectiveness of our approach on recent baselines covering four different SSL paradigms: geometrical, contrastive, non-contrastive, and hard/soft whitening (redundancy reduction) baselines. Extensive experiments under multiple settings show that the proposed training protocol consistently improves the performance of these baselines, independent of their respective underlying principles.
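The second phase assigns fuzzy labels using the ensemble trained in the first phase. One plausible reading, sketched below purely as an assumption, averages the members' soft cluster assignments into a soft label and takes its argmax as the hard label; the averaging rule, the confidence score, and the function name are hypothetical and not taken from the paper.

```python
import numpy as np


def fuzzy_pseudo_labels(member_probs):
    """Combine ensemble soft assignments into soft and hard pseudo-labels.

    Hypothetical helper (assumed averaging rule, not the paper's procedure).
    member_probs: array (M, N, K) of M models' probabilities over K clusters
    for N samples. Returns (soft (N, K), hard (N,), confidence (N,)).
    """
    soft = member_probs.mean(axis=0)
    soft = soft / soft.sum(axis=1, keepdims=True)   # guard against rounding drift
    hard = soft.argmax(axis=1)                      # hard annotation
    confidence = soft.max(axis=1)                   # how peaked the soft label is
    return soft, hard, confidence


# Two ensemble members, two samples, three clusters.
probs = np.array([
    [[0.7, 0.2, 0.1], [0.2, 0.5, 0.3]],
    [[0.5, 0.4, 0.1], [0.1, 0.3, 0.6]],
])
soft, hard, confidence = fuzzy_pseudo_labels(probs)
print(soft)
print(hard)
```

The second sample shows why soft labels matter: the members disagree, and the soft label keeps that ambiguity (0.4 vs. 0.45) instead of collapsing it into a single class.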

Deep Active Ensemble Sampling For Image Classification

Oct 11, 2022

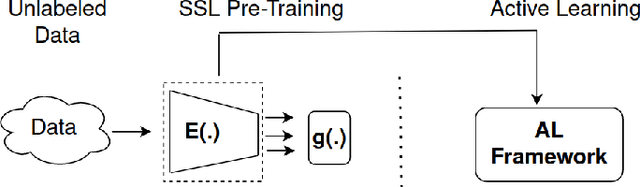

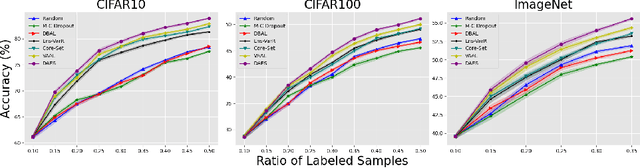

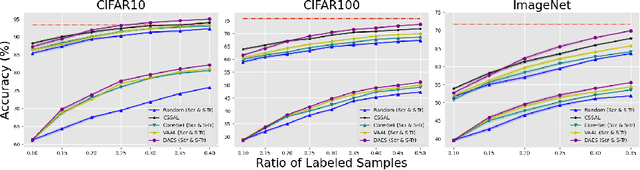

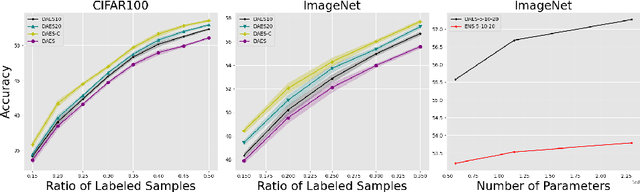

Abstract: Conventional active learning (AL) frameworks aim to reduce the cost of data annotation by actively requesting labels for the most informative data points. However, introducing AL to data-hungry deep learning algorithms has been a challenge. Proposed approaches include uncertainty-based techniques, geometric methods, implicit combinations of uncertainty-based and geometric approaches, and, more recently, frameworks based on semi/self-supervised techniques. In this paper, we address two specific problems in this area. The first is the need for an efficient exploitation/exploration trade-off in sample selection in AL. For this, we present an innovative integration of recent progress in both uncertainty-based and geometric frameworks to enable an efficient exploration/exploitation trade-off in the sample selection strategy. To this end, we build on a computationally efficient approximation of Thompson sampling, with key changes, as a posterior estimator for uncertainty representation. Our framework provides two advantages: (1) accurate posterior estimation, and (2) a tunable trade-off between computational overhead and higher accuracy. The second problem is the need for improved training protocols in deep AL. For this, we use ideas from semi/self-supervised learning to propose a general approach that is independent of the specific AL technique being used. Taken together, our framework shows a significant improvement over the state of the art, with results comparable to the performance of supervised learning under the same setting. We show empirical results of our framework, and comparative performance with the state of the art, on four datasets, namely MNIST, CIFAR10, CIFAR100 and ImageNet, to establish a new baseline in two different settings.
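Ensemble sampling is a known, inexpensive approximation of Thompson sampling: each ensemble member stands in for a posterior sample, and one member is drawn at random per decision. The sketch below applies that idea to batch selection by ranking unlabeled points with the predictive entropy of the drawn member. The entropy acquisition, the interface, and the function name are assumptions and do not reproduce the paper's "key changes".

```python
import numpy as np


def ensemble_thompson_select(member_probs, k, rng):
    """Pick k unlabeled indices using one randomly drawn ensemble member.

    Hypothetical helper. member_probs: (M, N, C) class probabilities from M
    members for N unlabeled samples. Drawing a member at random plays the role
    of a posterior sample (exploration); ranking by that member's predictive
    entropy favors uncertain points (exploitation, assumed acquisition).
    """
    m = rng.integers(member_probs.shape[0])          # approximate posterior draw
    p = np.clip(member_probs[m], 1e-12, 1.0)         # avoid log(0)
    entropy = -(p * np.log(p)).sum(axis=1)
    return m, np.argsort(-entropy)[:k]               # highest entropy first


# Single-member ensemble, so the draw is deterministic for this demo.
probs = np.array([[[0.9, 0.1], [0.5, 0.5], [0.6, 0.4]]])
member, picked = ensemble_thompson_select(probs, 2, np.random.default_rng(0))
print(member, picked)
```

Under this sketch, the ensemble size M is the natural knob for the computation/accuracy trade-off mentioned in the abstract: more members approximate the posterior better but cost more to train and evaluate.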
