Deyu Yang

Nearest-Neighbor Inter-Intra Contrastive Learning from Unlabeled Videos

Mar 13, 2023

Abstract:Contrastive learning has recently narrowed the gap between self-supervised and supervised methods in the image and video domains. State-of-the-art video contrastive learning methods such as CVRL and $\rho$-MoCo spatiotemporally augment two clips from the same video as positives. By sampling positive clips only locally from a single video, these methods neglect other semantically related videos that can also be useful. To address this limitation, we leverage nearest-neighbor videos from the global space as additional positive pairs, thus improving positive key diversity and introducing a more relaxed notion of similarity that extends beyond video and even class boundaries. Our method, Inter-Intra Video Contrastive Learning (IIVCL), improves performance on a range of video tasks.
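The idea of combining same-video (intra) positives with nearest-neighbor (inter) positives can be illustrated with a small sketch. This is not the IIVCL implementation: the function names, the support set of stored embeddings, the cosine-similarity lookup, the InfoNCE form of the loss, and the equal weighting of the two terms are all assumptions made for illustration.

```python
import math


def normalize(v):
    """Scale a vector to unit length."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def nearest_neighbor(query, support):
    """Index of the support embedding with the highest cosine similarity."""
    q = normalize(query)
    sims = [dot(q, normalize(s)) for s in support]
    return max(range(len(support)), key=lambda i: sims[i])


def info_nce(query, positive, negatives, temperature=0.1):
    """InfoNCE loss for one query, one positive, and a list of negatives."""
    q = normalize(query)
    logits = [dot(q, normalize(positive)) / temperature]
    logits += [dot(q, normalize(n)) / temperature for n in negatives]
    # Numerically stable log-sum-exp over all logits.
    m = max(logits)
    log_sum = m + math.log(sum(math.exp(l - m) for l in logits))
    return log_sum - logits[0]


def inter_intra_loss(query, intra_positive, support, negatives, temperature=0.1):
    """Hypothetical combined loss: intra term plus nearest-neighbor inter term.

    Assumption: the inter positive is the support embedding closest to the
    intra positive, and both terms are weighted equally.
    """
    nn_idx = nearest_neighbor(intra_positive, support)
    intra = info_nce(query, intra_positive, negatives, temperature)
    inter = info_nce(query, support[nn_idx], negatives, temperature)
    return intra + inter
```

Here `query` and `intra_positive` stand for embeddings of two clips from the same video, while `support` stands for embeddings of clips from other videos. The nearest neighbor supplies a second positive that may come from a different video.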

Density-based Curriculum for Multi-goal Reinforcement Learning with Sparse Rewards

Sep 24, 2021

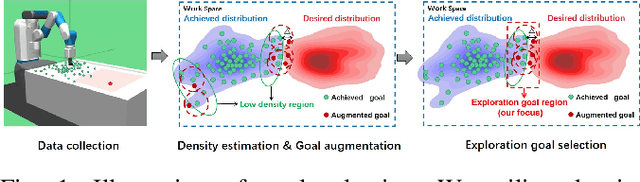

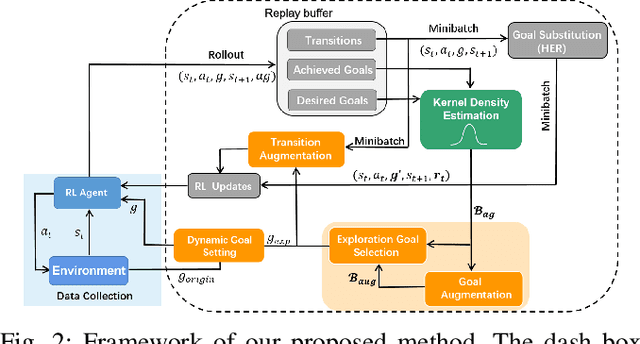

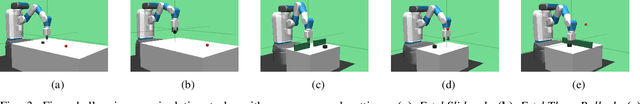

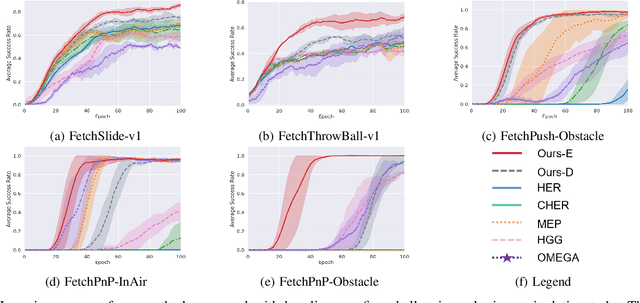

Abstract:Multi-goal reinforcement learning (RL) aims to equip an agent to accomplish multi-goal tasks, which is of great importance in learning scalable robotic manipulation skills. However, reward engineering requires strenuous effort in multi-goal RL. Moreover, it inevitably introduces bias that makes the final policy suboptimal. Sparse rewards provide a simple yet efficient way to overcome these limits. Nevertheless, they harm exploration efficiency and can even prevent the policy from converging. In this paper, we propose a density-based curriculum learning method for efficient exploration with sparse rewards and better generalization to the desired goal distribution. Intuitively, our method encourages the robot to gradually broaden the frontier of its ability in directions that cover the entire desired goal space as fully and quickly as possible. To further improve data efficiency and generality, we augment the goals and transitions within the allowed region during training. Finally, we evaluate our method on diversified variants of benchmark manipulation tasks that are challenging for existing methods. Empirical results show that our method outperforms the state-of-the-art baselines in terms of both data efficiency and success rate.
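The abstract does not spell out the curriculum, so the following is only one possible reading of "density-based": estimate how densely the agent has already covered each region of goal space, then favor goals in sparsely covered regions near the frontier. The Gaussian kernel density estimate, the inverse-density weighting, the bandwidth value, and every function name below are assumptions, not the paper's algorithm.

```python
import math
import random


def kde_density(point, samples, bandwidth=0.5):
    """Unnormalized Gaussian kernel density of `point` under `samples`."""
    total = 0.0
    for s in samples:
        sq_dist = sum((p - q) ** 2 for p, q in zip(point, s))
        total += math.exp(-sq_dist / (2 * bandwidth ** 2))
    return total / len(samples)


def curriculum_weights(achieved_goals, bandwidth=0.5):
    """Sampling weights inversely proportional to each goal's density.

    Assumption: rarely achieved goals mark the frontier of the agent's
    ability, so they receive more probability mass.
    """
    densities = [kde_density(g, achieved_goals, bandwidth) for g in achieved_goals]
    inverse = [1.0 / d for d in densities]  # d > 0: each goal counts itself
    total = sum(inverse)
    return [w / total for w in inverse]


def sample_curriculum_goal(achieved_goals, rng, bandwidth=0.5):
    """Draw one training goal, biased toward low-density regions."""
    weights = curriculum_weights(achieved_goals, bandwidth)
    return rng.choices(achieved_goals, weights=weights, k=1)[0]
```

With three achieved goals clustered near the origin and one isolated goal far away, the isolated goal receives the largest weight, so training is nudged toward the less explored region.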

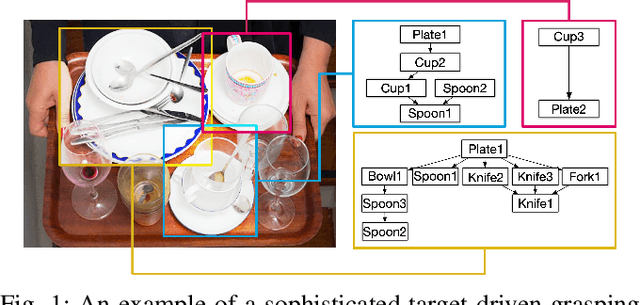

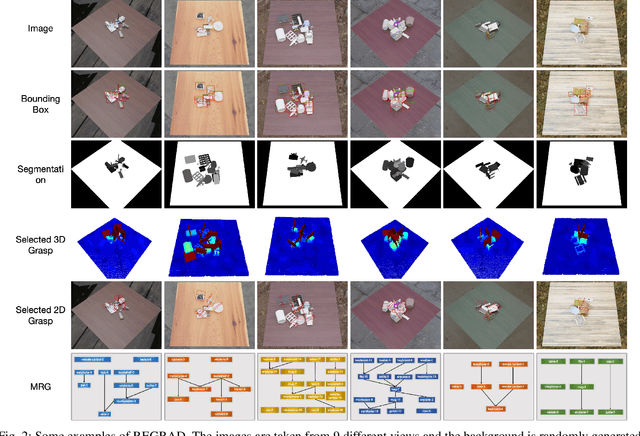

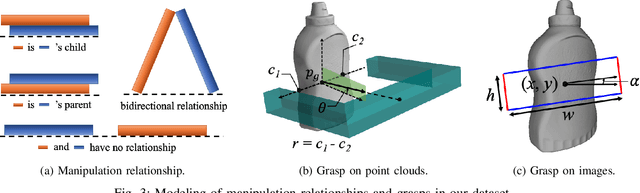

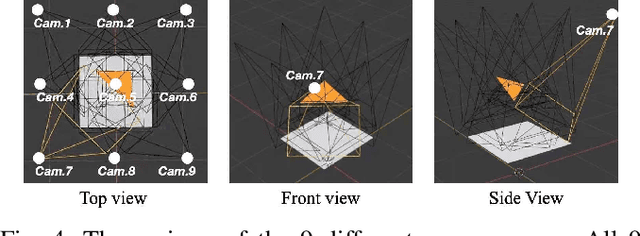

REGRAD: A Large-Scale Relational Grasp Dataset for Safe and Object-Specific Robotic Grasping in Clutter

May 31, 2021

Abstract:Despite the impressive progress achieved in robust grasp detection, robots are not skilled in sophisticated grasping tasks (e.g. searching for and grasping a specific object in clutter). Such tasks involve not only grasping but also comprehensive perception of the visual world (e.g. the relationships between objects). Recently, advanced deep learning techniques have provided a promising way to understand high-level visual concepts. This encourages robotics researchers to explore solutions for such hard and complicated fields. However, deep learning is usually data-hungry, and the lack of data severely limits the performance of deep-learning-based algorithms. In this paper, we present a new dataset named REGRAD to sustain the modeling of relationships among objects and grasps. We collect the annotations of object poses, segmentations, grasps, and relationships in each image for comprehensive perception of grasping. Our dataset is collected in the form of both 2D images and 3D point clouds. Moreover, since all the data are generated automatically, users are free to import their own object models to generate as much data as they want. We have released our dataset and code. A video that demonstrates the process of data generation is also available.
