David Pennock

A prototype hybrid prediction market for estimating replicability of published work

Mar 01, 2023

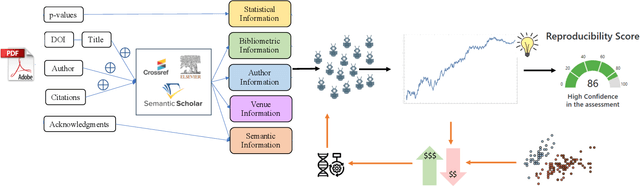

Abstract: We present a prototype hybrid prediction market and demonstrate the avenue it represents for meaningful human-AI collaboration. We build on prior work proposing artificial prediction markets as a novel machine-learning algorithm. In an artificial prediction market, trained AI agents buy and sell outcomes of future events. Classification decisions can be framed as outcomes of future events, and accordingly, the price of an asset corresponding to a given classification outcome can be taken as a proxy for the confidence of the system in that decision. By embedding human participants in these markets alongside bot traders, we can bring together insights from both. In this paper, we detail pilot studies with prototype hybrid markets for the prediction of replication study outcomes. We highlight challenges and opportunities, share insights from semi-structured interviews with hybrid market participants, and outline a vision for ongoing and future work.

A Synthetic Prediction Market for Estimating Confidence in Published Work

Dec 23, 2021

Abstract: Explainably estimating confidence in published scholarly work offers an opportunity for faster and more robust scientific progress. We develop a synthetic prediction market to assess the credibility of published claims in the social and behavioral sciences literature. We demonstrate our system and detail our findings using a collection of known replication projects. We suggest that this work lays the foundation for a research agenda that creatively uses AI for peer review.

Learning Performance of Prediction Markets with Kelly Bettors

Jan 31, 2012

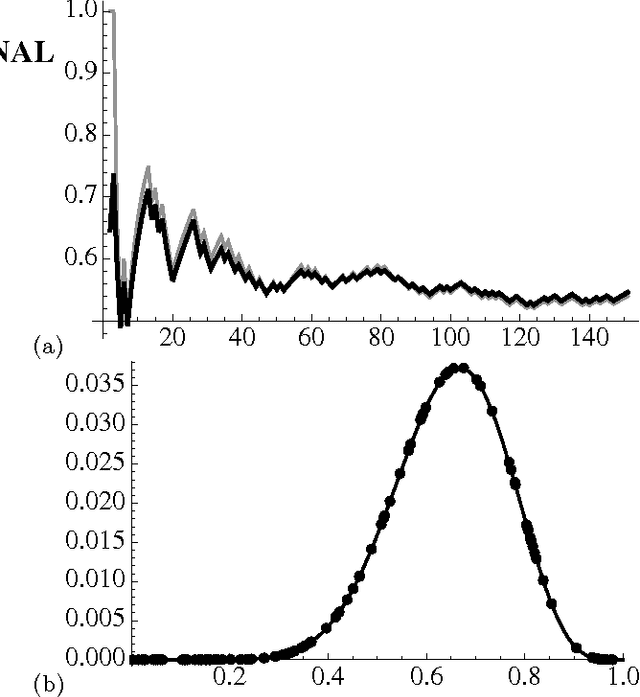

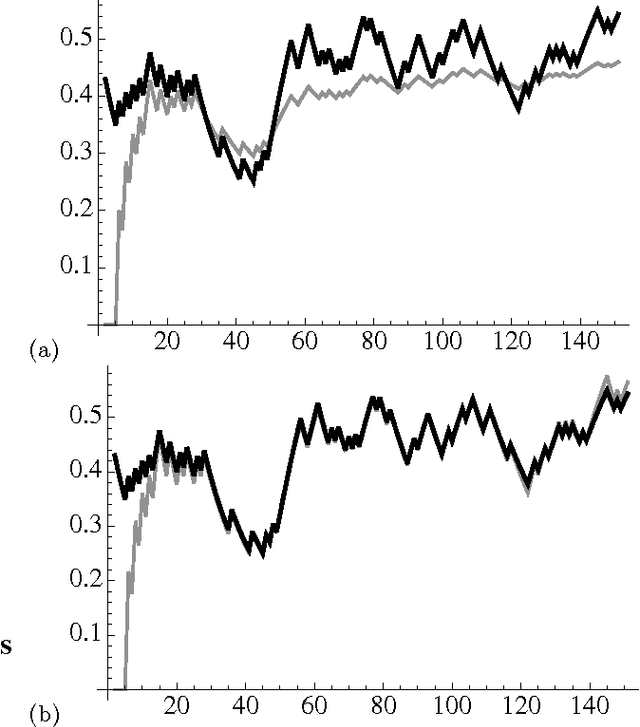

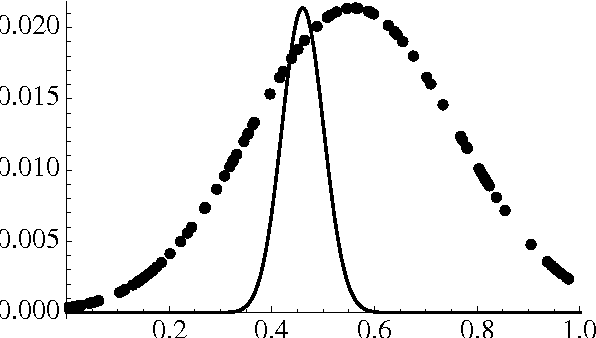

Abstract: In evaluating prediction markets (and other crowd-prediction mechanisms), investigators have repeatedly observed a so-called "wisdom of crowds" effect, which roughly says that the average of participants performs much better than the average participant. The market price, an average or at least an aggregate of traders' beliefs, offers a better estimate than almost any individual trader's opinion. In this paper, we ask a stronger question: how does the market price compare to the best trader's belief, not just the average trader's? We measure the market's worst-case log regret, a notion common in machine learning theory. To arrive at a meaningful answer, we need to assume something about how traders behave. We suppose that every trader optimizes according to the Kelly criterion, a strategy that provably maximizes the compound growth of wealth over an (infinite) sequence of market interactions. We show several consequences. First, the market prediction is a wealth-weighted average of the individual participants' beliefs. Second, the market learns at the optimal rate, the market price reacts exactly as if updating according to Bayes' law, and the market prediction has low worst-case log regret to the best individual participant. We simulate a sequence of markets where an underlying true probability exists, showing that the market converges to the true objective frequency as if updating a Beta distribution, as the theory predicts. If agents adopt a fractional Kelly criterion, a common practical variant, we show that agents behave like full-Kelly agents with beliefs weighted between their own and the market's, and that the market price converges to a time-discounted frequency. Our analysis provides a new justification for fractional Kelly betting, a strategy widely used in practice for ad hoc reasons. Finally, we propose a method for an agent to learn her own optimal Kelly fraction.
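The first two consequences in the abstract above can be illustrated with a minimal Python sketch. It is not the authors' code. It assumes a binary market in which each trader holds a fixed belief strictly between 0 and 1 and bets the full Kelly amount at the clearing price. Under that assumption the price is the wealth-weighted average belief, and settling the market multiplies each trader's wealth by the ratio of her belief to the price, which has the same form as a Bayesian posterior update. The function names (`market_price`, `settle`, `simulate`) and the example beliefs are hypothetical.

```python
import random


def market_price(wealth, beliefs):
    """Clearing price with full-Kelly traders: wealth-weighted mean belief."""
    total = sum(wealth)
    return sum(w * p for w, p in zip(wealth, beliefs)) / total


def settle(wealth, beliefs, outcome):
    """Redistribute wealth after the event resolves.

    A full-Kelly trader with belief p facing price pm ends up with
    w * p / pm if the event occurs and w * (1 - p) / (1 - pm) otherwise.
    Total wealth is conserved, and the relative wealths change exactly
    like posterior weights over the traders' beliefs.
    """
    pm = market_price(wealth, beliefs)
    if outcome:
        return [w * p / pm for w, p in zip(wealth, beliefs)]
    return [w * (1 - p) / (1 - pm) for w, p in zip(wealth, beliefs)]


def simulate(beliefs, true_prob, rounds, seed=0):
    """Run a sequence of markets with a fixed underlying true probability."""
    rng = random.Random(seed)
    wealth = [1.0] * len(beliefs)  # assumption: equal starting wealth
    prices = []
    for _ in range(rounds):
        prices.append(market_price(wealth, beliefs))
        outcome = rng.random() < true_prob
        wealth = settle(wealth, beliefs, outcome)
    return prices, wealth


if __name__ == "__main__":
    prices, wealth = simulate([0.2, 0.5, 0.8], true_prob=0.8, rounds=200)
    print(f"first price: {prices[0]:.3f}, last price: {prices[-1]:.3f}")
```

In this toy setup wealth flows toward the trader whose belief is closest to the true frequency, so the price drifts toward that belief over repeated markets. The fractional Kelly variant described in the abstract is not modeled here.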
