Dana Ludwig

Developing a general-purpose clinical language inference model from a large corpus of clinical notes

Oct 12, 2022

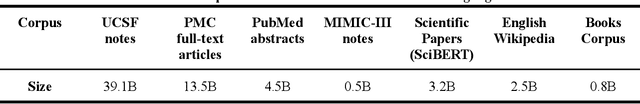

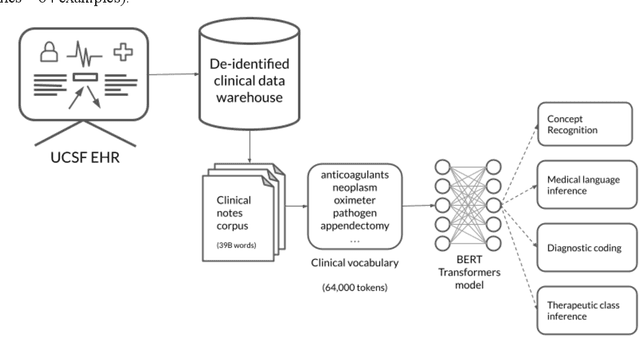

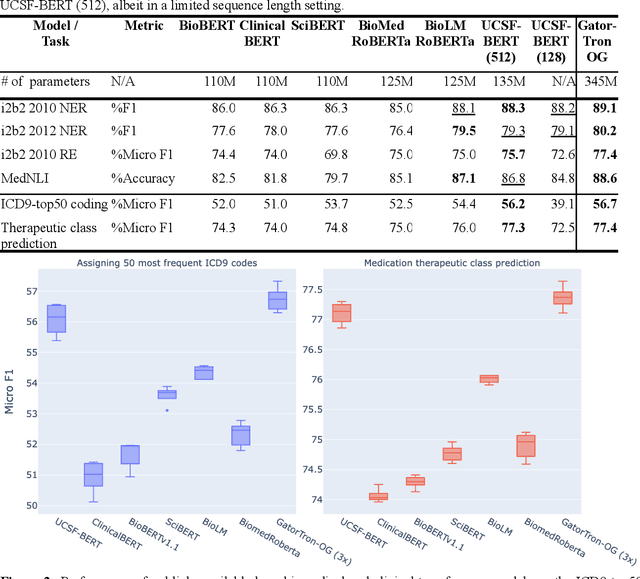

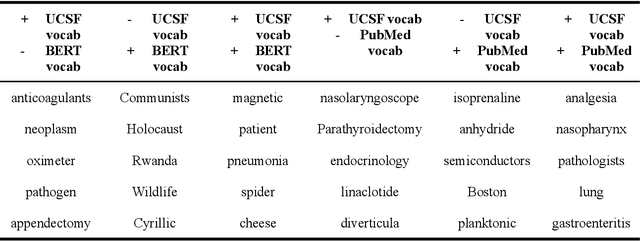

Abstract:Several biomedical language models have already been developed for clinical language inference. However, these models typically utilize general vocabularies and are trained on relatively small clinical corpora. We sought to evaluate the impact of using a domain-specific vocabulary and a large clinical training corpus on the performance of these language models in clinical language inference. We trained a Bidirectional Encoder Decoder from Transformers (BERT) model using a diverse, deidentified corpus of 75 million deidentified clinical notes authored at the University of California, San Francisco (UCSF). We evaluated this model on several clinical language inference benchmark tasks: clinical and temporal concept recognition, relation extraction and medical language inference. We also evaluated our model on two tasks using discharge summaries from UCSF: diagnostic code assignment and therapeutic class inference. Our model performs at par with the best publicly available biomedical language models of comparable sizes on the public benchmark tasks, and is significantly better than these models in a within-system evaluation on the two tasks using UCSF data. The use of in-domain vocabulary appears to improve the encoding of longer documents. The use of large clinical corpora appears to enhance document encoding and inferential accuracy. However, further research is needed to improve abbreviation resolution, and numerical, temporal, and implicitly causal inference.

Scalable and accurate deep learning for electronic health records

May 11, 2018

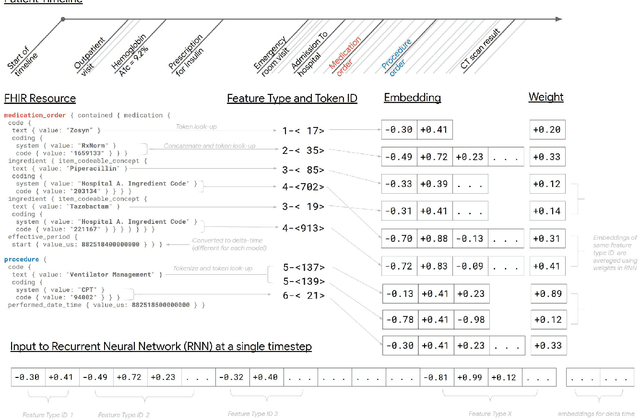

Abstract:Predictive modeling with electronic health record (EHR) data is anticipated to drive personalized medicine and improve healthcare quality. Constructing predictive statistical models typically requires extraction of curated predictor variables from normalized EHR data, a labor-intensive process that discards the vast majority of information in each patient's record. We propose a representation of patients' entire, raw EHR records based on the Fast Healthcare Interoperability Resources (FHIR) format. We demonstrate that deep learning methods using this representation are capable of accurately predicting multiple medical events from multiple centers without site-specific data harmonization. We validated our approach using de-identified EHR data from two U.S. academic medical centers with 216,221 adult patients hospitalized for at least 24 hours. In the sequential format we propose, this volume of EHR data unrolled into a total of 46,864,534,945 data points, including clinical notes. Deep learning models achieved high accuracy for tasks such as predicting in-hospital mortality (AUROC across sites 0.93-0.94), 30-day unplanned readmission (AUROC 0.75-0.76), prolonged length of stay (AUROC 0.85-0.86), and all of a patient's final discharge diagnoses (frequency-weighted AUROC 0.90). These models outperformed state-of-the-art traditional predictive models in all cases. We also present a case-study of a neural-network attribution system, which illustrates how clinicians can gain some transparency into the predictions. We believe that this approach can be used to create accurate and scalable predictions for a variety of clinical scenarios, complete with explanations that directly highlight evidence in the patient's chart.

* Published version from https://www.nature.com/articles/s41746-018-0029-1

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge