Clara Rus

FairDiverse: A Comprehensive Toolkit for Fair and Diverse Information Retrieval Algorithms

Feb 17, 2025

Abstract: In modern information retrieval (IR), achieving more than just accuracy is essential to sustaining a healthy ecosystem, especially when addressing fairness and diversity considerations. To meet these needs, various datasets, algorithms, and evaluation frameworks have been introduced. However, these algorithms are often tested across diverse metrics, datasets, and experimental setups, leading to inconsistencies and difficulties in direct comparisons. This highlights the need for a comprehensive IR toolkit that enables standardized evaluation of fairness- and diversity-aware algorithms across different IR tasks. To address this challenge, we present FairDiverse, an open-source and standardized toolkit. FairDiverse offers a framework for integrating fair and diverse methods, including pre-processing, in-processing, and post-processing techniques, at different stages of the IR pipeline. The toolkit supports the evaluation of 28 fairness and diversity algorithms across 16 base models, covering two core IR tasks (search and recommendation), thereby establishing a comprehensive benchmark. Moreover, FairDiverse is highly extensible, providing multiple APIs that empower IR researchers to swiftly develop and evaluate their own fairness- and diversity-aware models, while ensuring fair comparisons with existing baselines. The project is open-sourced and available at https://github.com/XuChen0427/FairDiverse.

Closing the Gender Wage Gap: Adversarial Fairness in Job Recommendation

Sep 20, 2022

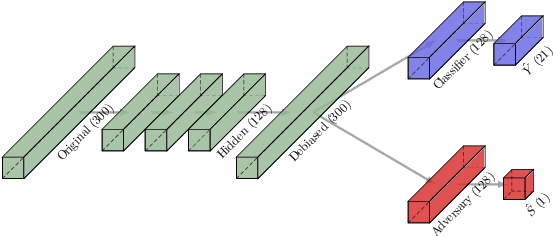

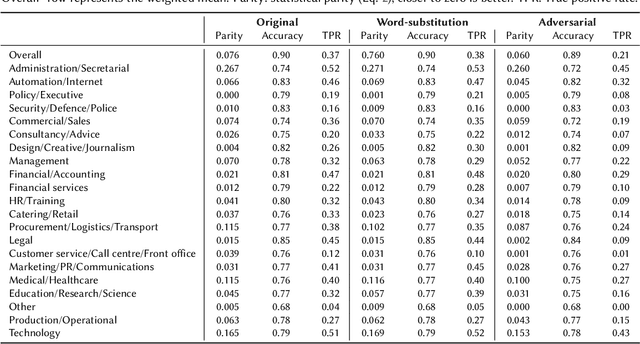

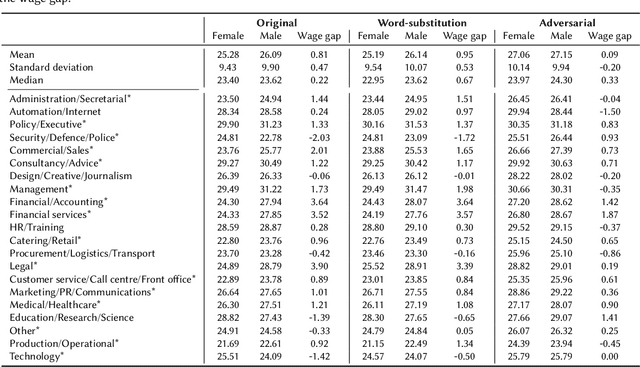

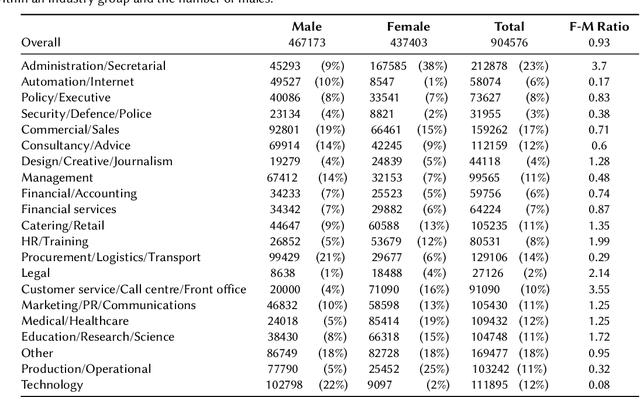

Abstract: The goal of this work is to help mitigate the existing gender wage gap by supplying unbiased job recommendations based on resumes from job seekers. We employ a generative adversarial network to remove gender bias from word2vec representations of 12M job vacancy texts and 900k resumes. Our results show that representations created from recruitment texts contain algorithmic bias and that this bias results in real-world consequences for recommendation systems. Without controlling for bias, women are recommended jobs with significantly lower salaries in our data. With adversarially fair representations, this wage gap disappears, meaning that our debiased job recommendations reduce wage discrimination. We conclude that adversarial debiasing of word representations can increase real-world fairness of systems and thus may be part of the solution for creating fairness-aware recommendation systems.
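
The wage gap mentioned in this abstract can be pictured as a difference in the average salary of the jobs recommended to each group. The snippet below is a minimal sketch of that idea under stated assumptions: the function name `mean_recommended_salary`, the dictionary layout, and the toy data are all hypothetical and are not taken from the paper, whose actual evaluation procedure is not described here.

```python
from collections import defaultdict
from statistics import mean


def mean_recommended_salary(recommendations, seeker_group, job_salary):
    """Average salary of recommended jobs, per group of job seekers.

    recommendations: dict mapping seeker id -> list of recommended job ids
    seeker_group:    dict mapping seeker id -> group label
    job_salary:      dict mapping job id -> salary
    (Hypothetical data layout, chosen only for this illustration.)
    """
    salaries = defaultdict(list)
    for seeker, jobs in recommendations.items():
        for job in jobs:
            salaries[seeker_group[seeker]].append(job_salary[job])
    return {group: mean(values) for group, values in salaries.items()}


# Toy data, invented for illustration only.
job_salary = {"j1": 3000, "j2": 4000, "j3": 5000}
seeker_group = {"a": "women", "b": "men"}
recommendations = {"a": ["j1", "j2"], "b": ["j2", "j3"]}

per_group = mean_recommended_salary(recommendations, seeker_group, job_salary)
# A positive value means men were recommended higher-paying jobs on average.
wage_gap = per_group["men"] - per_group["women"]
```

A gap close to zero after debiasing would correspond to the outcome the abstract reports, though a real analysis would also need a significance test and far more data than this toy example.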
