Chip-Hong Chang

CHIP: Chameleon Hash-based Irreversible Passport for Robust Deep Model Ownership Verification and Active Usage Control

May 30, 2025

Abstract: The pervasiveness of large-scale Deep Neural Networks (DNNs) and their enormous training costs make the protection of their intellectual property (IP) paramount. Recently introduced passport-based methods attempt to steer DNN watermarking towards stronger ownership verification against ambiguity attacks by modulating the affine parameters of normalization layers. Unfortunately, neither watermarking nor passport-based methods provide holistic protection with robust ownership proof, high fidelity, active usage authorization and user traceability for offline-distributed models and multi-user Machine-Learning-as-a-Service (MLaaS) cloud models. In this paper, we propose a Chameleon Hash-based Irreversible Passport (CHIP) protection framework that uses the cryptographic chameleon hash function to achieve all these goals. The collision-resistant property of the chameleon hash allows strong model ownership claims upon IP infringement and traceability of liable users, while the trapdoor-collision property enables multiple user passports and licensee certificates to be hashed to the same immutable signature to realize active usage control. Using the owner passport as an oracle, multiple user-specific triplets, each containing a passport-aware user model, a user passport, and a licensee certificate, can be created for secure offline distribution. The watermarked master model can also be deployed for MLaaS, with usage permission verified upon presentation of any trapdoor-colliding user passport. CHIP is extensively evaluated on four datasets and two architectures to demonstrate its protection versatility and robustness. Our code is released at https://github.com/Dshm212/CHIP.
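The abstract describes CHIP's use of a chameleon hash only at a high level. As a toy illustration of the two properties it relies on (collision resistance for outsiders, trapdoor collisions for the owner), the sketch below implements the classic discrete-log chameleon hash of Krawczyk and Rabin: with a safe prime p = 2q + 1, g of order q and public key y = g^x mod p, the hash is CH(m, r) = g^m · y^r mod p, and only the holder of the trapdoor x can solve m + x·r ≡ m' + x·r' (mod q) for r'. The tiny parameters, the SHA-256 mapping of passports into Z_q, and all function names are assumptions for illustration; this is not the CHIP implementation.

```python
import hashlib
import secrets

# Toy public parameters (far too small for real security): safe prime p = 2q + 1.
P = 2039
Q = 1019
G = 4  # 2^2 mod p: a quadratic residue, hence a generator of the order-q subgroup


def to_zq(message: bytes) -> int:
    """Map an arbitrary message (e.g. a serialized passport) into Z_q (assumed encoding)."""
    return int.from_bytes(hashlib.sha256(message).digest(), "big") % Q


def keygen() -> tuple[int, int]:
    """Return (trapdoor x, public key y = g^x mod p)."""
    x = secrets.randbelow(Q - 1) + 1  # x in [1, q-1], so x is invertible mod q
    return x, pow(G, x, P)


def chameleon_hash(y: int, message: bytes, r: int) -> int:
    """CH(m, r) = g^m * y^r mod p."""
    return (pow(G, to_zq(message), P) * pow(y, r, P)) % P


def find_collision(x: int, message: bytes, r: int, new_message: bytes) -> int:
    """Trapdoor holder computes r' such that CH(m', r') == CH(m, r).

    From m + x*r = m' + x*r' (mod q):  r' = r + (m - m') * x^{-1} mod q.
    """
    m, m_new = to_zq(message), to_zq(new_message)
    return (r + (m - m_new) * pow(x, -1, Q)) % Q


if __name__ == "__main__":
    x, y = keygen()
    r_owner = secrets.randbelow(Q)
    signature = chameleon_hash(y, b"owner passport", r_owner)
    # The trapdoor holder derives a different user passport that hashes to the same value.
    r_user = find_collision(x, b"owner passport", r_owner, b"user passport #1")
    print(chameleon_hash(y, b"user passport #1", r_user) == signature)  # prints True
```

Without x, producing such a colliding (m', r') pair would require solving a discrete logarithm, which is what makes the shared signature hard to forge in a properly sized group.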

DFREC: DeepFake Identity Recovery Based on Identity-aware Masked Autoencoder

Dec 10, 2024

Abstract: Recent advances in deepfake forensics have primarily focused on improving classification accuracy and generalization performance. Despite enormous progress in detection accuracy across a wide variety of forgery algorithms, existing algorithms lack the intuitive interpretability and identity traceability needed to support forensic investigation. In this paper, we introduce a novel DeepFake Identity Recovery scheme (DFREC) to fill this gap. DFREC aims to recover the pair of source and target faces from a deepfake image to facilitate deepfake identity tracing and reduce the risk of deepfake attacks. It comprises three key components: an Identity Segmentation Module (ISM), a Source Identity Reconstruction Module (SIRM), and a Target Identity Reconstruction Module (TIRM). The ISM segments the input face into distinct source and target face information, and the SIRM reconstructs the source face and extracts latent target identity features from the segmented source information. The background context and latent target identity features are synergistically fused by a Masked Autoencoder in the TIRM to reconstruct the target face. We evaluate DFREC on six different high-fidelity face-swapping attacks on the FaceForensics++, CelebaMegaFS and FFHQ-E4S datasets, and the results demonstrate its superior recovery performance over state-of-the-art deepfake recovery algorithms. In addition, DFREC is the only scheme that can recover both pristine source and target faces directly from the forgery image with high fidelity.

BadHMP: Backdoor Attack against Human Motion Prediction

Sep 29, 2024

Abstract: Precise prediction of future human motion over subsecond horizons from past observations is crucial for various safety-critical applications. To date, only one study has examined the vulnerability of human motion prediction to evasion attacks. In this paper, we propose BadHMP, the first backdoor attack that specifically targets human motion prediction. Our approach generates poisoned training samples by embedding a localized backdoor trigger in one arm of the skeleton, causing selected joints to remain relatively still or follow a predefined motion in the historical time steps. Subsequently, the future sequences are globally modified to the target sequences, and the entire training dataset is traversed to select the most suitable samples for poisoning. Our carefully designed backdoor triggers and targets guarantee the smoothness and naturalness of the poisoned samples, making them stealthy enough to evade detection by the model trainer while keeping the poisoned model unobtrusive in terms of prediction fidelity on untainted sequences. The target sequences can be successfully activated by the designed input sequences even with a low poisoned-sample injection ratio. Experimental results on two datasets (Human3.6M and CMU-Mocap) and two network architectures (LTD and HRI) demonstrate the high fidelity, effectiveness, and stealthiness of BadHMP. The robustness of our attack against fine-tuning defenses is also verified.

Steganographic Passport: An Owner and User Verifiable Credential for Deep Model IP Protection Without Retraining

Apr 03, 2024

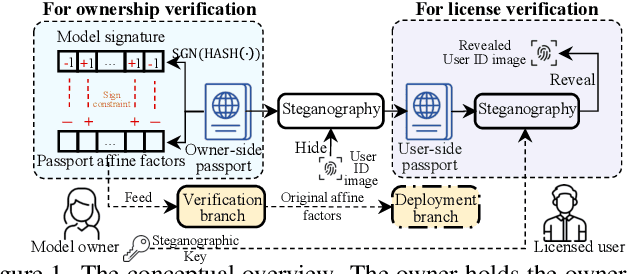

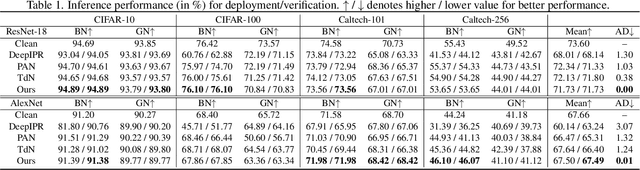

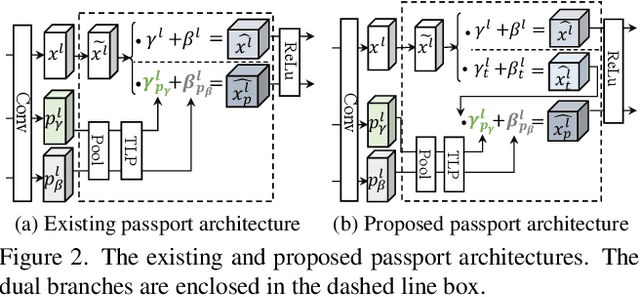

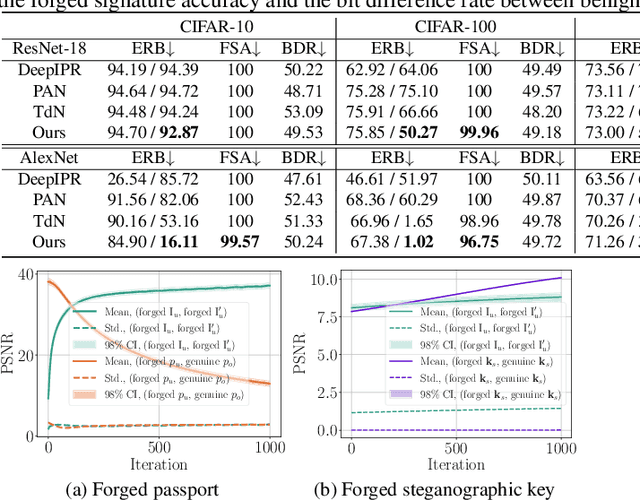

Abstract: Ensuring the legal usage of deep models is crucial to promoting trustworthy, accountable, and responsible artificial intelligence innovation. Current passport-based methods that obfuscate model functionality for license-to-use and ownership verification suffer from capacity and quality constraints, as they require retraining the owner model for each new user. They are also vulnerable to advanced Expanded Residual Block ambiguity attacks. We propose Steganographic Passport, which uses an invertible steganographic network to decouple license-to-use from ownership verification by hiding the user's identity images in the owner-side passport and recovering them from their respective user-side passports. An irreversible and collision-resistant hash function is used to prevent the owner-side passport from being exposed through the derived user-side passports and to increase the uniqueness of the model signature. To safeguard both the passport and the model's weights against advanced ambiguity attacks, an activation-level obfuscation is proposed for the verification branch of the owner's model. By jointly training the verification and deployment branches, their weights become tightly coupled. The proposed method supports agile licensing of deep models by providing strong ownership proof and license accountability without requiring separate model retraining for the admission of every new user. Experimental results show that our Steganographic Passport outperforms other passport-based deep model protection methods in robustness against various known attacks.

An Efficient Hash-based Data Structure for Dynamic Vision Sensors and its Application to Low-energy Low-memory Noise Filtering

Apr 28, 2023

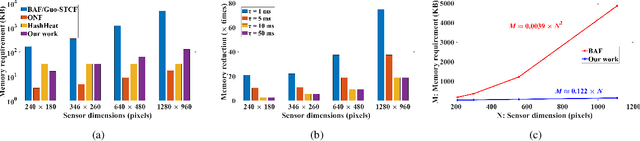

Abstract: Events generated by the Dynamic Vision Sensor (DVS) are generally stored and processed in two-dimensional data structures whose memory complexity and energy-per-event scale proportionately with increasing sensor dimensions. In this paper, we propose a new two-dimensional data structure (BF_2) that takes advantage of the sparsity of events and enables compact storage of data using hash functions. It overcomes the saturation issue in the Bloom Filter (BF) and the memory reset issue in other hash-based arrays by using a second dimension to clear 1 out of D rows at regular intervals. A hardware-friendly, low-power, and low-memory-footprint noise filter for DVS is demonstrated using BF_2. For the tested datasets, the performance of the filter matches that of state-of-the-art filters such as BAF/STCF while consuming less than 10% and 15% of their memory and energy-per-event, respectively, for a correlation time constant τ = 5 ms. The memory and energy advantages of the proposed filter increase with sensor size. The proposed filter compares favourably with other hardware-friendly, event-based filters in hardware complexity, memory requirement and energy-per-event, as demonstrated through its implementation on an FPGA. The parameters of the data structure can be adjusted to trade off performance against memory consumption, based on application requirements.
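The abstract does not spell out BF_2's exact hashing or clearing schedule, so the following is only a minimal, hypothetical sketch of the idea it describes: D rows of M bits, new events written into the current row, and one row recycled per interval so the structure neither saturates nor needs a full-memory reset. The SHA-256 bit-position scheme, the fixed rotation interval, the BAF-style 8-neighbour test, and every class, function and parameter name are assumptions for illustration, not the authors' design or their FPGA implementation.

```python
import hashlib


class RotatingBloomFilter:
    """Hypothetical 2-D Bloom-filter-like array: D rows of M bits each.

    Items are written into the current row only. Each time `interval_us`
    elapses, the pointer moves to the next row and clears it, so the oldest
    row is recycled instead of resetting the whole memory or letting bits saturate.
    """

    def __init__(self, rows: int, bits_per_row: int, num_hashes: int, interval_us: int):
        if not 1 <= num_hashes <= 8:
            raise ValueError("num_hashes must be in [1, 8] (one SHA-256 digest)")
        self.rows = rows
        self.m = bits_per_row
        self.k = num_hashes
        self.interval_us = interval_us
        self.table = [[False] * bits_per_row for _ in range(rows)]
        self.current = 0      # index of the row currently being written
        self.epoch_start = 0  # timestamp (us) at which the current row started

    def _positions(self, pixel: tuple[int, int]) -> list[int]:
        # Split one SHA-256 digest of the pixel address into k 32-bit indices.
        digest = hashlib.sha256(f"{pixel[0]},{pixel[1]}".encode()).digest()
        return [int.from_bytes(digest[4 * i:4 * i + 4], "big") % self.m
                for i in range(self.k)]

    def advance(self, t_us: int) -> None:
        """Recycle one row per elapsed interval (at most all D rows)."""
        elapsed = (t_us - self.epoch_start) // self.interval_us
        if elapsed <= 0:
            return
        for _ in range(min(elapsed, self.rows)):
            self.current = (self.current + 1) % self.rows
            self.table[self.current] = [False] * self.m
        self.epoch_start += elapsed * self.interval_us

    def add(self, pixel: tuple[int, int]) -> None:
        row = self.table[self.current]
        for p in self._positions(pixel):
            row[p] = True

    def contains(self, pixel: tuple[int, int]) -> bool:
        # Present if all k bits are set within any single row (false positives possible).
        positions = self._positions(pixel)
        return any(all(row[p] for p in positions) for row in self.table)


def baf_filter(events, bf: RotatingBloomFilter):
    """BAF-style filter: keep an event if a neighbouring pixel fired recently."""
    kept = []
    for t_us, x, y in events:
        bf.advance(t_us)
        neighbours = [(x + dx, y + dy) for dx in (-1, 0, 1) for dy in (-1, 0, 1)
                      if (dx, dy) != (0, 0)]
        if any(bf.contains(n) for n in neighbours):
            kept.append((t_us, x, y))
        bf.add((x, y))
    return kept


if __name__ == "__main__":
    # Toy event stream of (timestamp_us, x, y); values are made up for illustration.
    events = [(0, 10, 10), (1000, 11, 10), (2000, 50, 50), (20000, 10, 11)]
    bf = RotatingBloomFilter(rows=3, bits_per_row=4096, num_hashes=3, interval_us=2500)
    print(baf_filter(events, bf))
```

In this sketch, with D rows and rotation interval T, an inserted pixel stays visible for between (D - 1)·T and D·T, so T would be chosen so that this window approximates the correlation time constant τ; memory is D·M bits regardless of sensor resolution.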
