Chenyu Ren

WundtGPT: Shaping Large Language Models To Be An Empathetic, Proactive Psychologist

Jun 16, 2024

Abstract: Large language models (LLMs) are sweeping through the medical domain, and their momentum has carried over into mental health, leading to the emergence of a few mental health LLMs. Although such models can provide reasonable suggestions for psychological counseling, how to build an authentic and effective doctor-patient relationship (DPR) through LLMs remains an important open problem. To fill this gap, we decompose DPR into two key attributes: the psychologist's empathy and proactive guidance. We present WundtGPT, an empathetic and proactive mental health large language model obtained by fine-tuning on instructions and real conversations between psychologists and patients. It is designed to assist psychologists in diagnosis and to help patients who are reluctant to communicate face-to-face understand their psychological conditions. Its distinguishing feature is that it can not only pose purposeful questions that guide patients to describe their symptoms in detail but also offer warm emotional reassurance. In particular, WundtGPT combines Collection of Questions, Chain of Psychodiagnosis, and Empathy Constraints into a comprehensive prompt for eliciting the LLM's questions and diagnoses. Additionally, WundtGPT introduces a reward model that promotes alignment with empathetic mental health professionals and encompasses two key factors: cognitive empathy and emotional empathy. We provide a comprehensive evaluation of the proposed model and, building on these results, conduct a manual evaluation of proactivity, effectiveness, professionalism, and coherence. We find that WundtGPT can offer professional and effective consultation. The model is available on Hugging Face.

Pushing The Limit of LLM Capacity for Text Classification

Feb 16, 2024

Abstract: The extraordinary efficacy that large language models (LLMs) have demonstrated across numerous downstream NLP tasks has cast doubt on the value of future research in text classification. In this era of open-ended language modeling, where task boundaries are gradually fading, an urgent question emerges: have we made significant advances in text classification under the full benefit of LLMs? To answer this question, we propose RGPT, an adaptive boosting framework tailored to produce a specialized text classification LLM by recurrently ensembling a pool of strong base learners. The base learners are constructed by adaptively adjusting the distribution of training samples and iteratively fine-tuning LLMs on them. These base learners are then ensembled into a specialized text classification LLM by recurrently incorporating the historical predictions of the previous learners. Through a comprehensive empirical comparison, we show that RGPT significantly outperforms 8 SOTA PLMs and 7 SOTA LLMs on four benchmarks, by 1.36% on average. Further evaluation experiments show that RGPT clearly surpasses human classification.
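The abstract does not spell out how RGPT reweights training samples or combines its learners. Purely as a point of reference, the sketch below shows the classic multiclass AdaBoost (SAMME) update, a textbook instance of "adaptively adjusting the distribution of training samples": misclassified samples gain weight, and each learner receives a vote weight alpha. This is a swapped-in standard technique, not RGPT's method; base learners are represented only by their predicted labels (no LLM fine-tuning), and the names `samme_update` and `weighted_vote` are hypothetical.

```python
import math
from typing import List, Sequence, Tuple


def samme_update(
    weights: Sequence[float],
    y_true: Sequence[int],
    y_pred: Sequence[int],
    n_classes: int,
) -> Tuple[List[float], float]:
    """One round of multiclass AdaBoost (SAMME) sample reweighting.

    Hypothetical helper for illustration; not RGPT's actual update rule.
    Returns the renormalized sample weights and the learner's vote weight alpha.
    """
    total = sum(weights)
    # Weighted error rate of this learner on the current sample distribution.
    err = sum(w for w, t, p in zip(weights, y_true, y_pred) if t != p) / total
    # Clamp to avoid log(0) / division by zero for perfect or useless learners.
    err = min(max(err, 1e-10), 1.0 - 1e-10)
    # SAMME adds log(K - 1), so any learner with err < 1 - 1/K gets alpha > 0.
    alpha = math.log((1.0 - err) / err) + math.log(n_classes - 1)
    # Misclassified samples are up-weighted by exp(alpha); correct ones are unchanged.
    new_w = [
        w * math.exp(alpha) if t != p else w
        for w, t, p in zip(weights, y_true, y_pred)
    ]
    z = sum(new_w)
    return [w / z for w in new_w], alpha


def weighted_vote(
    predictions: List[List[int]], alphas: List[float], n_classes: int
) -> List[int]:
    """Combine per-learner label predictions by alpha-weighted majority vote."""
    combined = []
    for i in range(len(predictions[0])):
        scores = [0.0] * n_classes
        for preds, alpha in zip(predictions, alphas):
            scores[preds[i]] += alpha
        combined.append(max(range(n_classes), key=lambda c: scores[c]))
    return combined


# Usage: four samples, uniform weights, one misclassified sample (index 2).
weights, alpha = samme_update([0.25] * 4, [0, 1, 2, 1], [0, 1, 0, 1], n_classes=3)
```

With one error out of four uniformly weighted samples, the error rate is 0.25, so alpha = ln 3 + ln 2 = ln 6 and the misclassified sample's share of the weight rises to 2/3. RGPT's recurrent use of historical predictions is not modeled here.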

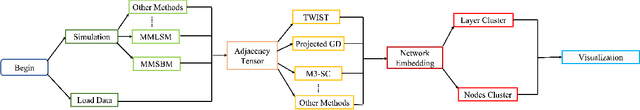

rMultiNet: An R Package For Multilayer Networks Analysis

Feb 09, 2023

Abstract: This paper develops rMultiNet, an R package for analyzing multilayer network data. We provide two general frameworks from recent literature, i.e., the mixture multilayer stochastic block model (MMSBM) and the mixture multilayer latent space model (MMLSM), to generate multilayer networks. We also provide several methods for revealing the embeddings of both nodes and layers, followed by further data analysis methods such as clustering. Three real data examples are processed in the package. The source code of rMultiNet is available at https://github.com/ChenyuzZZ73/rMultiNet.
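The abstract names MMSBM as one of the generative frameworks but does not state its parameterization. The sketch below samples a mixture multilayer SBM under assumed conventions: each layer draws a latent type from a categorical distribution, each type has its own node-community labels and symmetric block-probability matrix, and edges within a layer are independent Bernoulli draws on an undirected graph without self-loops. It uses Python with NumPy for illustration and does not reproduce rMultiNet's R interface; `sample_mmsbm` and its arguments are hypothetical.

```python
import numpy as np


def sample_mmsbm(n_nodes, n_layers, layer_type_probs, node_labels, block_probs, rng):
    """Sample an undirected mixture multilayer SBM (hypothetical helper).

    Assumed parameterization, not rMultiNet's API:
      layer_type_probs: length-M probabilities of each latent layer type
      node_labels: M integer arrays of shape (n_nodes,), node communities per type
      block_probs: M symmetric (K_m x K_m) edge-probability matrices
    Returns the adjacency tensor (n_layers, n_nodes, n_nodes) and the layer types.
    """
    n_types = len(layer_type_probs)
    # Each layer independently draws which SBM type generates it.
    layer_types = rng.choice(n_types, size=n_layers, p=layer_type_probs)
    adj = np.zeros((n_layers, n_nodes, n_nodes), dtype=np.int8)
    for layer, m in enumerate(layer_types):
        z = node_labels[m]
        # Expand the block matrix to node-level edge probabilities: P[i, j] = B[z_i, z_j].
        probs = block_probs[m][np.ix_(z, z)]
        # Draw the strict upper triangle only, then mirror it (undirected, no self-loops).
        upper = np.triu(rng.random((n_nodes, n_nodes)) < probs, k=1)
        adj[layer] = upper | upper.T
    return adj, layer_types


# Usage: 30 nodes, 6 layers, two layer types with 2 and 3 assortative communities.
rng = np.random.default_rng(0)
n = 30
labels = [np.repeat([0, 1], n // 2), np.repeat([0, 1, 2], n // 3)]
blocks = [
    np.array([[0.8, 0.1], [0.1, 0.8]]),
    np.array([[0.7, 0.05, 0.05], [0.05, 0.7, 0.05], [0.05, 0.05, 0.7]]),
]
A, types = sample_mmsbm(n, 6, [0.5, 0.5], labels, blocks, rng)
```

Storing the layers as a 3-way adjacency tensor keeps the sample in the form that tensor-based embedding and clustering methods for nodes and layers typically take as input.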
