Charles T. Marx

Predictive Multiplicity in Classification

Sep 14, 2019

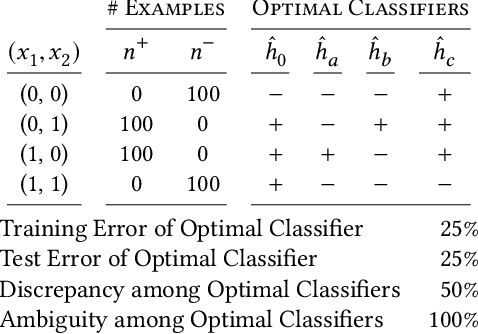

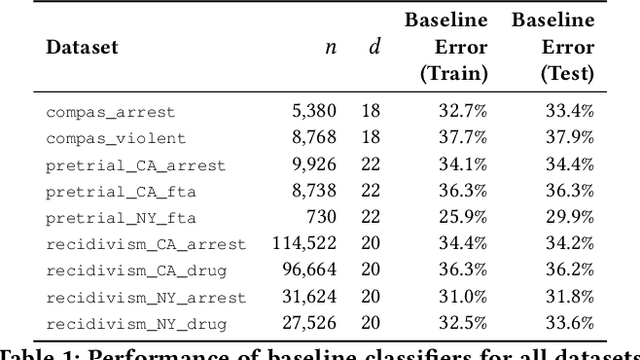

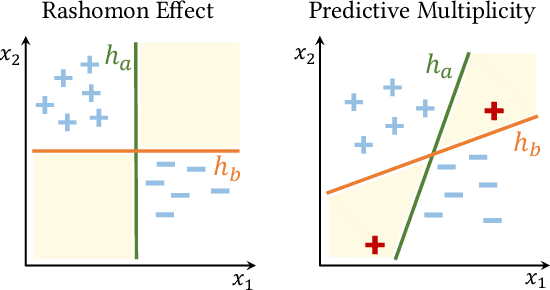

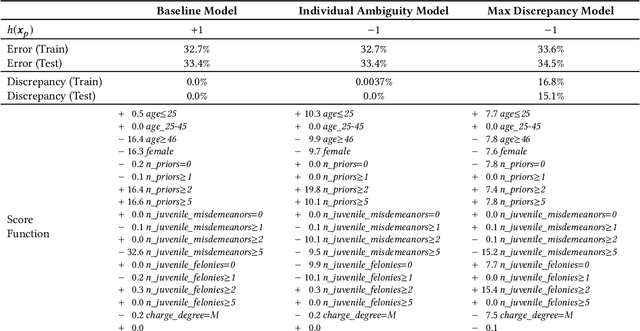

Abstract: In the context of machine learning, a prediction problem exhibits multiplicity if there exist several "good" models that attain identical or near-identical performance (e.g., accuracy or AUC). In this paper, we study the effects of multiplicity in human-facing applications, such as credit scoring and recidivism prediction. We introduce a specific notion of multiplicity -- predictive multiplicity -- to describe the existence of good models that output conflicting predictions. Unlike existing notions of multiplicity (e.g., the Rashomon effect), predictive multiplicity reflects irreconcilable differences in the predictions of models with comparable performance, and presents new challenges for common practices such as model selection and local explanation. We propose measures to evaluate the predictive multiplicity in classification problems. We present integer programming methods to compute these measures for a given dataset by solving empirical risk minimization problems with discrete constraints. We demonstrate how these tools can inform stakeholders on a large collection of recidivism prediction problems. Our results show that real-world prediction problems often admit many good models that output wildly conflicting predictions, and support the need to report predictive multiplicity in model development.

Disentangling Influence: Using Disentangled Representations to Audit Model Predictions

Jun 20, 2019

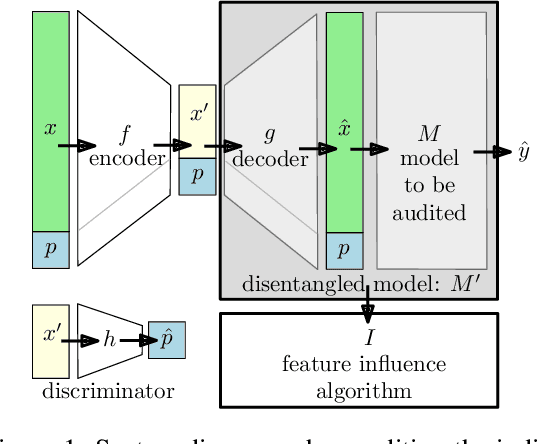

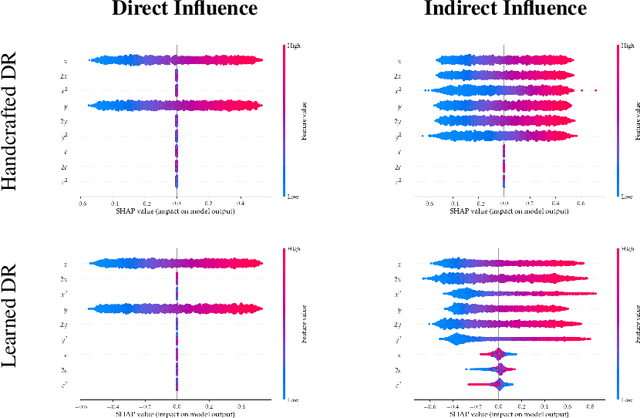

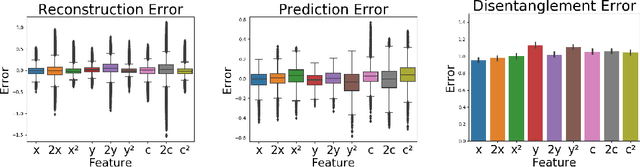

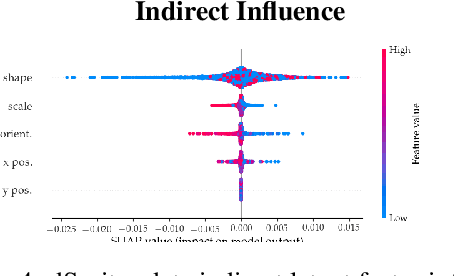

Abstract: Motivated by the need to audit complex and black-box models, there has been extensive research on quantifying how data features influence model predictions. Feature influence can be direct (a direct influence on model outcomes) or indirect (model outcomes are influenced via proxy features). Feature influence can also be expressed in aggregate over the training or test data or locally with respect to a single point. Current research has typically focused on one of each of these dimensions. In this paper, we develop disentangled influence audits, a procedure to audit the indirect influence of features. Specifically, we show that disentangled representations provide a mechanism to identify proxy features in the dataset, while allowing an explicit computation of feature influence on either individual outcomes or aggregate-level outcomes. We show through both theory and experiments that disentangled influence audits can both detect proxy features and show, for each individual or in aggregate, which of these proxy features affects the classifier being audited the most. In this respect, our method is more powerful than existing methods for ascertaining feature influence.
