Casper Harteveld

Bridging Pedagogy and Play: Introducing a Language Mapping Interface for Human-AI Co-Creation in Educational Game Design

Mar 04, 2026

Abstract:Educational games can foster critical thinking, problem-solving, and motivation, yet instructors often find it difficult to design games that reliably achieve specific learning outcomes. Existing authoring environments reduce the need for programming expertise, but they do not eliminate the underlying challenges of educational game design, and they can leave non-expert designers reliant on opaque suggestions from AI systems. We designed a web tool, based on a controlled natural language framework, that positions language as the primary interface for LLM-assisted educational game design. In the tool, users and an LLM assistant collaboratively develop a structured language that maps pedagogy to gameplay through four linked components. We argue that, by making pedagogical intent explicit and editable in the interface, the tool has the potential to lower design barriers for non-expert designers, preserve human agency in critical decisions, and enable alignment and reflection between pedagogy and gameplay during and after co-creation.

Kaleidoscope Gallery: Exploring Ethics and Generative AI Through Art

May 20, 2025

Abstract:Ethical theories and Generative AI (GenAI) models are dynamic concepts subject to continuous evolution. This paper investigates the visualization of ethics through a subset of GenAI models. We expand on the emerging field of Visual Ethics, using art as a form of critical inquiry and the metaphor of a kaleidoscope to invoke moral imagination. Through formative interviews with 10 ethics experts, we first establish a foundation of ethical theories. Our analysis reveals five families of ethical theories, which we then transform into images using the text-to-image (T2I) GenAI model. The resulting imagery, curated as Kaleidoscope Gallery and evaluated by the same experts, revealed eight themes that highlight how morality, society, and learned associations are central to ethical theories. We discuss implications for critically examining T2I models and present cautions and considerations. This work contributes to examining ethical theories as foundational knowledge that interrogates GenAI models as socio-technical systems.

MOSAAIC: Managing Optimization towards Shared Autonomy, Authority, and Initiative in Co-creation

May 16, 2025

Abstract:Striking the appropriate balance between humans and co-creative AI is an open research question in computational creativity. Co-creativity, a form of hybrid intelligence where both humans and AI take action proactively, is a process that leads to shared creative artifacts and ideas. Achieving a balanced dynamic in co-creativity requires characterizing control and identifying strategies to distribute control between humans and AI. We define control as the power to determine, initiate, and direct the process of co-creation. Informed by a systematic literature review of 172 full-length papers, we introduce MOSAAIC (Managing Optimization towards Shared Autonomy, Authority, and Initiative in Co-creation), a novel framework for characterizing and balancing control in co-creation. MOSAAIC identifies three key dimensions of control: autonomy, initiative, and authority. We supplement our framework with control optimization strategies in co-creation. To demonstrate MOSAAIC's applicability, we analyze the distribution of control in six existing co-creative AI case studies and present the implications of using this framework.

Exploring Eye Tracking to Detect Cognitive Load in Complex Virtual Reality Training

Nov 18, 2024

Abstract:Virtual Reality (VR) has been a beneficial training tool in fields such as advanced manufacturing. However, users may experience a high cognitive load due to various factors, such as the use of VR hardware or tasks within the VR environment. Studies have shown that eye-tracking has the potential to detect cognitive load, but in the context of VR and complex spatiotemporal tasks (e.g., assembly and disassembly), it remains relatively unexplored. Here, we present an ongoing study to detect users' cognitive load using an eye-tracking-based machine learning approach. We developed a VR training system for cold spray and tested it with 22 participants, obtaining 19 valid eye-tracking datasets and NASA-TLX scores. We applied Multi-Layer Perceptron (MLP) and Random Forest (RF) models to compare the accuracy of predicting cognitive load (i.e., NASA-TLX) using pupil dilation and fixation duration. Our preliminary analysis demonstrates the feasibility of using eye tracking to detect cognitive load in complex spatiotemporal VR experiences and motivates further exploration.

GPT for Games: An Updated Scoping Review (2020-2024)

Nov 01, 2024

Abstract:Due to GPT's impressive generative capabilities, its applications in games are expanding rapidly. To offer researchers a comprehensive understanding of the current applications and identify both emerging trends and unexplored areas, this paper introduces an updated scoping review of 131 articles, 76 of which were published in 2024, to explore GPT's potential for games. By coding and synthesizing the papers, we identify five prominent applications of GPT in current game research: procedural content generation, mixed-initiative game design, mixed-initiative gameplay, playing games, and game user research. Drawing on insights from these application areas and emerging research, we propose that future studies focus on expanding the technical boundaries of the GPT models and exploring the complex interaction dynamics between them and users. This review aims to illustrate the state of the art in innovative GPT applications in games, offering a foundation to enrich game development and enhance player experiences through cutting-edge AI innovations.

GPT for Games: A Scoping Review (2020-2023)

Apr 27, 2024

Abstract:This paper introduces a scoping review of 55 articles to explore GPT's potential for games, offering researchers a comprehensive understanding of the current applications and identifying both emerging trends and unexplored areas. We identify five key applications of GPT in current game research: procedural content generation, mixed-initiative game design, mixed-initiative gameplay, playing games, and game user research. Drawing from insights in each of these application areas, we propose directions for future research in each one. This review aims to lay the groundwork by illustrating the state of the art for innovative GPT applications in games, promising to enrich game development and enhance player experiences with cutting-edge AI innovations.

Advancing Methodology for Social Science Research Using Alternate Reality Games: Proof-of-Concept Through Measuring Individual Differences and Adaptability and their impact on Team Performance

Jun 25, 2021

Abstract:While work in the fields of CSCW (Computer Supported Collaborative Work), Psychology, and Social Sciences has advanced our understanding of team processes and their effect on performance and effectiveness, current methods rely on observations or self-report, with little work directed towards studying team processes with quantifiable measures based on behavioral data. In this report we discuss work tackling this open problem with a focus on understanding individual differences and their effect on team adaptation, and further explore the effect of these factors on team performance as both an outcome and a process. We specifically discuss our contribution in terms of methods that augment survey data and behavioral data that allow us to gain more insight into team performance as well as develop a method to evaluate adaptation and performance across and within a group. To make this problem more tractable, we chose to focus on a specific type of environment, Alternate Reality Games (ARGs), for several reasons. First, these types of games involve setups that are similar to a real-world setup, e.g., communication through Slack or email. Second, they are more controllable than real environments, allowing us to embed stimuli if needed. Lastly, they allow us to collect the data needed to understand decisions and communications made through the entire duration of the experience, which makes team processes more transparent than otherwise possible. In this report we discuss the work we have done so far and demonstrate the efficacy of the approach.

VINS: Visual Search for Mobile User Interface Design

Feb 10, 2021

Abstract:Searching for relevant mobile user interface (UI) design examples can aid interface designers in gaining inspiration and comparing design alternatives. However, finding such design examples is challenging, especially as current search systems rely only on text-based queries and do not take the UI structure and content into account. This paper introduces VINS, a visual search framework that takes as input a UI image (wireframe, high-fidelity) and retrieves visually similar design examples. We first survey interface designers to better understand their example finding process. We then develop a large-scale UI dataset that provides an accurate specification of the interface's view hierarchy (i.e., all the UI components and their specific location). By utilizing this dataset, we propose an object-detection based image retrieval framework that models the UI context and hierarchical structure. The framework achieves a mean Average Precision of 76.39% for the UI detection and high performance in querying similar UI designs.

Player-AI Interaction: What Neural Network Games Reveal About AI as Play

Jan 18, 2021

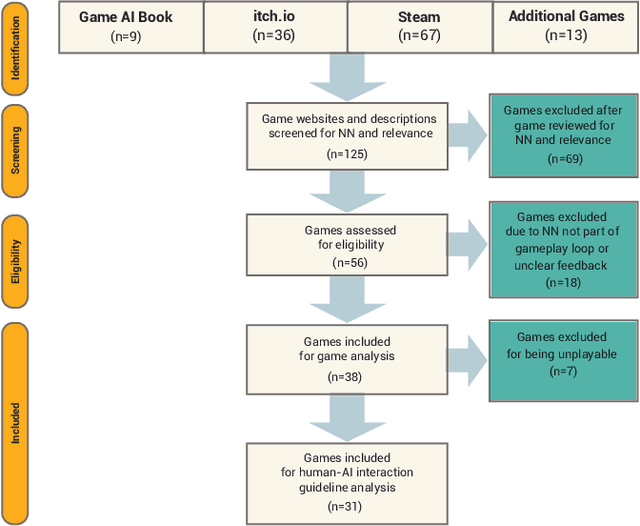

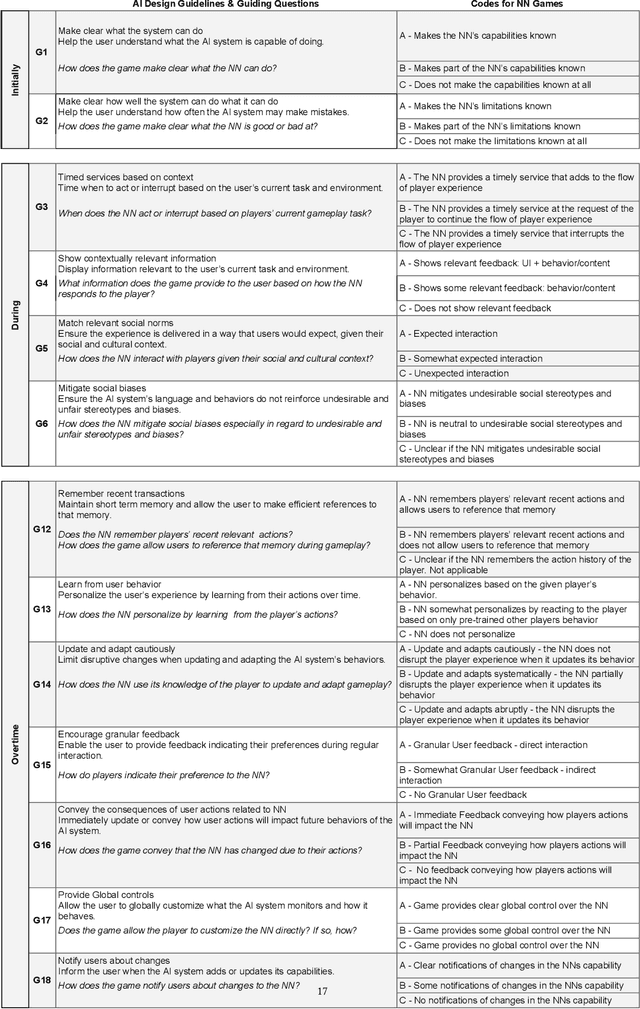

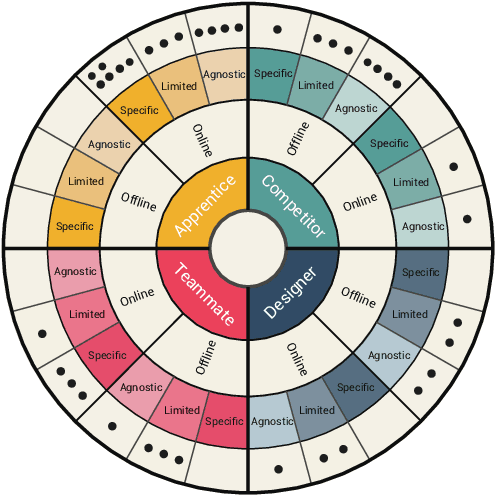

Abstract:The advent of artificial intelligence (AI) and machine learning (ML) brings human-AI interaction to the forefront of HCI research. This paper argues that games are an ideal domain for studying and experimenting with how humans interact with AI. Through a systematic survey of neural network games (n = 38), we identified the dominant interaction metaphors and AI interaction patterns in these games. In addition, we applied existing human-AI interaction guidelines to further shed light on player-AI interaction in the context of AI-infused systems. Our core finding is that AI as play can expand current notions of human-AI interaction, which are predominantly productivity-based. In particular, our work suggests that game and UX designers should consider flow to structure the learning curve of human-AI interaction, incorporate discovery-based learning to play around with the AI and observe the consequences, and offer users an invitation to play to explore new forms of human-AI interaction.
