Brij B. Gupta

PWG-IDS: An Intrusion Detection Model for Solving Class Imbalance in IIoT Networks Using Generative Adversarial Networks

Oct 06, 2021

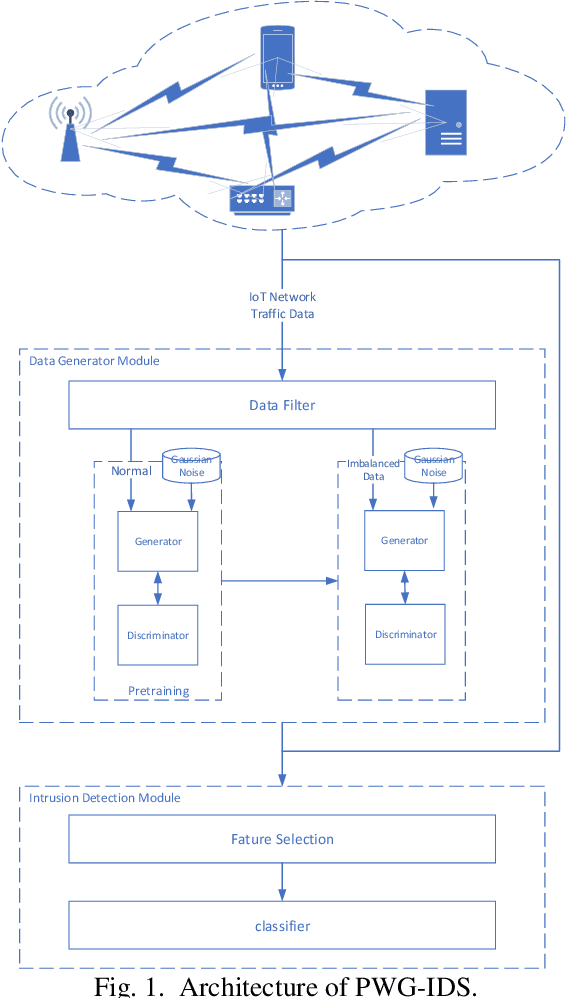

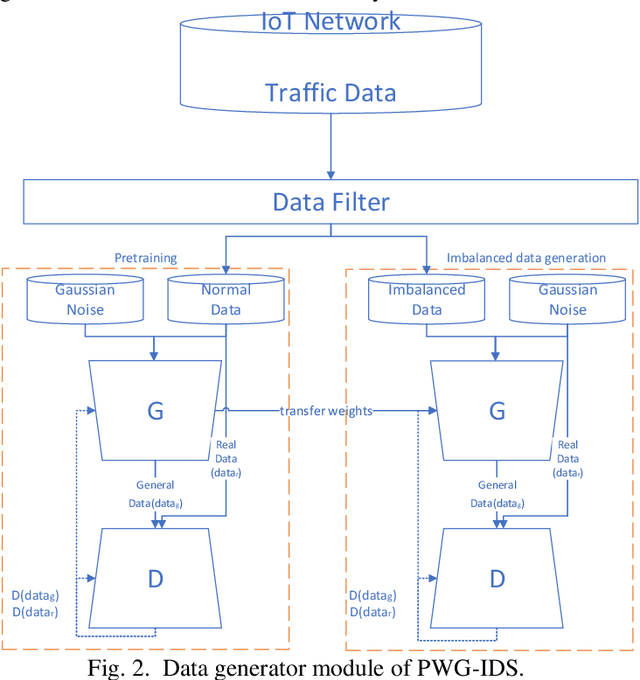

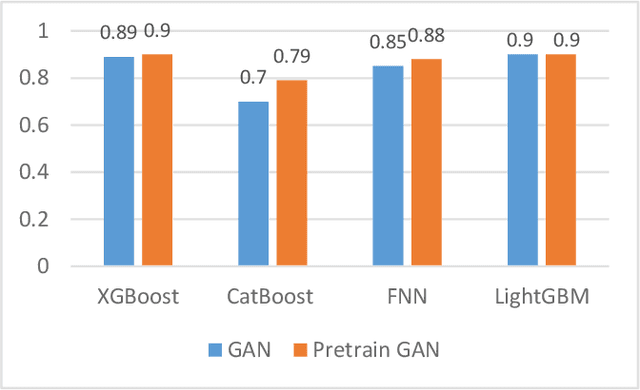

Abstract: With the continuous development of industrial IoT (IIoT) technology, network security is becoming increasingly important, and intrusion detection is a key part of it. However, because attack traffic is very scarce compared to normal traffic, the resulting class imbalance makes intrusion detection in IIoT networks very difficult. To address this imbalance, this paper proposes an intrusion detection system called pretraining Wasserstein generative adversarial network intrusion detection system (PWG-IDS). The system consists of two main modules: 1) In the first module, we introduce, for the first time, a pretraining mechanism into the Wasserstein generative adversarial network with gradient penalty (WGAN-GP): the WGAN-GP is first trained on normal network traffic, and the imbalanced data is then fed into the pretrained WGAN-GP, which is retrained to generate the required data. 2) Intrusion detection module: we use LightGBM as the classification algorithm to detect attack traffic in IIoT networks. The experimental results show that PWG-IDS outperforms the other models compared, with F1-scores of 99% and 89% on the two datasets, respectively. The proposed pretraining mechanism can also be widely applied to other GANs, offering a new approach to GAN training.
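The gradient-penalty term that distinguishes WGAN-GP from the original WGAN can be illustrated without a deep learning framework. The sketch below is not the PWG-IDS implementation: it assumes a hypothetical toy critic (any Python function of a feature vector), estimates the input gradient with central finite differences instead of automatic differentiation, and uses the commonly used default λ = 10. It computes the critic objective E[D(x̃)] − E[D(x)] + λ·E[(‖∇D(x̂)‖₂ − 1)²], where x̂ = εx + (1 − ε)x̃ and ε ~ U[0, 1]. Pretraining, as described in the abstract, would roughly amount to minimizing this loss on normal traffic first and then continuing from those weights on the imbalanced data; that training loop is omitted here.

```python
import math
import random
from typing import Callable, List, Optional, Sequence

Vector = Sequence[float]
Critic = Callable[[Vector], float]


def numerical_grad(f: Critic, x: Vector, h: float = 1e-5) -> List[float]:
    """Central-difference estimate of the gradient of scalar function f at x."""
    grad = []
    for i in range(len(x)):
        plus, minus = list(x), list(x)
        plus[i] += h
        minus[i] -= h
        grad.append((f(plus) - f(minus)) / (2 * h))
    return grad


def gradient_penalty(critic: Critic, real: List[Vector], fake: List[Vector],
                     lam: float = 10.0, rng: Optional[random.Random] = None) -> float:
    """Batch mean of lam * (||grad D(x_hat)||_2 - 1)^2.

    x_hat is sampled uniformly on the segment between a real and a generated
    sample, as in WGAN-GP.
    """
    rng = rng or random.Random(0)
    total = 0.0
    for x_r, x_g in zip(real, fake):
        eps = rng.random()  # eps ~ U[0, 1)
        x_hat = [eps * a + (1 - eps) * b for a, b in zip(x_r, x_g)]
        norm = math.sqrt(sum(g * g for g in numerical_grad(critic, x_hat)))
        total += lam * (norm - 1.0) ** 2
    return total / len(real)


def critic_loss(critic: Critic, real: List[Vector], fake: List[Vector],
                lam: float = 10.0, rng: Optional[random.Random] = None) -> float:
    """WGAN-GP critic objective to minimize: E[D(fake)] - E[D(real)] + penalty."""
    mean_fake = sum(critic(x) for x in fake) / len(fake)
    mean_real = sum(critic(x) for x in real) / len(real)
    return mean_fake - mean_real + gradient_penalty(critic, real, fake, lam, rng)


def linear_critic(w: Vector, b: float = 0.0) -> Critic:
    """Hypothetical toy critic D(x) = w . x + b, used only for illustration."""
    return lambda x: sum(wi * xi for wi, xi in zip(w, x)) + b


if __name__ == "__main__":
    real = [[1.0, 0.0], [0.9, 0.1]]  # stand-ins for real traffic features
    fake = [[0.0, 0.0], [0.2, 0.3]]  # stand-ins for generated samples
    print(critic_loss(linear_critic([0.6, 0.8]), real, fake))  # ||w|| = 1: penalty ~ 0
    print(critic_loss(linear_critic([2.0, 0.0]), real, fake))  # ||w|| = 2: penalty ~ 10
```

With a linear critic D(x) = w·x + b, the input gradient is simply w at every point, so the penalty vanishes exactly when ‖w‖₂ = 1; this is the 1-Lipschitz condition that the penalty softly enforces. A real implementation would compute the gradient with autodiff so the penalty can itself be backpropagated into the critic's weights.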

Cloud-based Federated Boosting for Mobile Crowdsensing

May 09, 2020

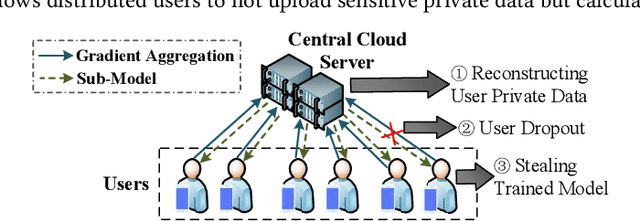

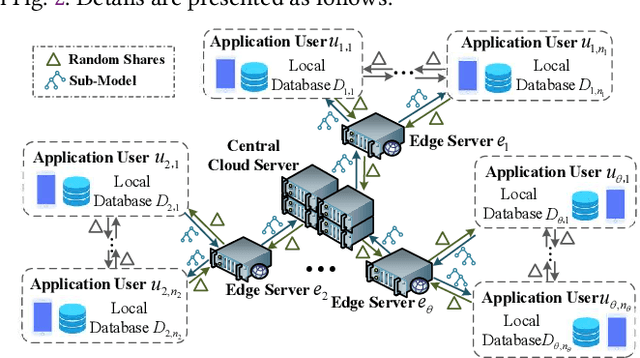

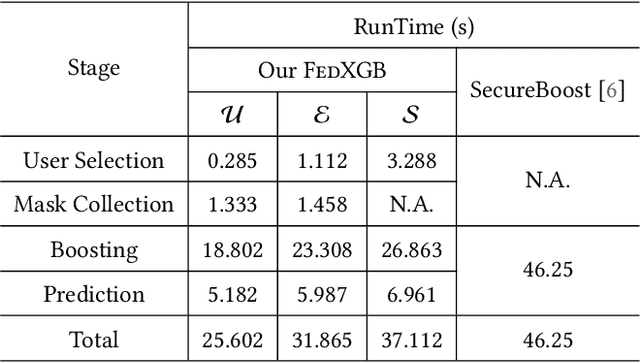

Abstract: Applying federated extreme gradient boosting to mobile crowdsensing apps brings several benefits, in particular high efficiency and classification performance. However, it also raises a new challenge for protecting data and model privacy. Besides being vulnerable to Generative Adversarial Network (GAN)-based user data reconstruction attacks, existing architectures do not consider how to preserve model privacy. In this paper, we propose FedXGB, a secret-sharing-based federated learning architecture that achieves privacy-preserving extreme gradient boosting for mobile crowdsensing. Specifically, we first build a secure classification and regression tree (CART) for XGBoost using secret sharing. Then, we propose a secure prediction protocol to protect the model privacy of XGBoost in mobile crowdsensing. We conduct a comprehensive theoretical analysis and extensive experiments to evaluate the security, effectiveness, and efficiency of FedXGB. The results indicate that FedXGB is secure against honest-but-curious adversaries and incurs less than 1% accuracy loss compared with the original XGBoost model.
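Secret sharing lets share holders aggregate users' gradient statistics without any of them seeing an individual value, which is what choosing a CART split requires: XGBoost scores a split from the sums G and H of first- and second-order gradients in each child. The sketch below uses plain additive secret sharing over a prime field with fixed-point encoding. This is a generic textbook scheme, not necessarily FedXGB's protocol, and the modulus PRIME, the scale SCALE, the number of share holders, and all function names are illustrative assumptions. The split gain is the standard XGBoost formula with regularization parameters λ (lam) and γ (gamma).

```python
import random
from typing import List

# Illustrative parameters (assumptions, not taken from FedXGB).
PRIME = 2**61 - 1  # a Mersenne prime used as the field modulus
SCALE = 10**6      # fixed-point scale for real-valued gradient statistics


def encode(x: float) -> int:
    """Map a real number to a field element via fixed-point encoding."""
    return round(x * SCALE) % PRIME


def decode(v: int) -> float:
    """Invert encode(): elements above PRIME // 2 represent negative numbers."""
    if v > PRIME // 2:
        v -= PRIME
    return v / SCALE


def share(x: float, n: int, rng: random.Random) -> List[int]:
    """Split x into n additive shares whose sum is encode(x) mod PRIME.

    Any n - 1 of the shares are uniformly random and reveal nothing about x.
    """
    shares = [rng.randrange(PRIME) for _ in range(n - 1)]
    shares.append((encode(x) - sum(shares)) % PRIME)
    return shares


def reconstruct(shares: List[int]) -> float:
    """Recombine all shares to recover the encoded value."""
    return decode(sum(shares) % PRIME)


def secure_sum(values: List[float], n_holders: int, rng: random.Random) -> float:
    """Sum private values so that each holder only sees random-looking shares.

    Simulated in one process: in a deployment, every user would create its own
    shares and send share j to holder j, which adds up only what it receives.
    """
    partial = [0] * n_holders
    for v in values:
        for j, s in enumerate(share(v, n_holders, rng)):
            partial[j] = (partial[j] + s) % PRIME
    # Only the aggregate is revealed when the partial sums are combined.
    return reconstruct(partial)


def split_gain(g_l: float, h_l: float, g_r: float, h_r: float,
               lam: float = 1.0, gamma: float = 0.0) -> float:
    """Standard XGBoost gain for splitting a node into left/right children."""
    def score(g: float, h: float) -> float:
        return g * g / (h + lam)

    return 0.5 * (score(g_l, h_l) + score(g_r, h_r)
                  - score(g_l + g_r, h_l + h_r)) - gamma


if __name__ == "__main__":
    rng = random.Random(0)
    # Hypothetical per-user gradient/hessian sums for the left and right child.
    G_L = secure_sum([0.8, 1.2], 3, rng)
    H_L = secure_sum([0.5, 0.5], 3, rng)
    G_R = secure_sum([-1.5, -0.5], 3, rng)
    H_R = secure_sum([0.4, 0.6], 3, rng)
    print(round(split_gain(G_L, H_L, G_R, H_R), 6))
```

With these toy inputs the aggregates are G_L = 2, H_L = 1, G_R = −2, H_R = 1, giving a gain of 2.0. The fixed-point encoding is needed because additive sharing works on integers modulo a prime, while gradients are real numbers; the scale limits precision to six decimal places in this sketch.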
