Bingxuan Zhao

SHARP: Spectrum-aware Highly-dynamic Adaptation for Resolution Promotion in Remote Sensing Synthesis

Mar 23, 2026Abstract:Text-to-image generation powered by Diffusion Transformers (DiTs) has made remarkable strides, yet remote sensing (RS) synthesis lags behind due to two barriers: the absence of a domain-specialized DiT prior and the prohibitive cost of training at the large resolutions that RS applications demand. Training-free resolution promotion via Rotary Position Embedding (RoPE) rescaling offers a practical remedy, but every existing method applies a static positional scaling rule throughout the denoising process. This uniform compression is particularly harmful for RS imagery, whose substantially denser medium- and high-frequency energy encodes the fine structures critical for aerial-scene realism, such as vehicles, building contours, and road markings. Addressing both challenges requires a domain-specialized generative prior coupled with a denoising-aware positional adaptation strategy. To this end, we fine-tune FLUX on over 100,000 curated RS images to build a strong domain prior (RS-FLUX), and propose Spectrum-aware Highly-dynamic Adaptation for Resolution Promotion (SHARP), a training-free method that introduces a rational fractional time schedule k_rs(t) into RoPE. SHARP applies strong positional promotion during the early layout-formation stage and progressively relaxes it during detail recovery, aligning extrapolation strength with the frequency-progressive nature of diffusion denoising. Its resolution-agnostic formulation further enables robust multi-scale generation from a single set of hyperparameters. Extensive experiments across six square and rectangular resolutions show that SHARP consistently outperforms all training-free baselines on CLIP Score, Aesthetic Score, and HPSv2, with widening margins at more aggressive extrapolation factors and negligible computational overhead. Code and weights are available at https://github.com/bxuanz/SHARP.

Edge Approximation Text Detector

Apr 05, 2025

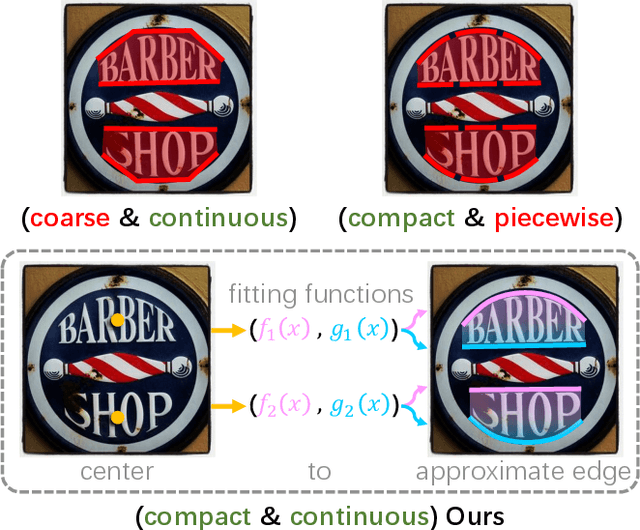

Abstract:Pursuing efficient text shape representations helps scene text detection models focus on compact foreground regions and optimize the contour reconstruction steps to simplify the whole detection pipeline. Current approaches either represent irregular shapes via box-to-polygon strategy or decomposing a contour into pieces for fitting gradually, the deficiency of coarse contours or complex pipelines always exists in these models. Considering the above issues, we introduce EdgeText to fit text contours compactly while alleviating excessive contour rebuilding processes. Concretely, it is observed that the two long edges of texts can be regarded as smooth curves. It allows us to build contours via continuous and smooth edges that cover text regions tightly instead of fitting piecewise, which helps avoid the two limitations in current models. Inspired by this observation, EdgeText formulates the text representation as the edge approximation problem via parameterized curve fitting functions. In the inference stage, our model starts with locating text centers, and then creating curve functions for approximating text edges relying on the points. Meanwhile, truncation points are determined based on the location features. In the end, extracting curve segments from curve functions by using the pixel coordinate information brought by truncation points to reconstruct text contours. Furthermore, considering the deep dependency of EdgeText on text edges, a bilateral enhanced perception (BEP) module is designed. It encourages our model to pay attention to the recognition of edge features. Additionally, to accelerate the learning of the curve function parameters, we introduce a proportional integral loss (PI-loss) to force the proposed model to focus on the curve distribution and avoid being disturbed by text scales.

MMO-IG: Multi-Class and Multi-Scale Object Image Generation for Remote Sensing

Dec 18, 2024

Abstract:The rapid advancement of deep generative models (DGMs) has significantly advanced research in computer vision, providing a cost-effective alternative to acquiring vast quantities of expensive imagery. However, existing methods predominantly focus on synthesizing remote sensing (RS) images aligned with real images in a global layout view, which limits their applicability in RS image object detection (RSIOD) research. To address these challenges, we propose a multi-class and multi-scale object image generator based on DGMs, termed MMO-IG, designed to generate RS images with supervised object labels from global and local aspects simultaneously. Specifically, from the local view, MMO-IG encodes various RS instances using an iso-spacing instance map (ISIM). During the generation process, it decodes each instance region with iso-spacing value in ISIM-corresponding to both background and foreground instances-to produce RS images through the denoising process of diffusion models. Considering the complex interdependencies among MMOs, we construct a spatial-cross dependency knowledge graph (SCDKG). This ensures a realistic and reliable multidirectional distribution among MMOs for region embedding, thereby reducing the discrepancy between source and target domains. Besides, we propose a structured object distribution instruction (SODI) to guide the generation of synthesized RS image content from a global aspect with SCDKG-based ISIM together. Extensive experimental results demonstrate that our MMO-IG exhibits superior generation capabilities for RS images with dense MMO-supervised labels, and RS detectors pre-trained with MMO-IG show excellent performance on real-world datasets.

Traffic Sign Interpretation in Real Road Scene

Nov 28, 2023

Abstract:Most existing traffic sign-related works are dedicated to detecting and recognizing part of traffic signs individually, which fails to analyze the global semantic logic among signs and may convey inaccurate traffic instruction. Following the above issues, we propose a traffic sign interpretation (TSI) task, which aims to interpret global semantic interrelated traffic signs (e.g.,~driving instruction-related texts, symbols, and guide panels) into a natural language for providing accurate instruction support to autonomous or assistant driving. Meanwhile, we design a multi-task learning architecture for TSI, which is responsible for detecting and recognizing various traffic signs and interpreting them into a natural language like a human. Furthermore, the absence of a public TSI available dataset prompts us to build a traffic sign interpretation dataset, namely TSI-CN. The dataset consists of real road scene images, which are captured from the highway and the urban way in China from a driver's perspective. It contains rich location labels of texts, symbols, and guide panels, and the corresponding natural language description labels. Experiments on TSI-CN demonstrate that the TSI task is achievable and the TSI architecture can interpret traffic signs from scenes successfully even if there is a complex semantic logic among signs. The TSI-CN dataset and the source code of the TSI architecture will be publicly available after the revision process.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge